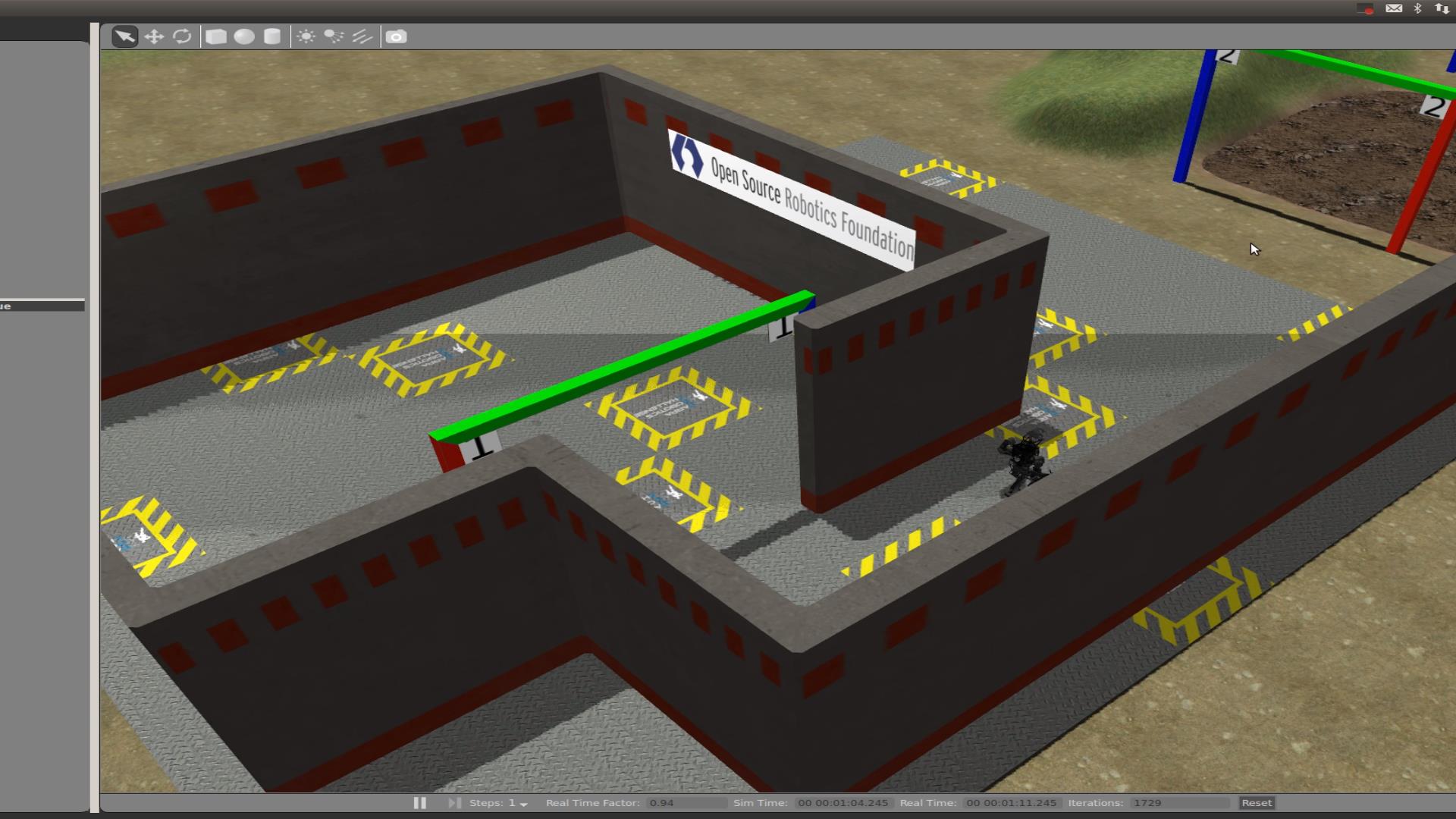

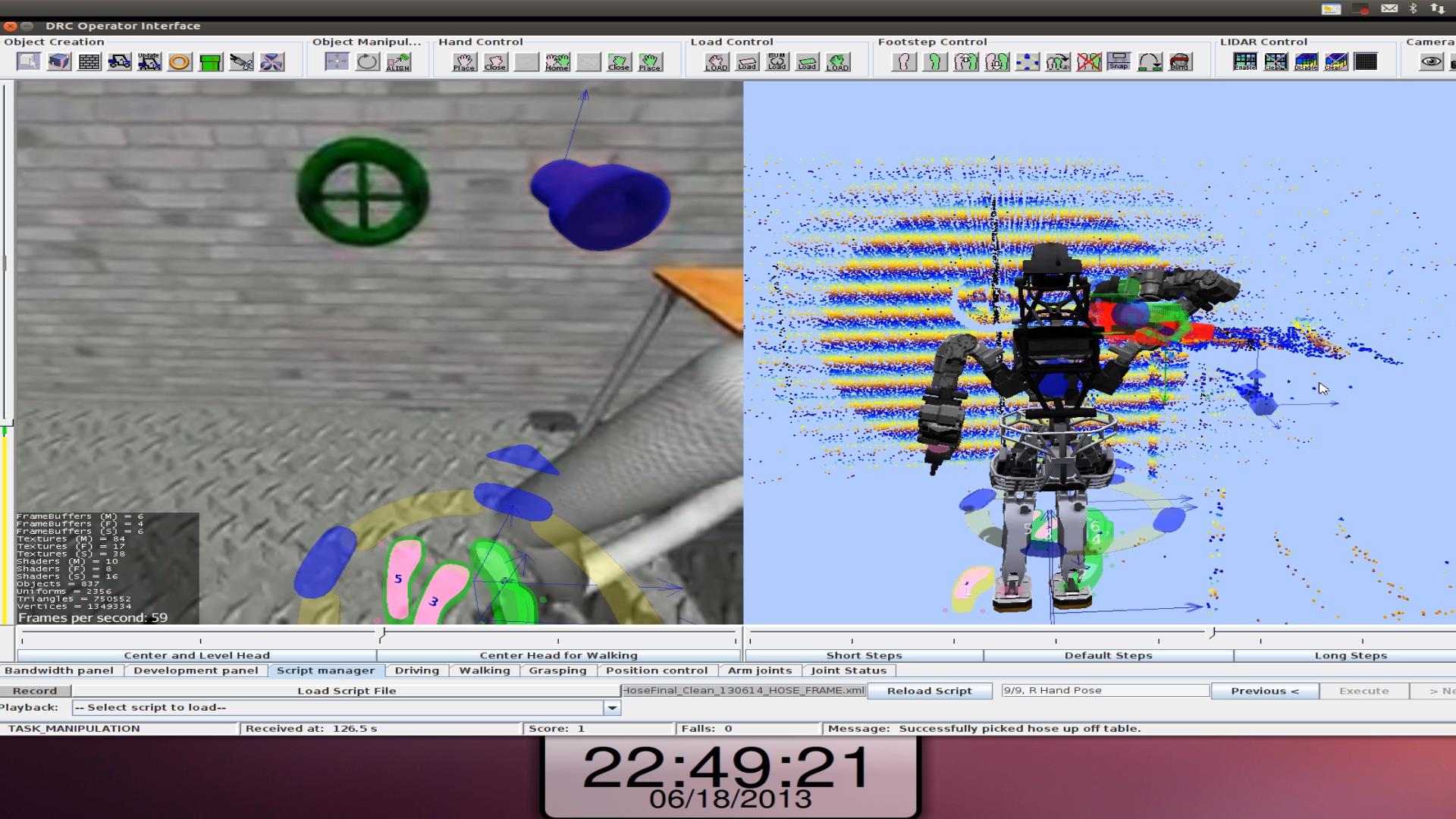

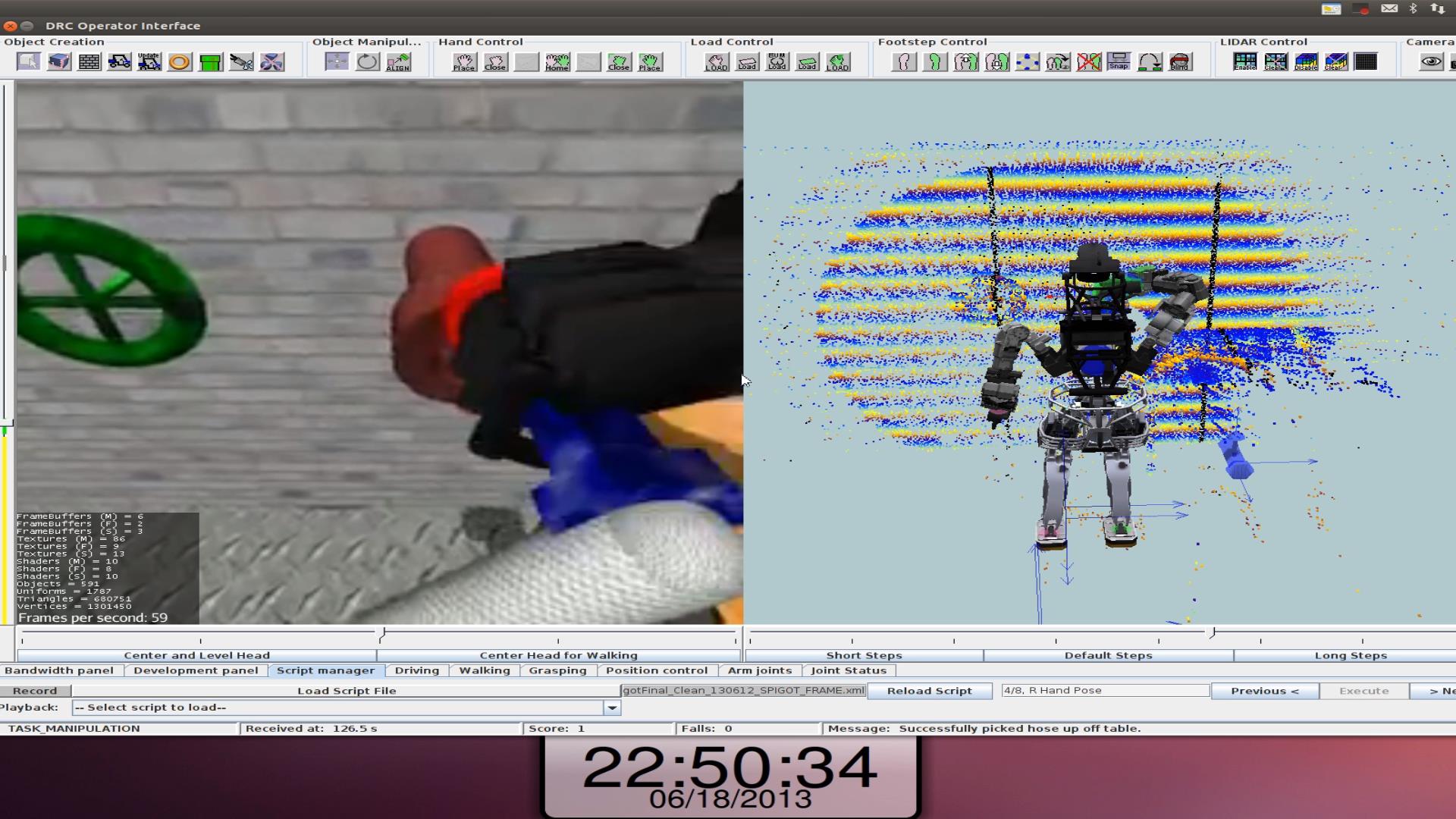

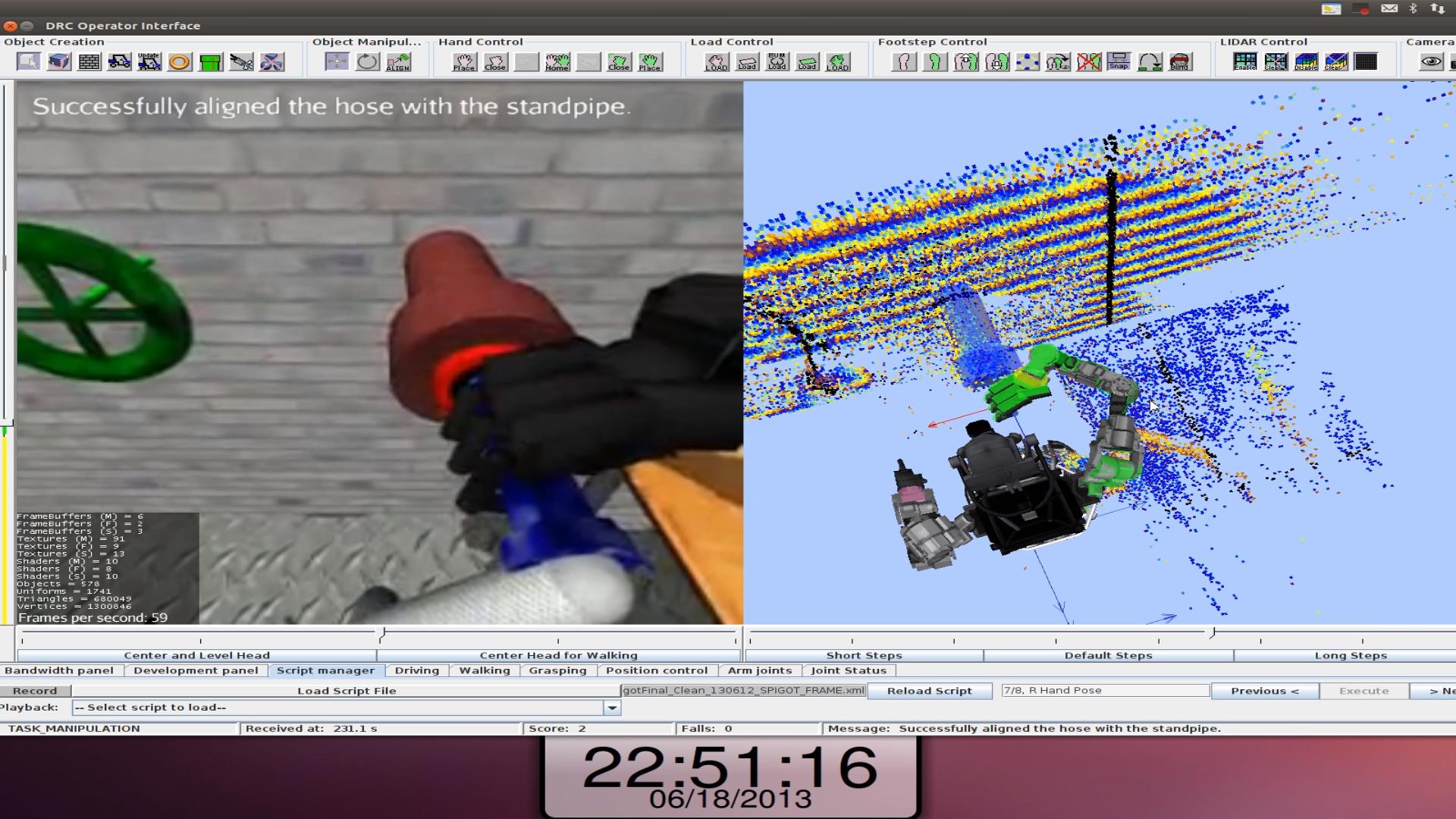

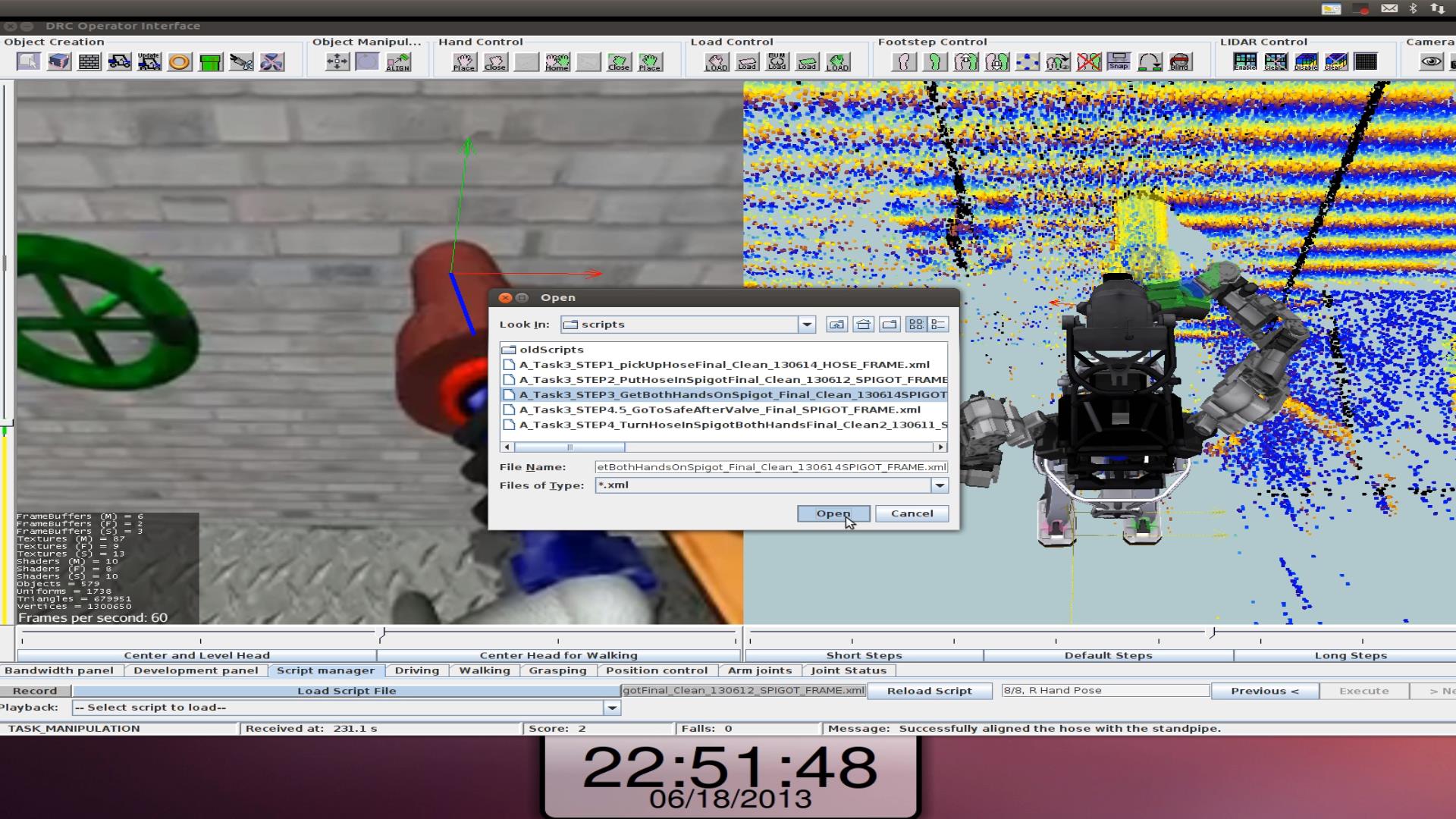

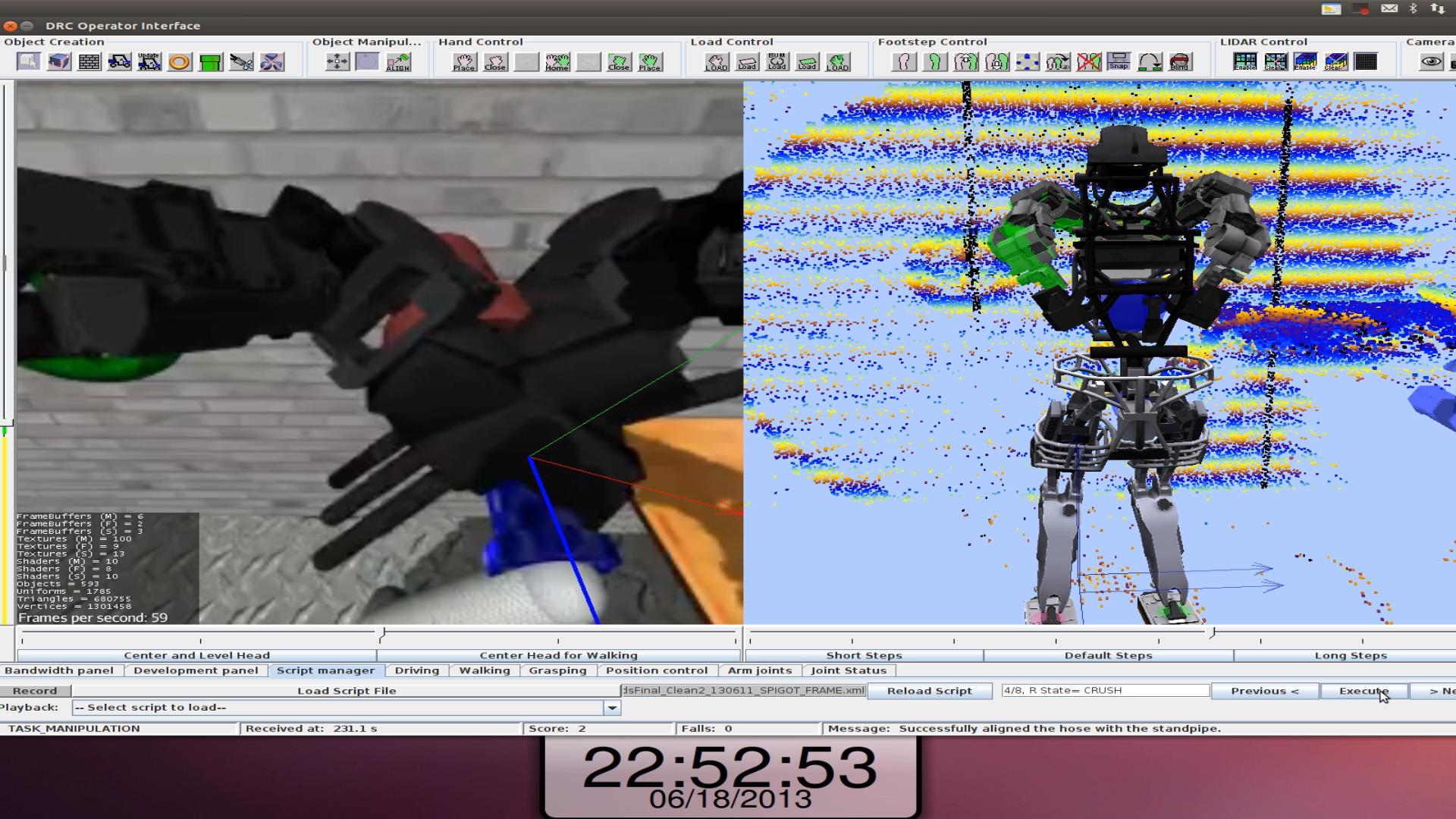

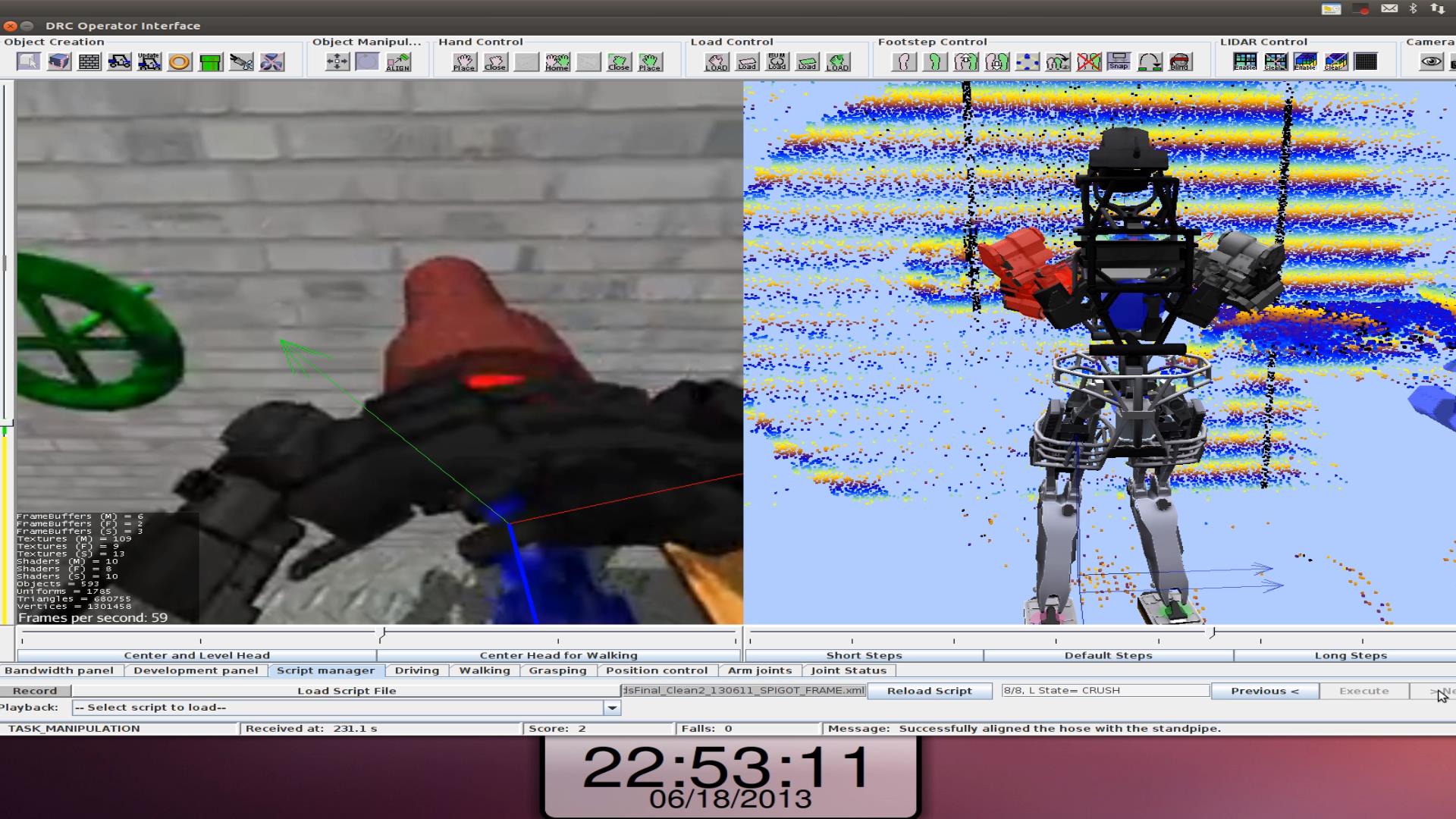

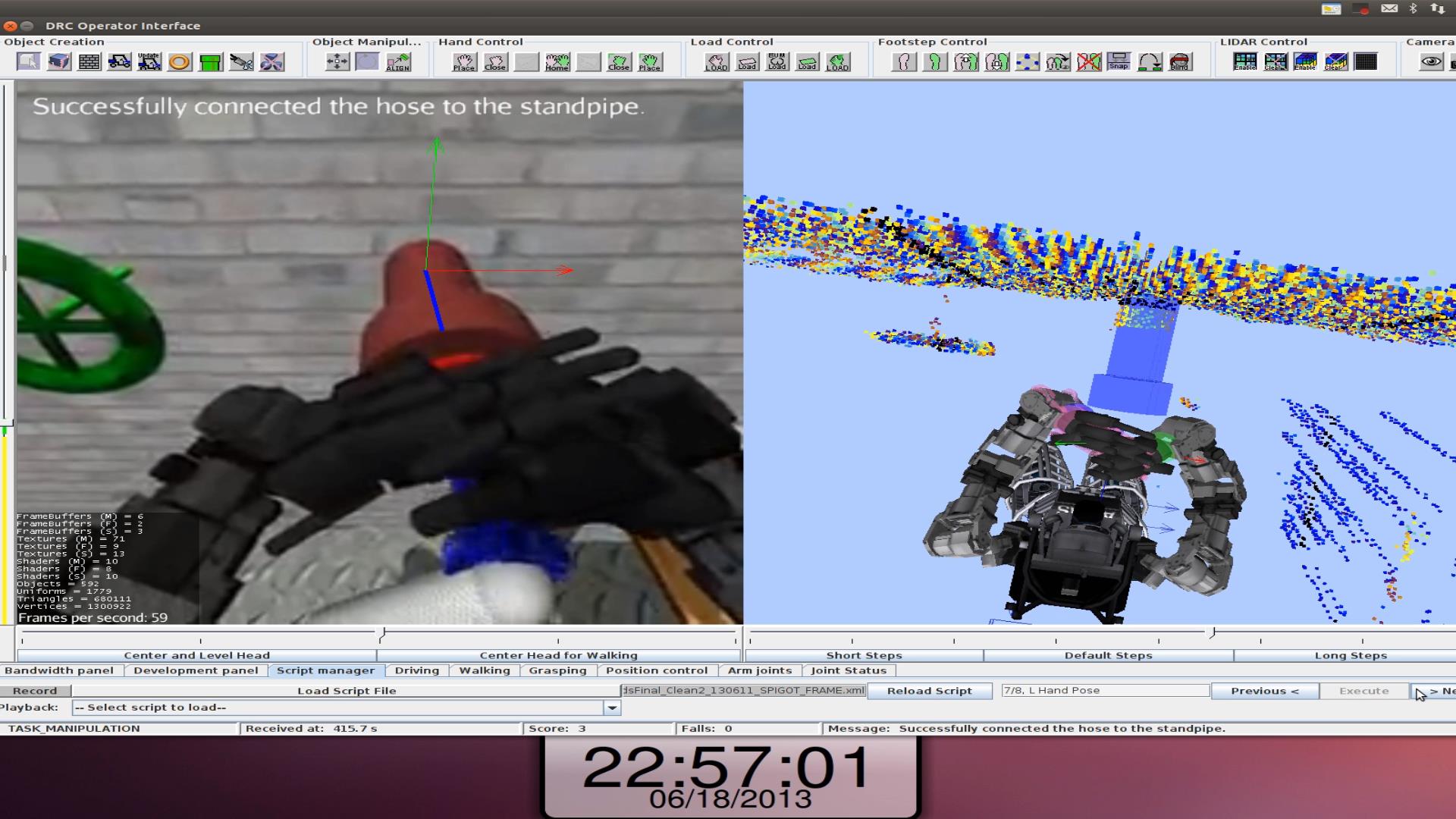

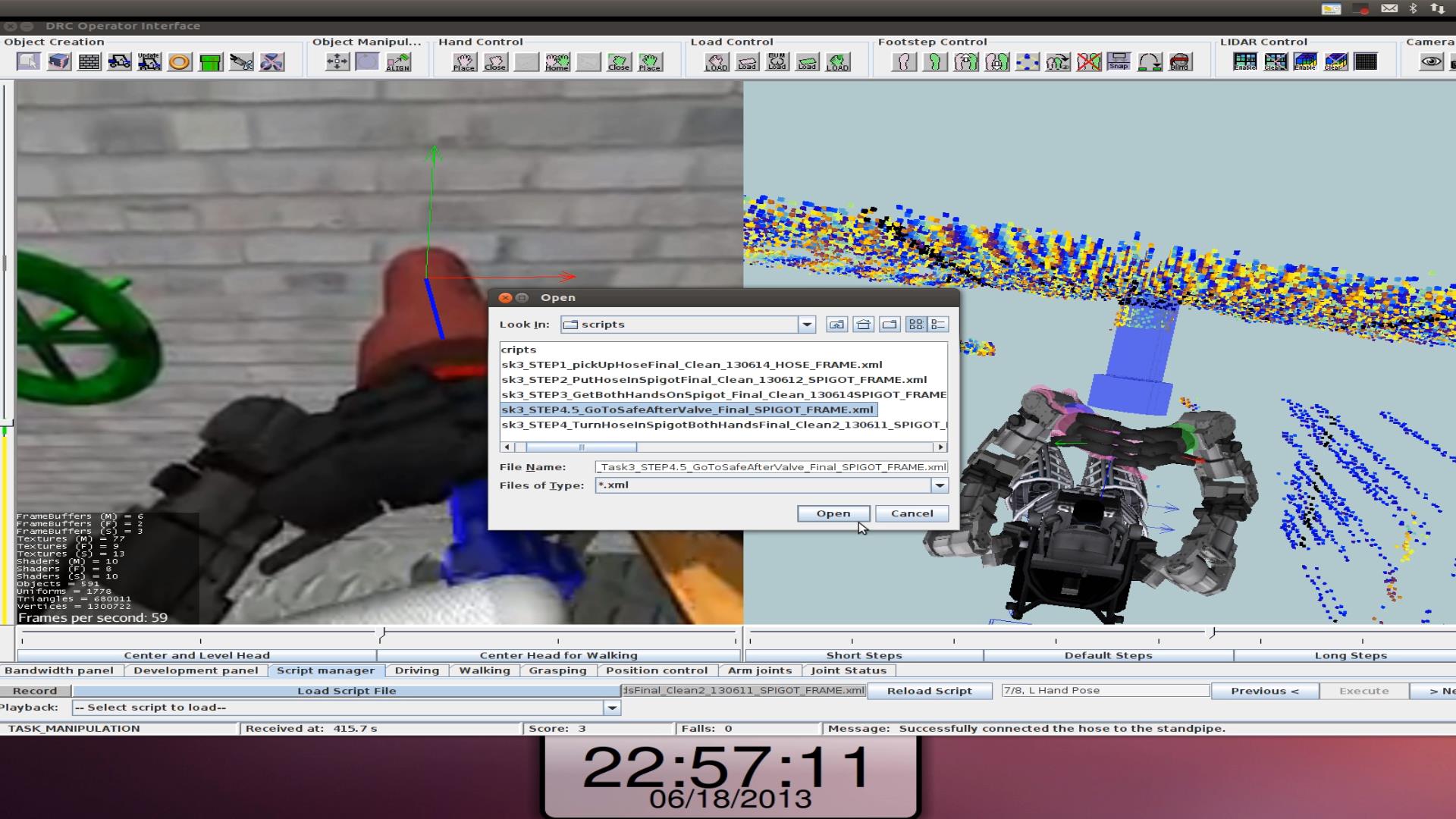

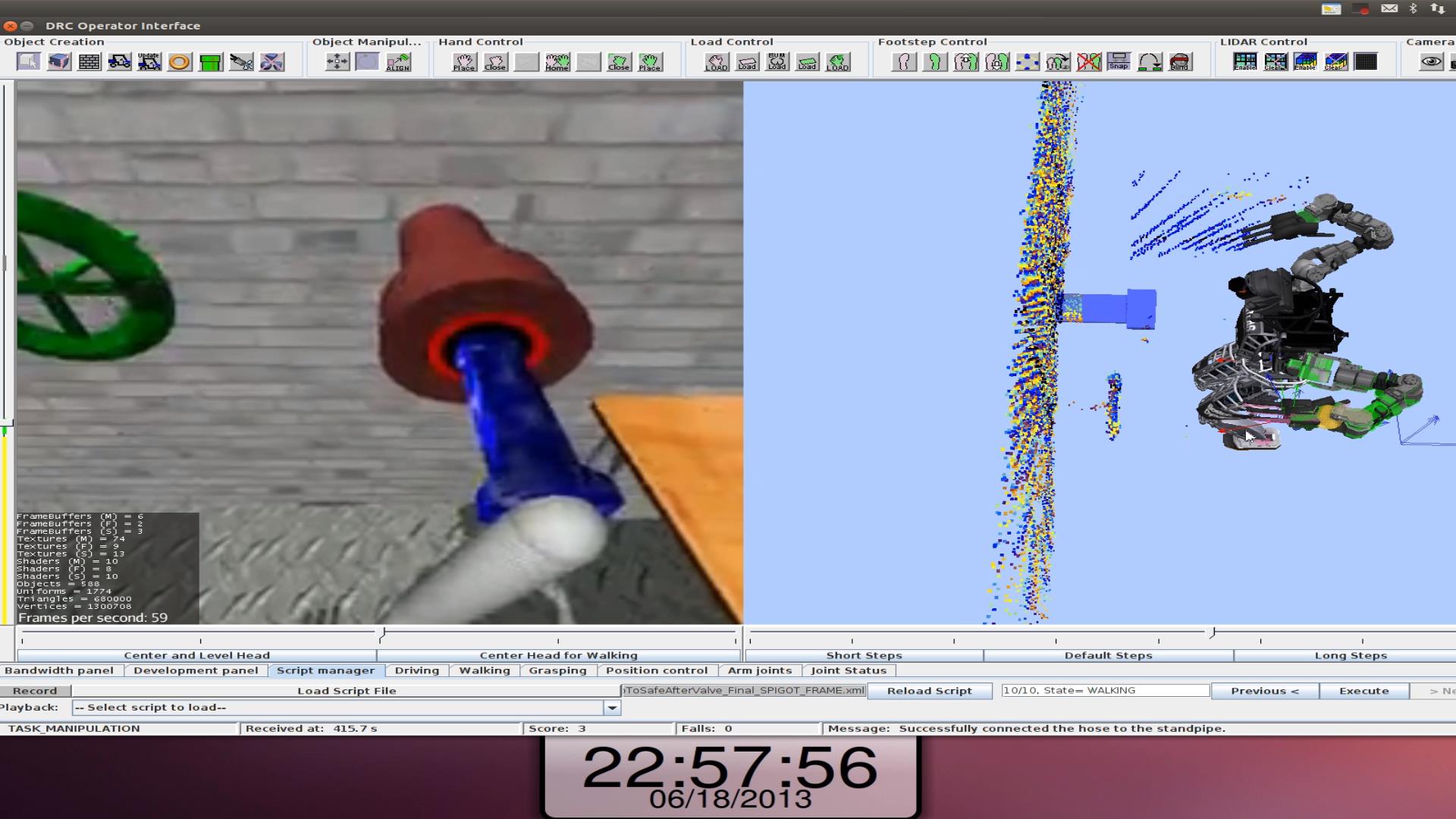

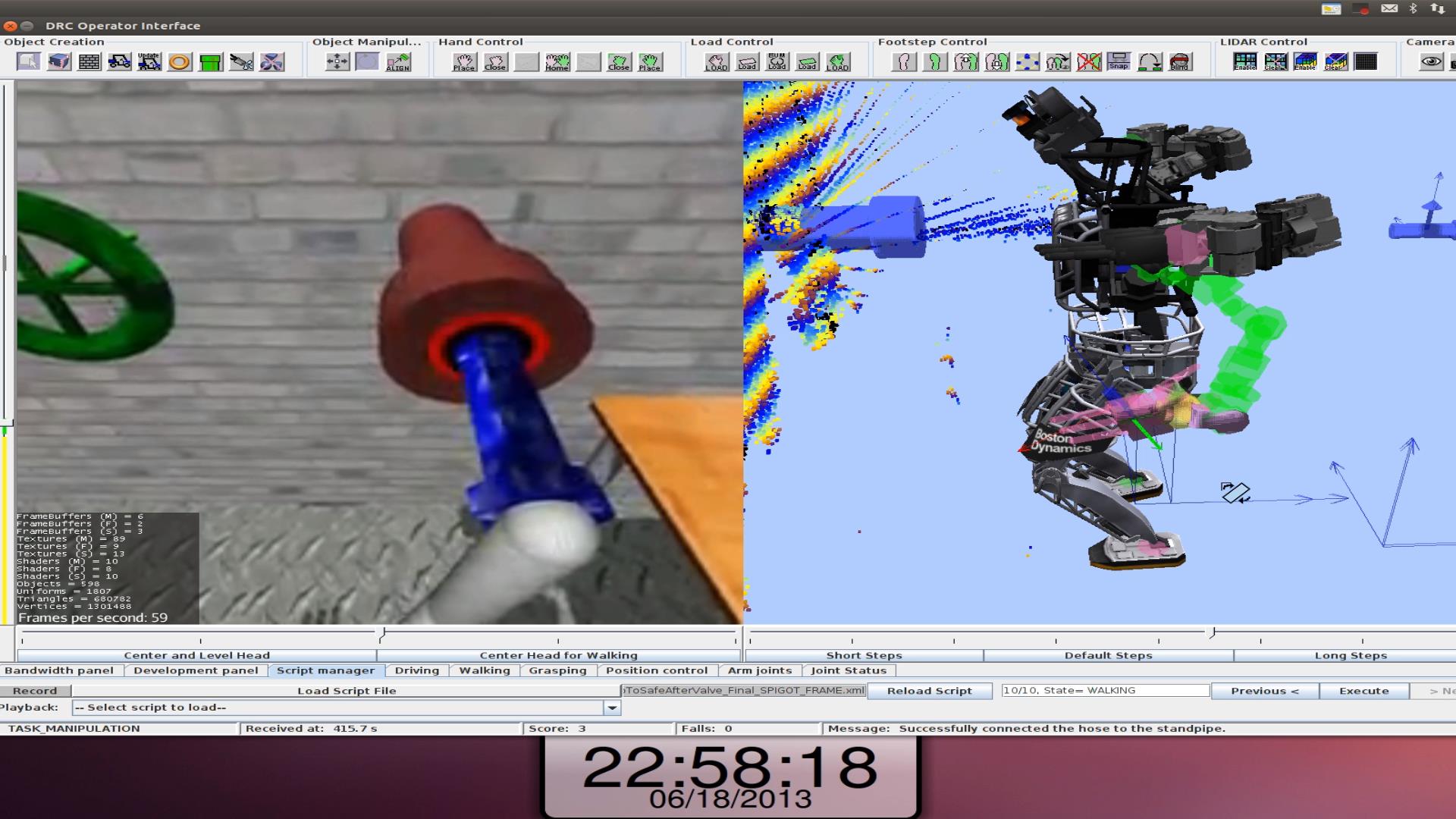

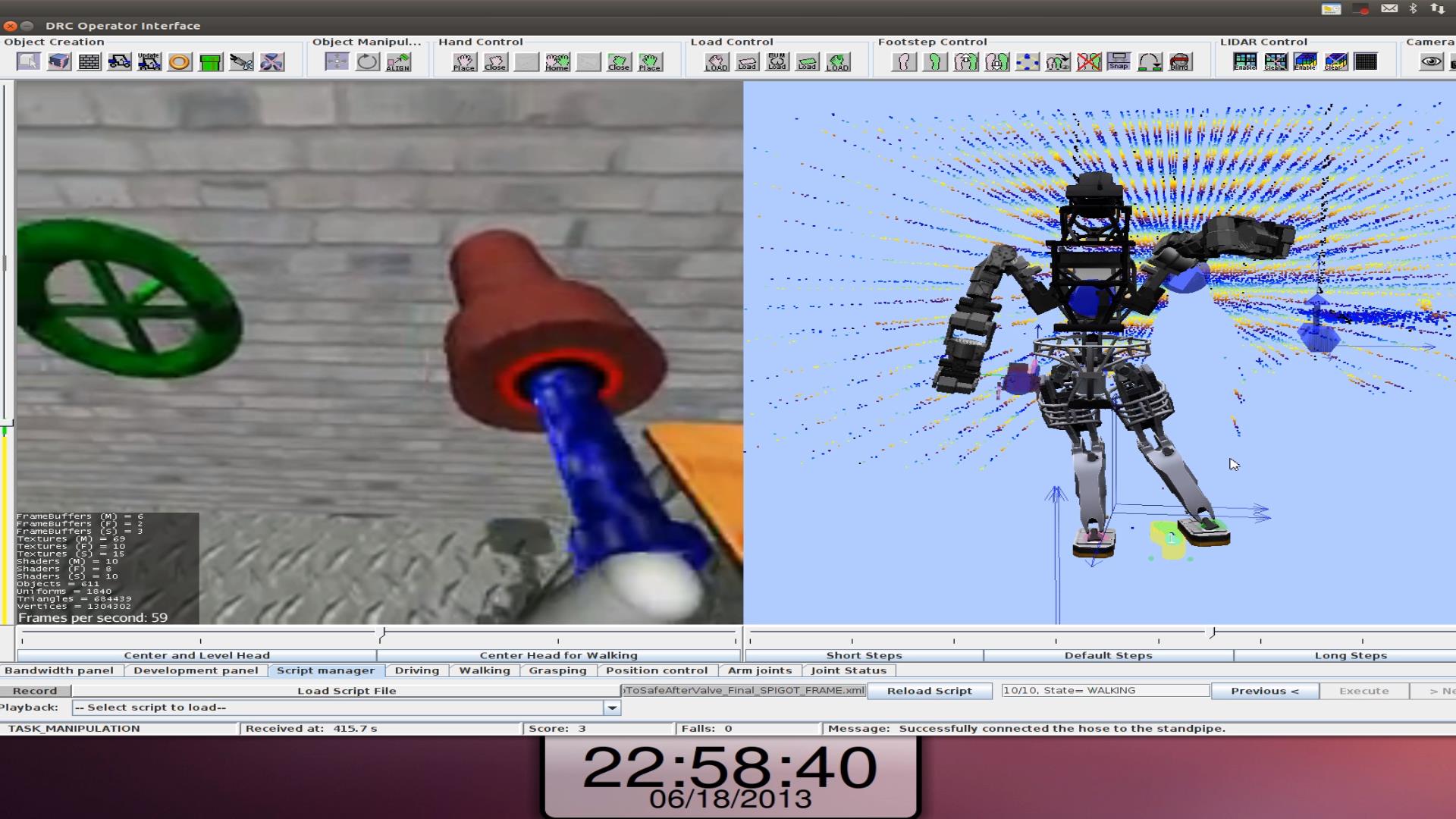

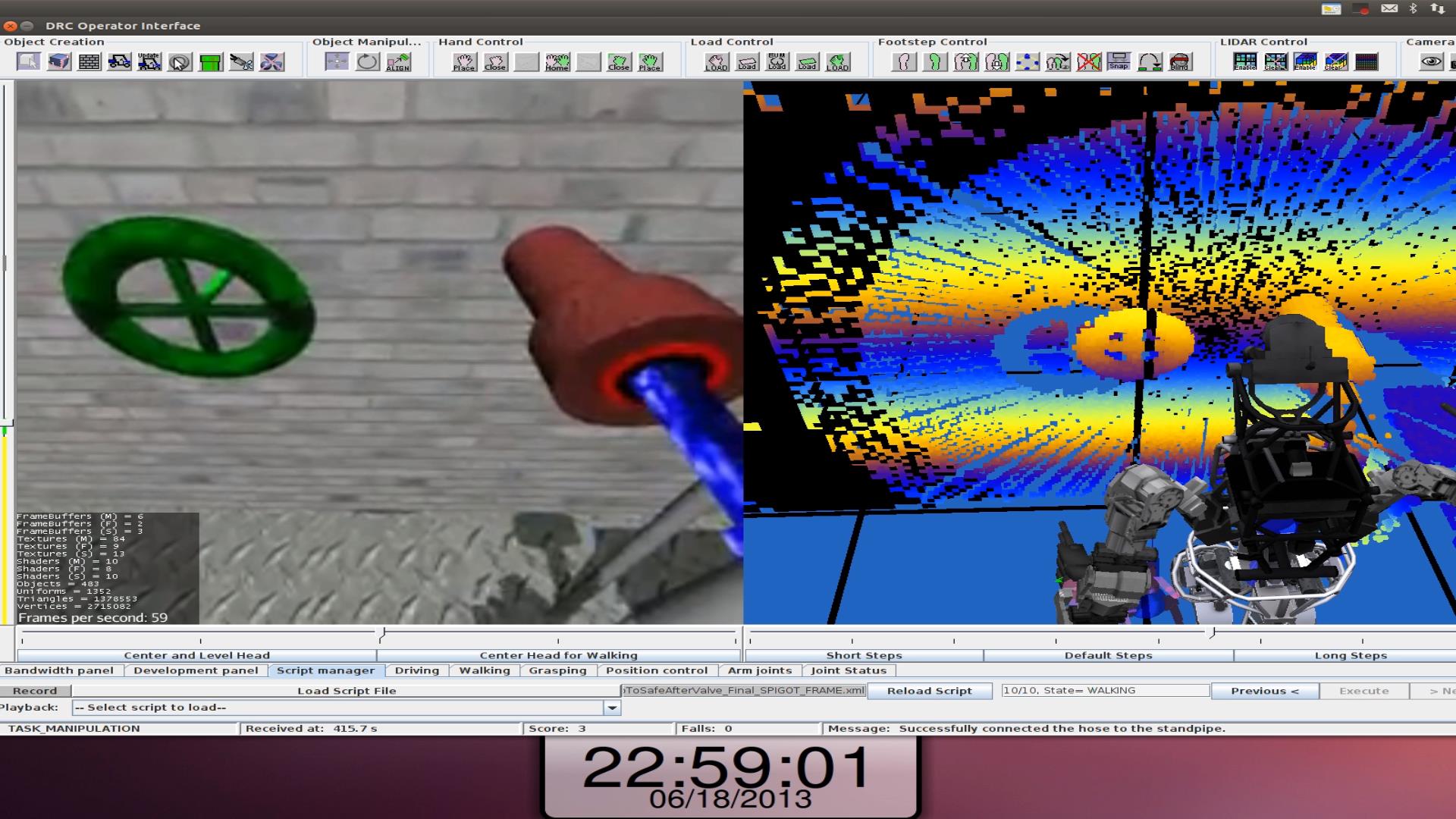

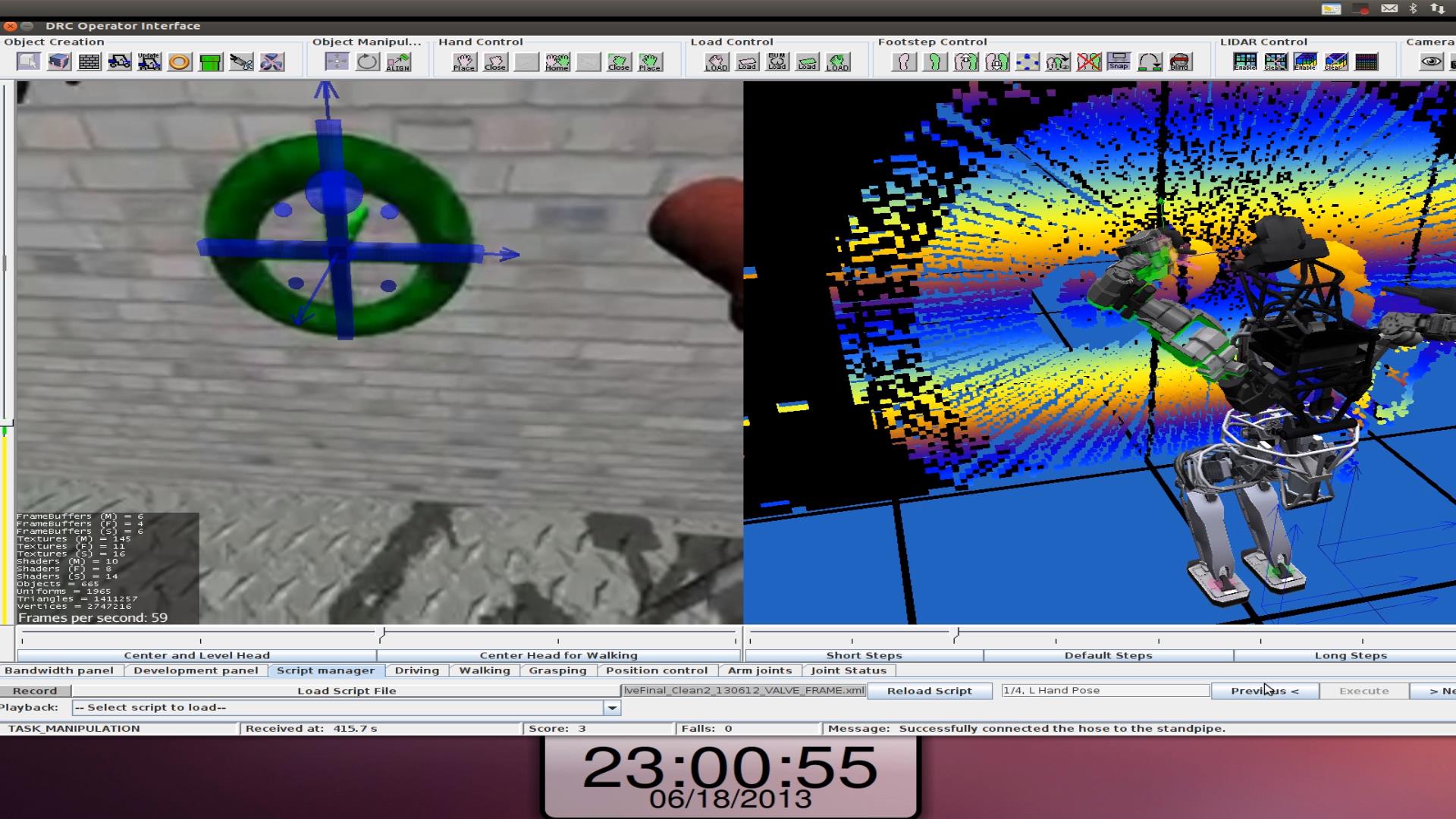

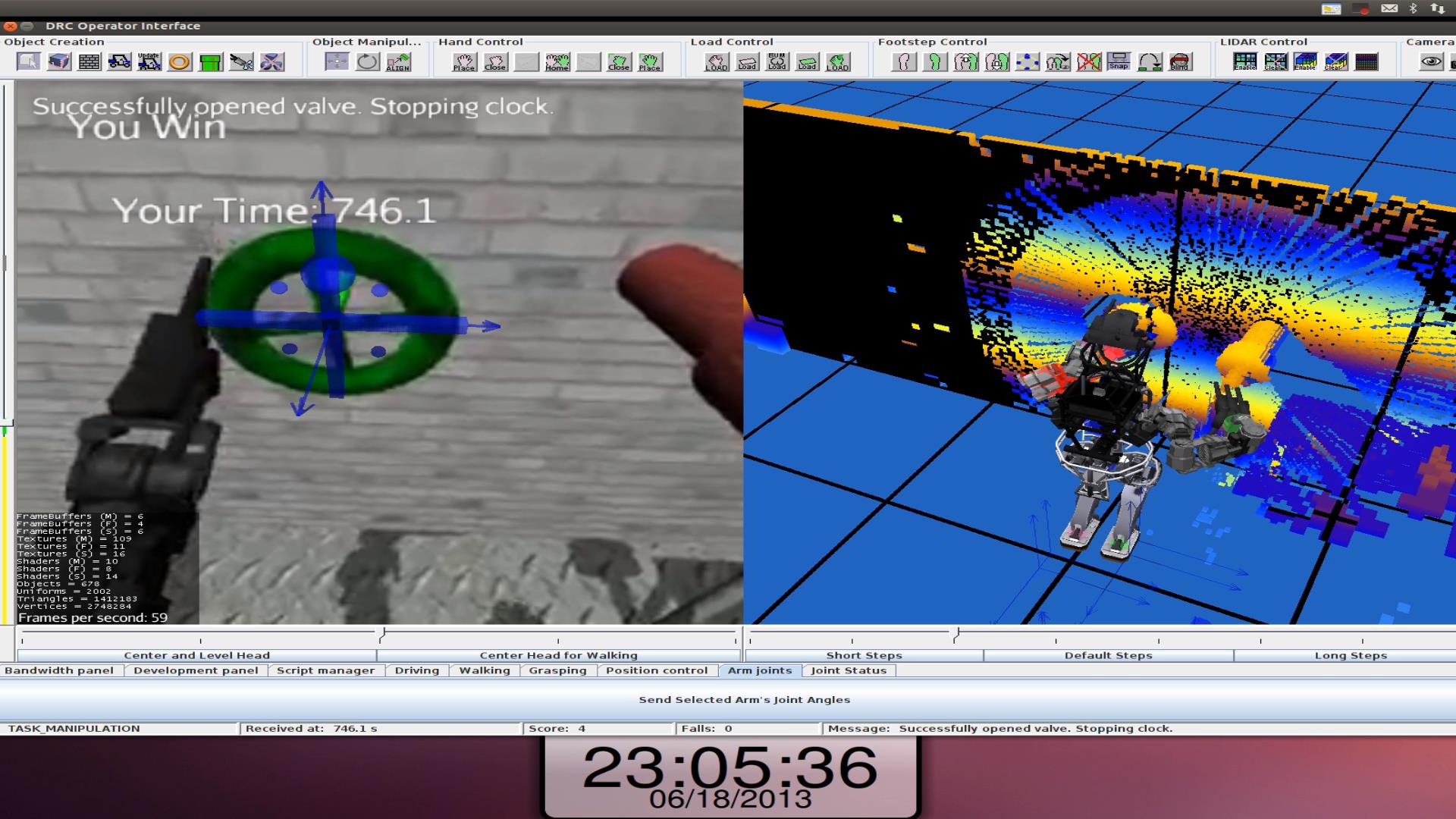

The following images are from our runs in the official VRC competition. They include screenshots from the operator interface and replays of the Gazebo logs showing what actually happened. There were 15 runs in total, 5 for each of 3 tasks: driving, walking, and connecting and turning on a hose. General images for each task show different features of the task and how we approached them. In addition, some image sequences have been selected from a single run to show the progression through a task.

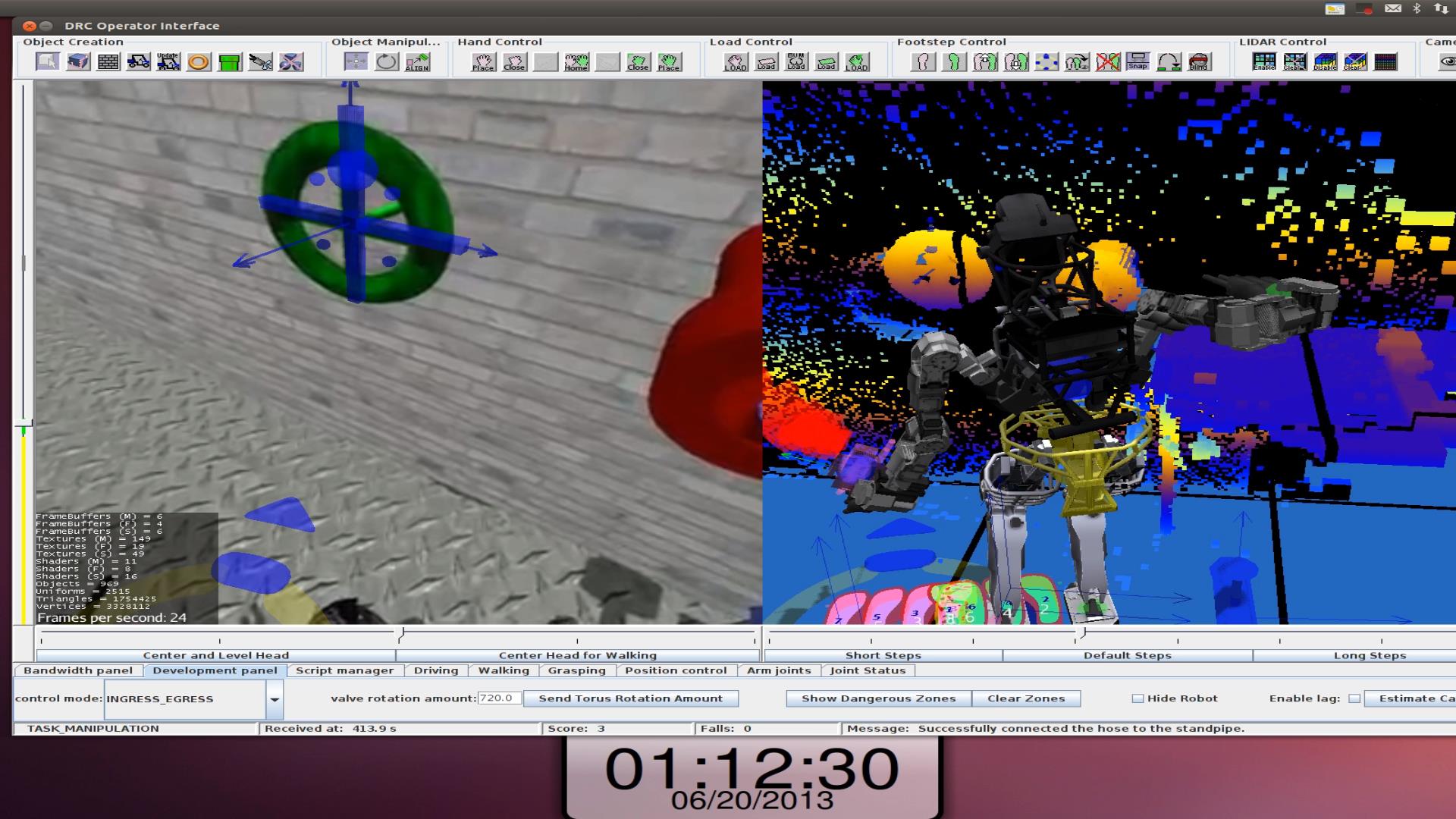

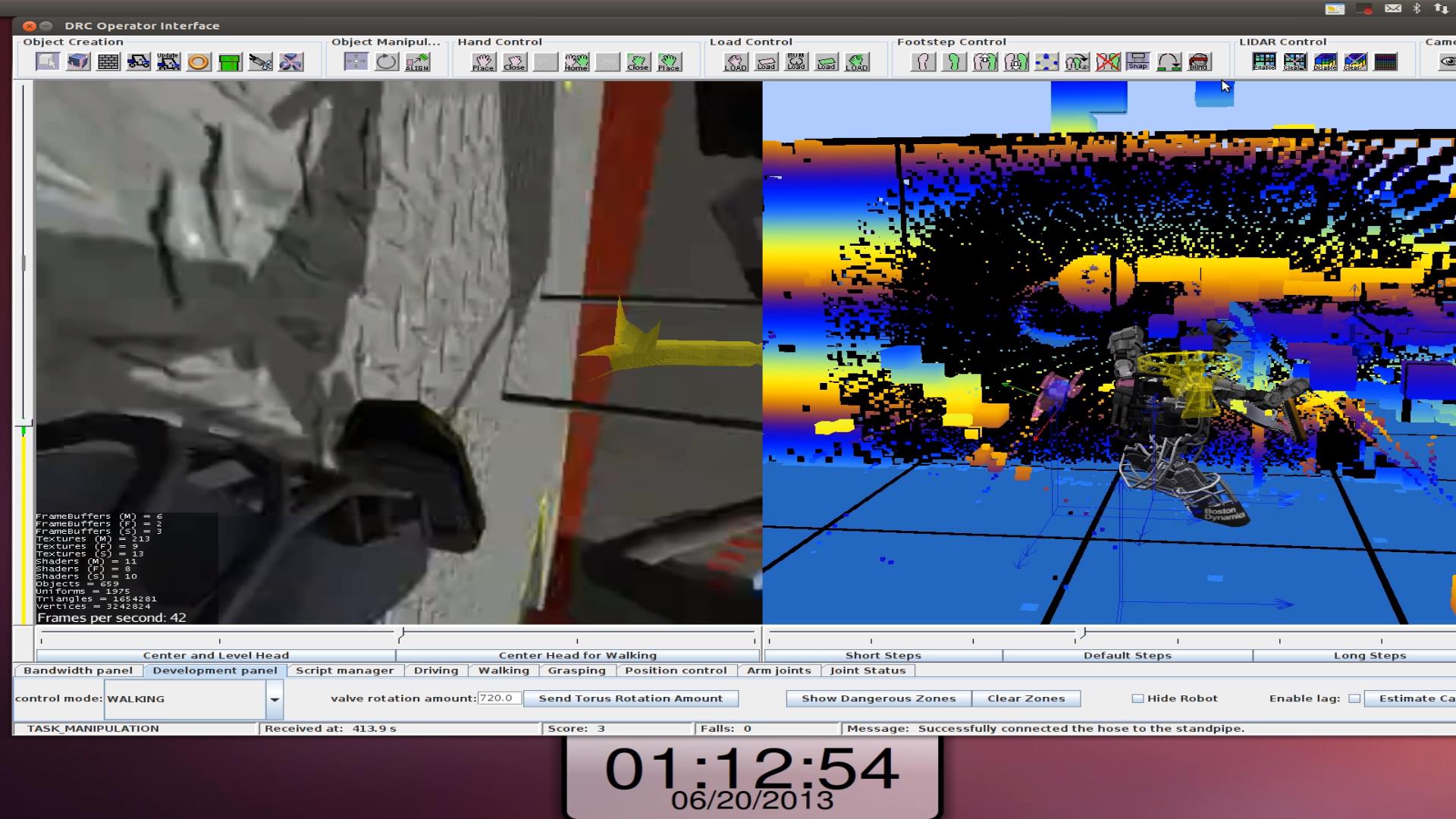

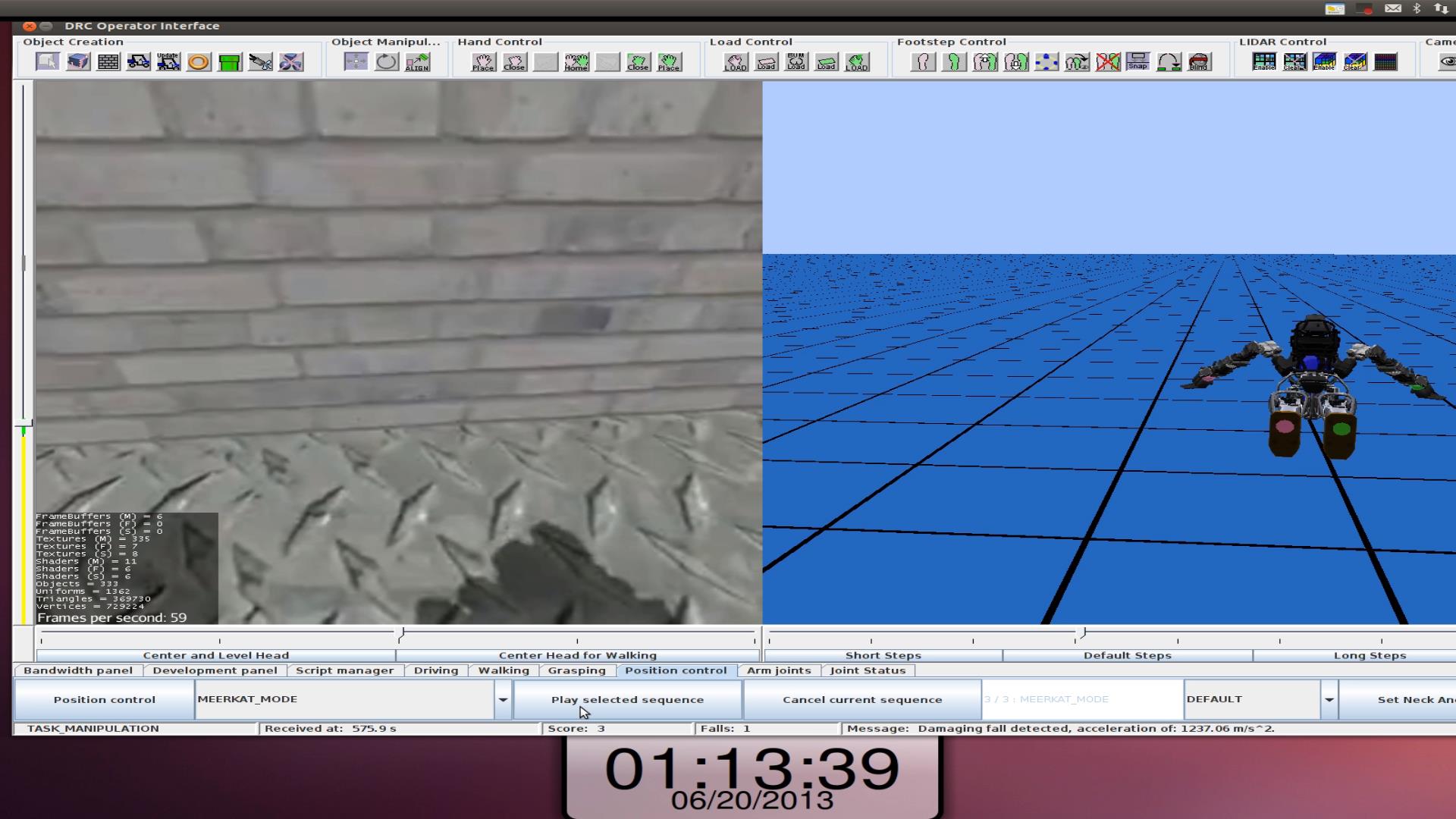

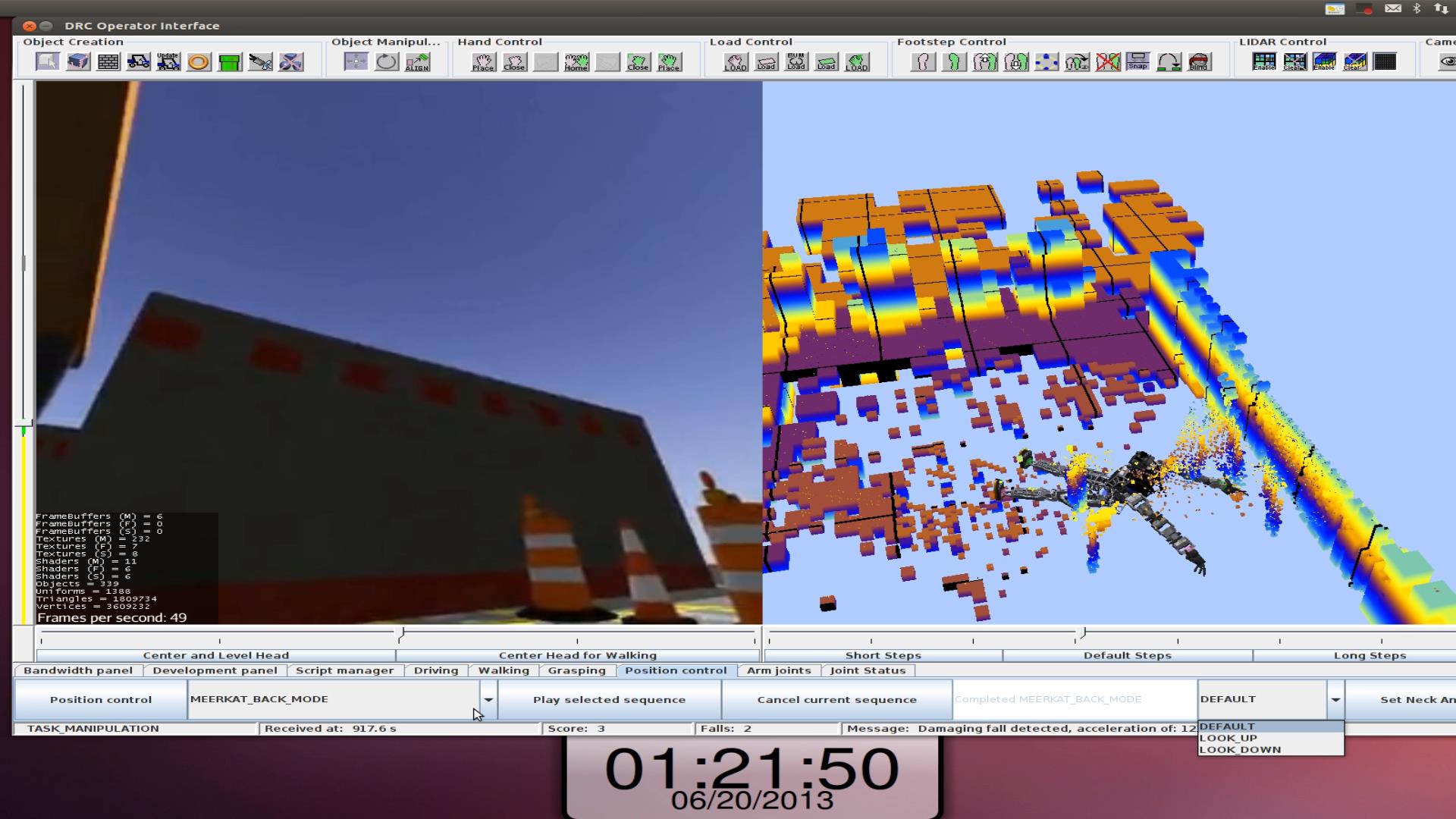

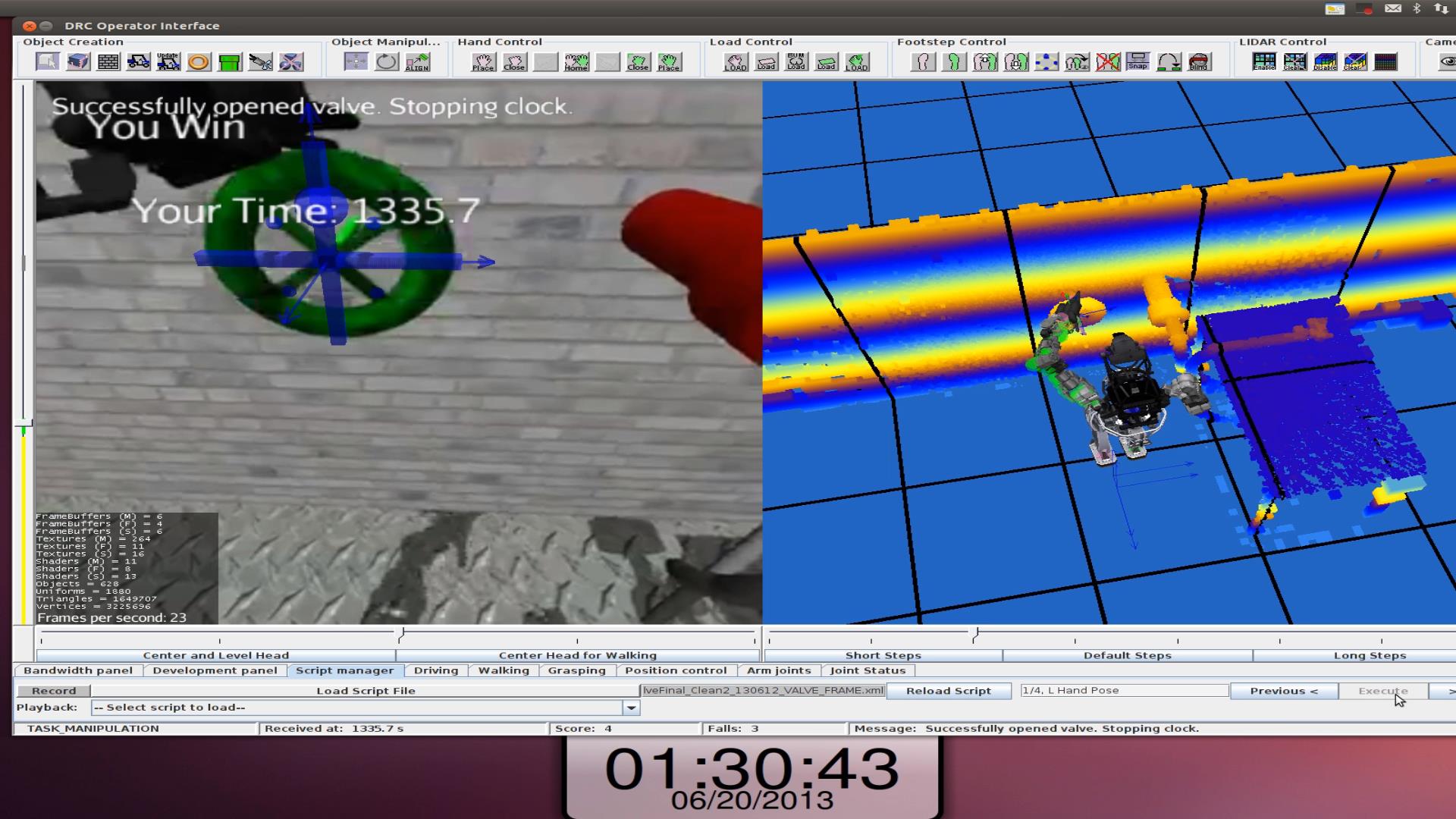

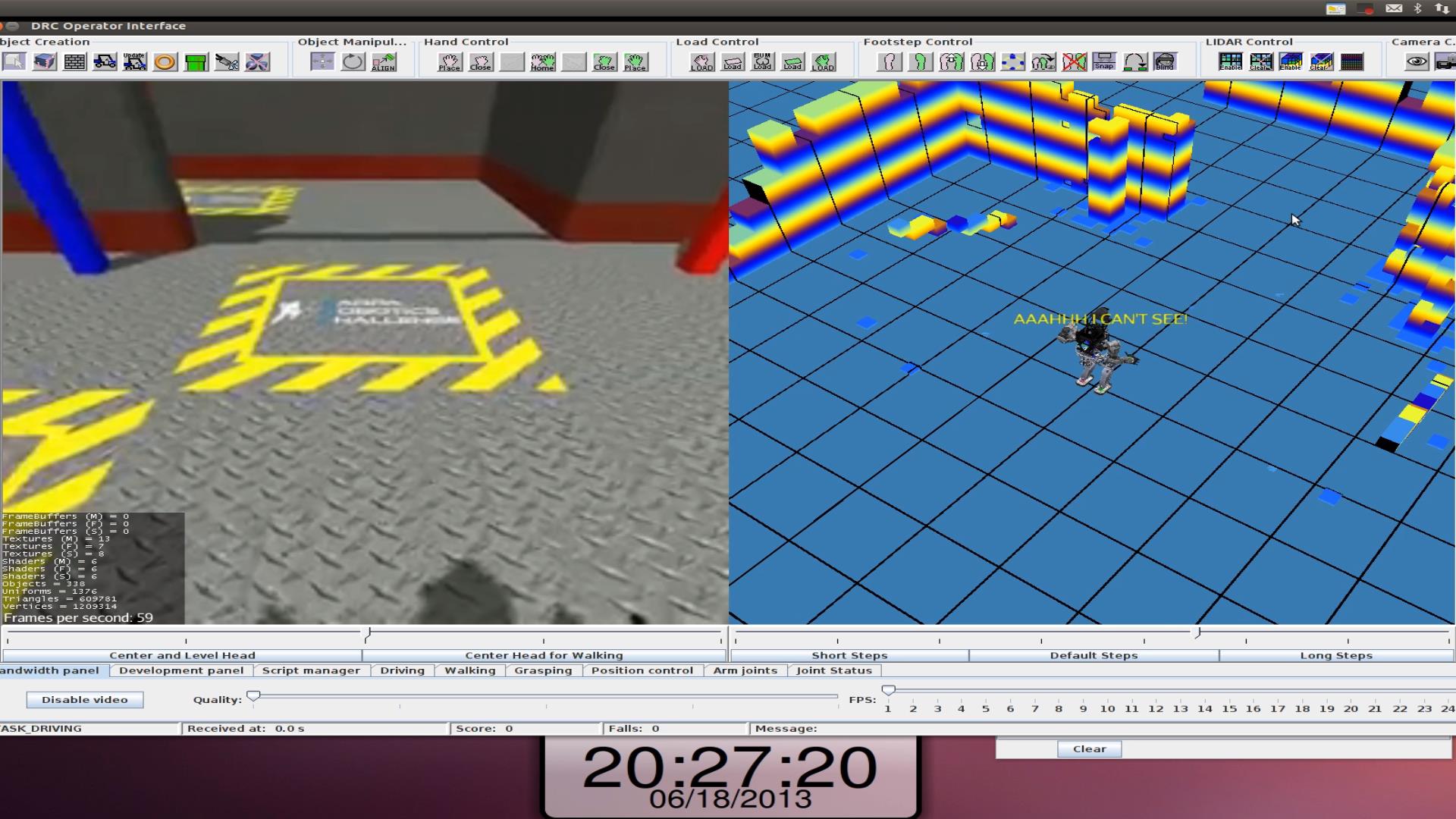

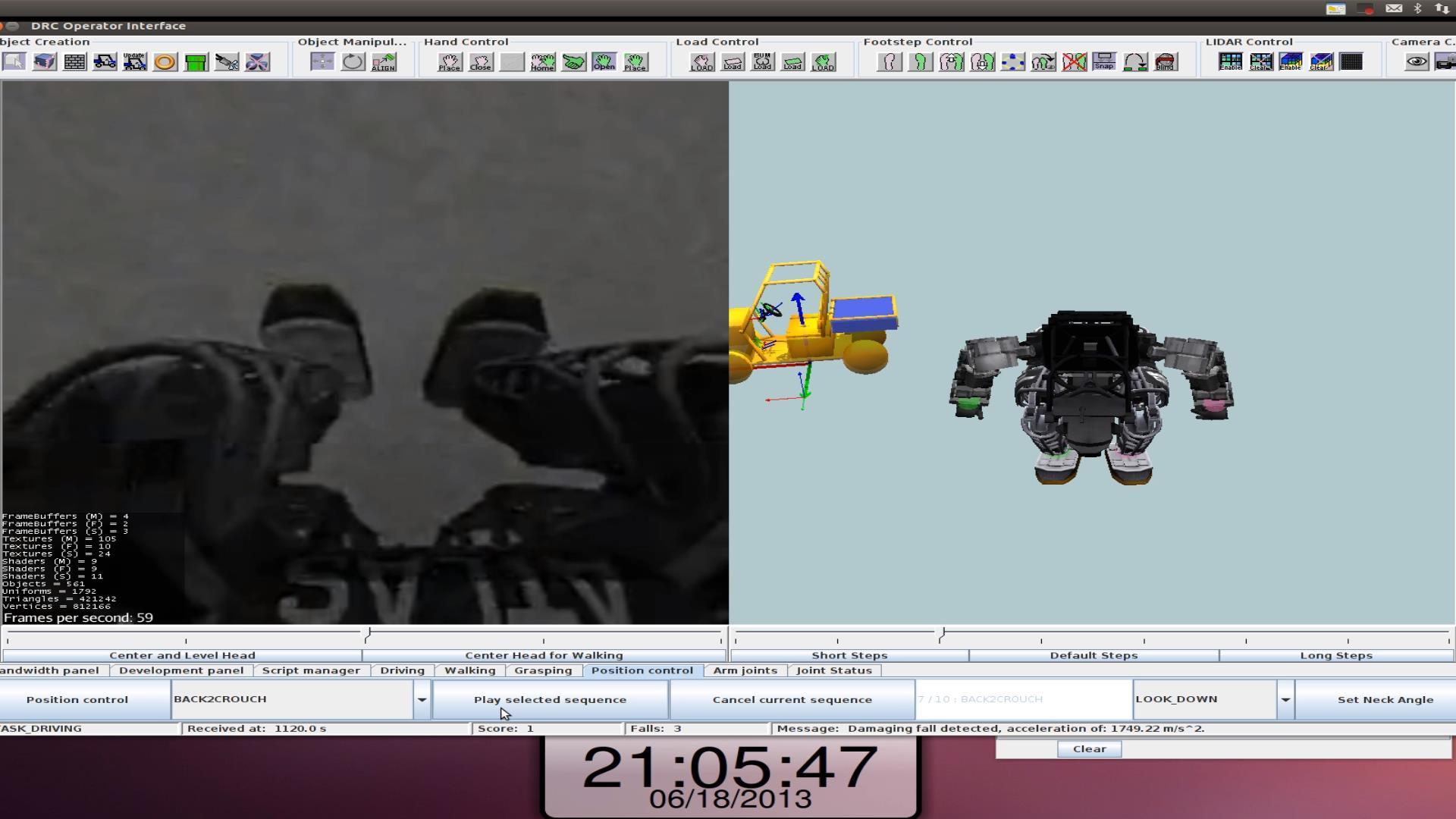

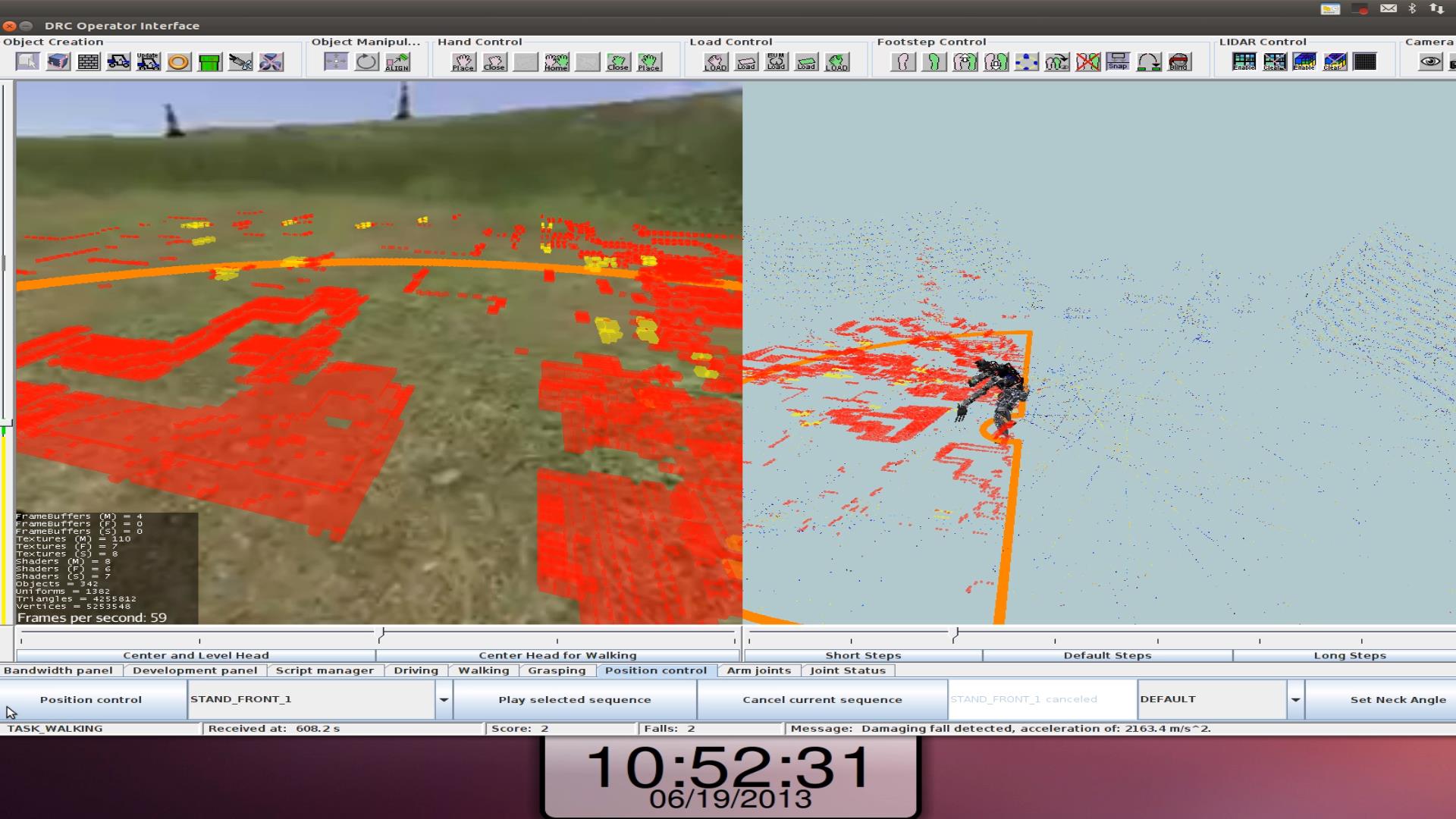

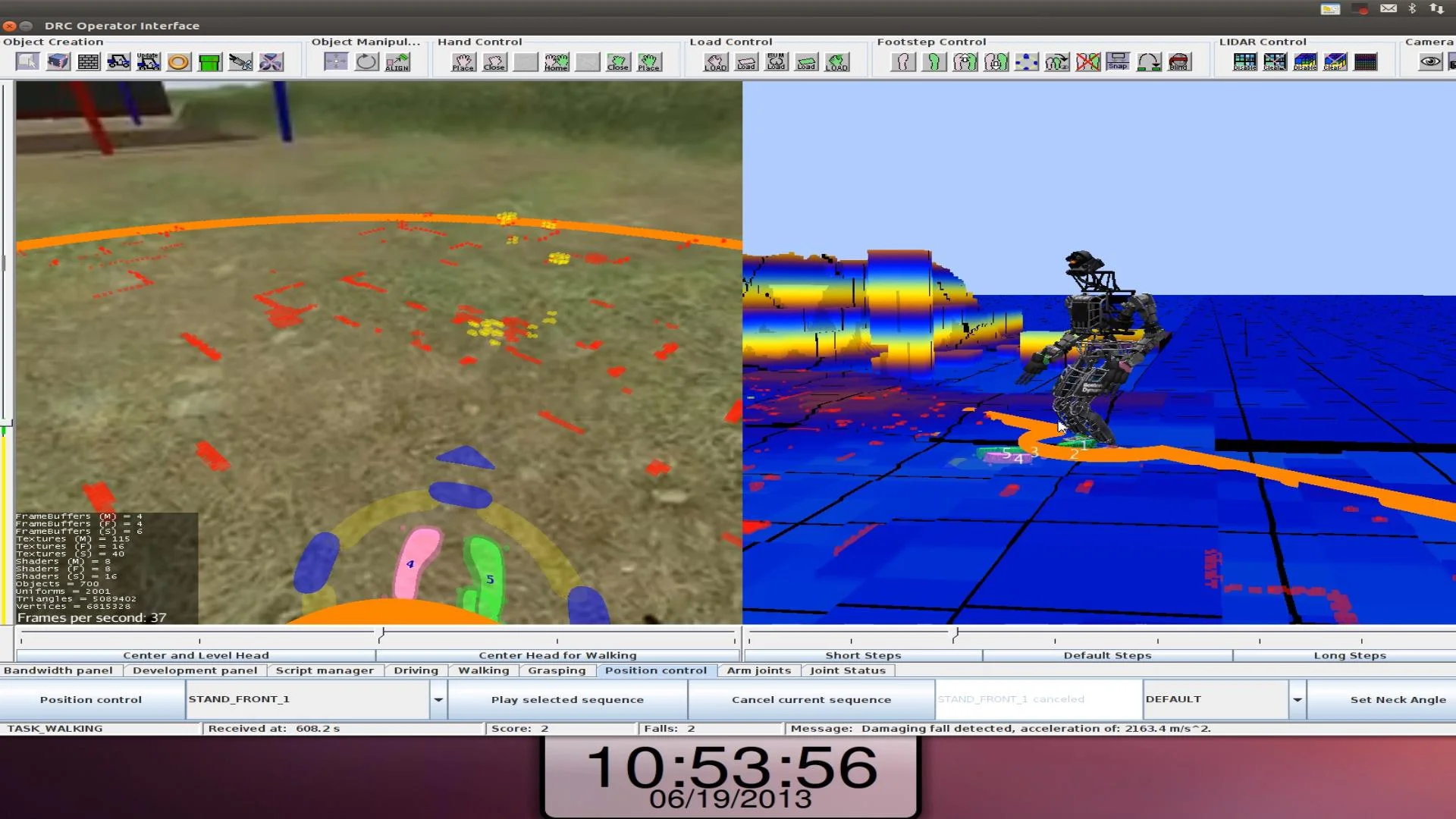

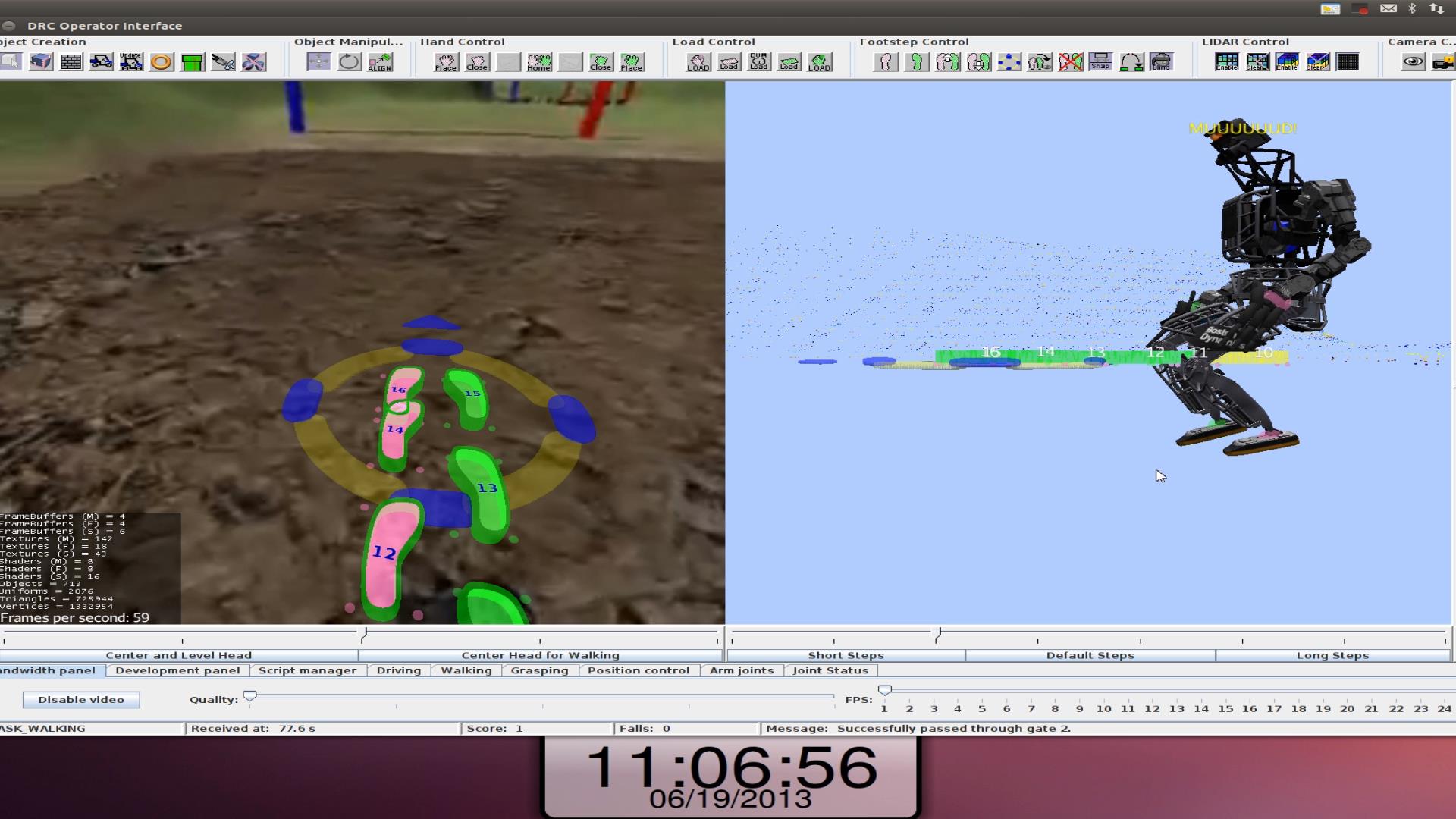

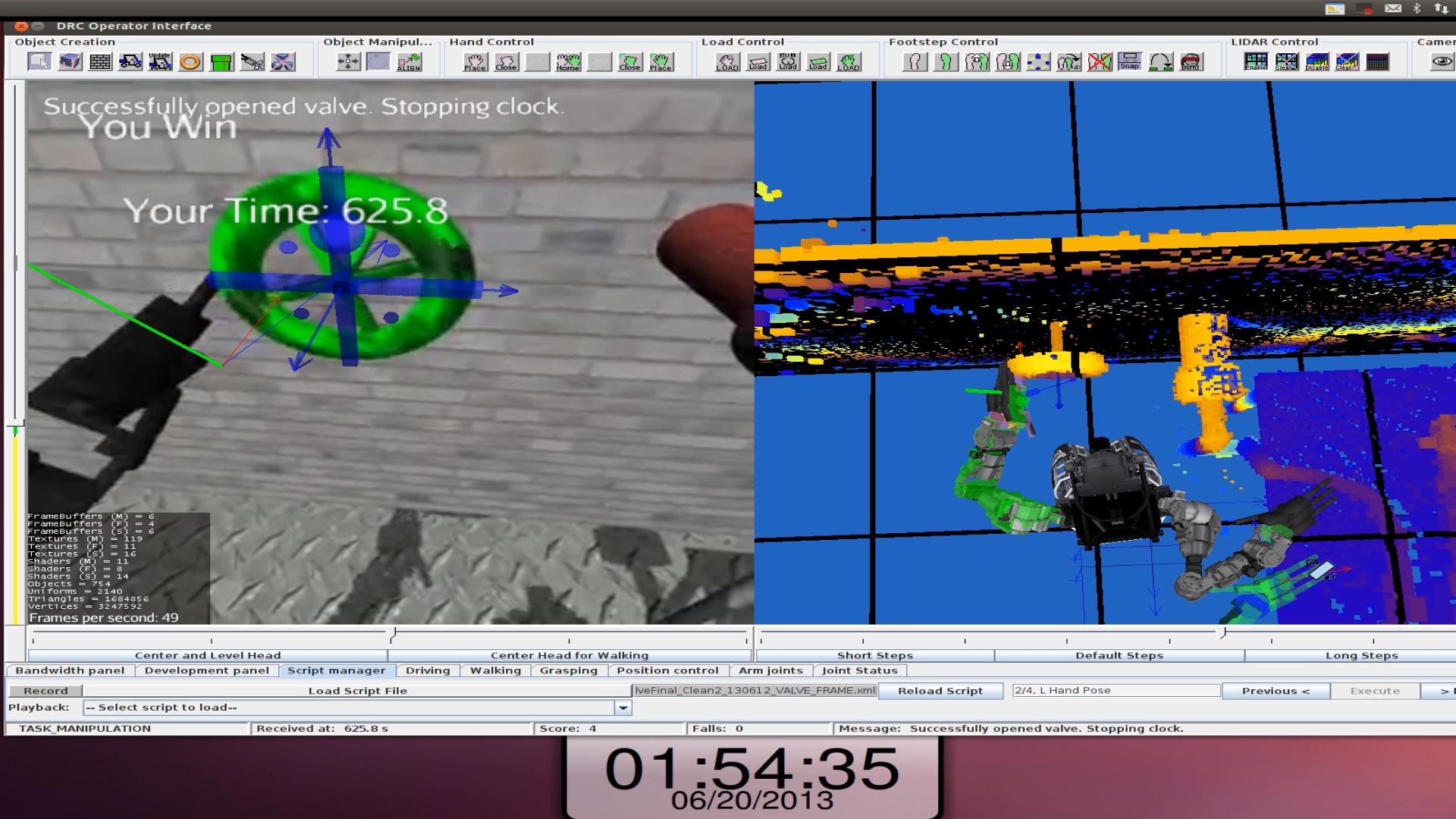

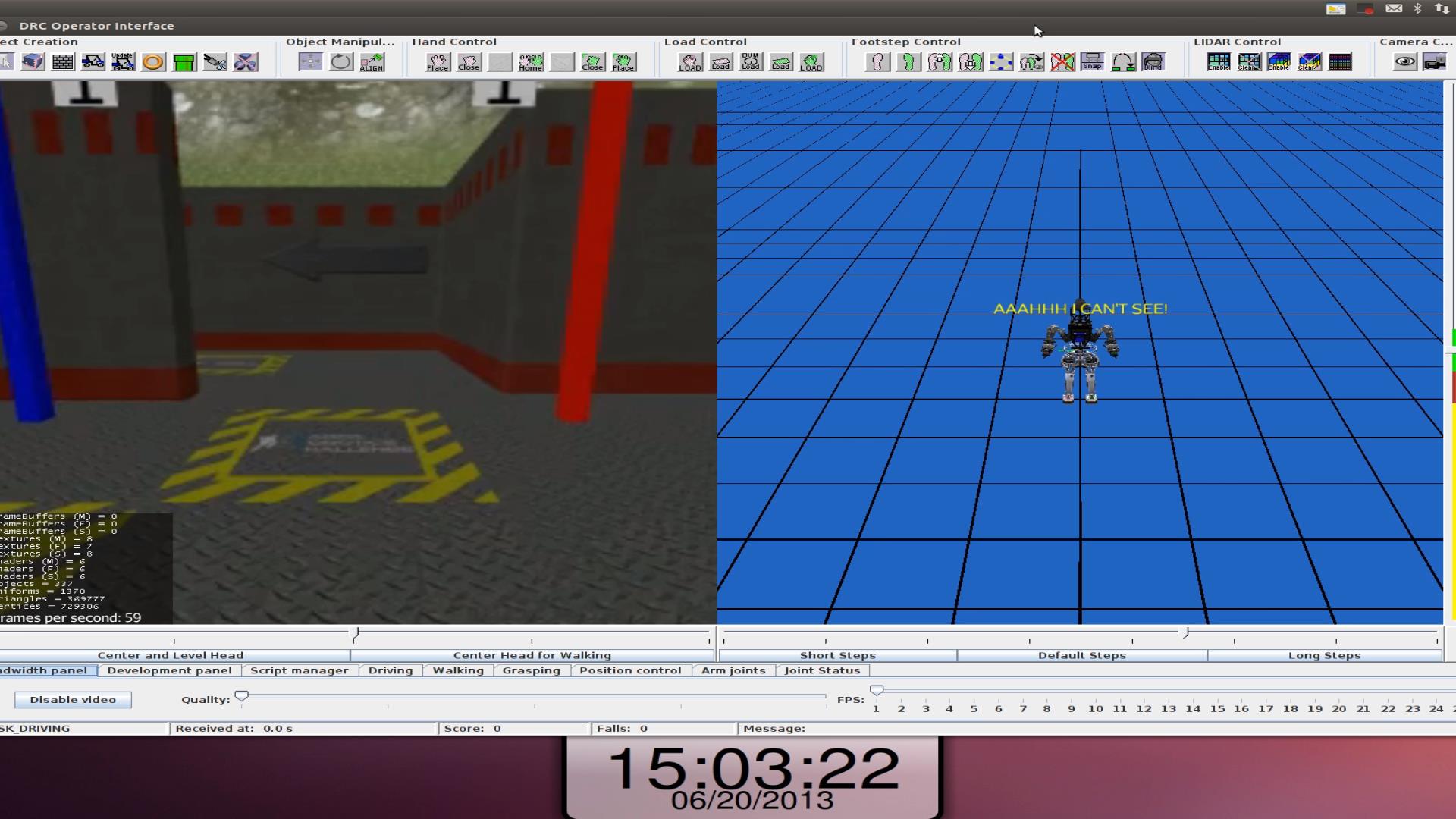

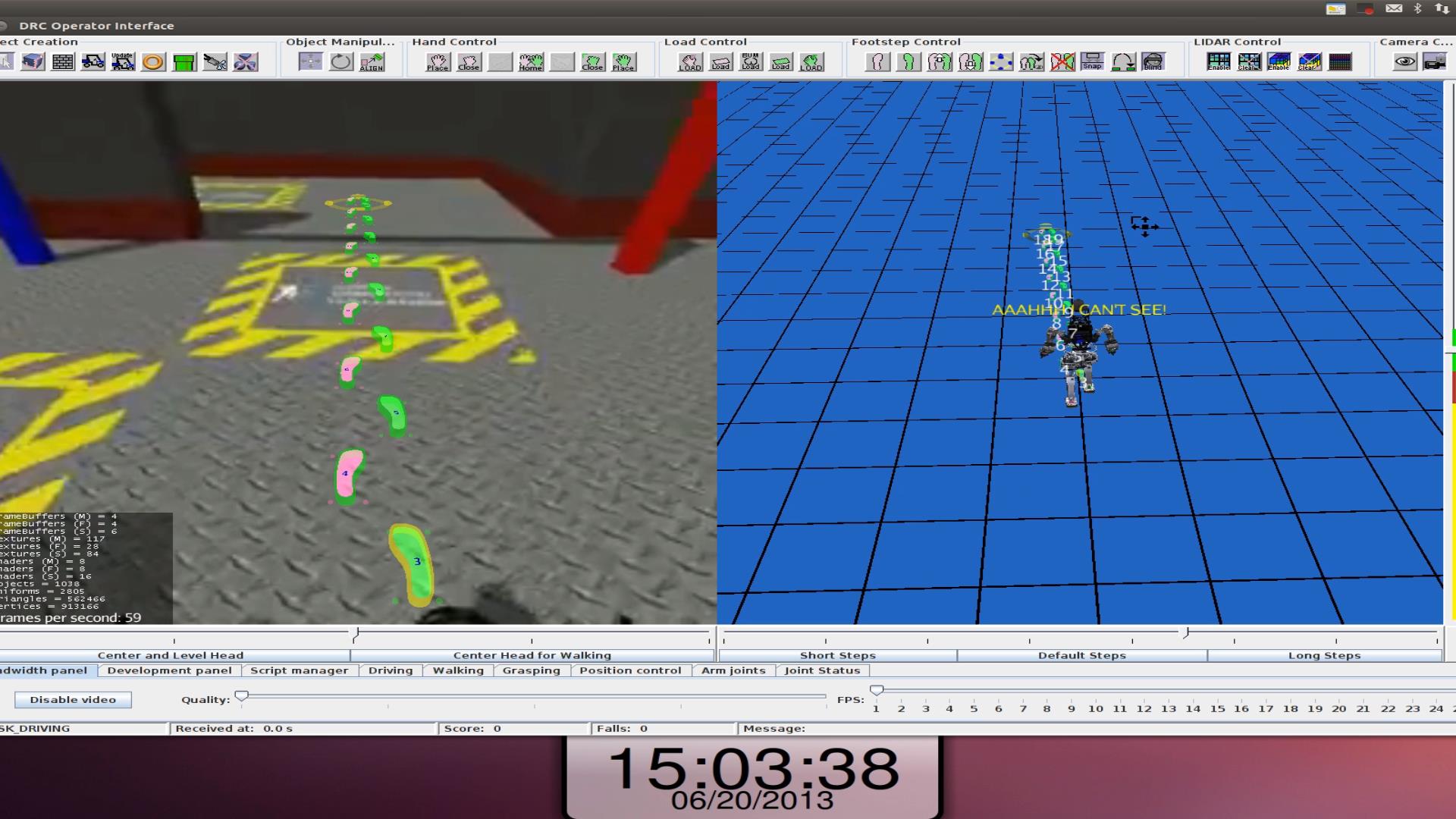

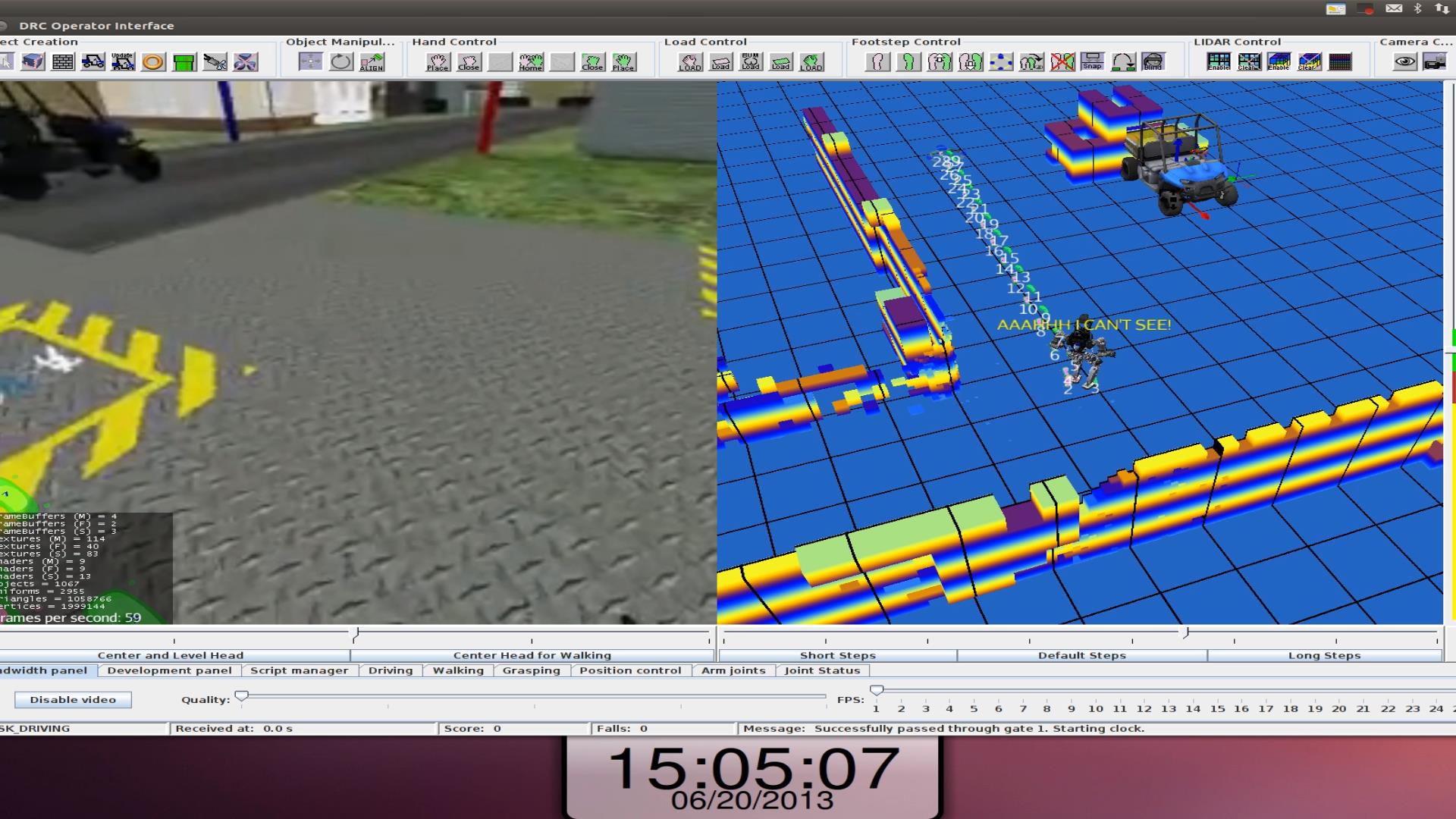

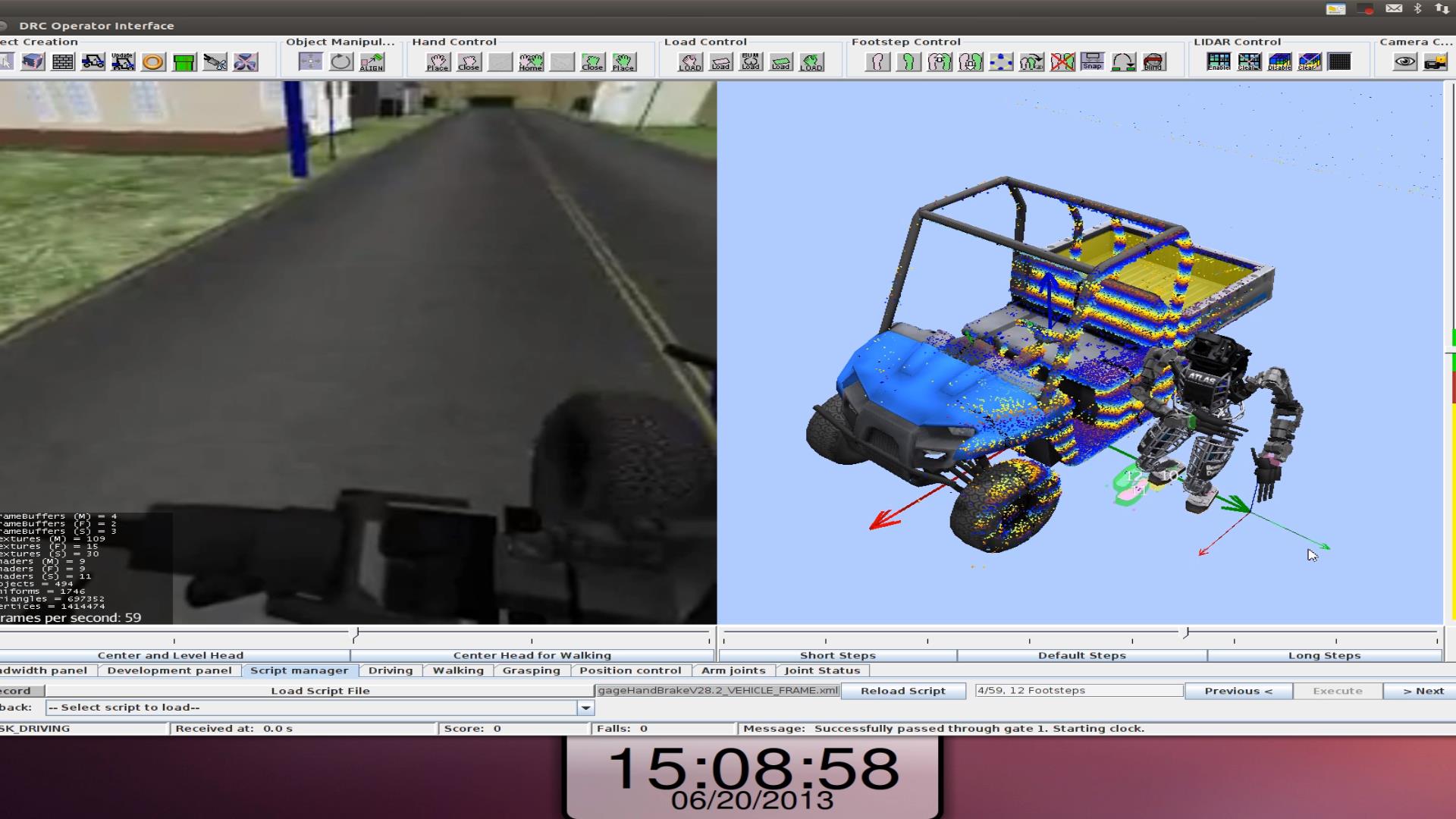

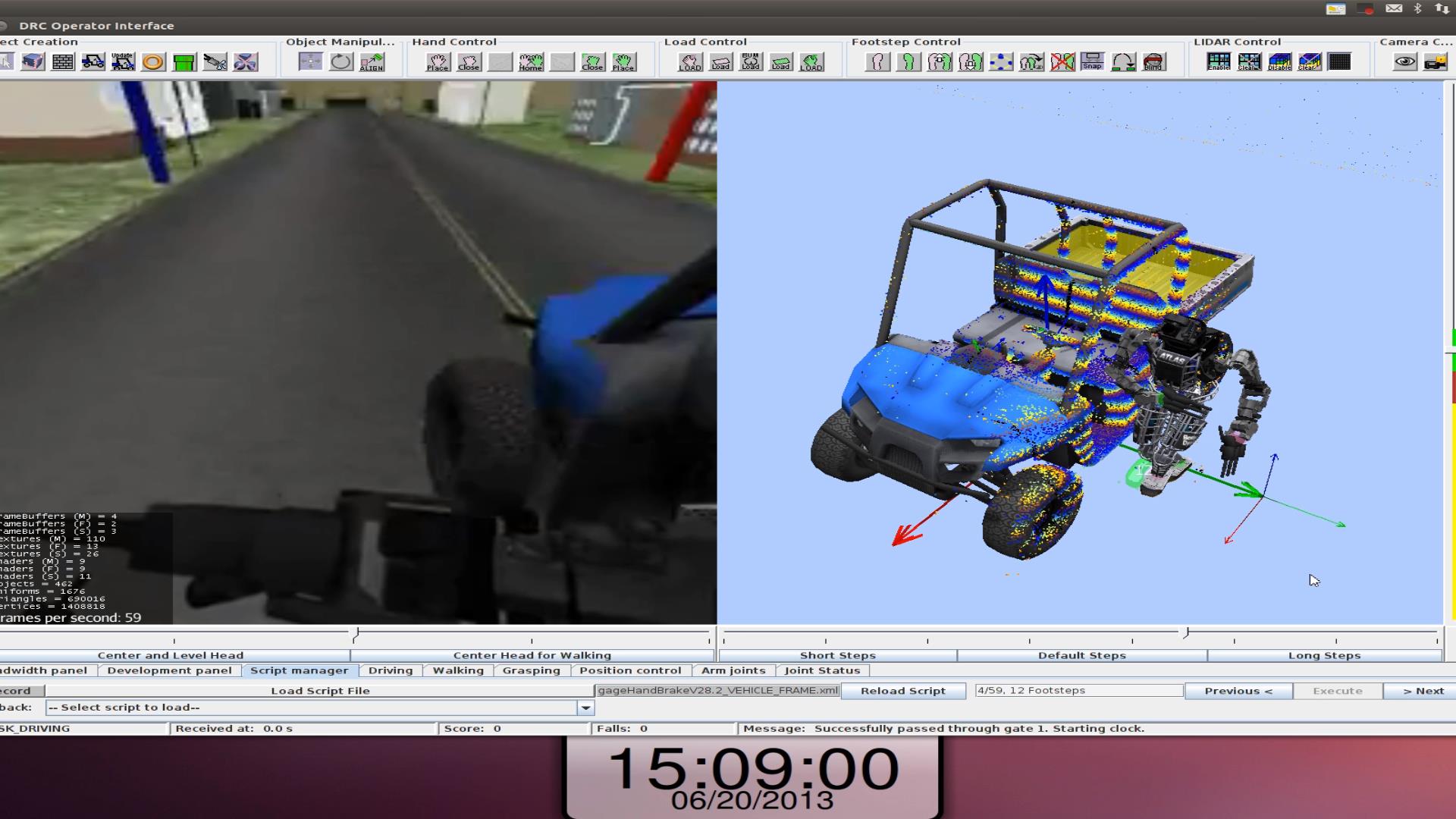

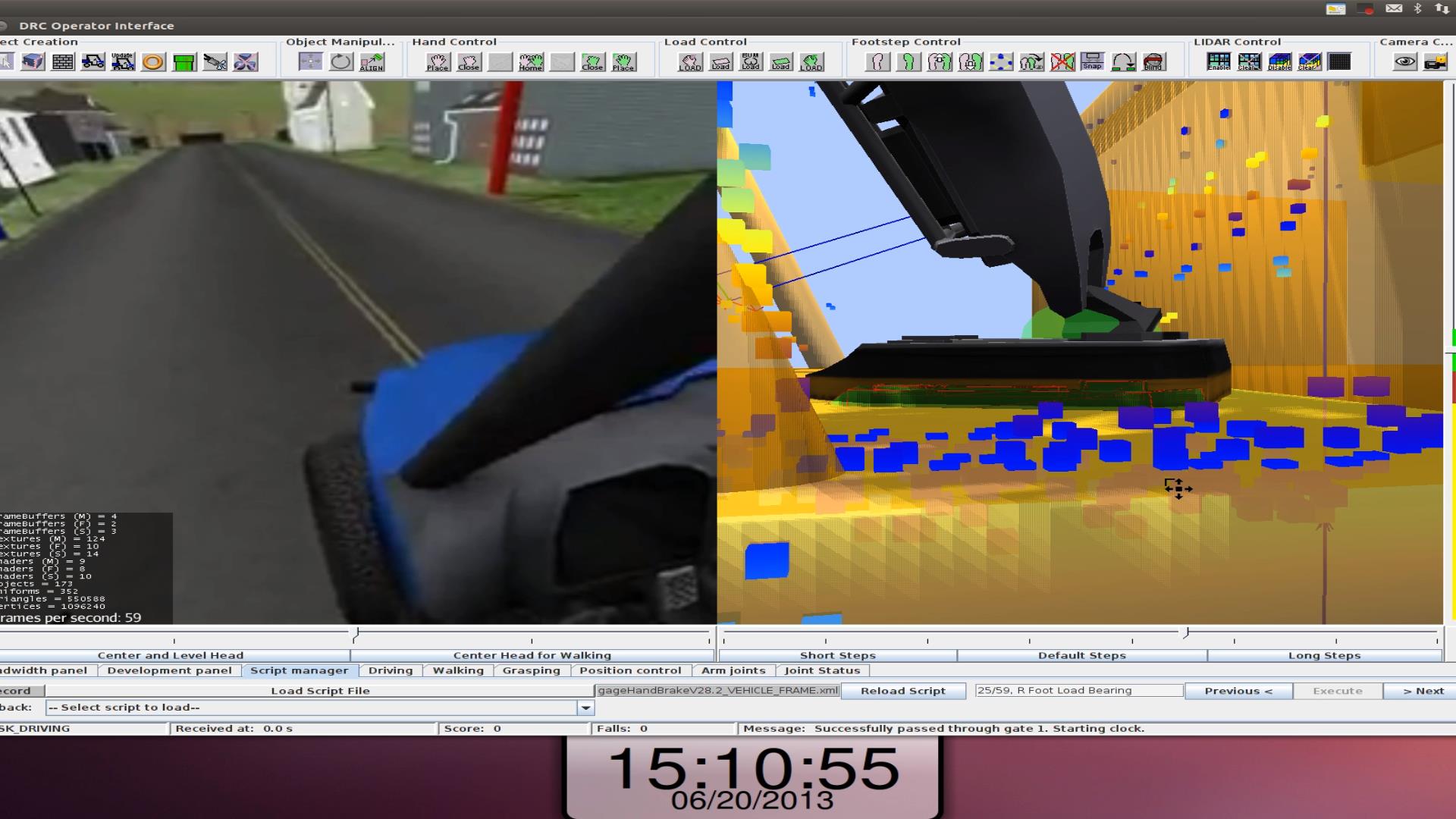

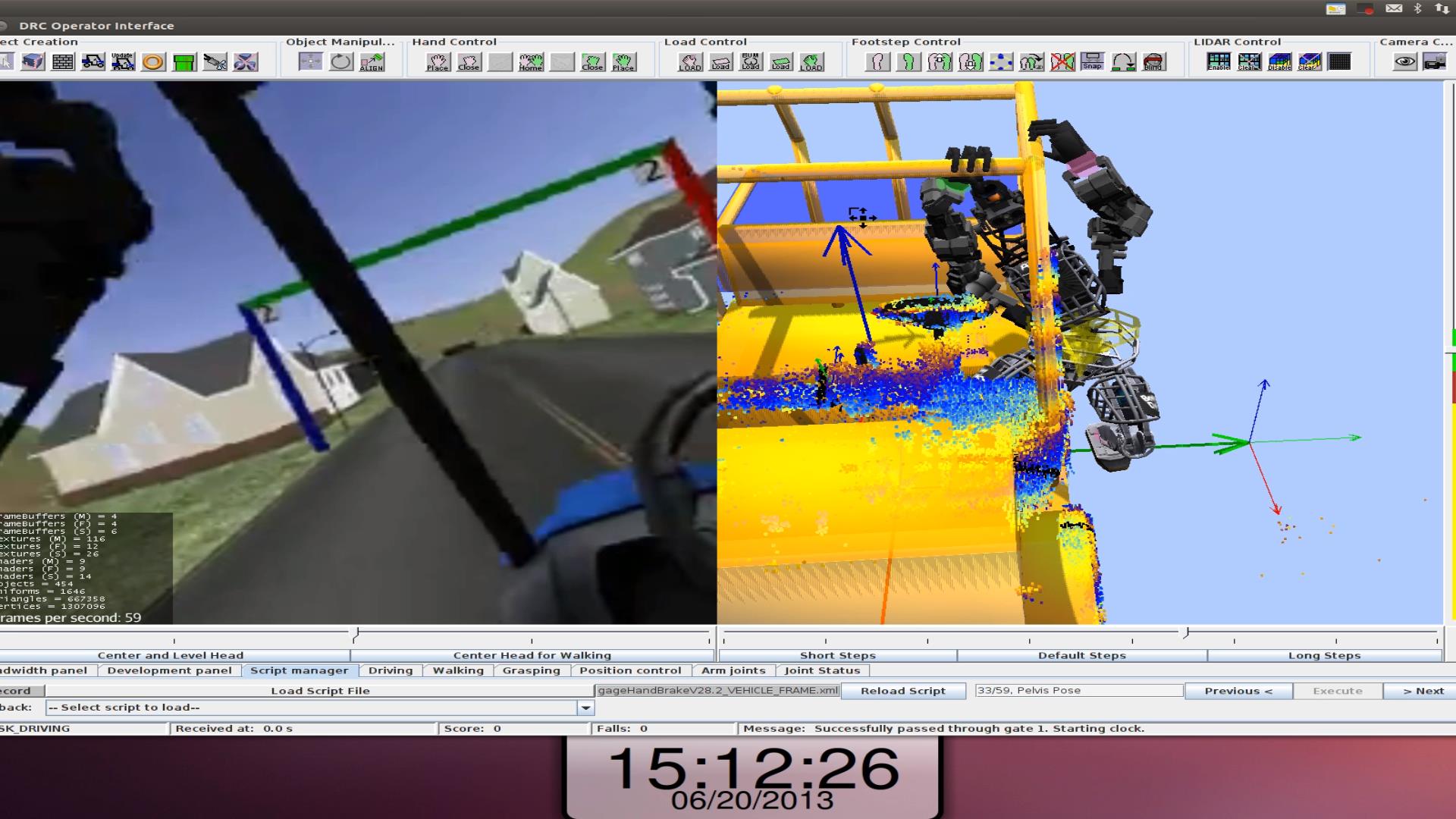

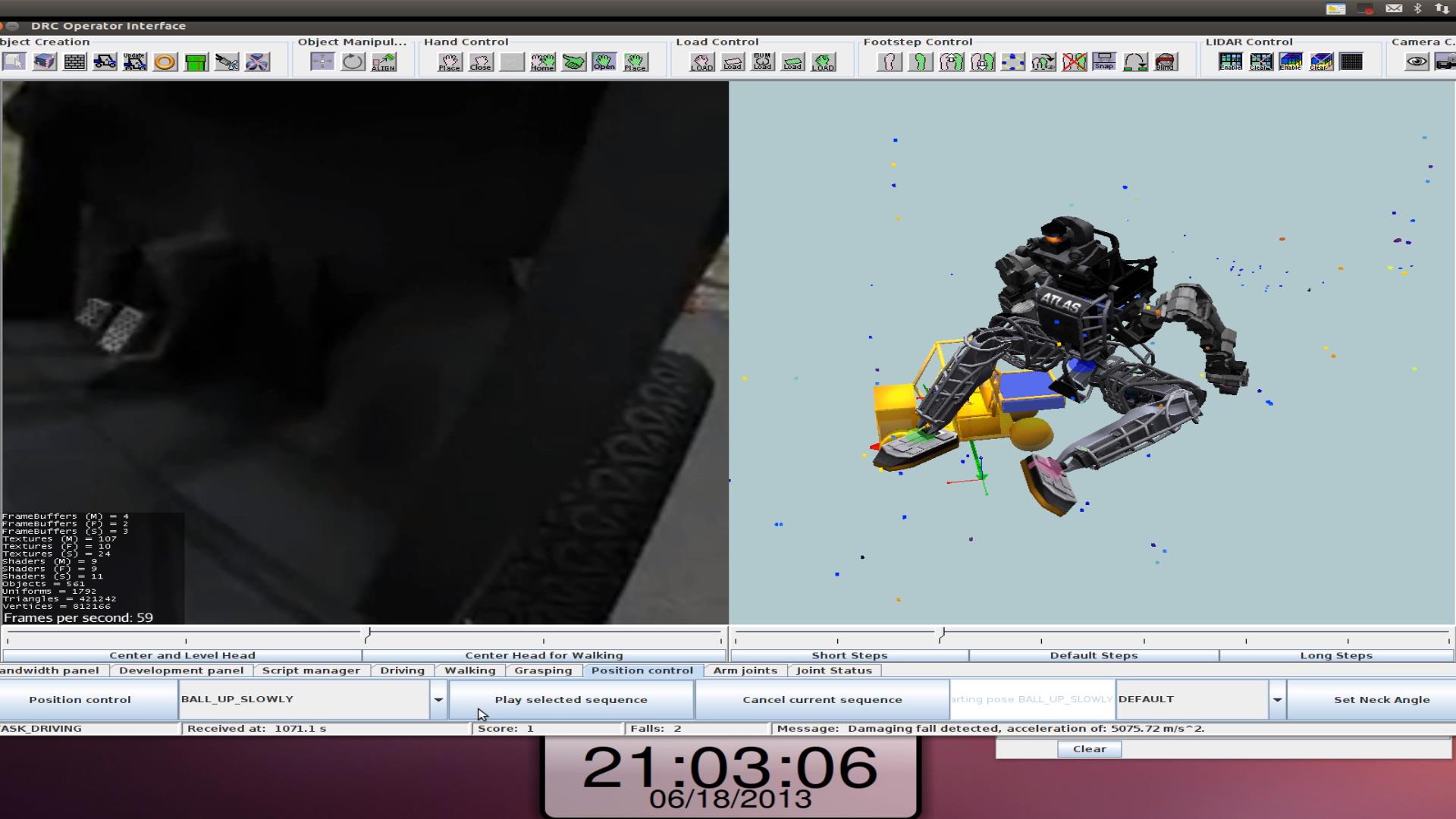

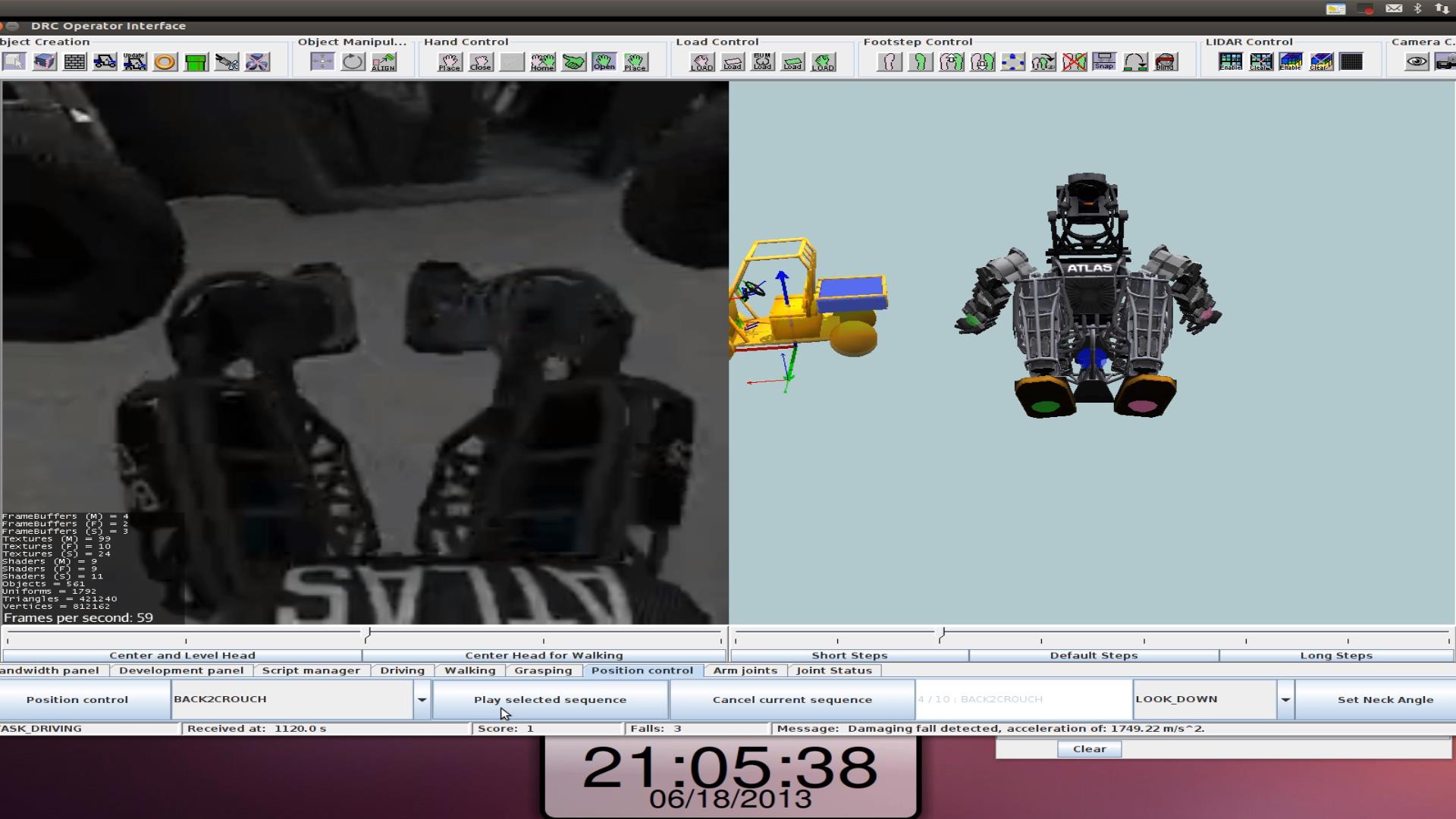

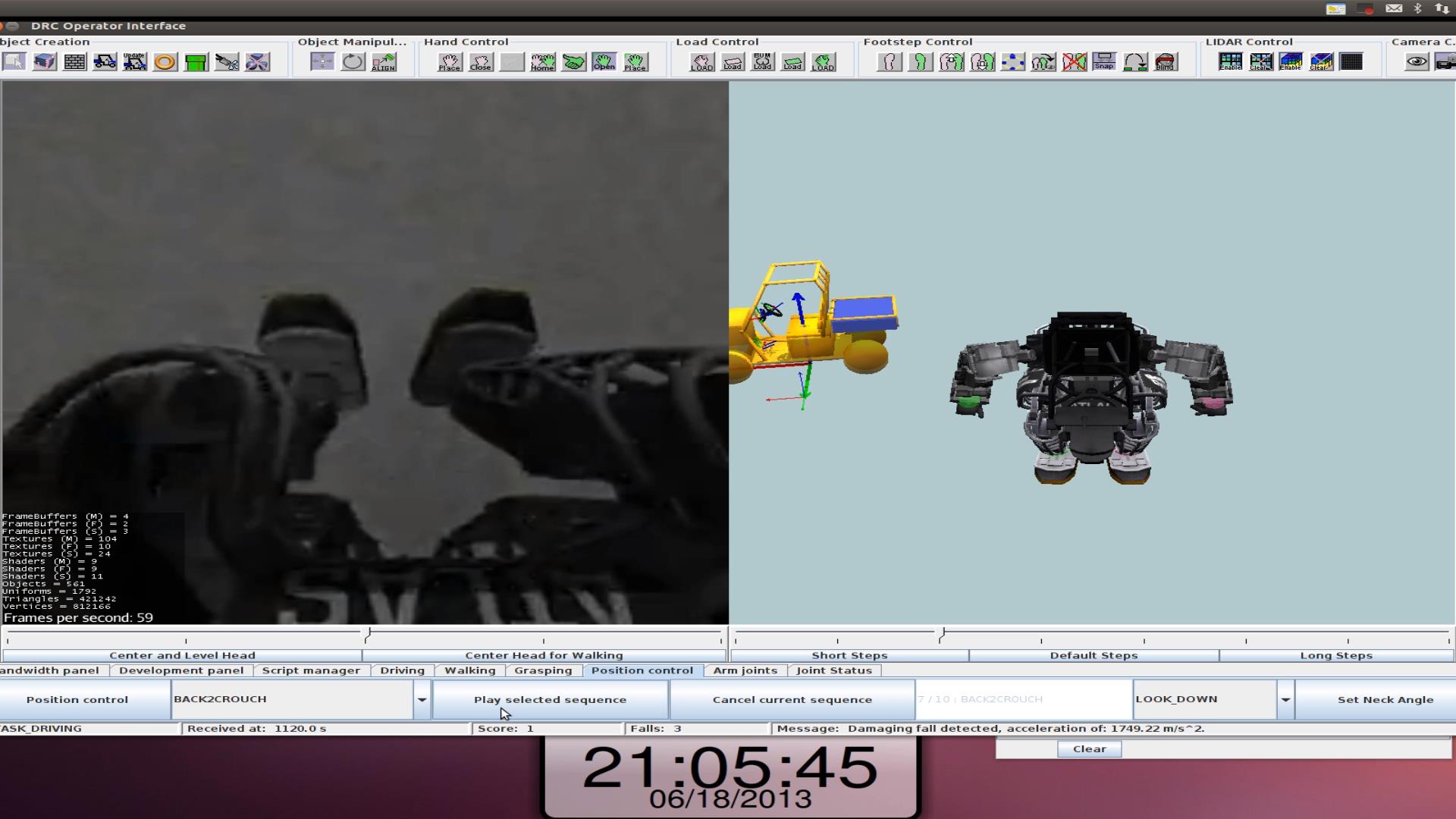

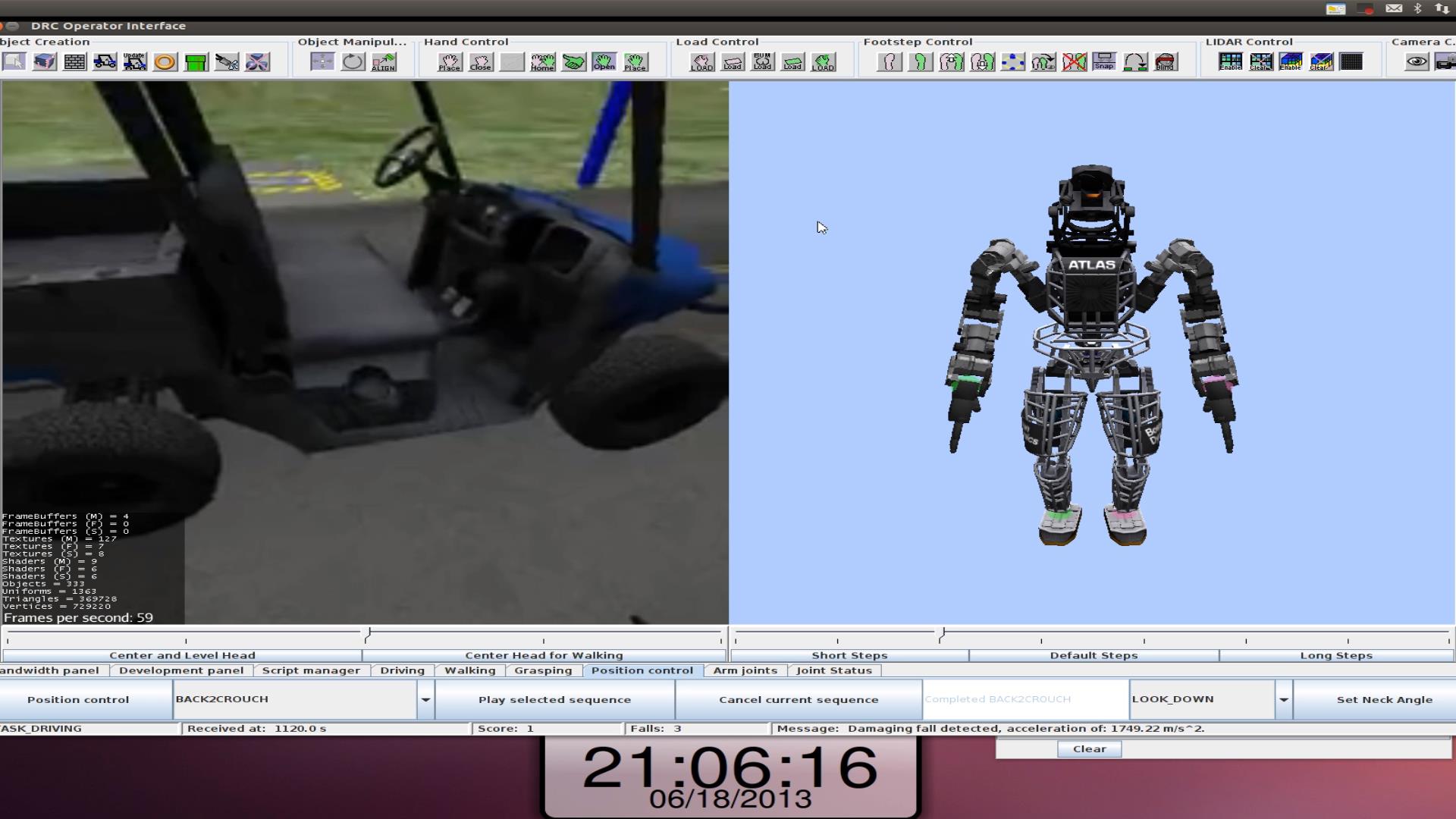

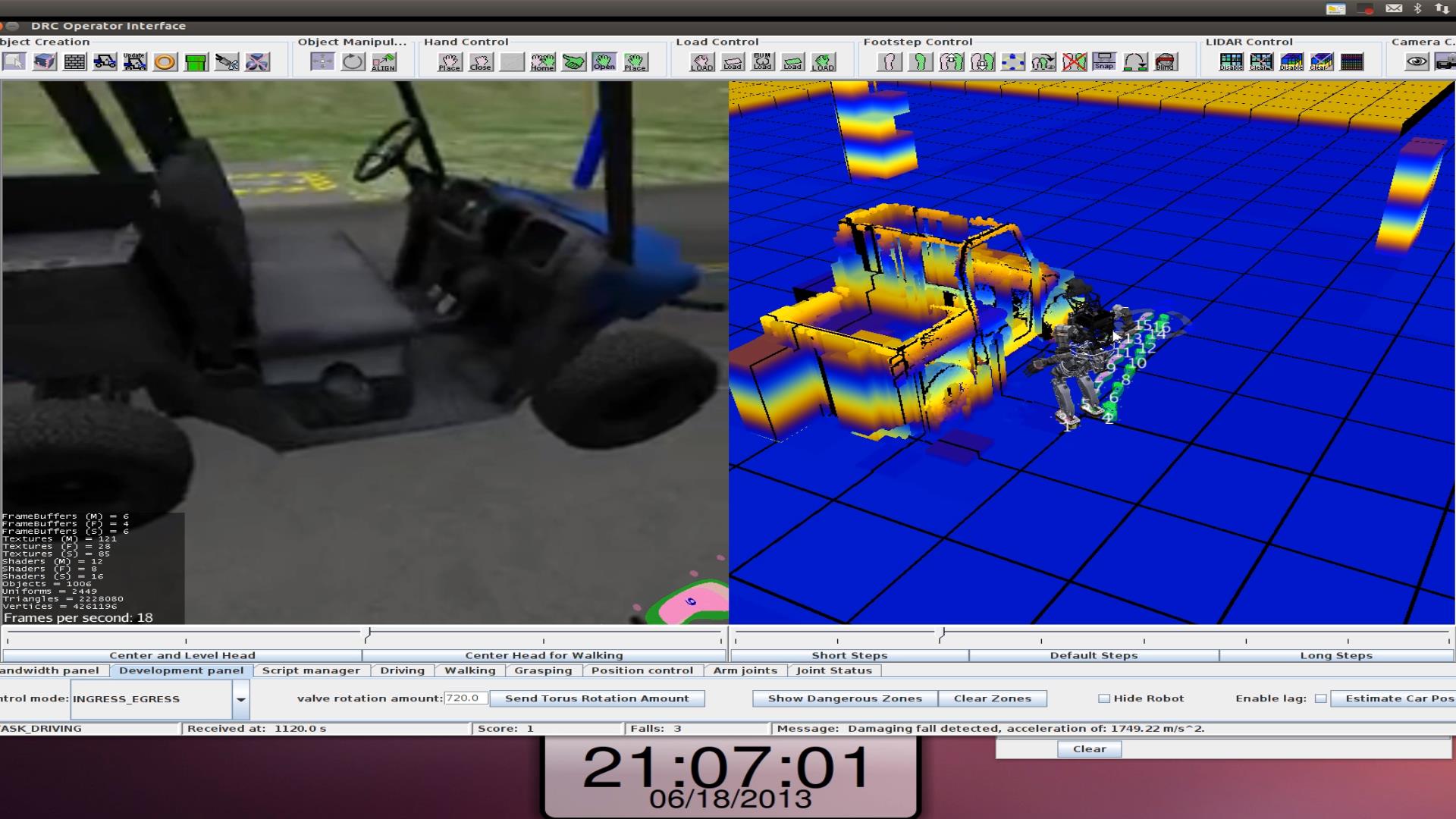

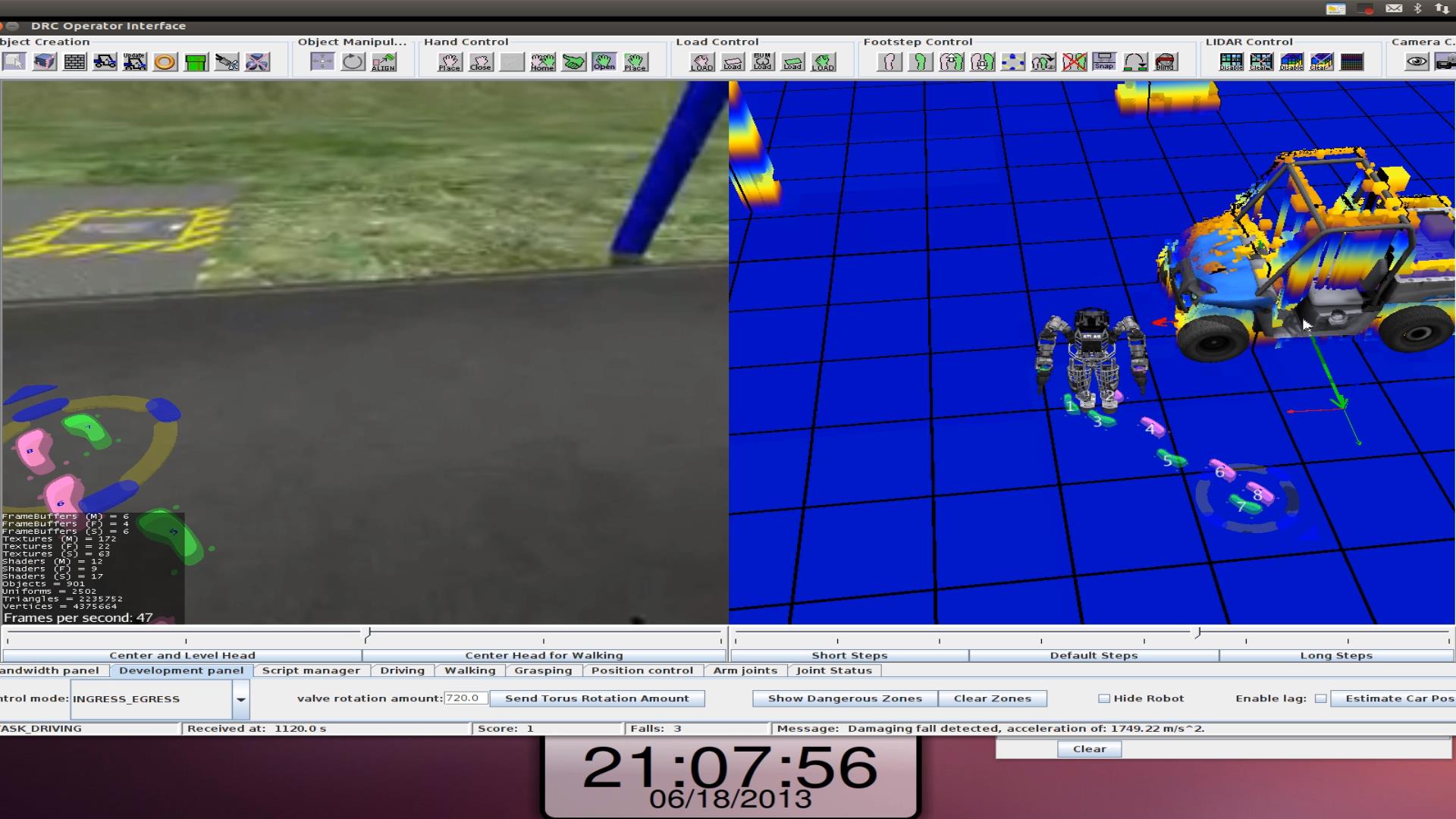

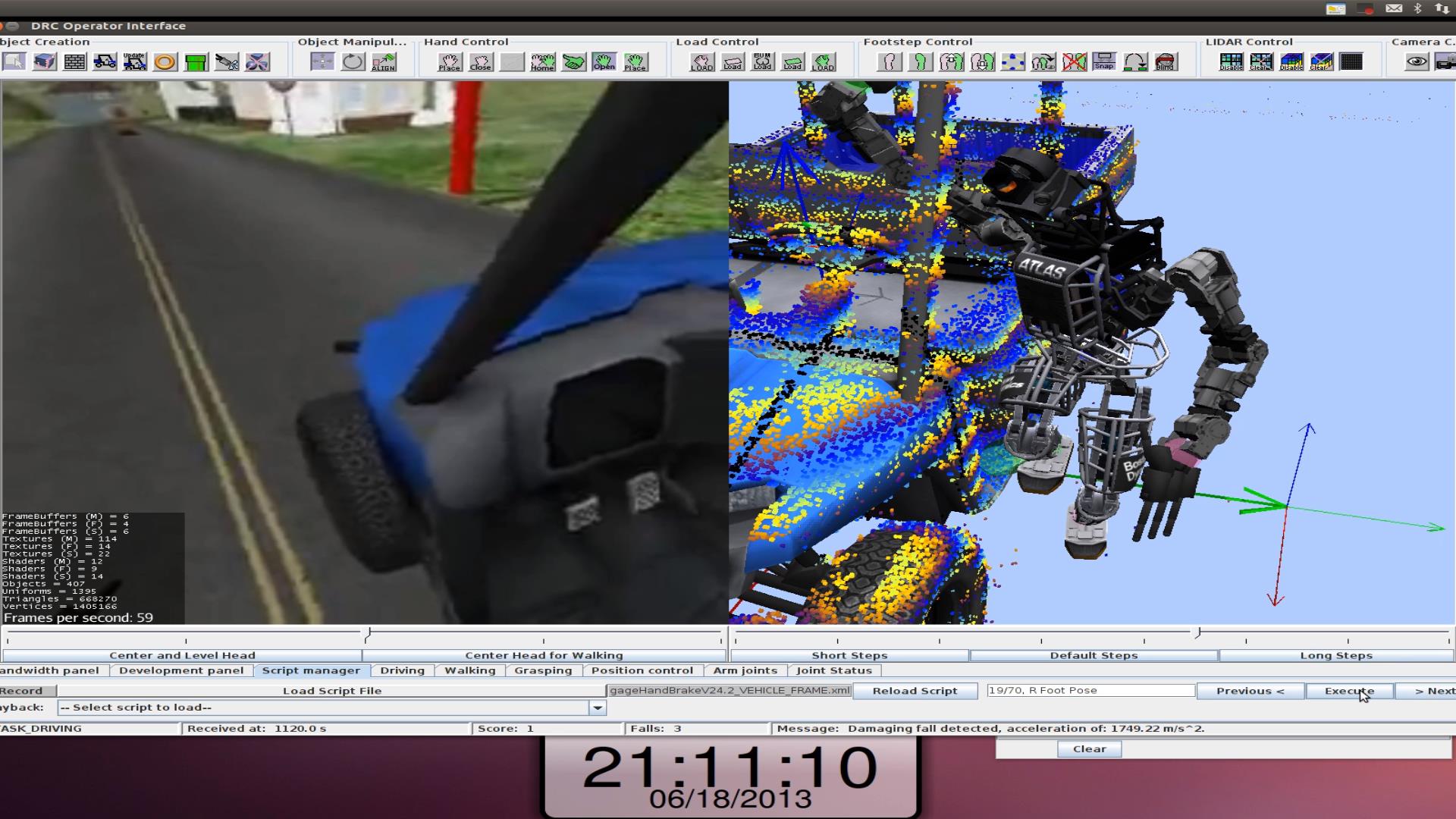

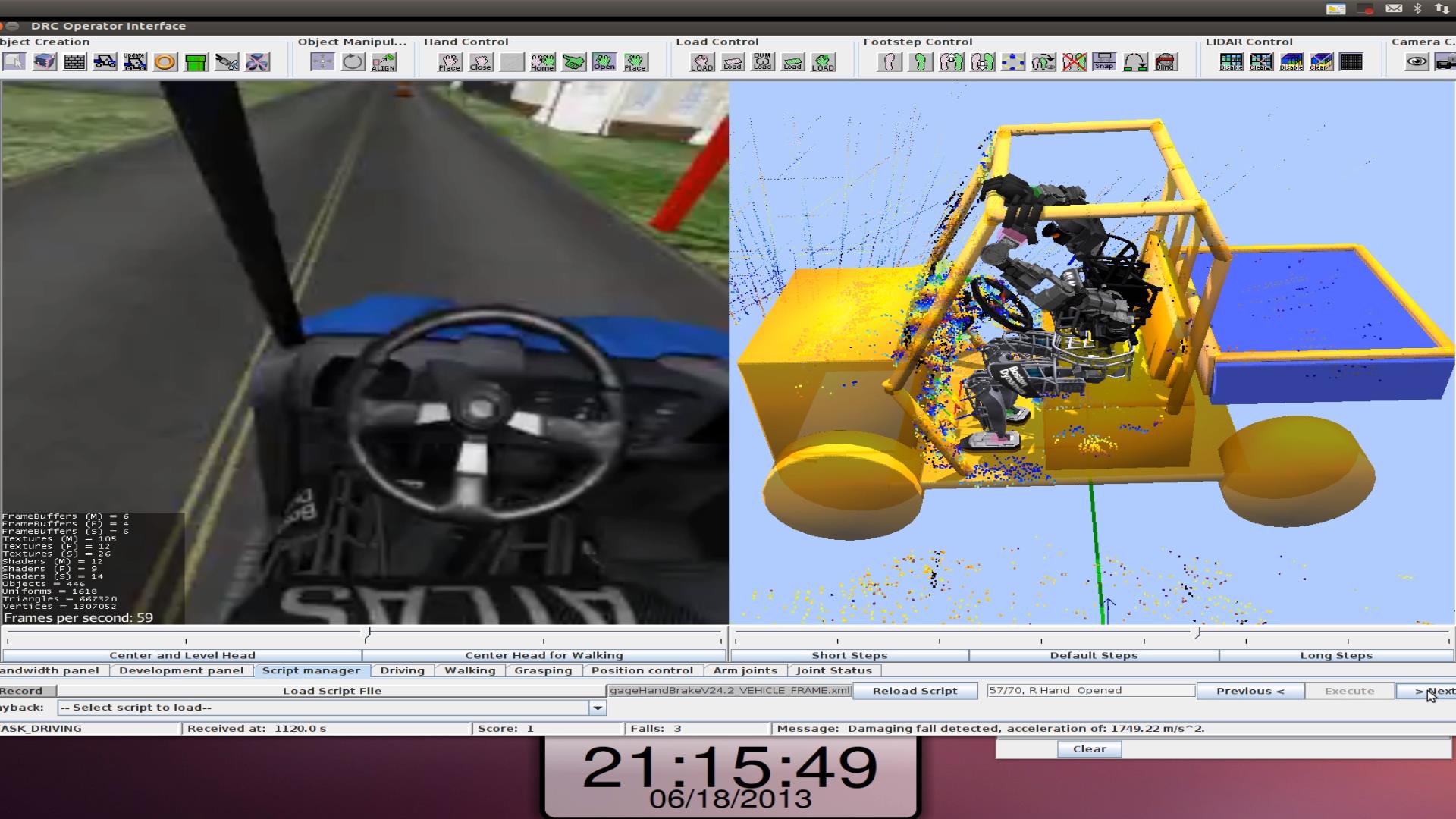

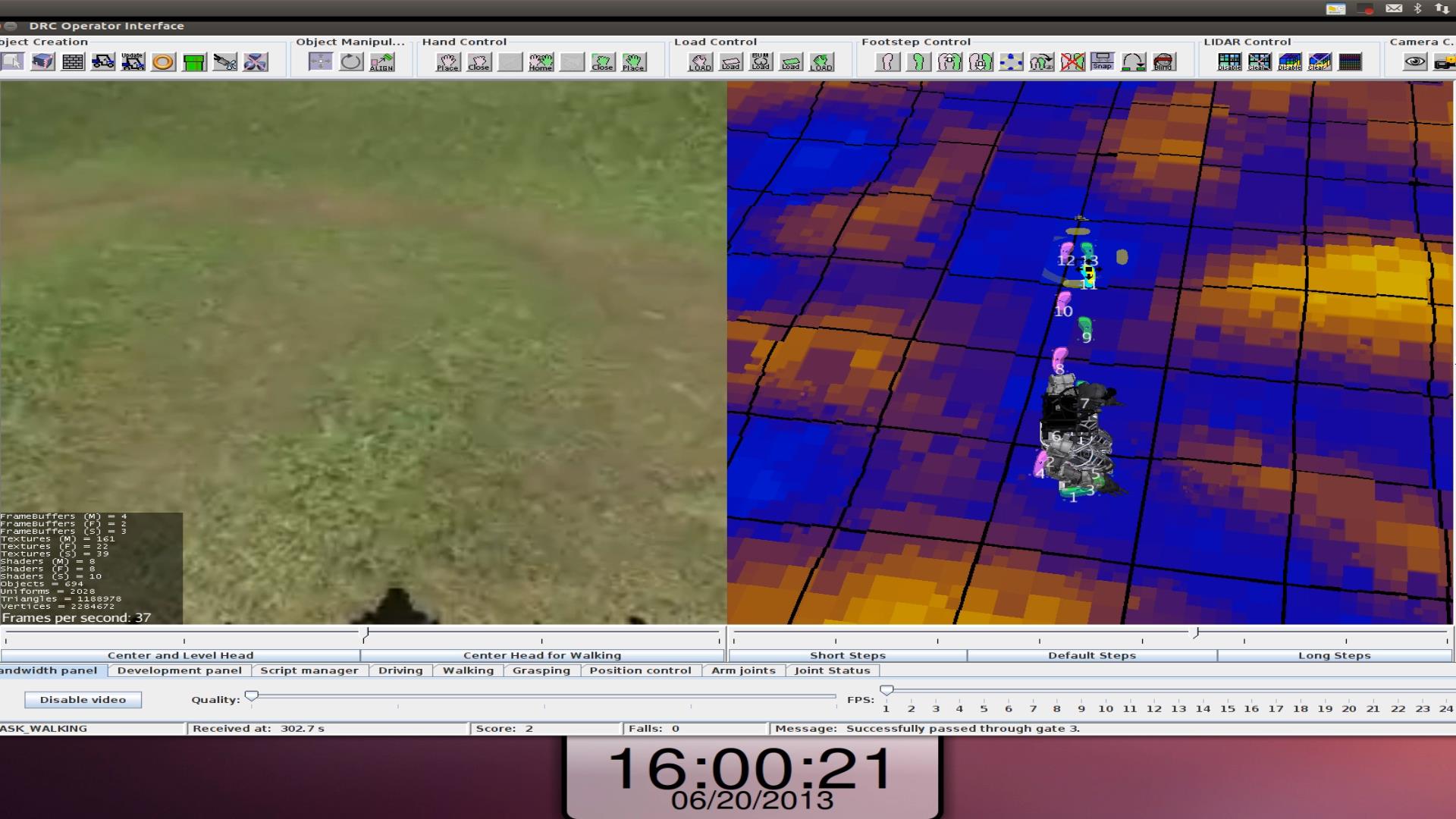

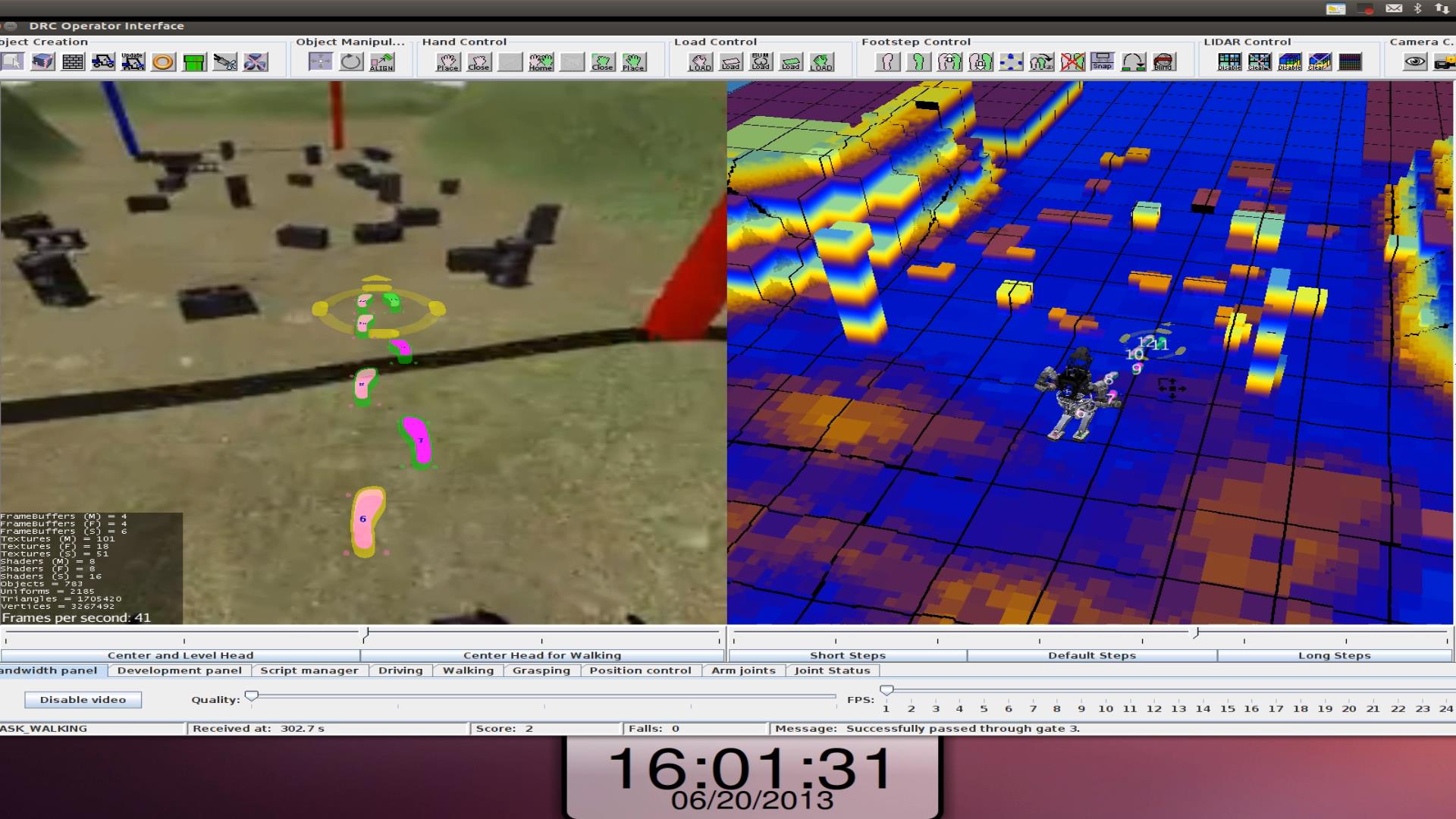

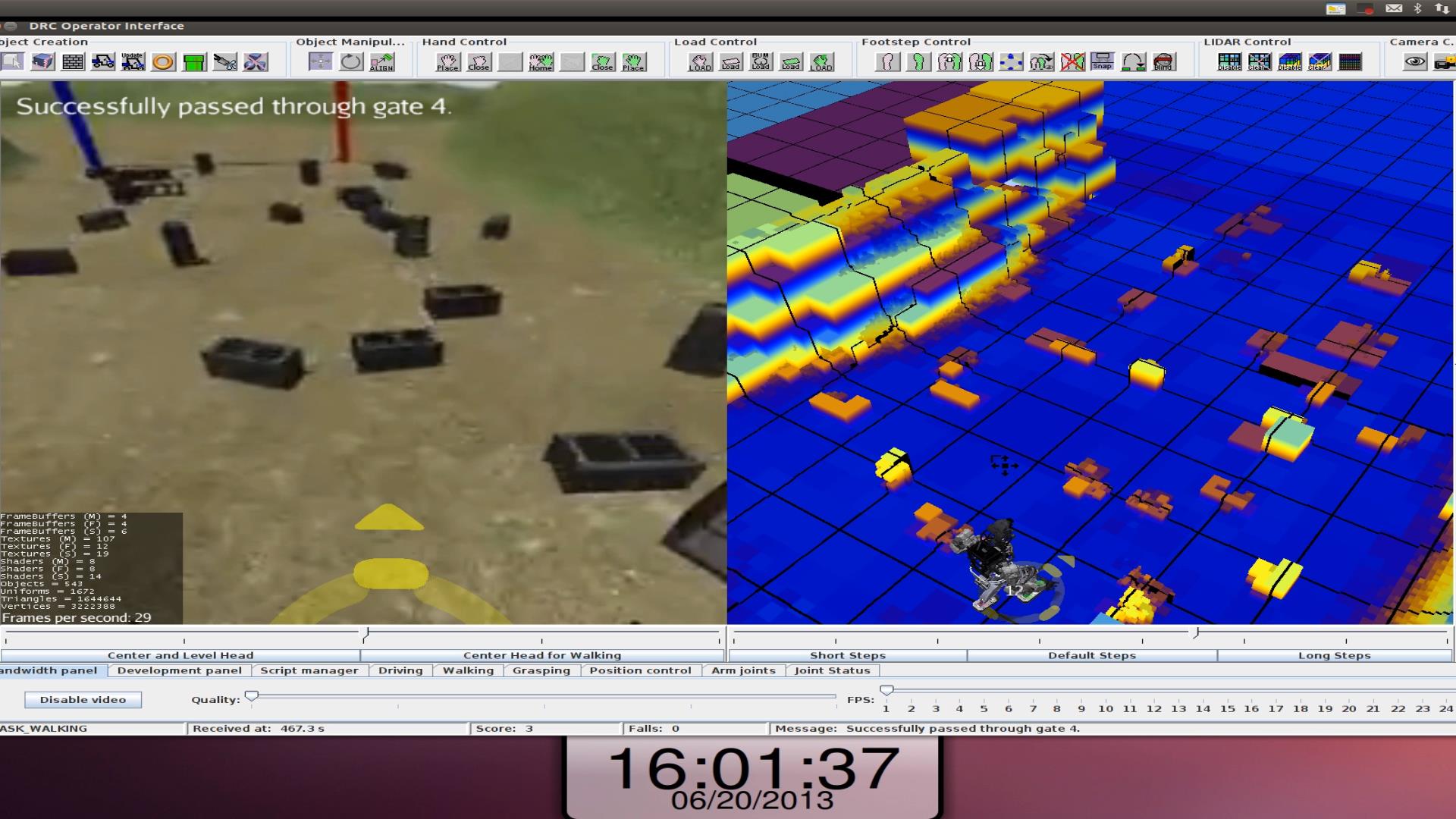

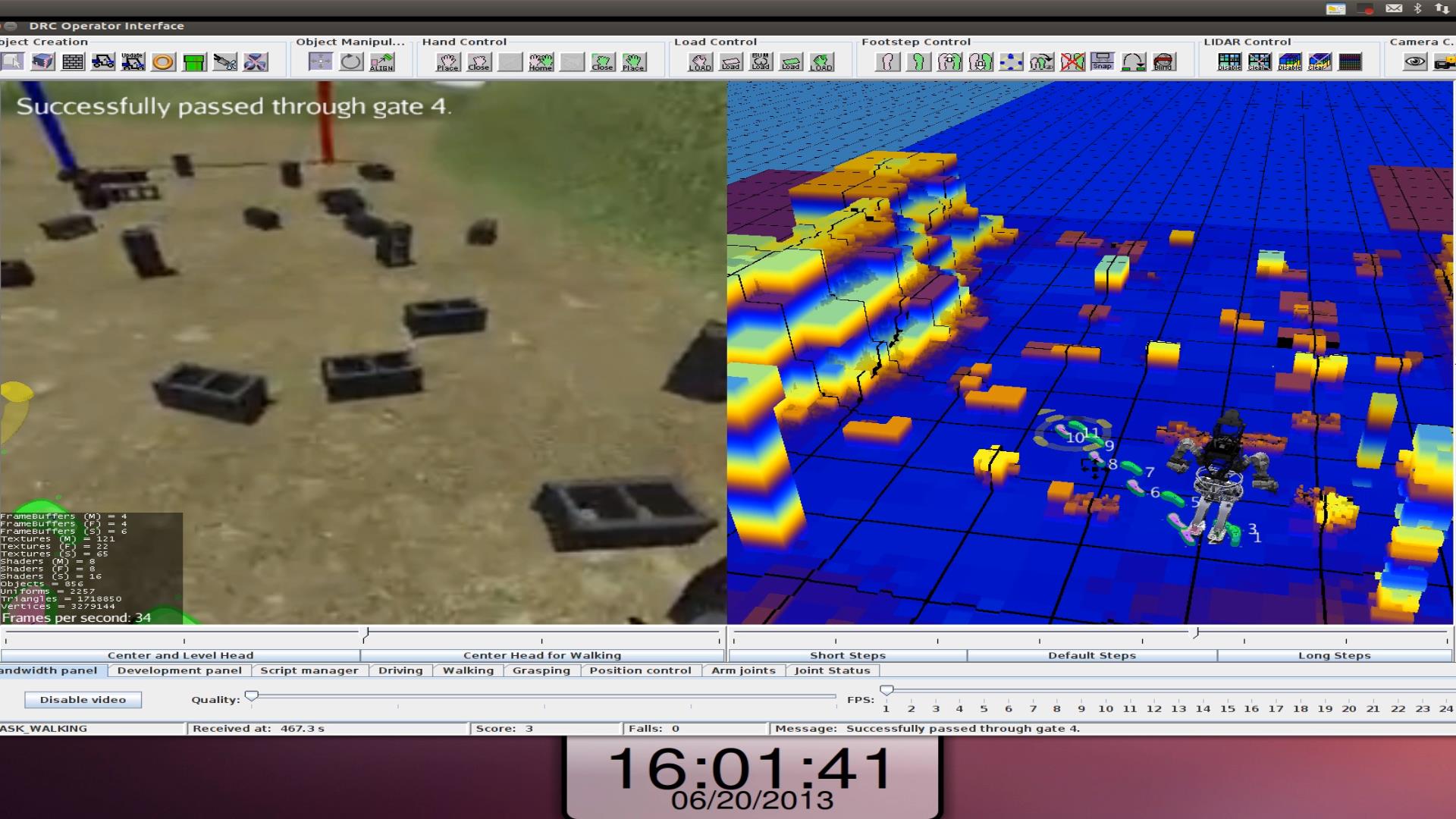

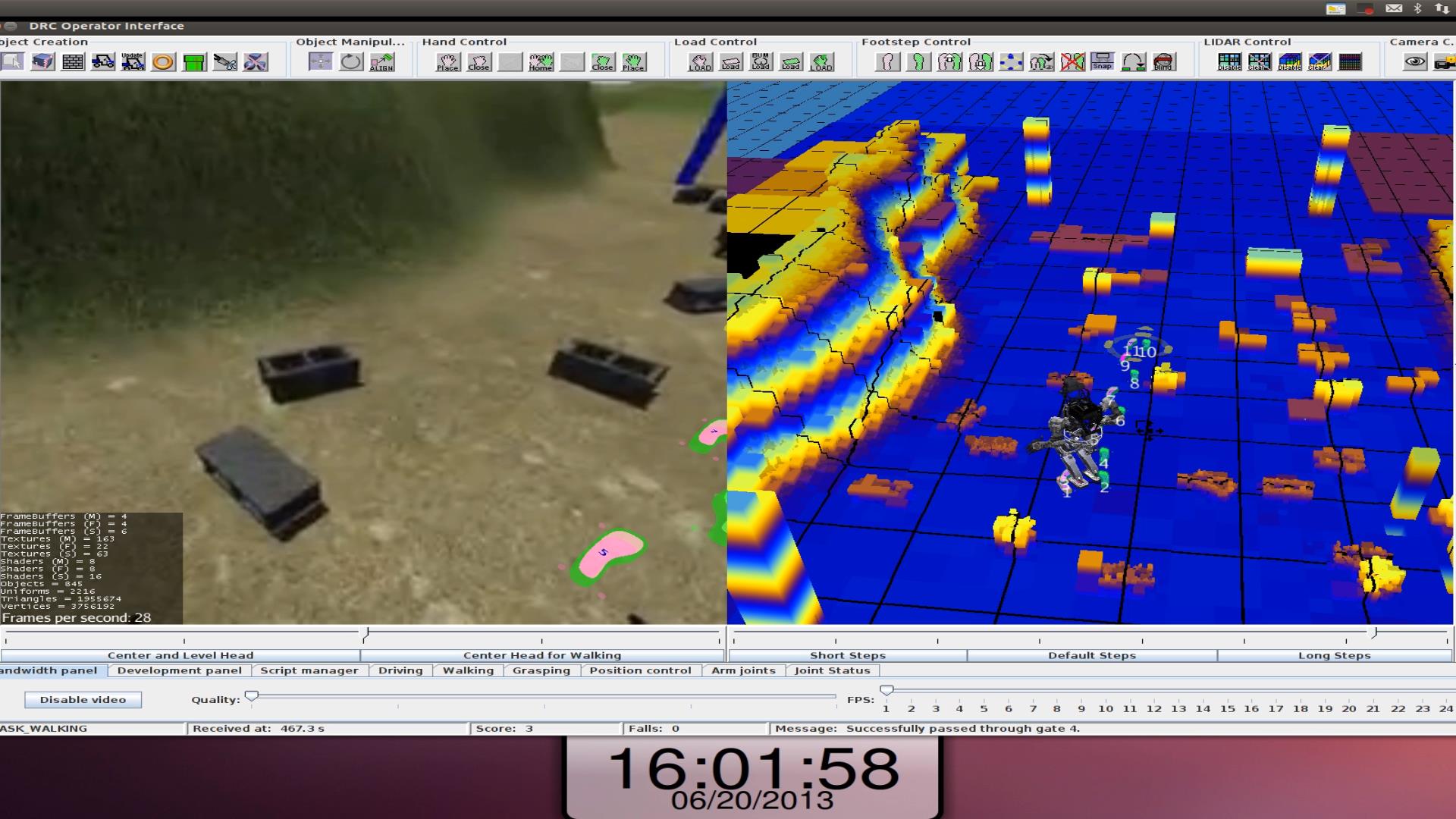

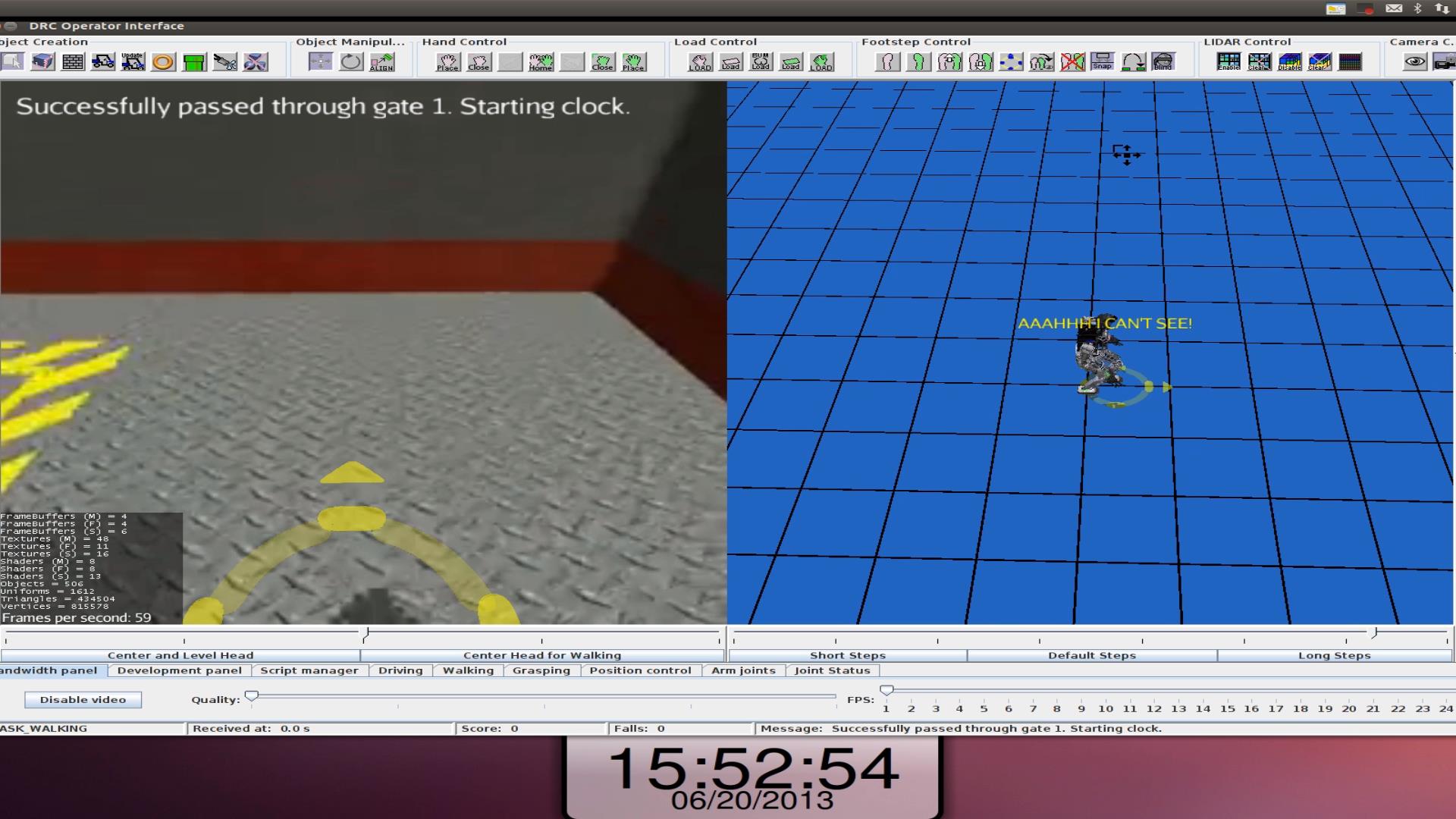

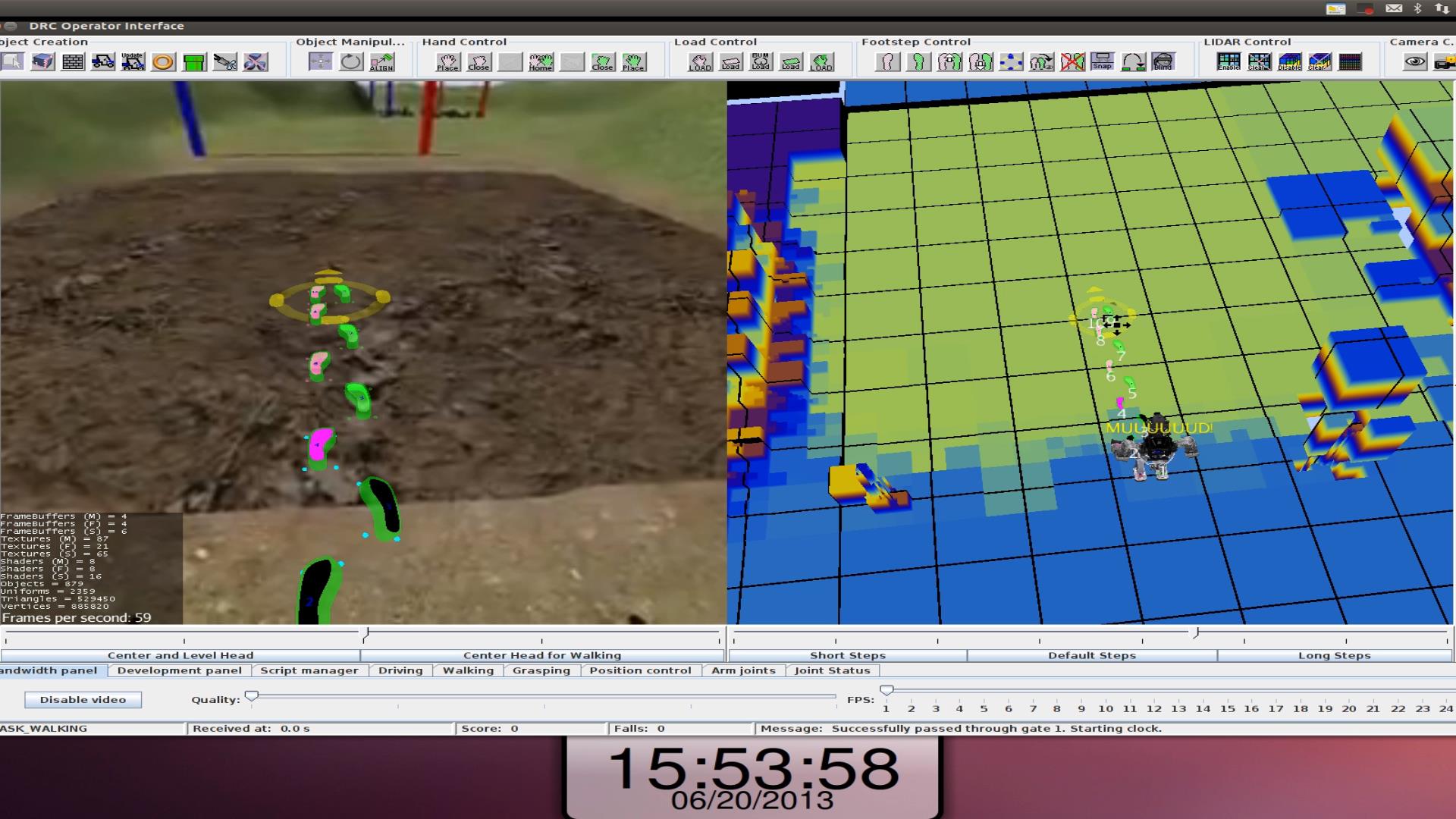

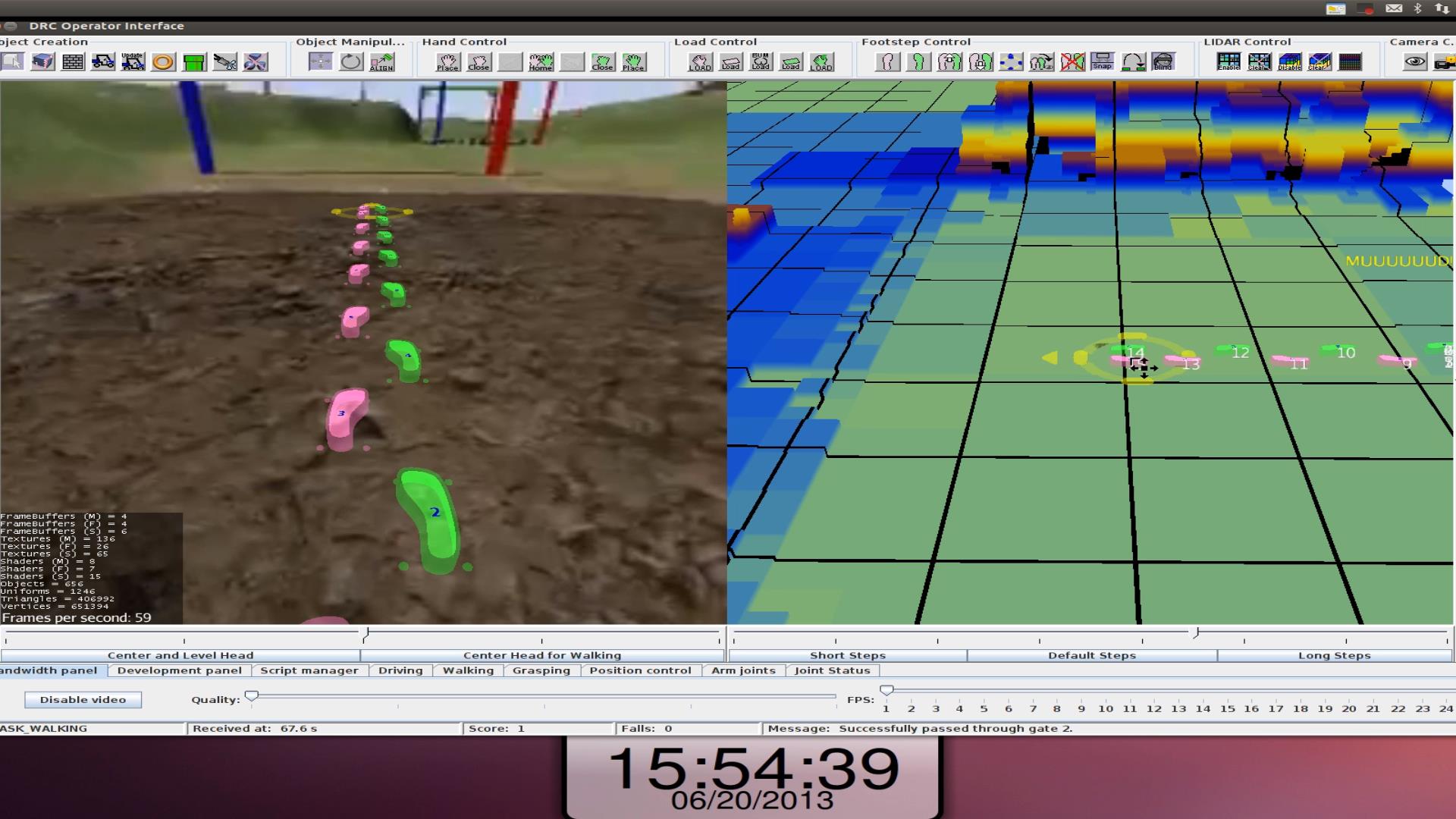

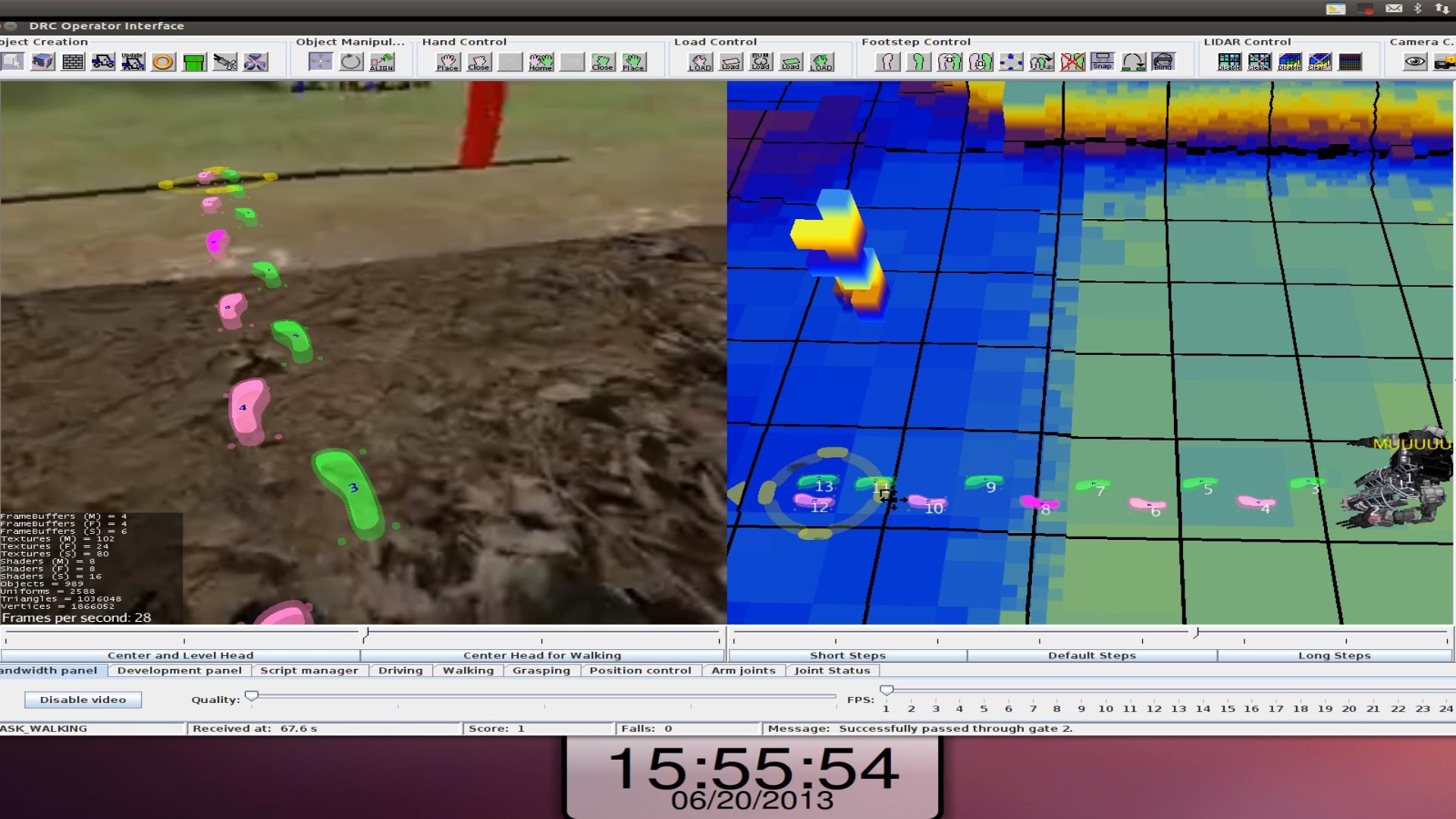

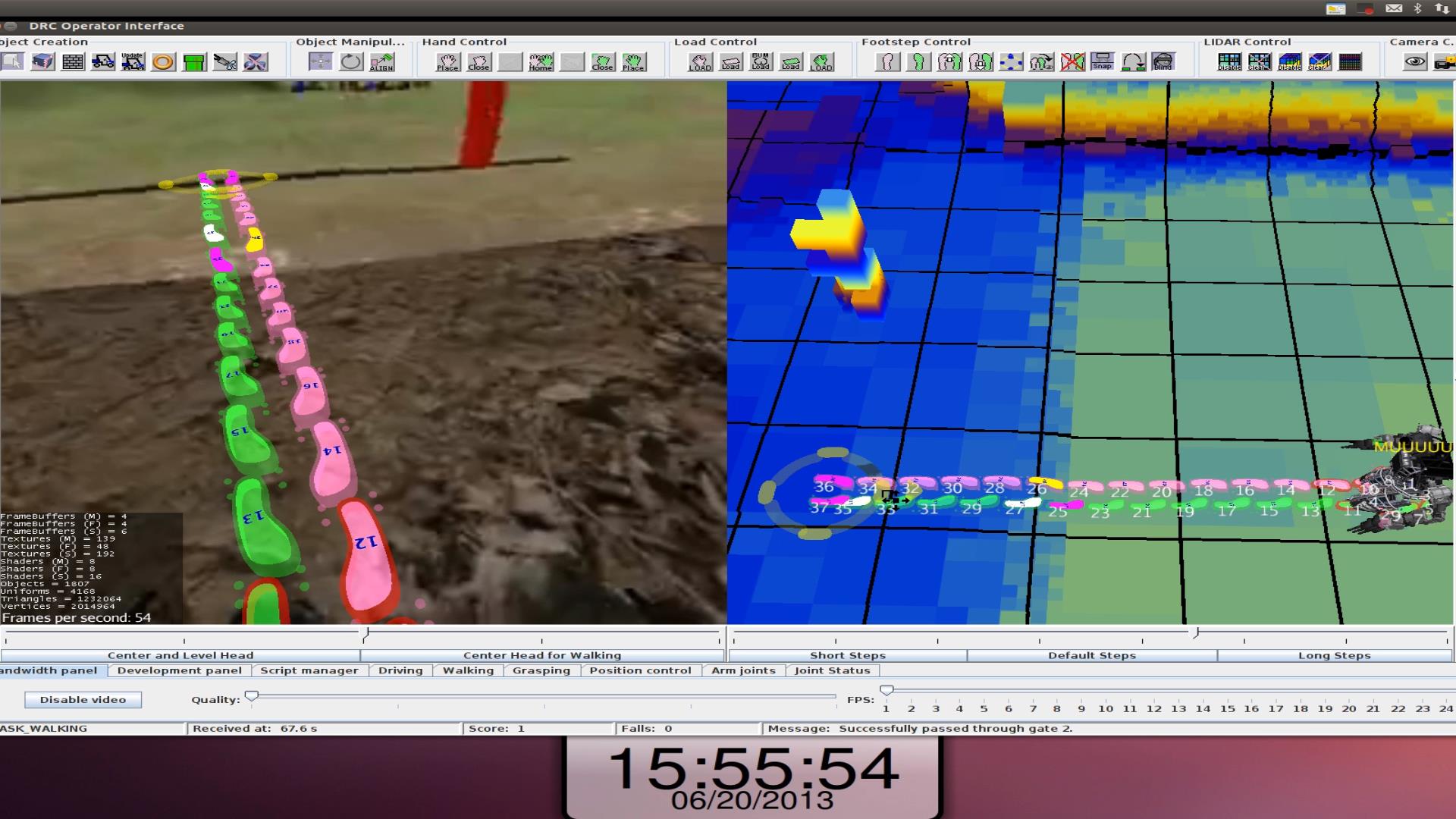

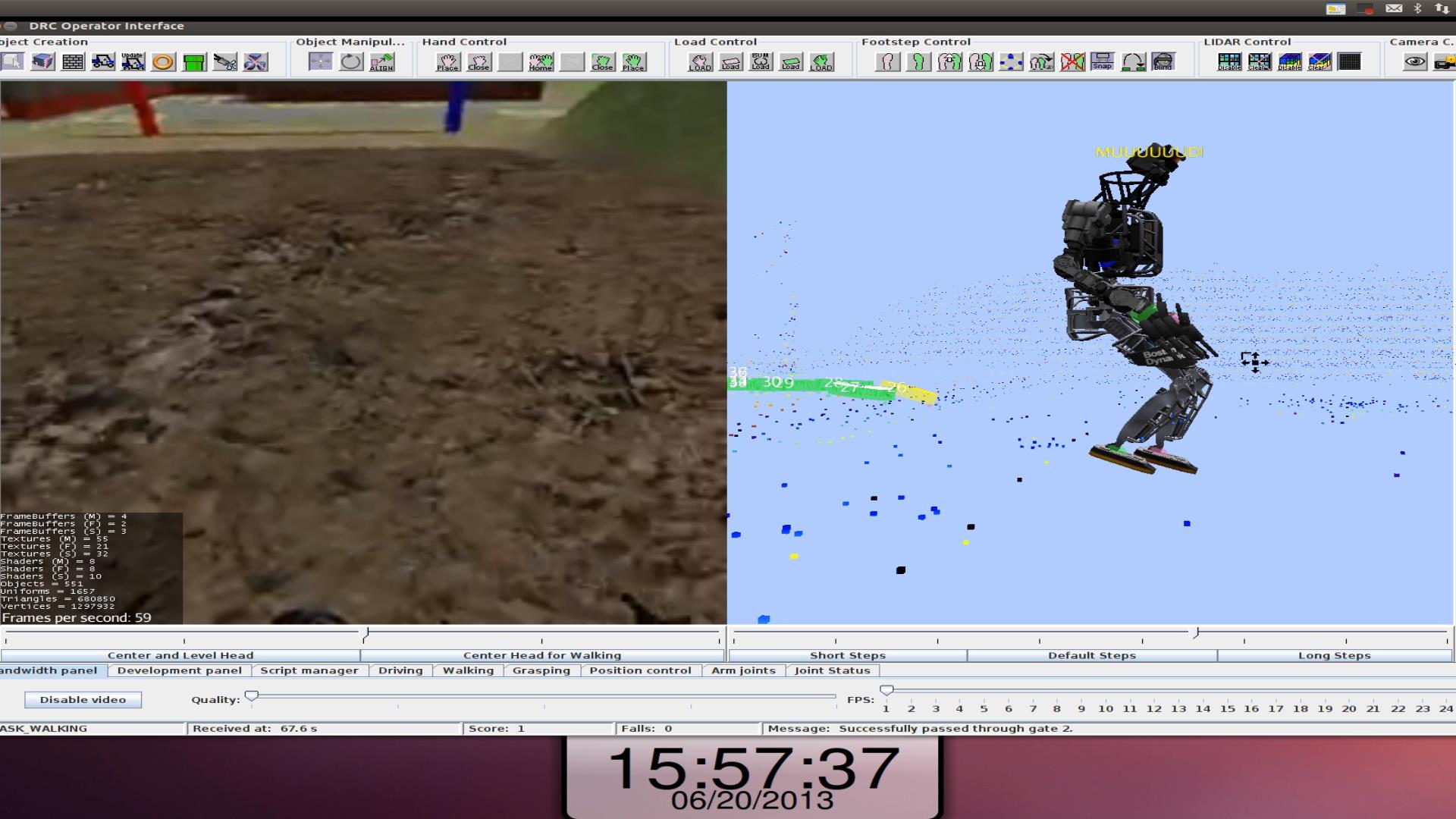

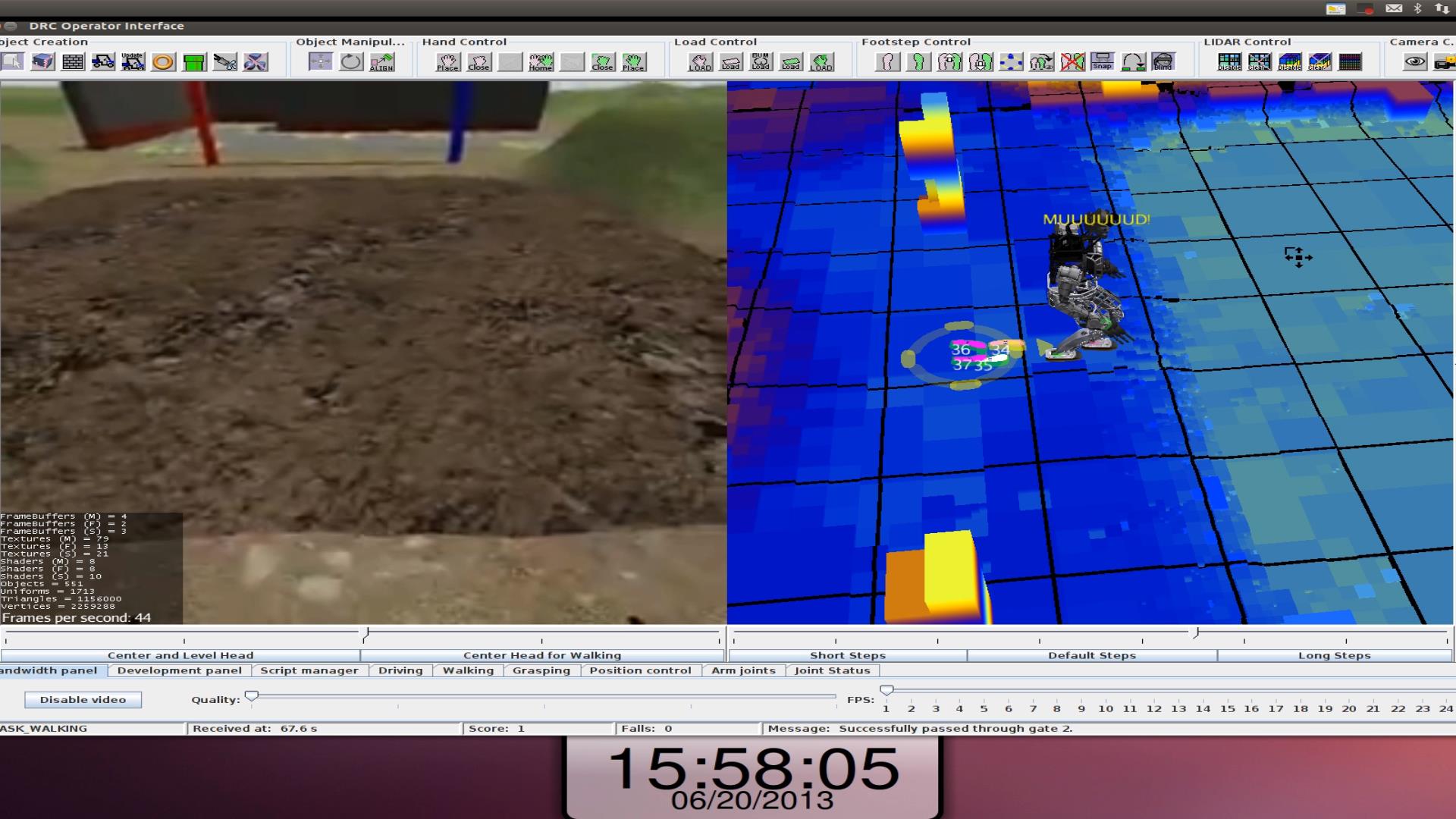

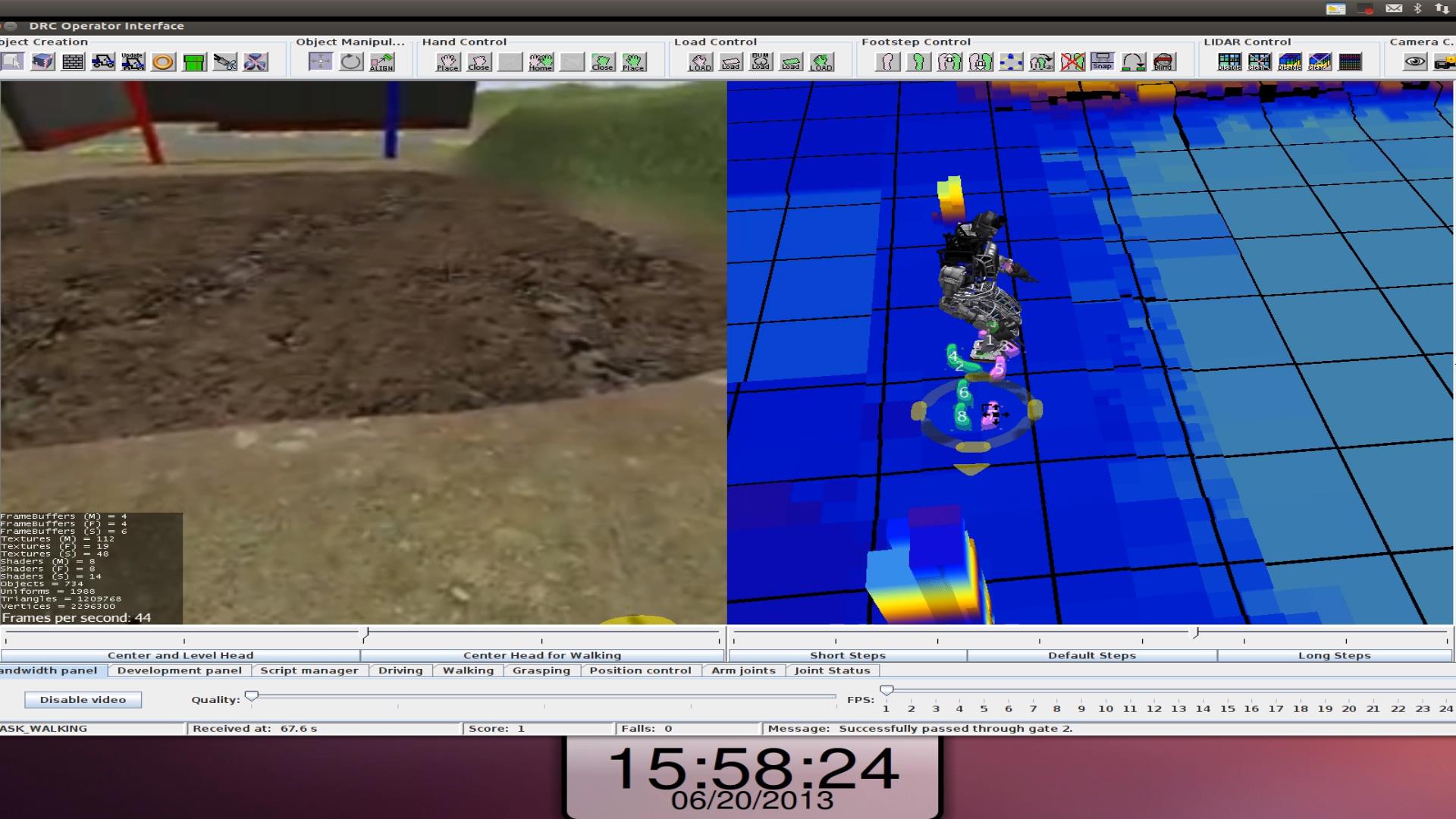

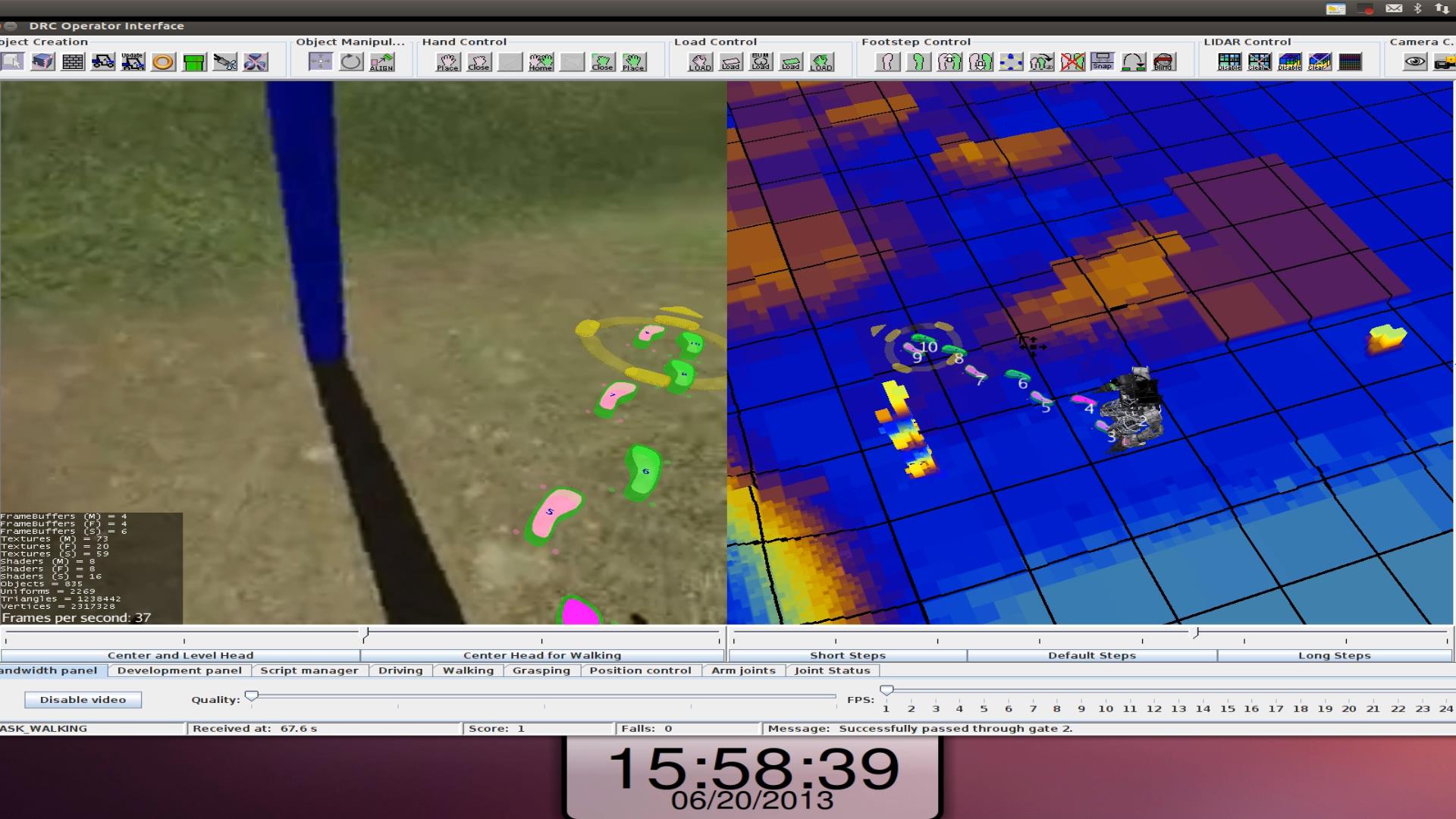

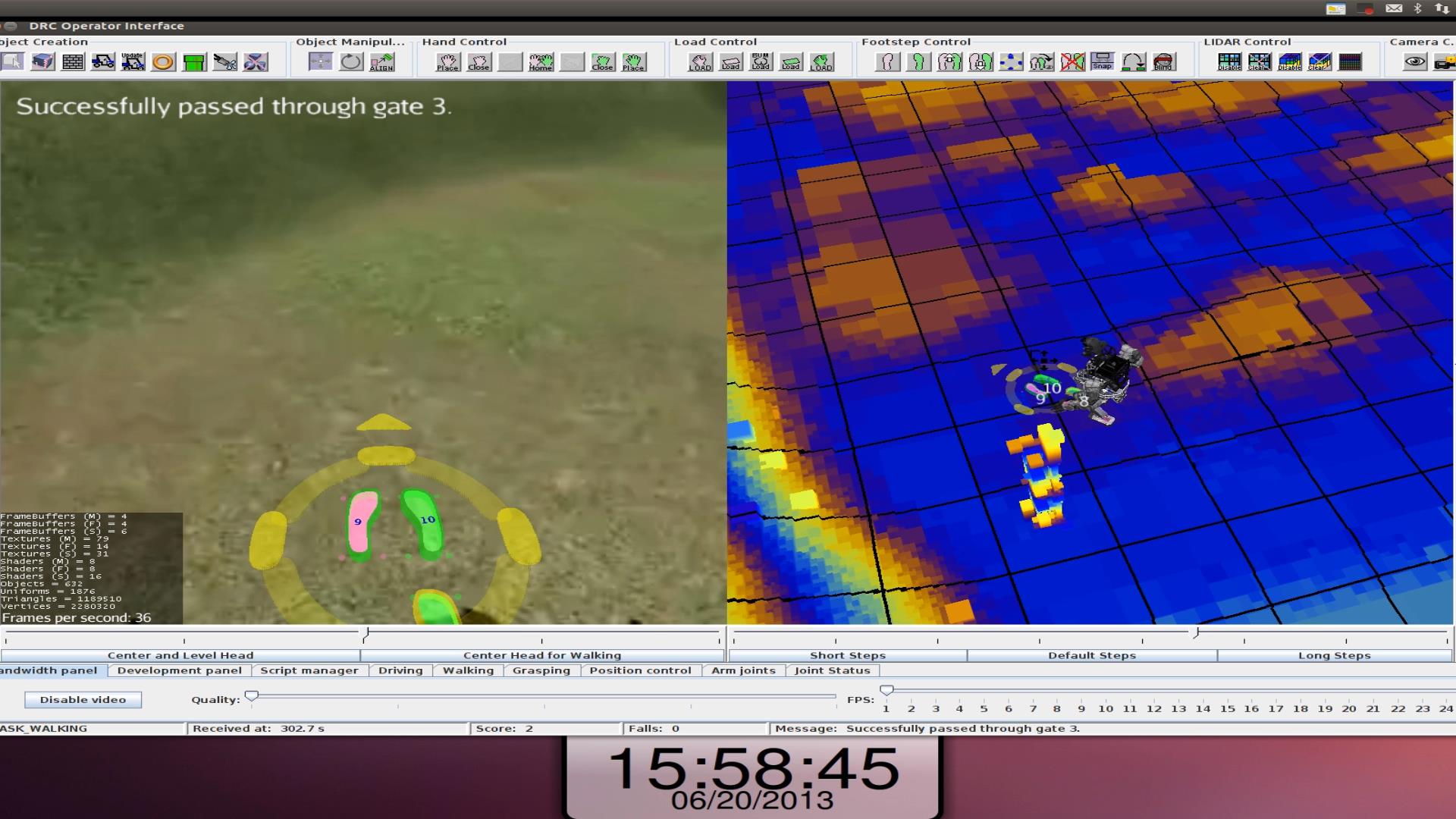

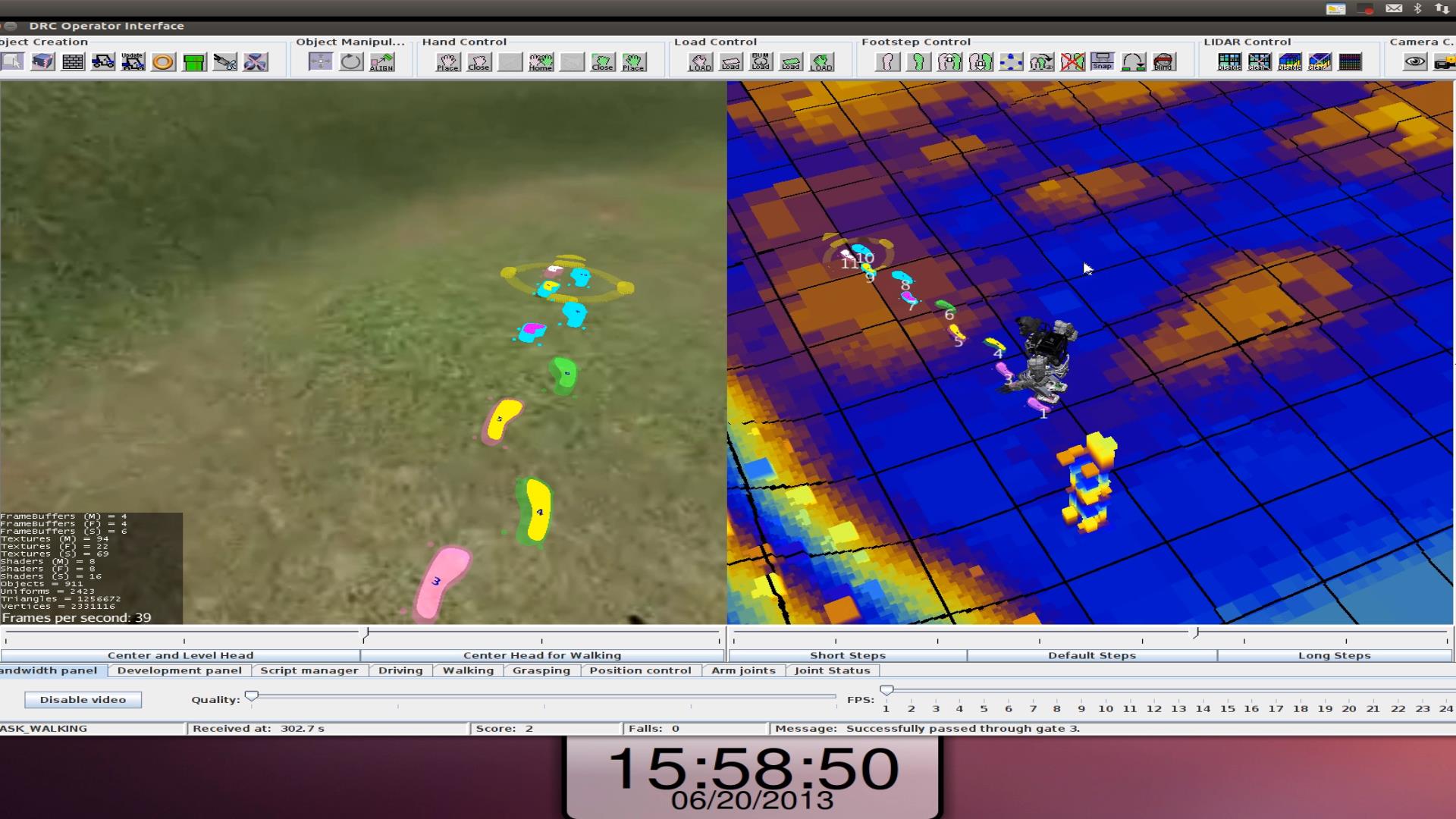

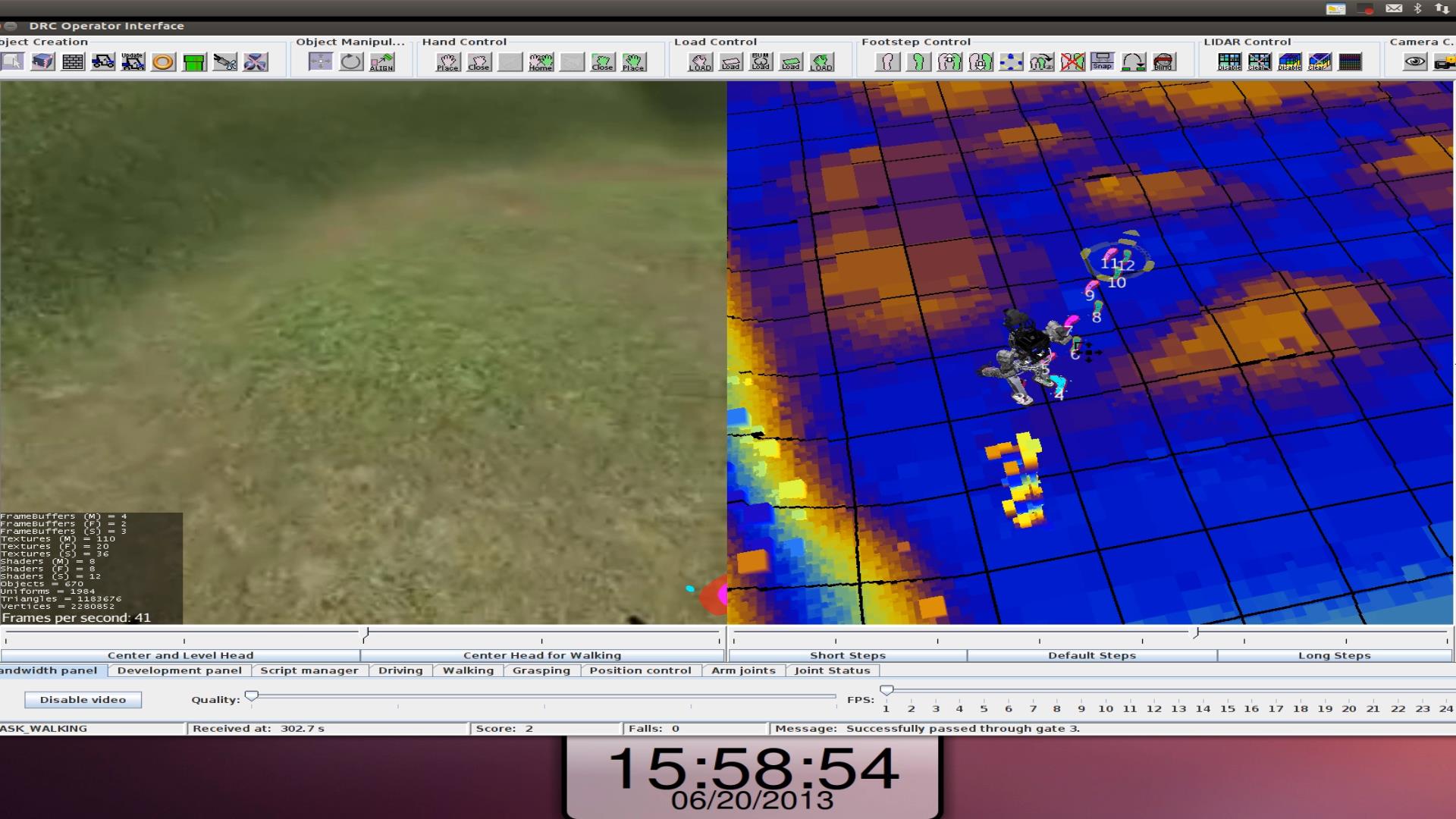

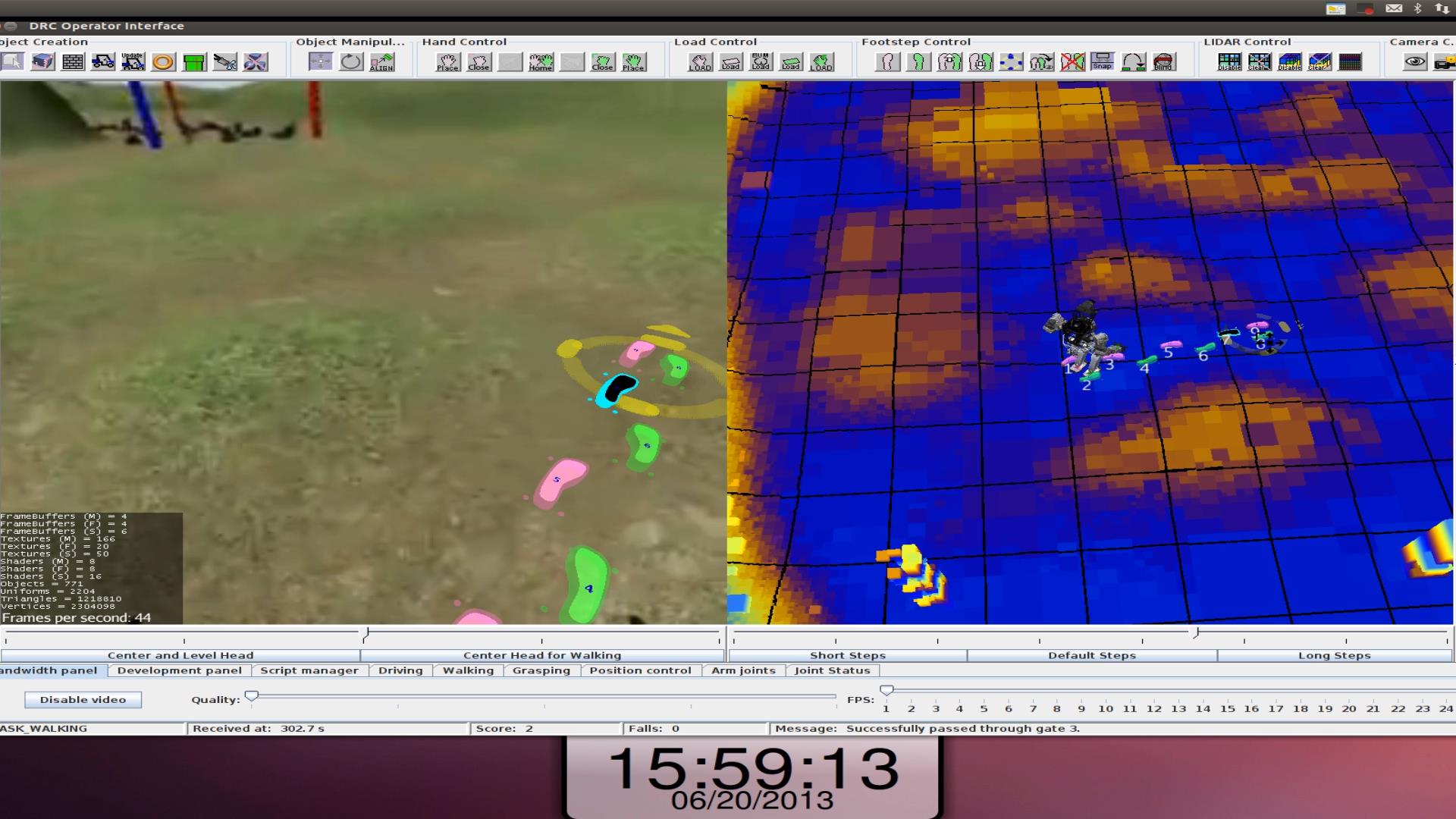

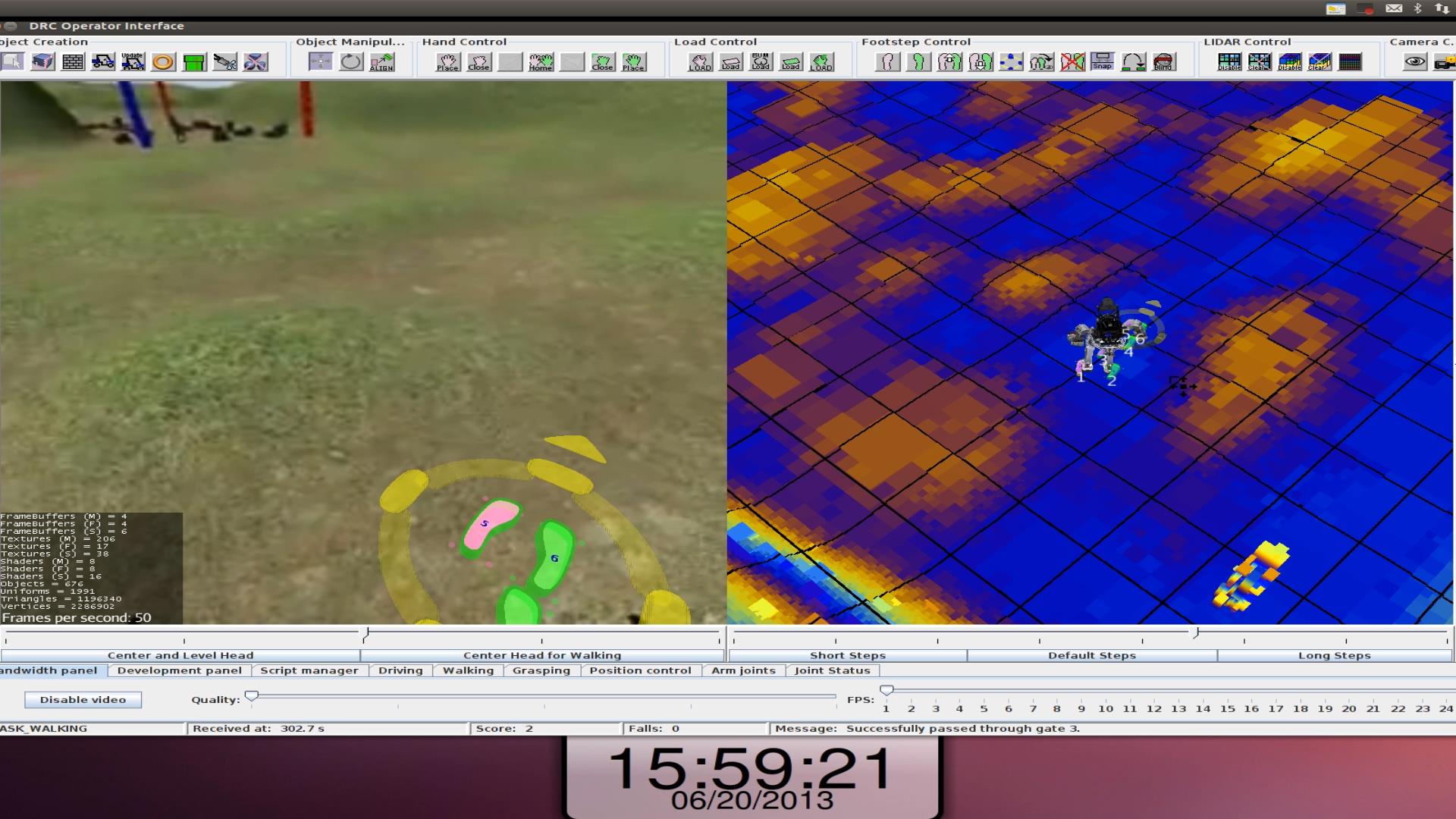

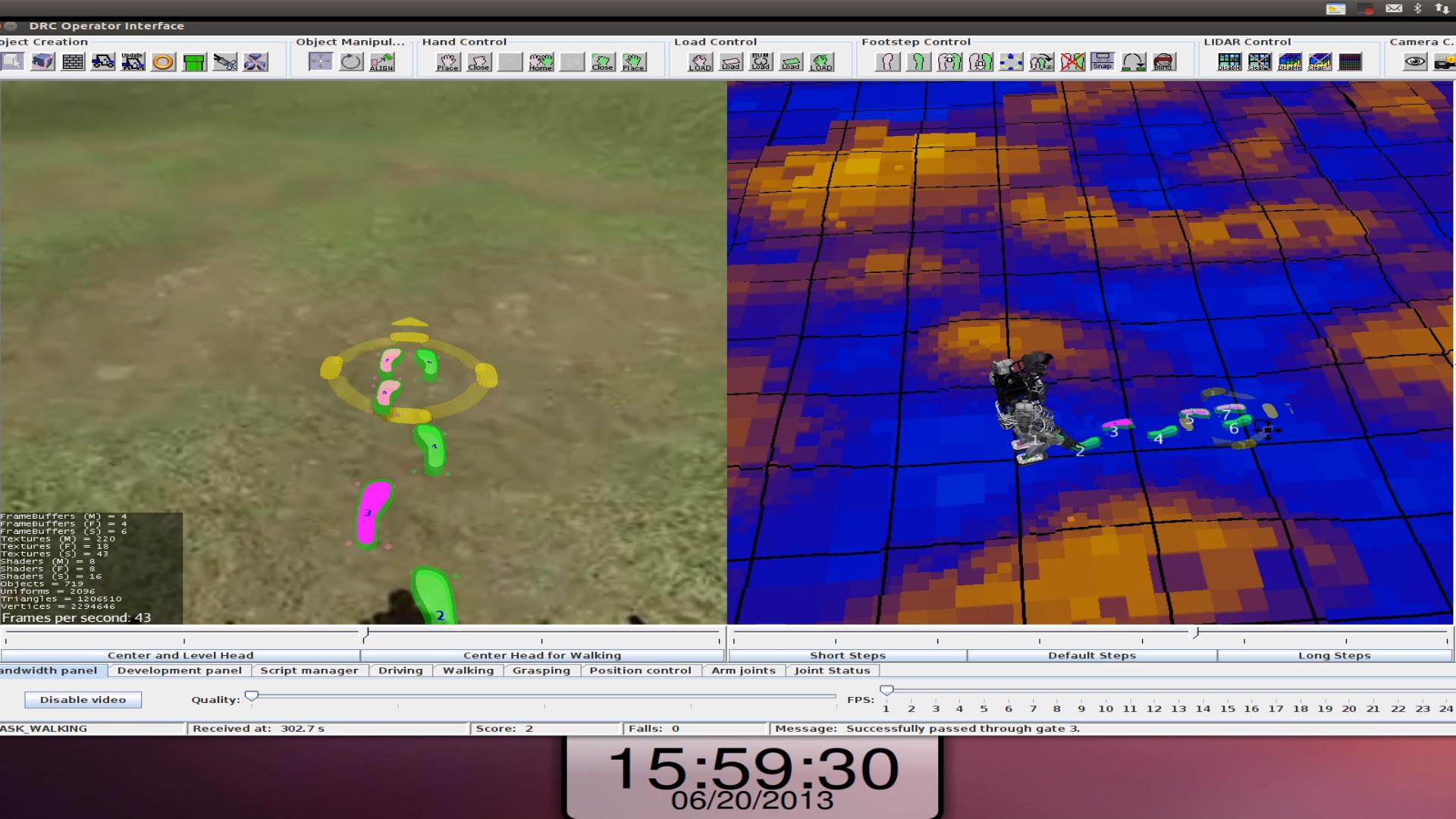

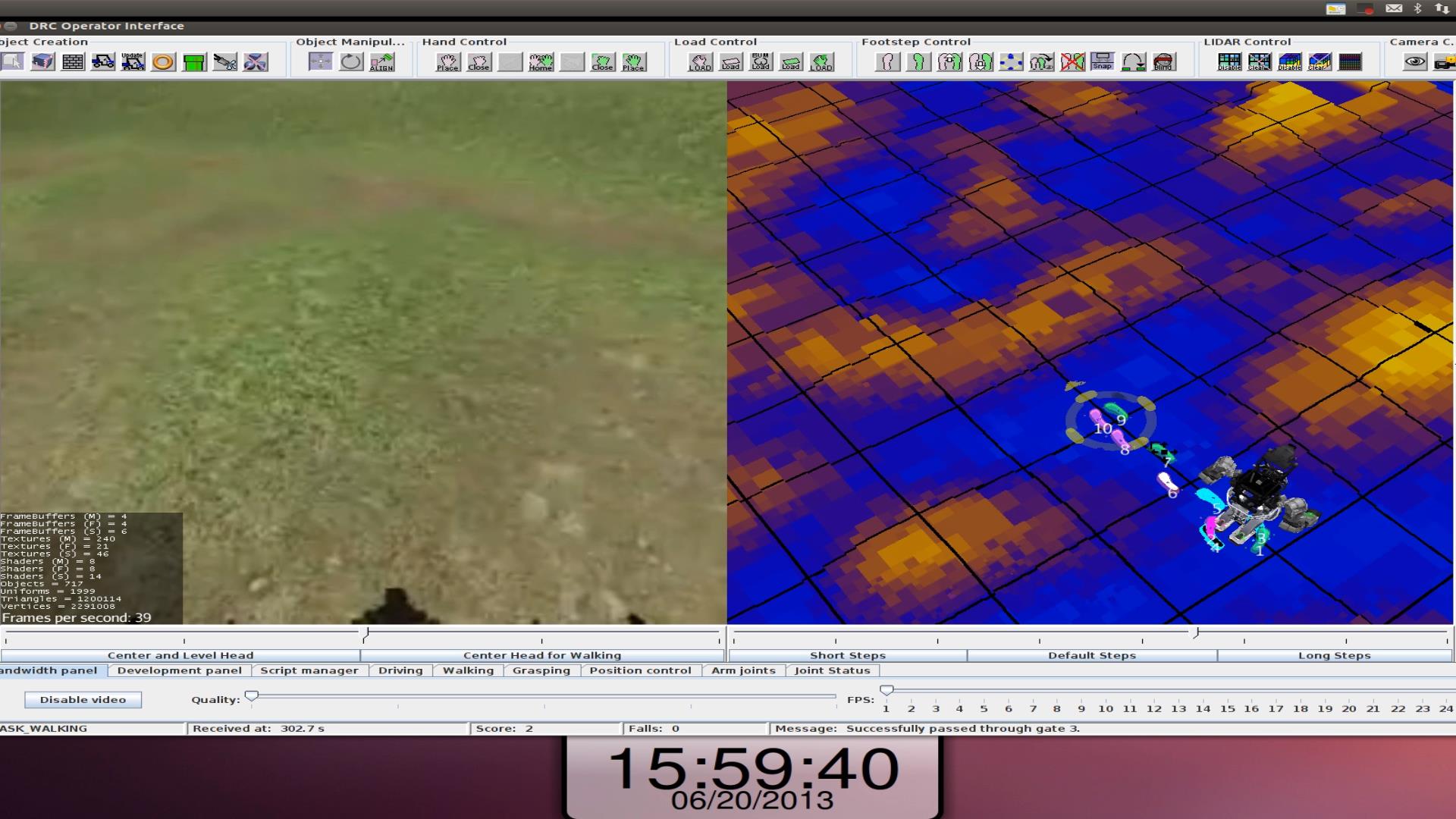

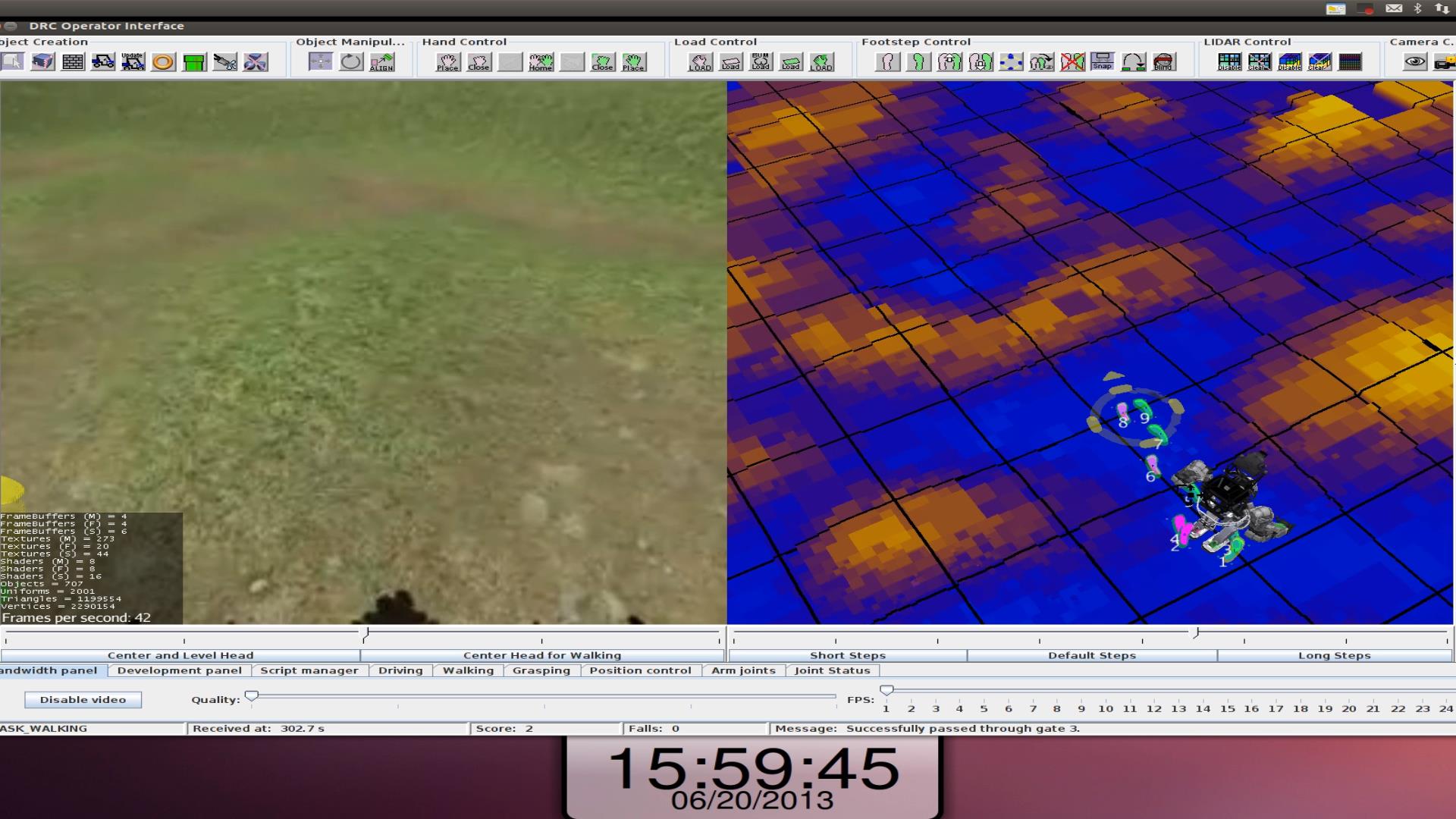

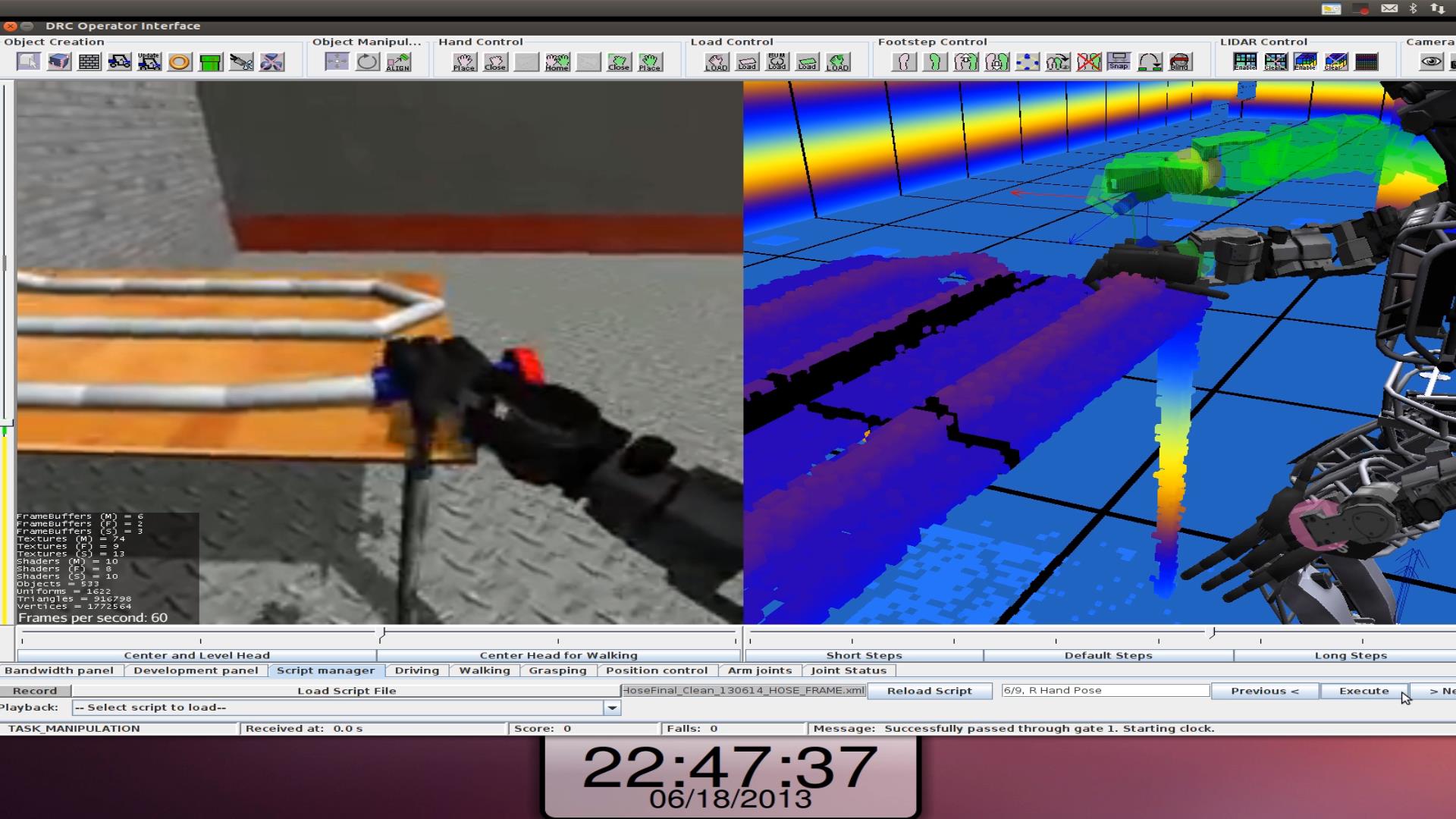

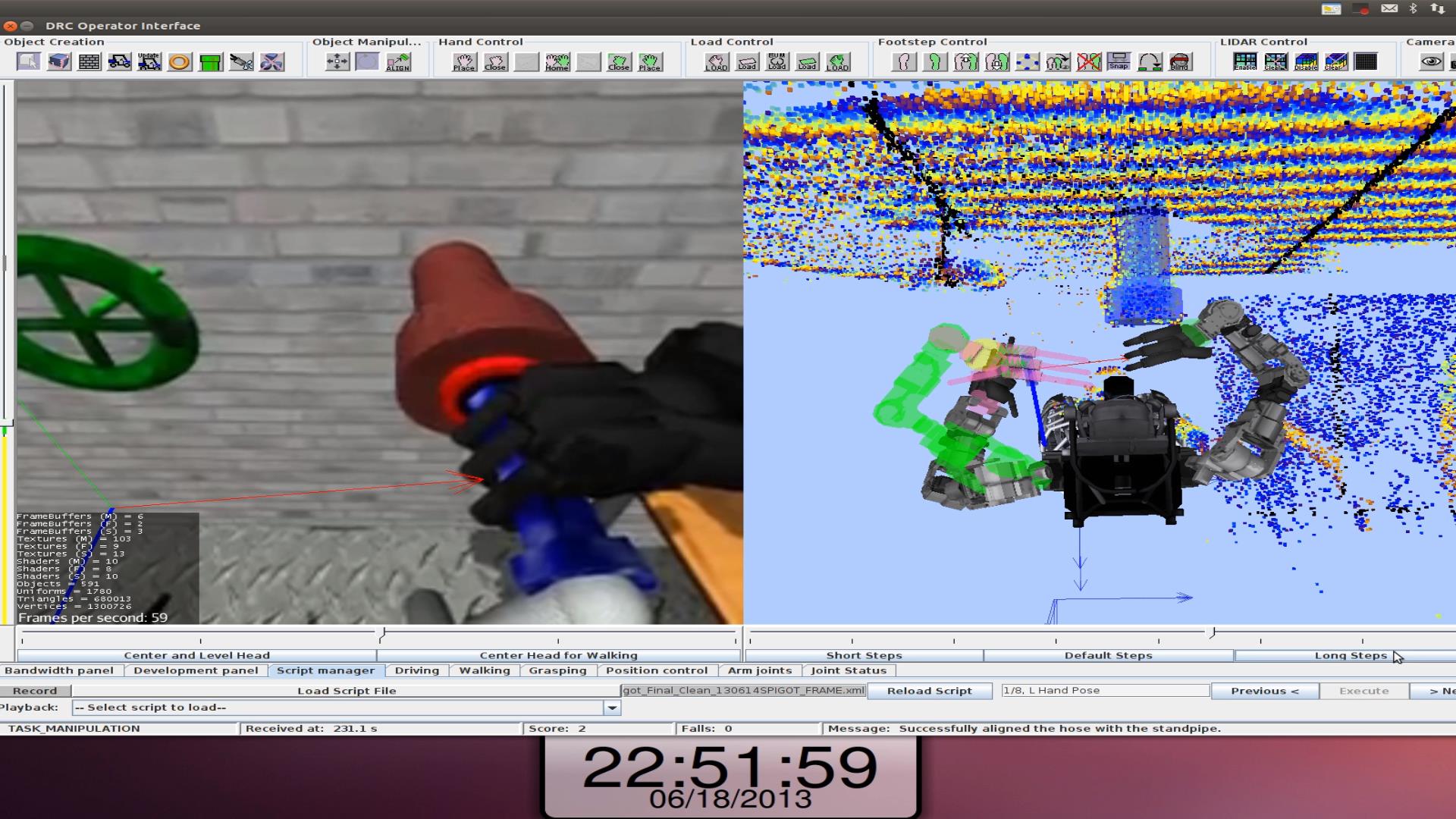

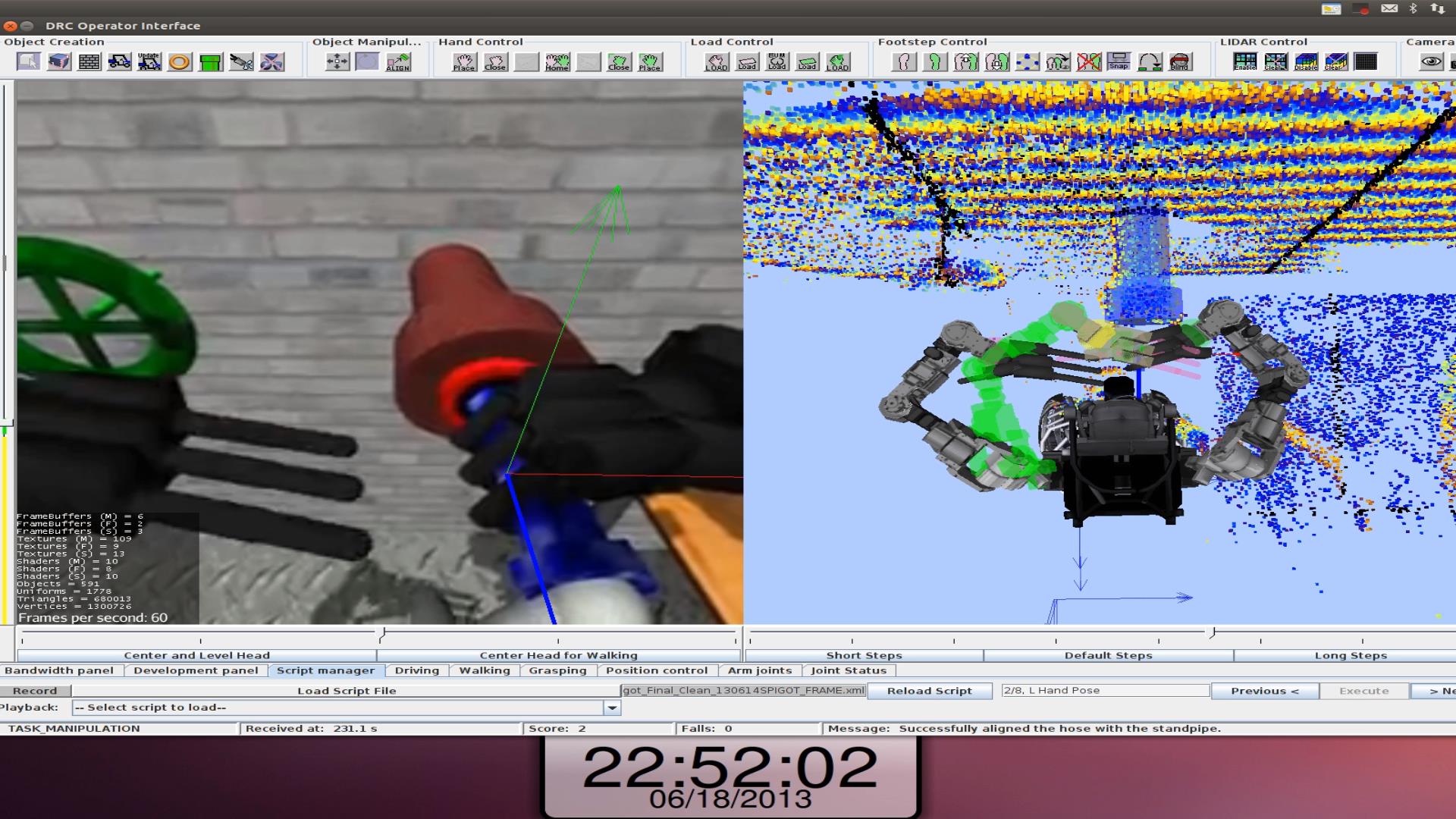

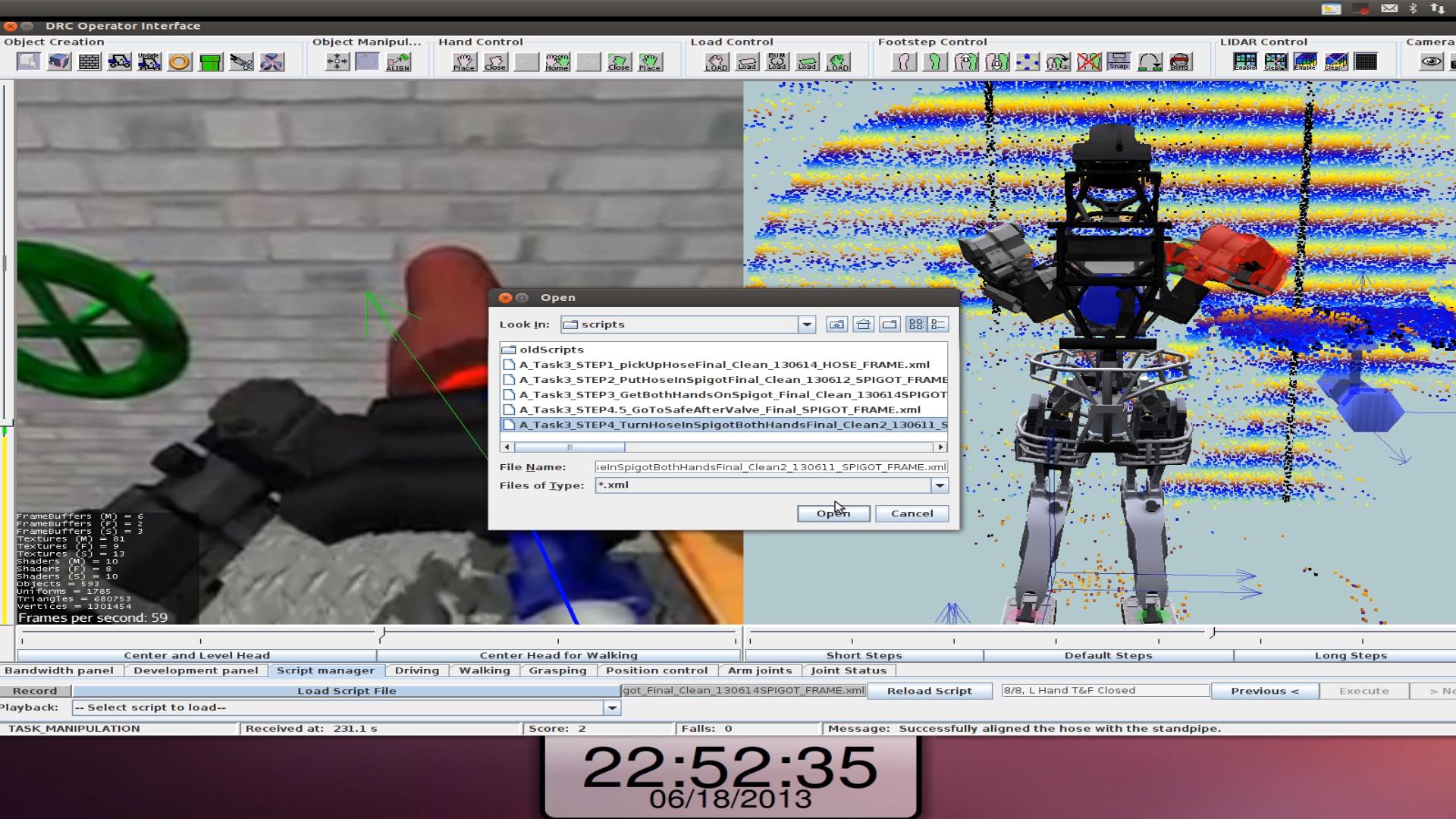

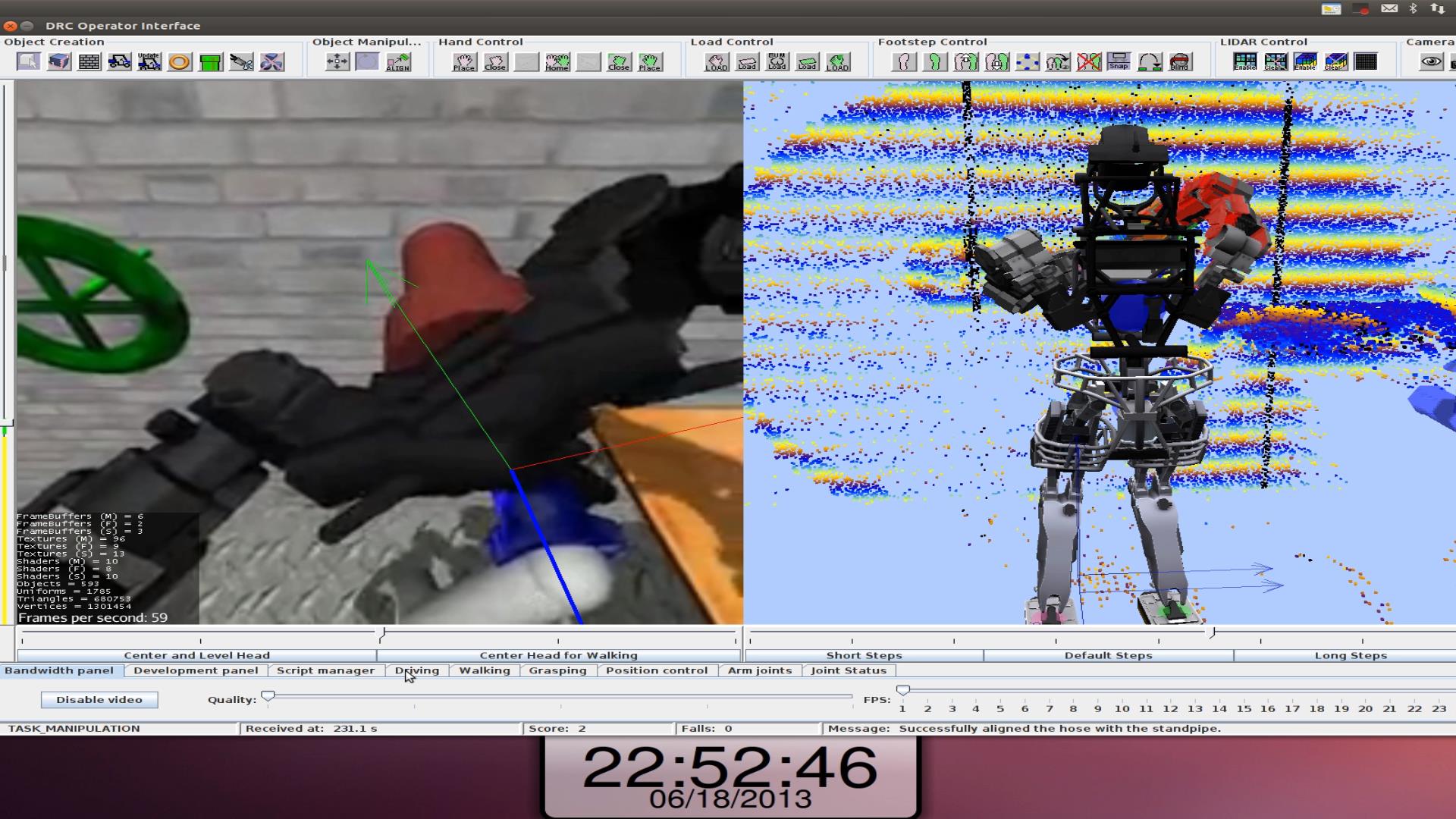

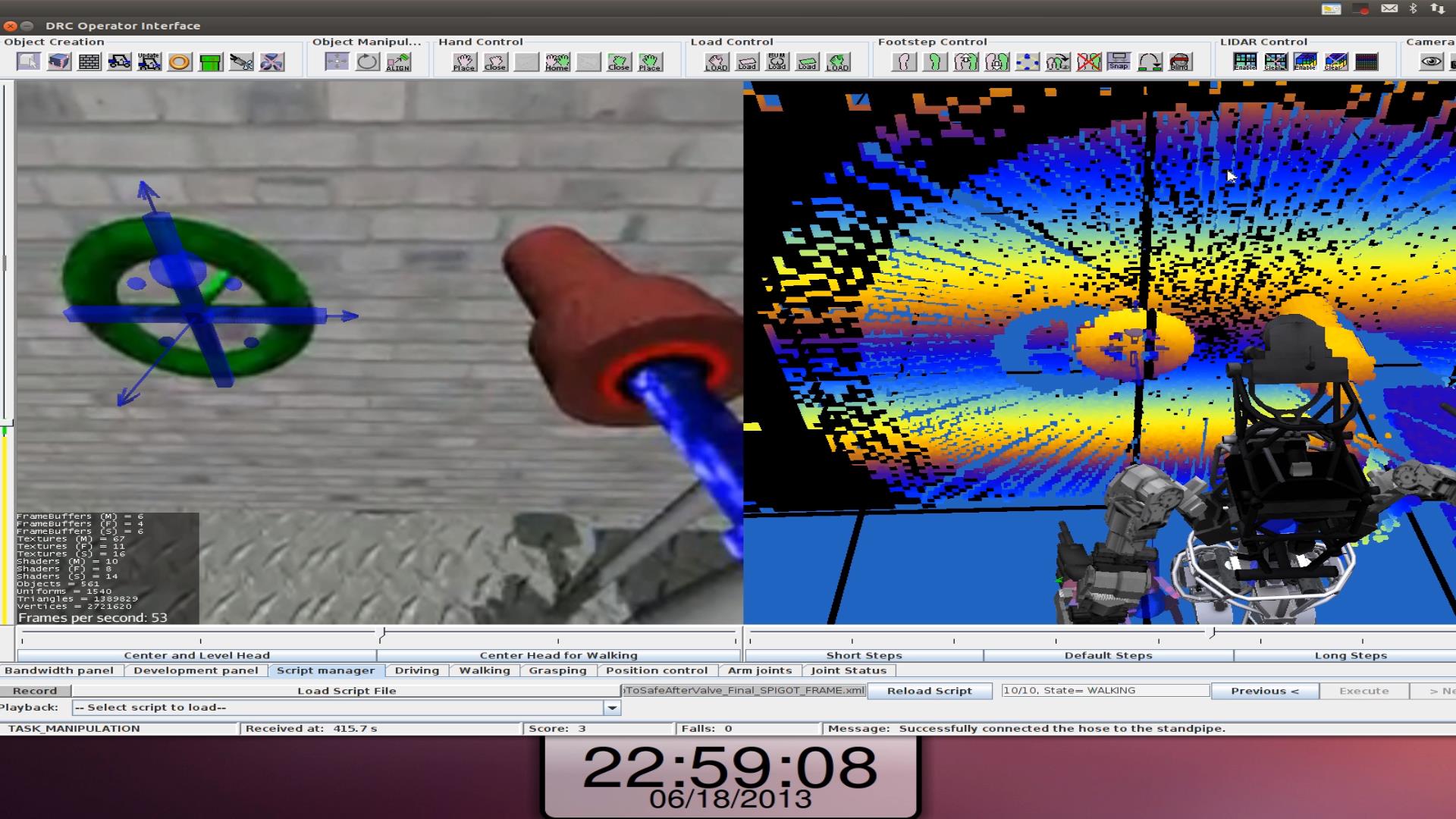

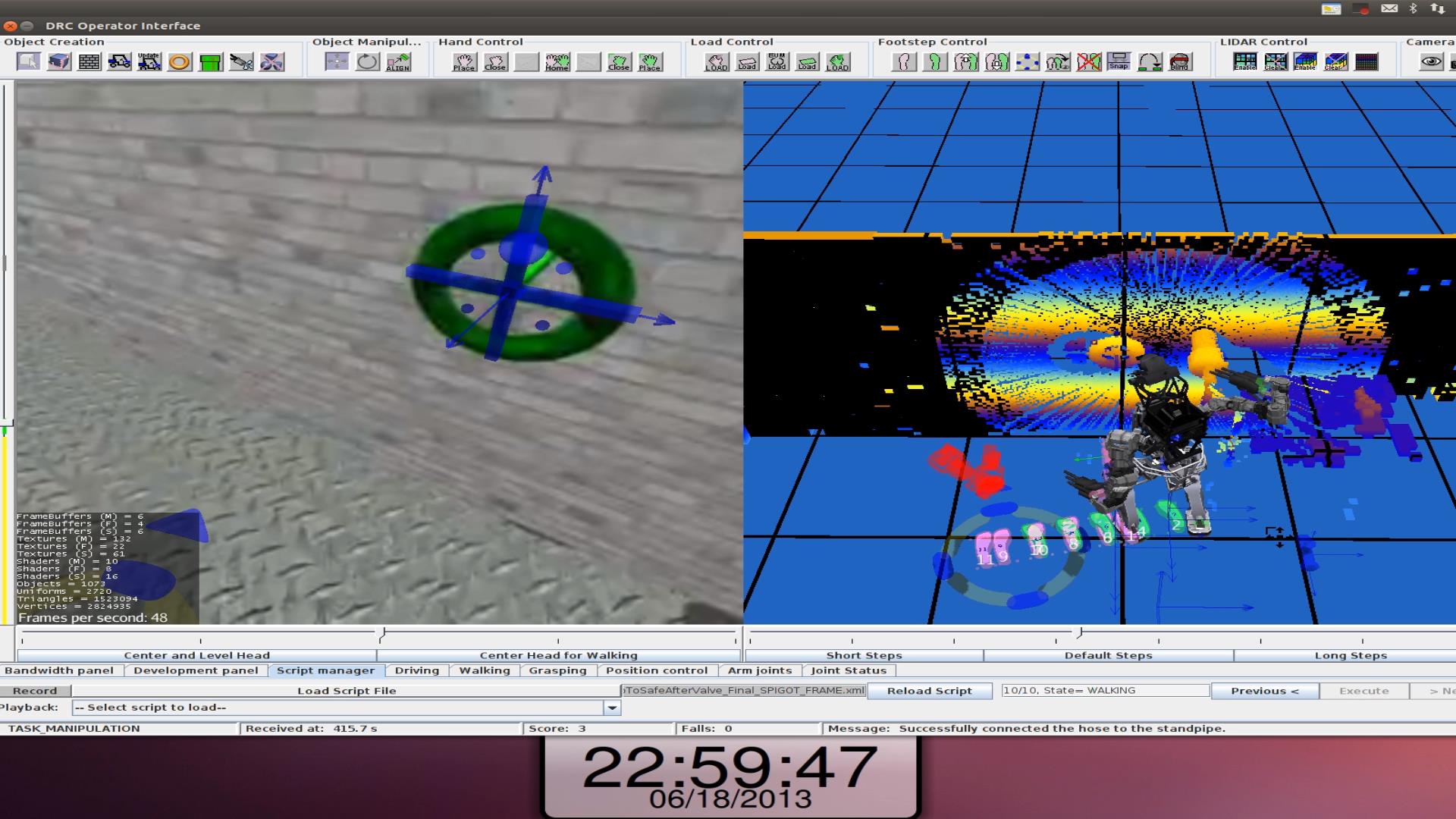

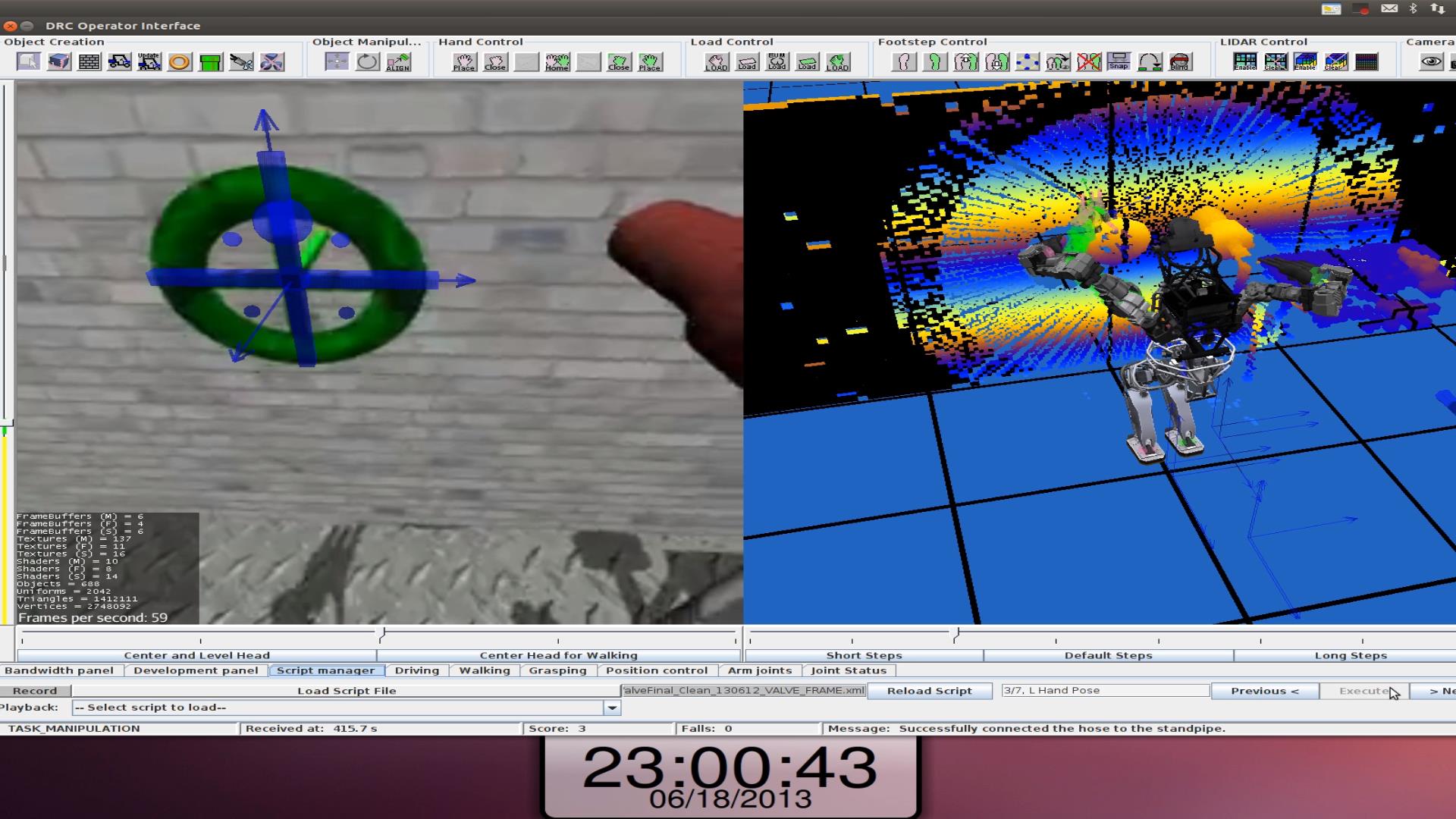

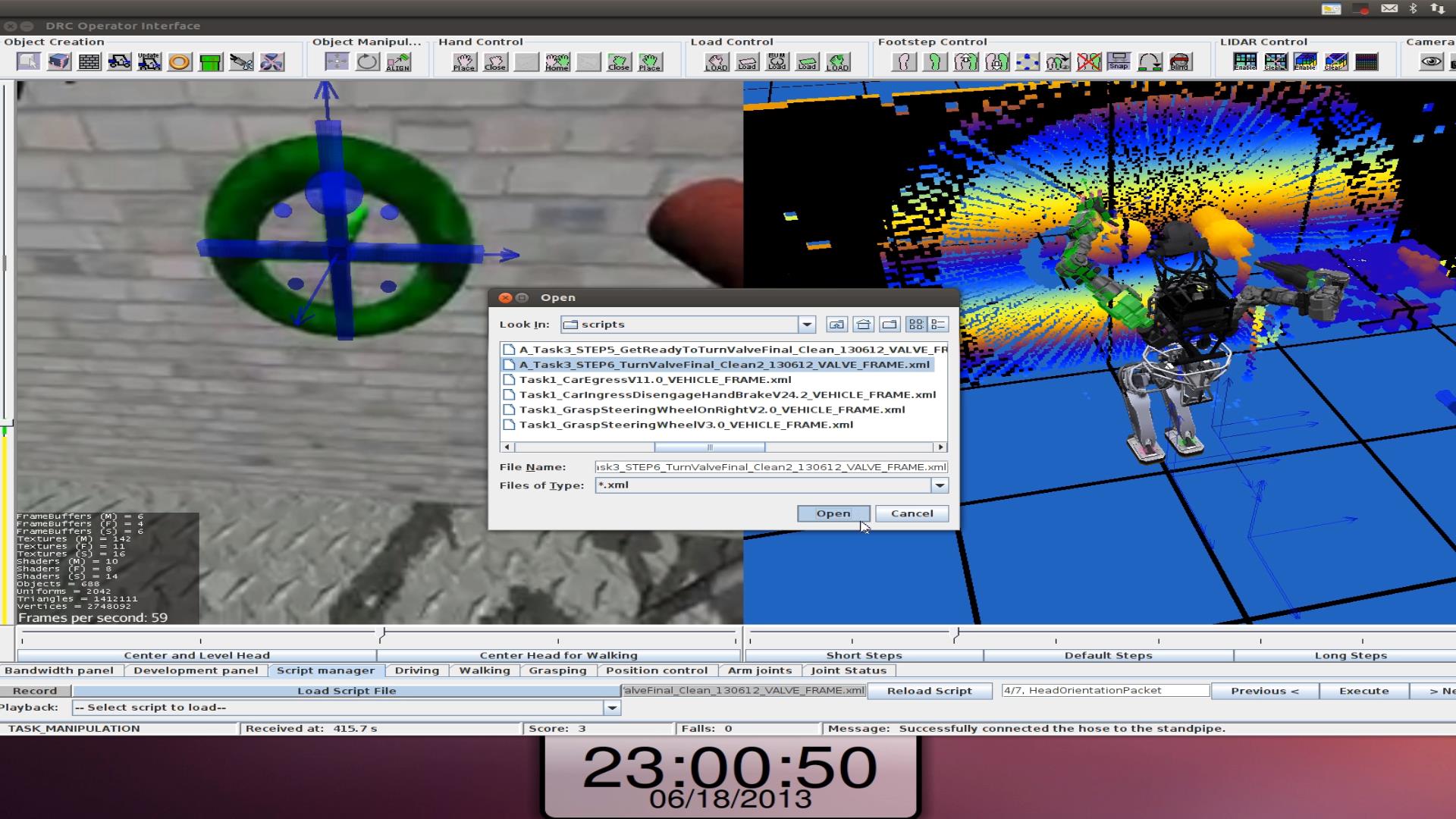

The Operator Interface

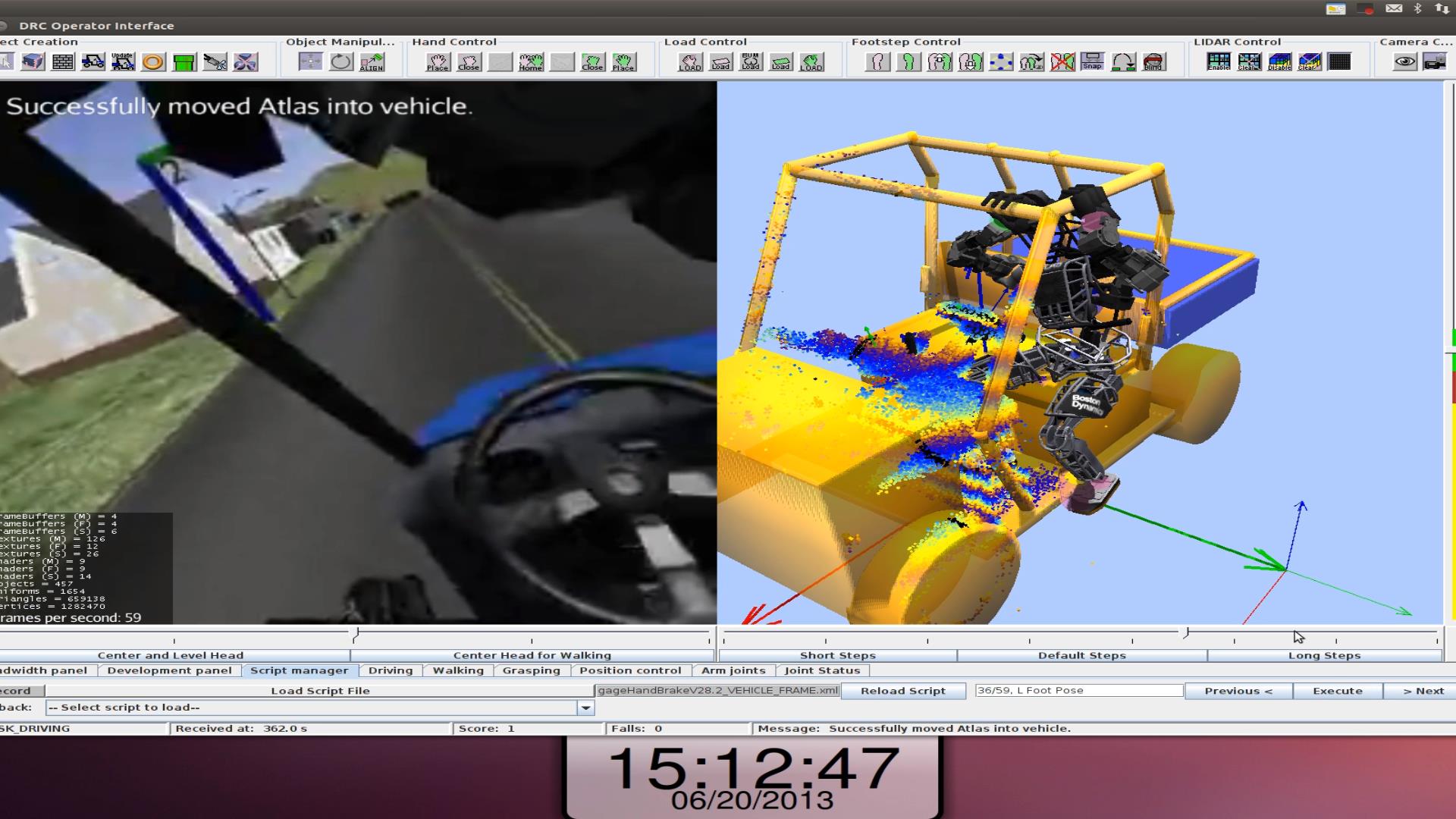

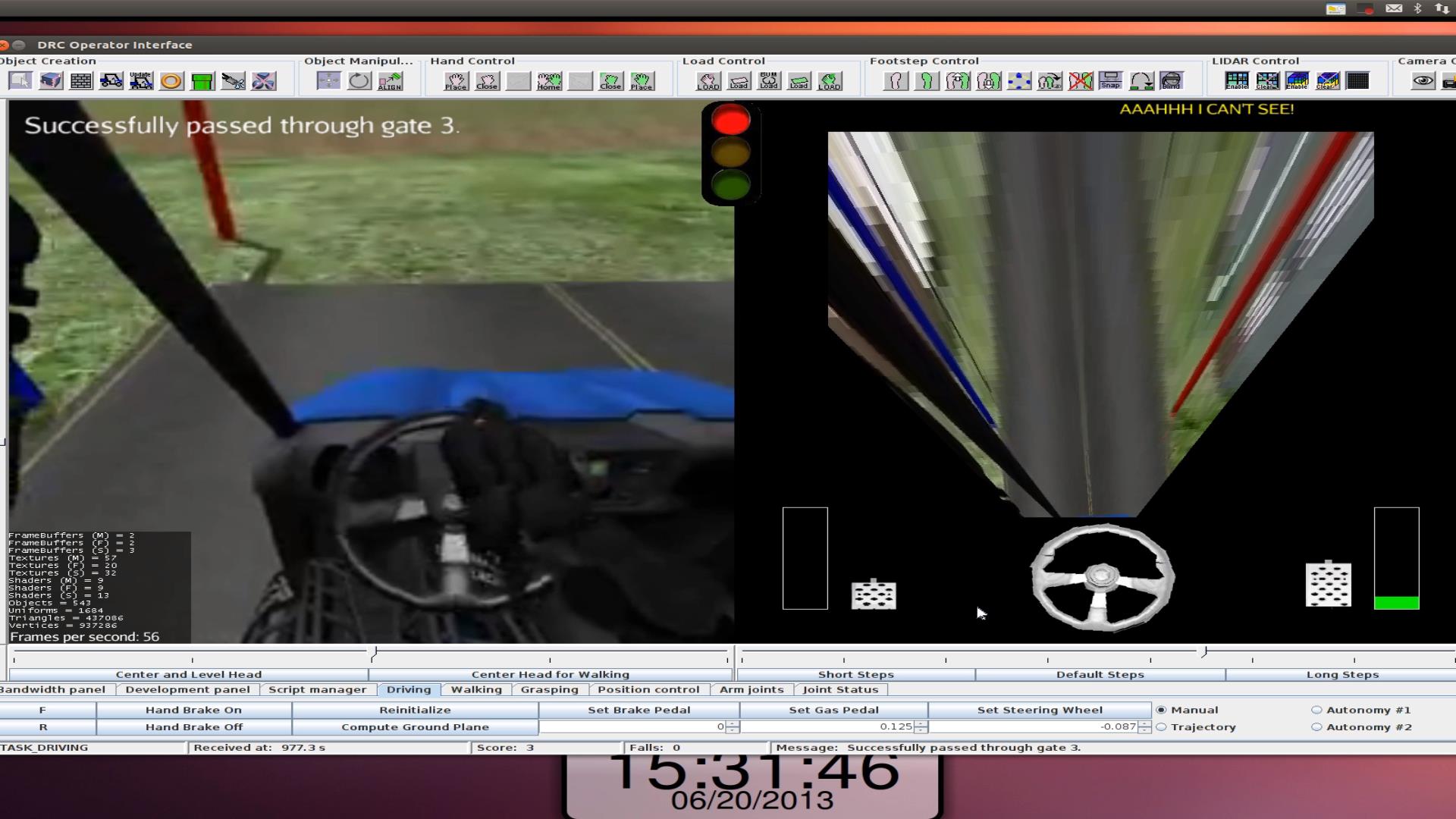

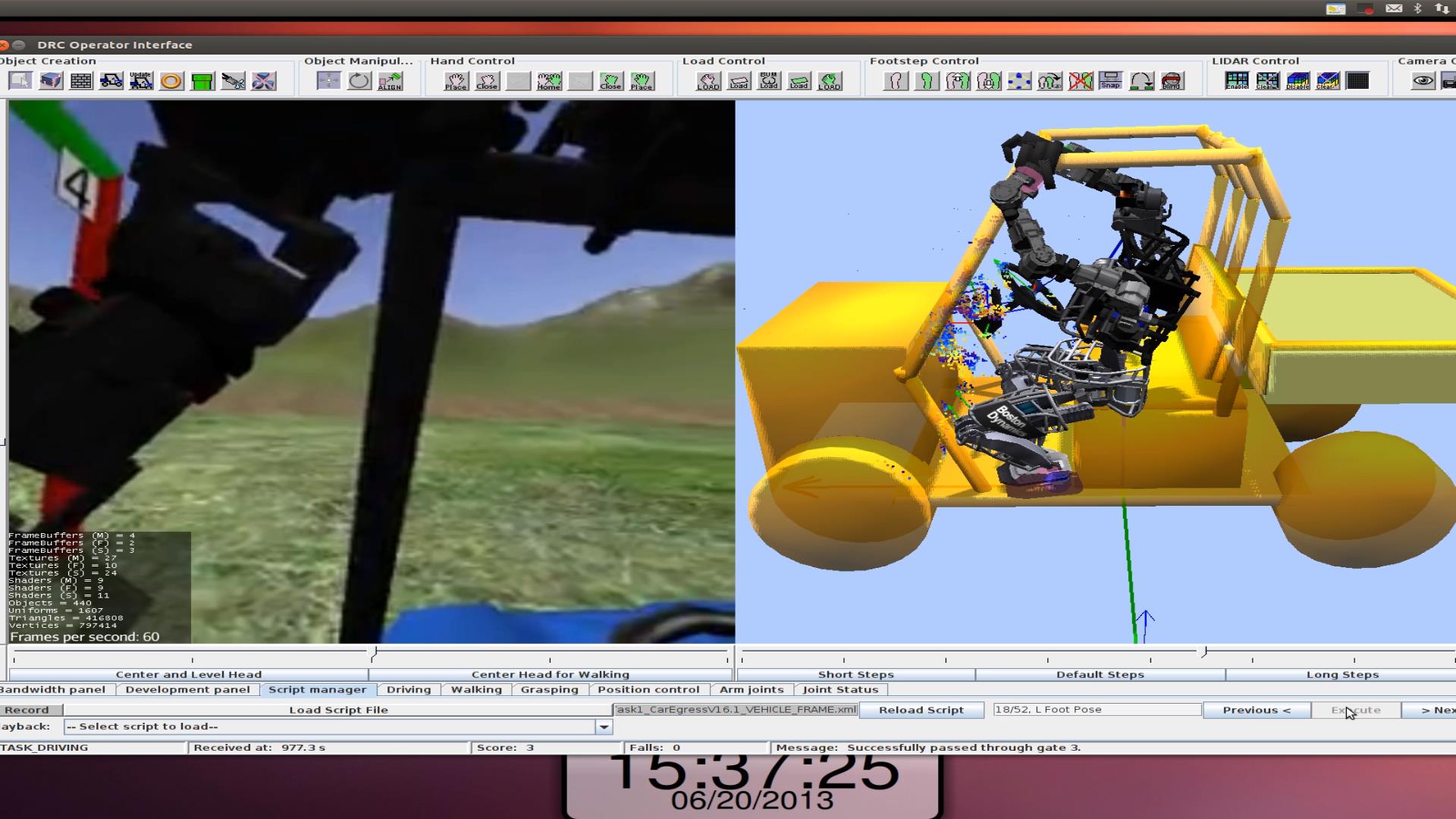

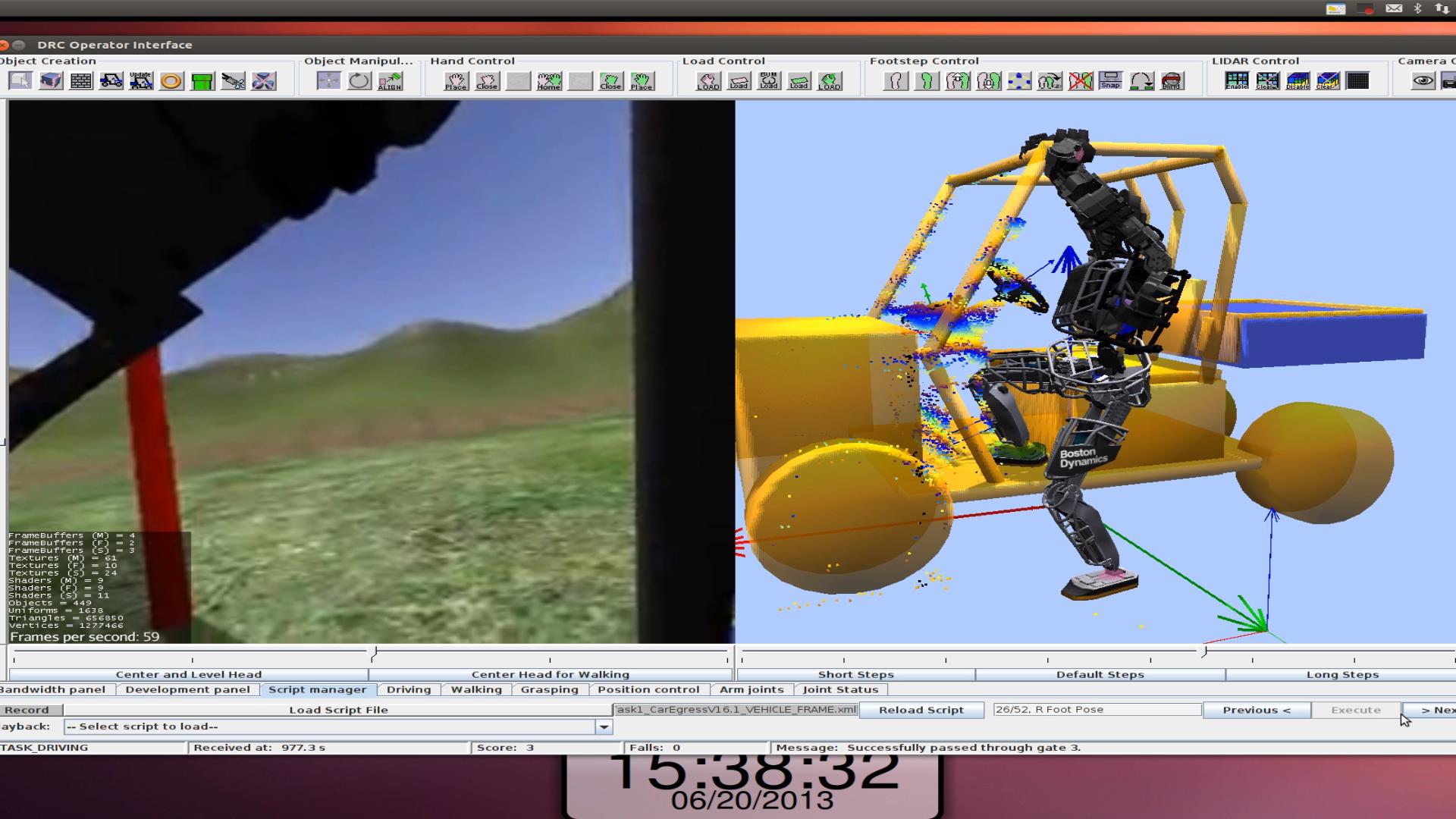

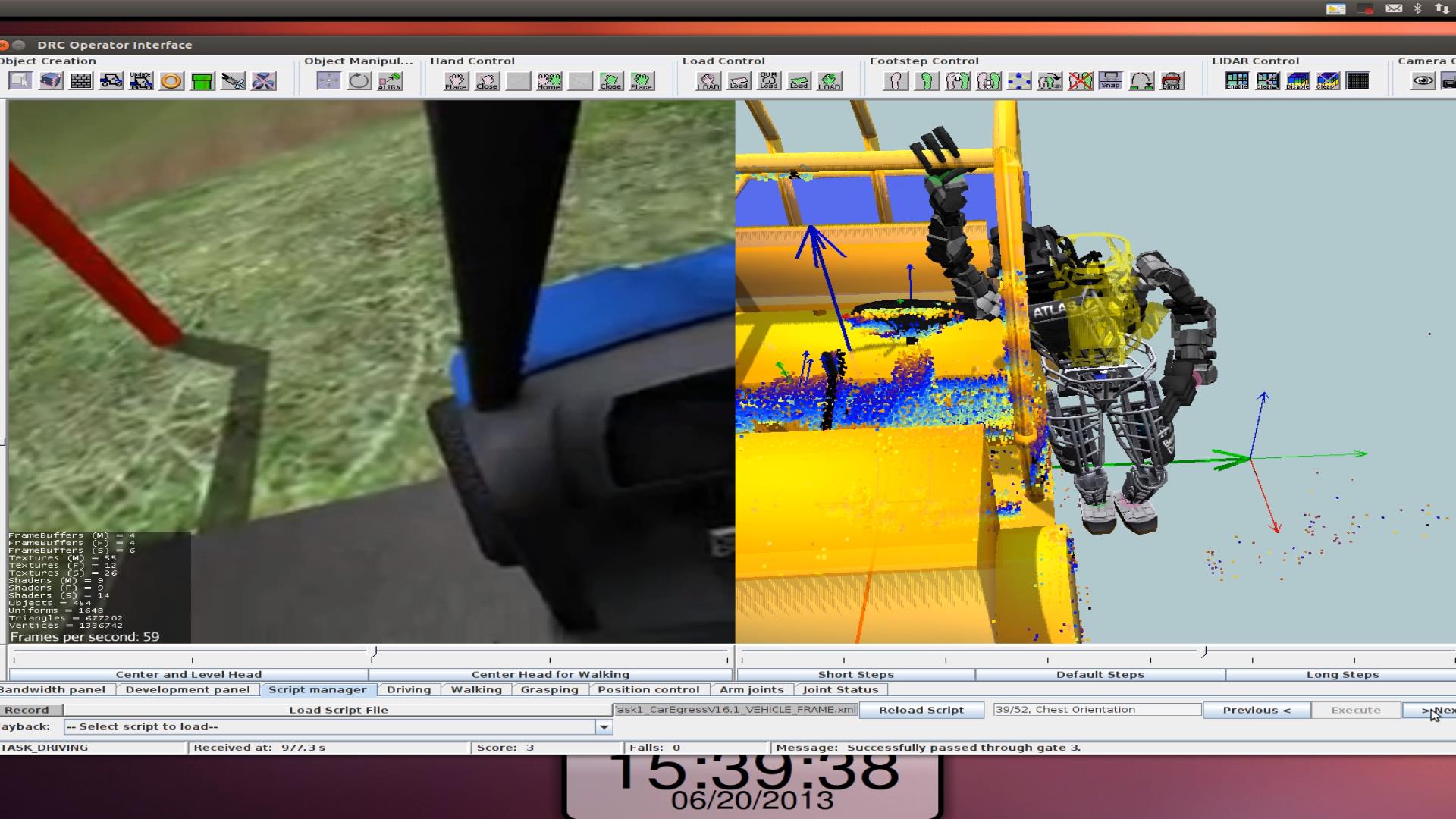

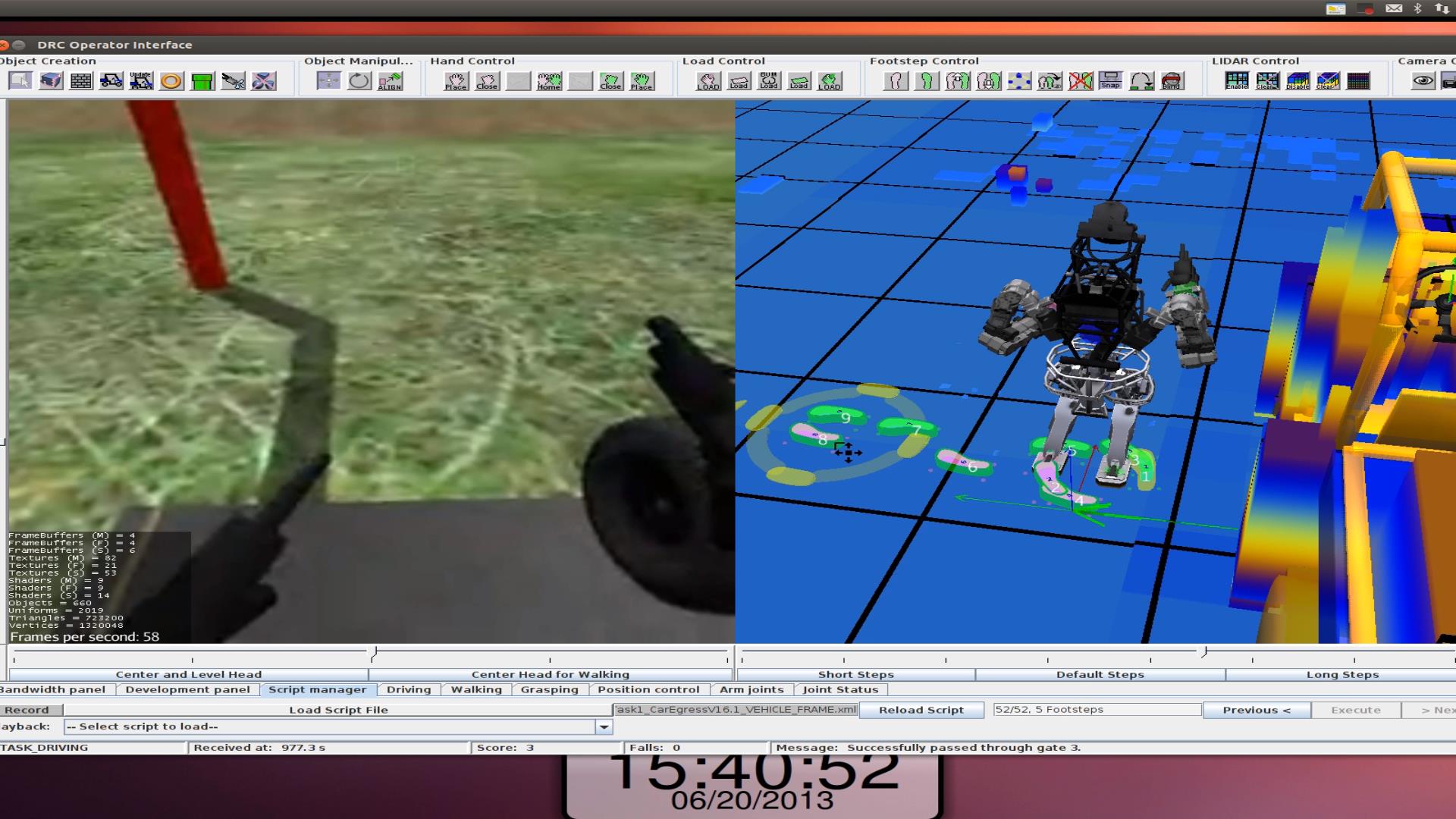

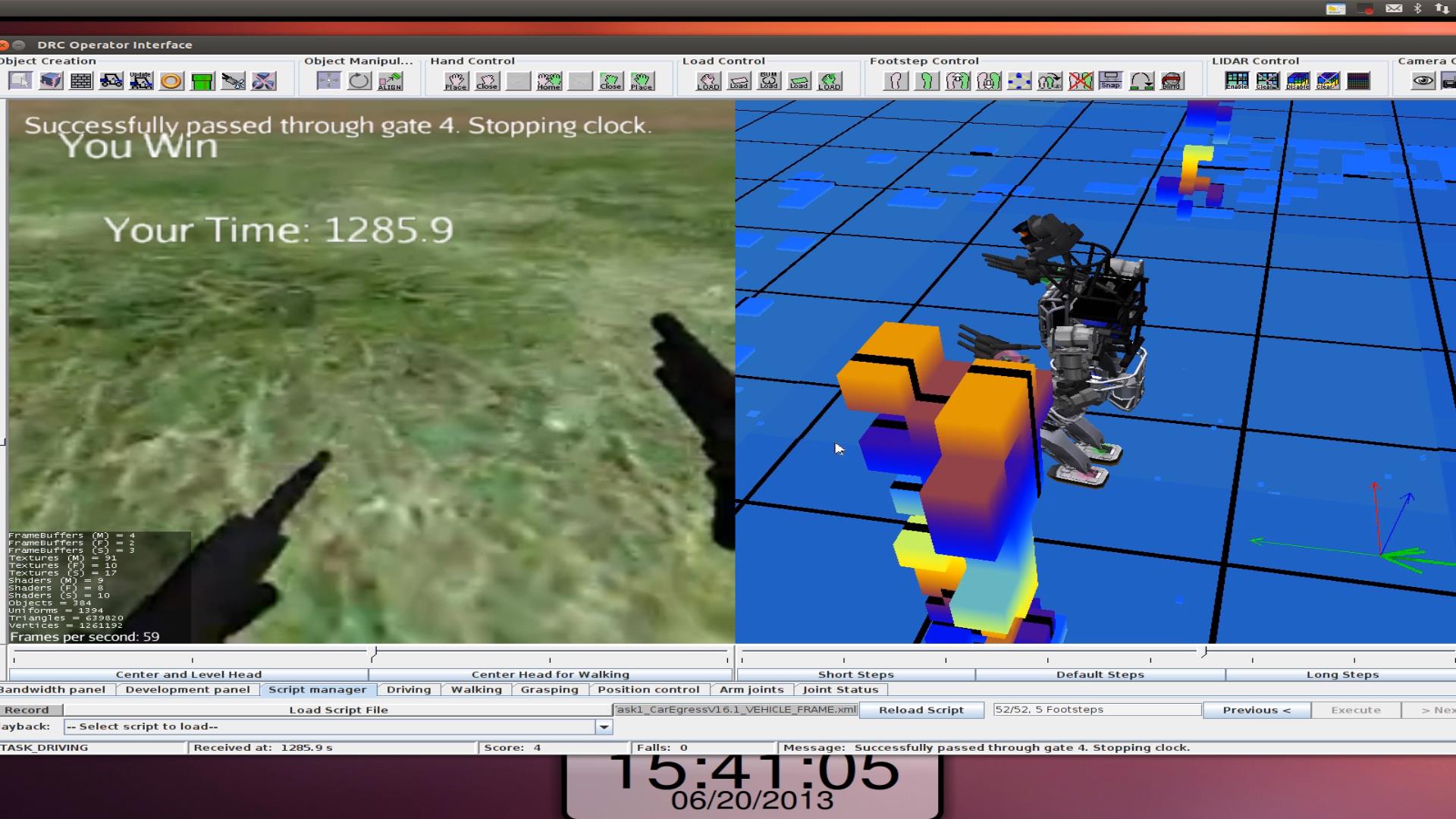

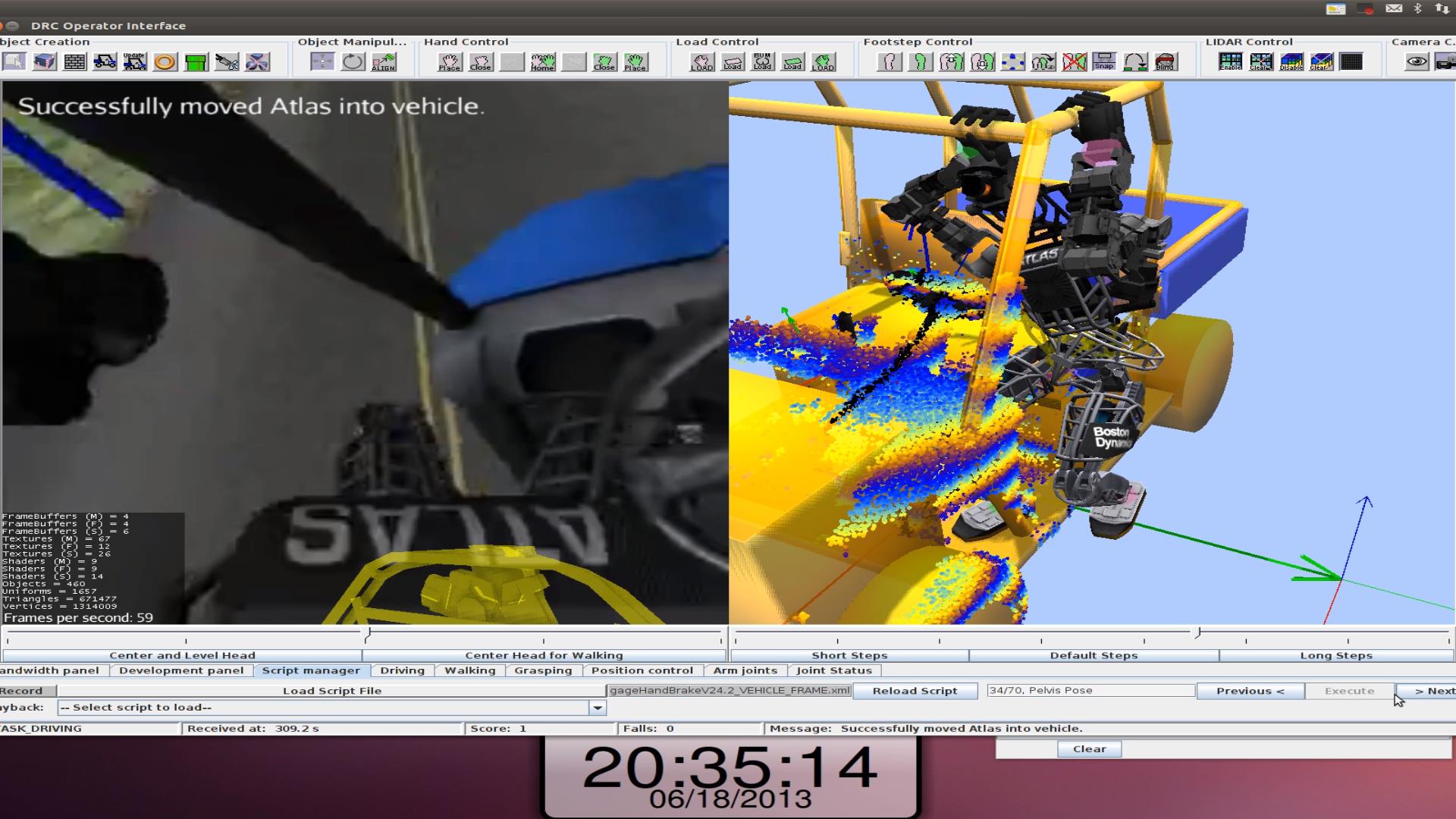

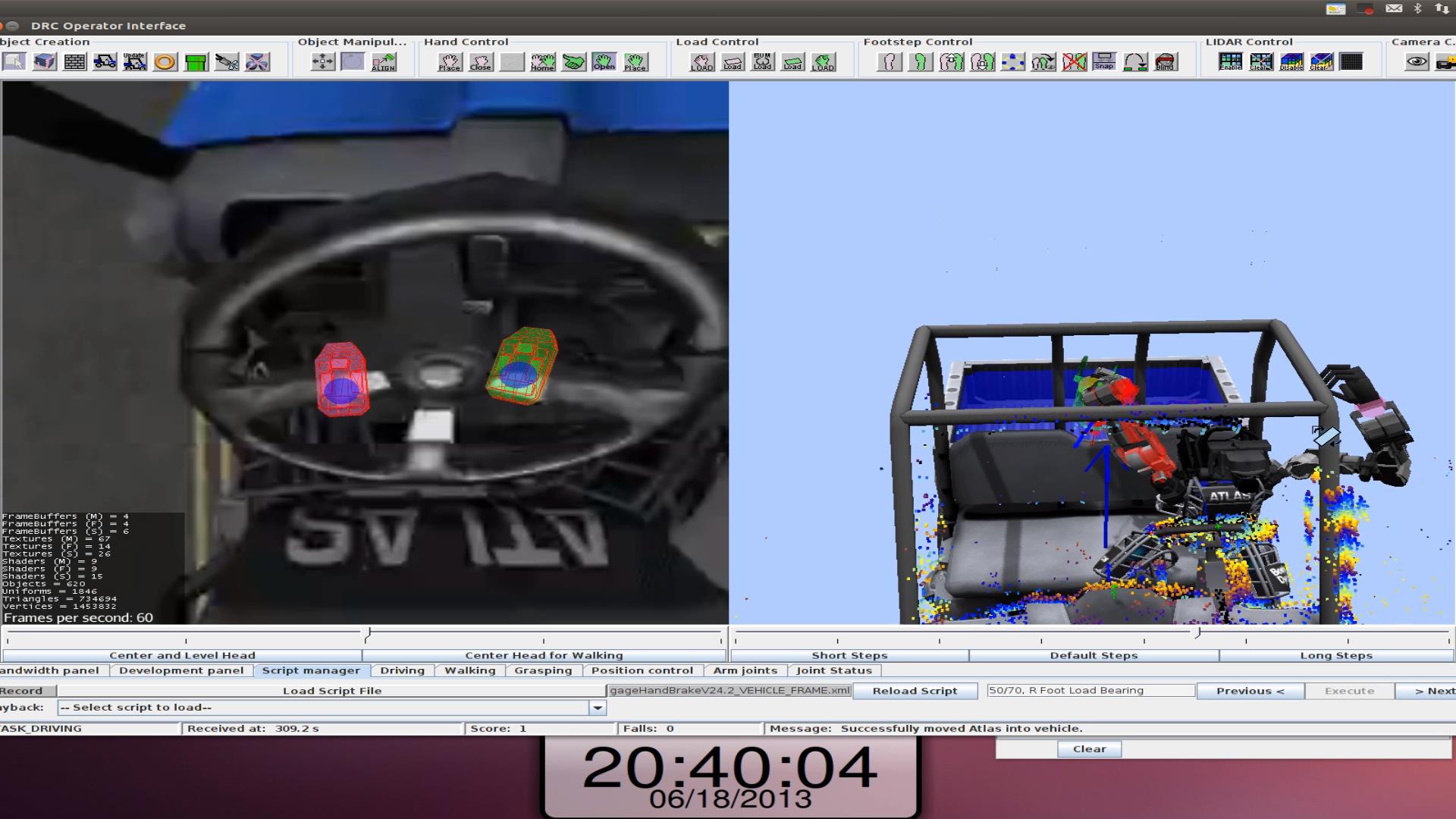

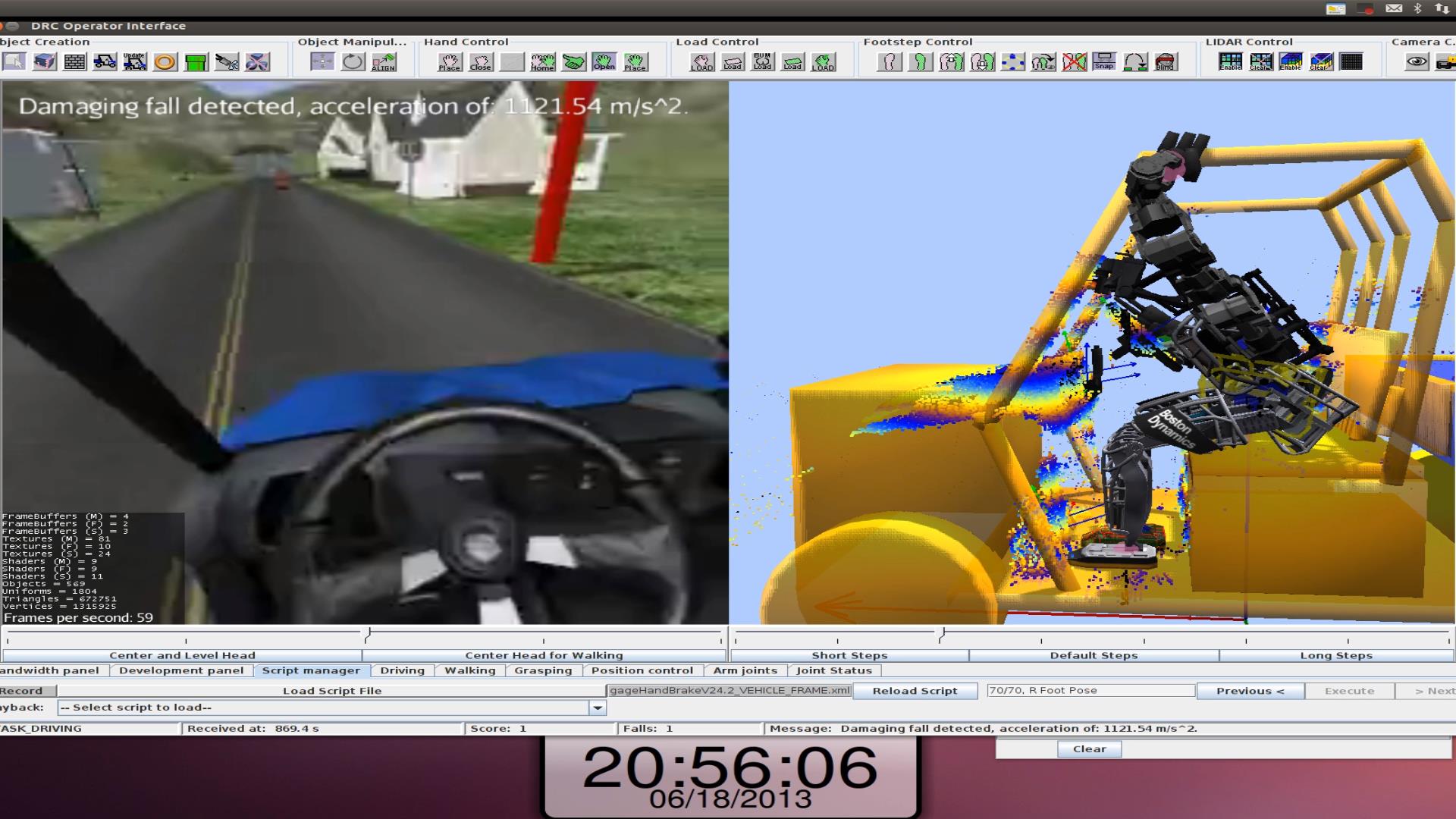

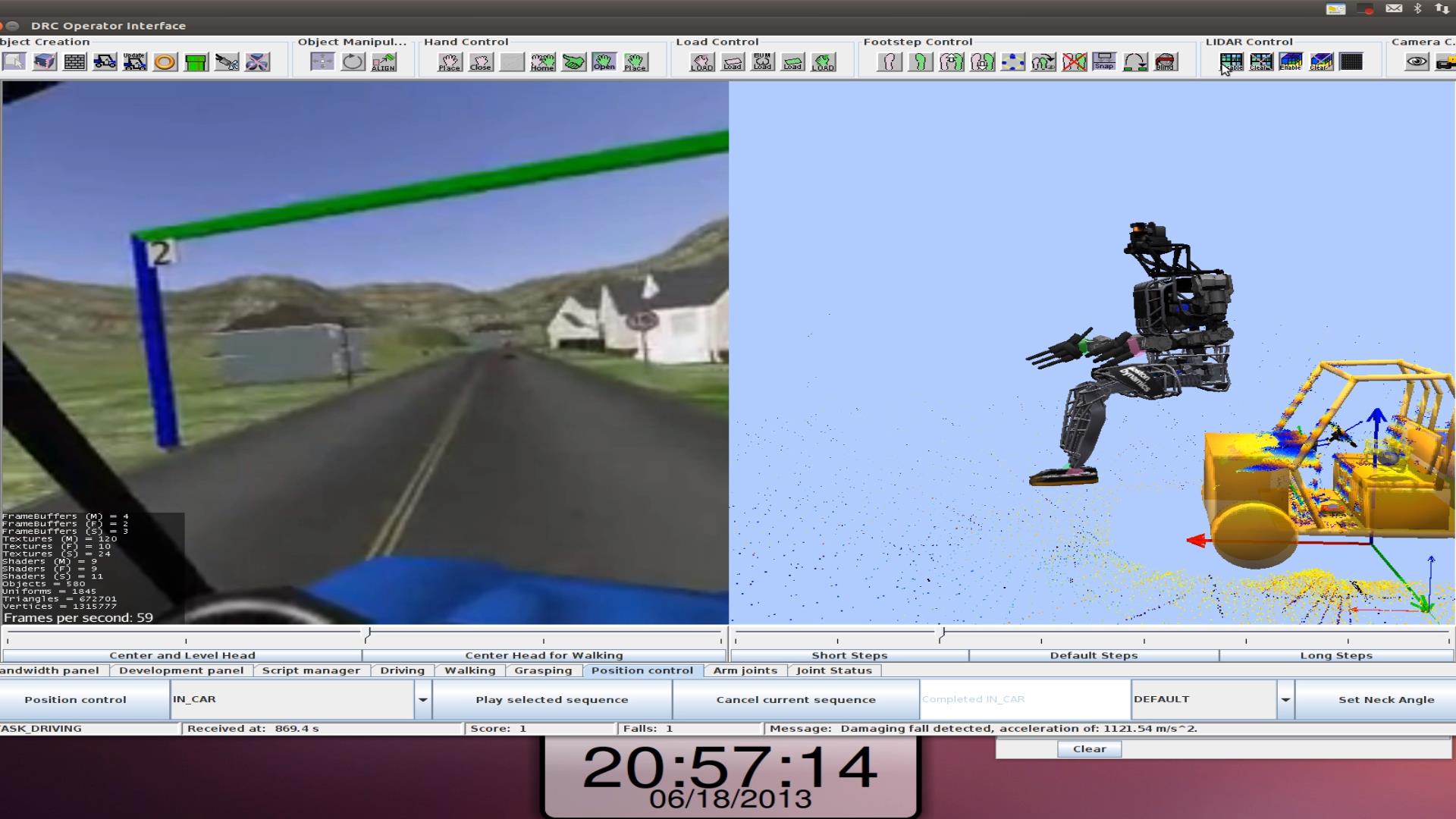

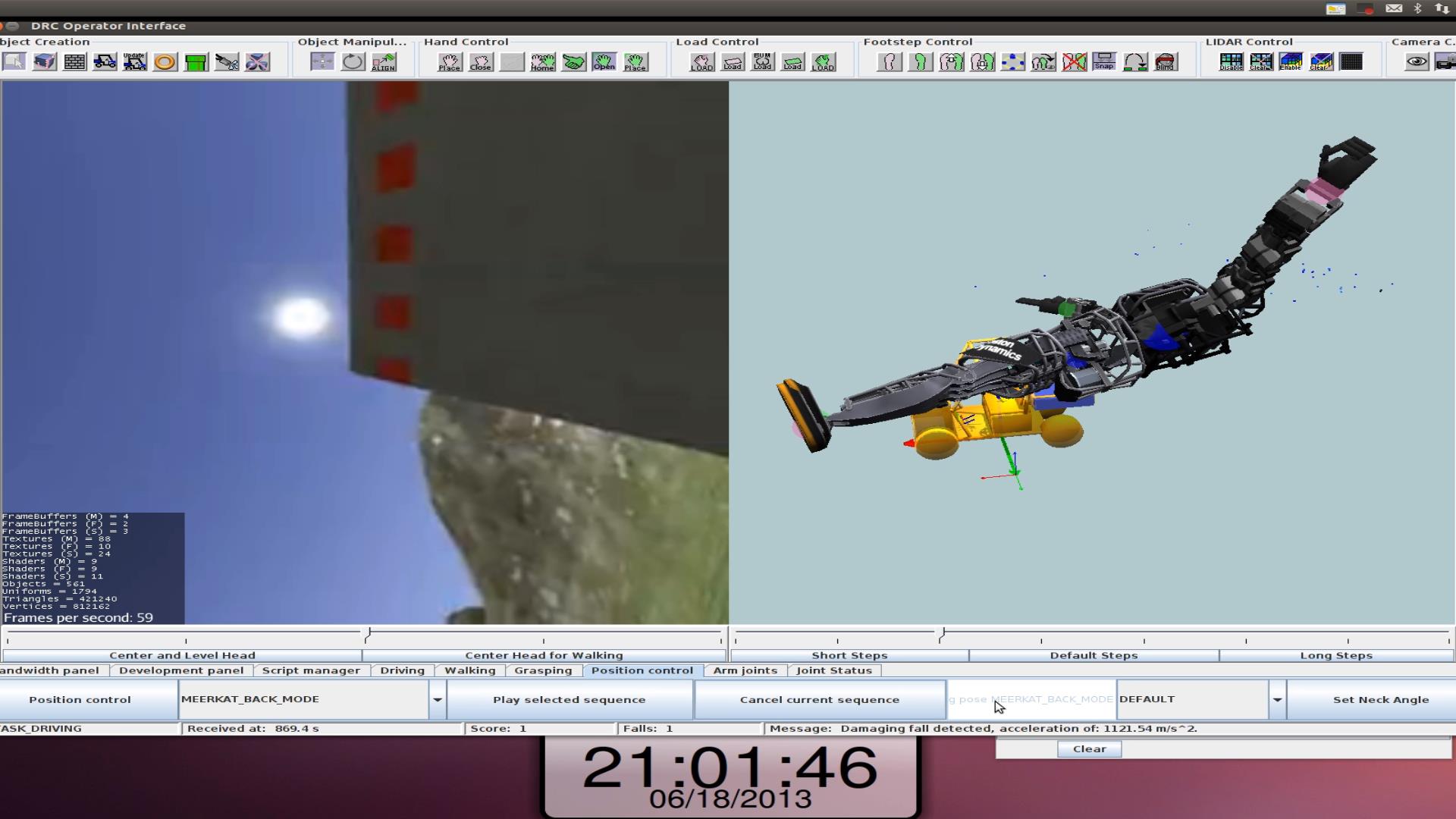

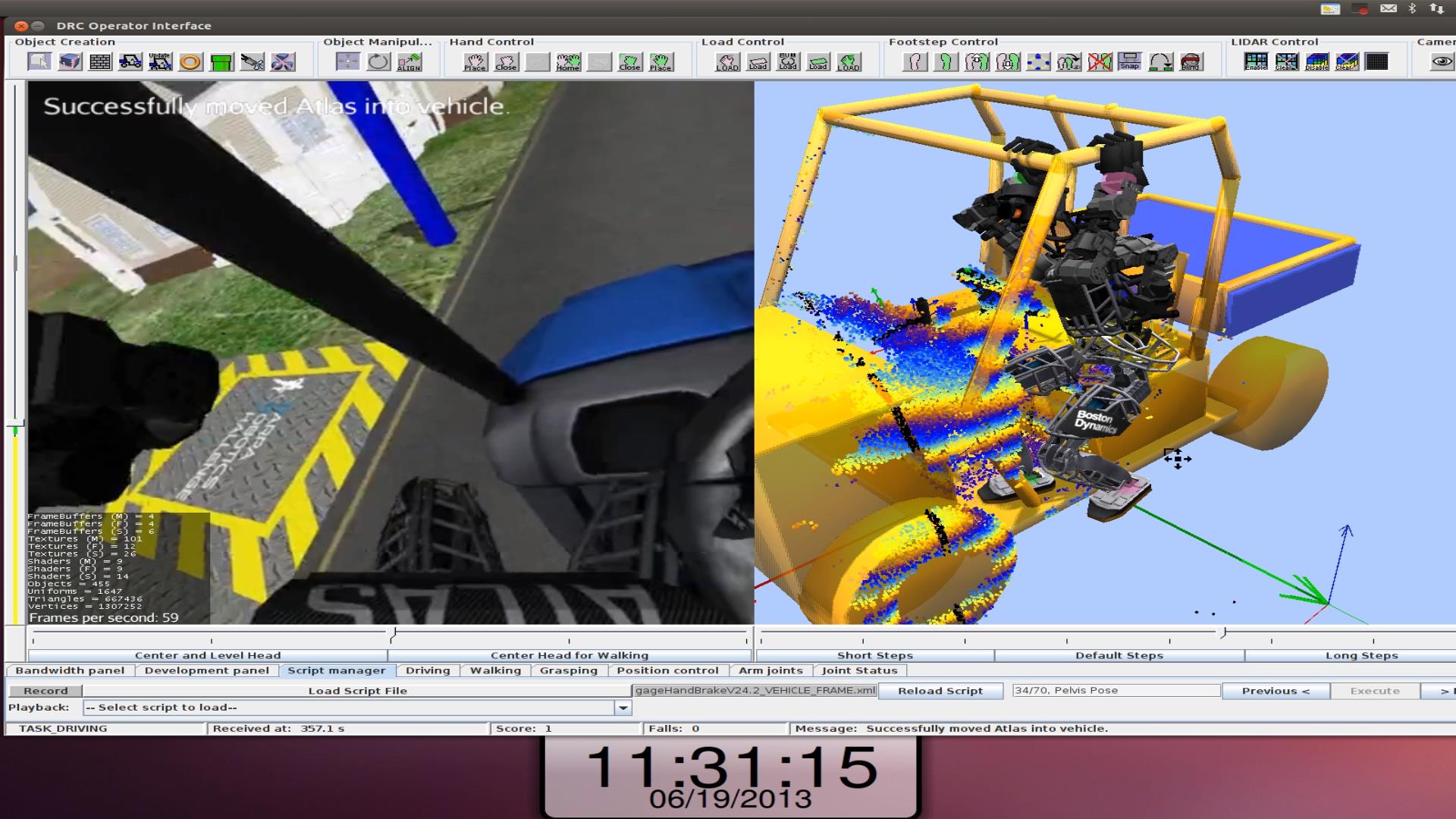

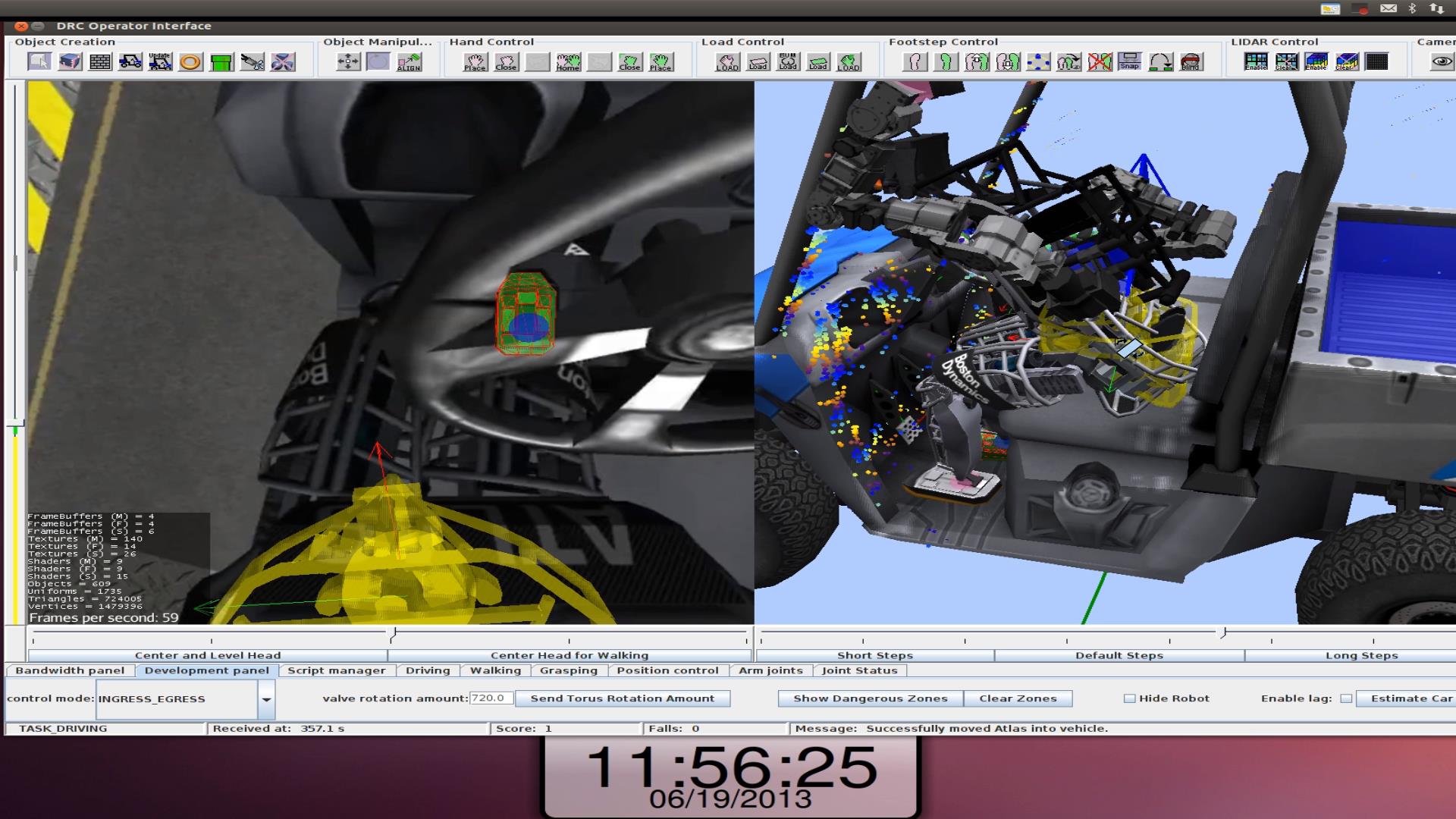

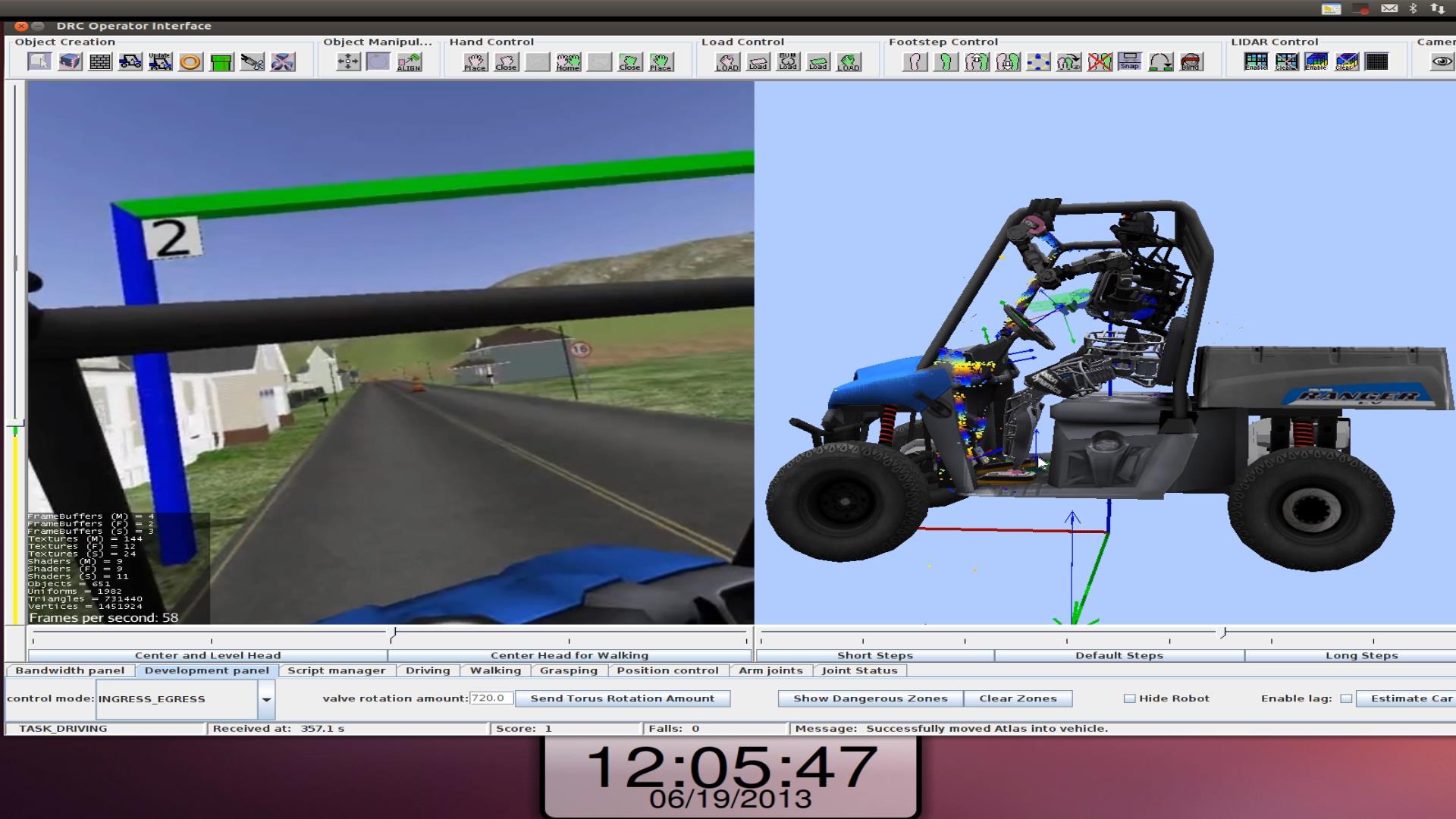

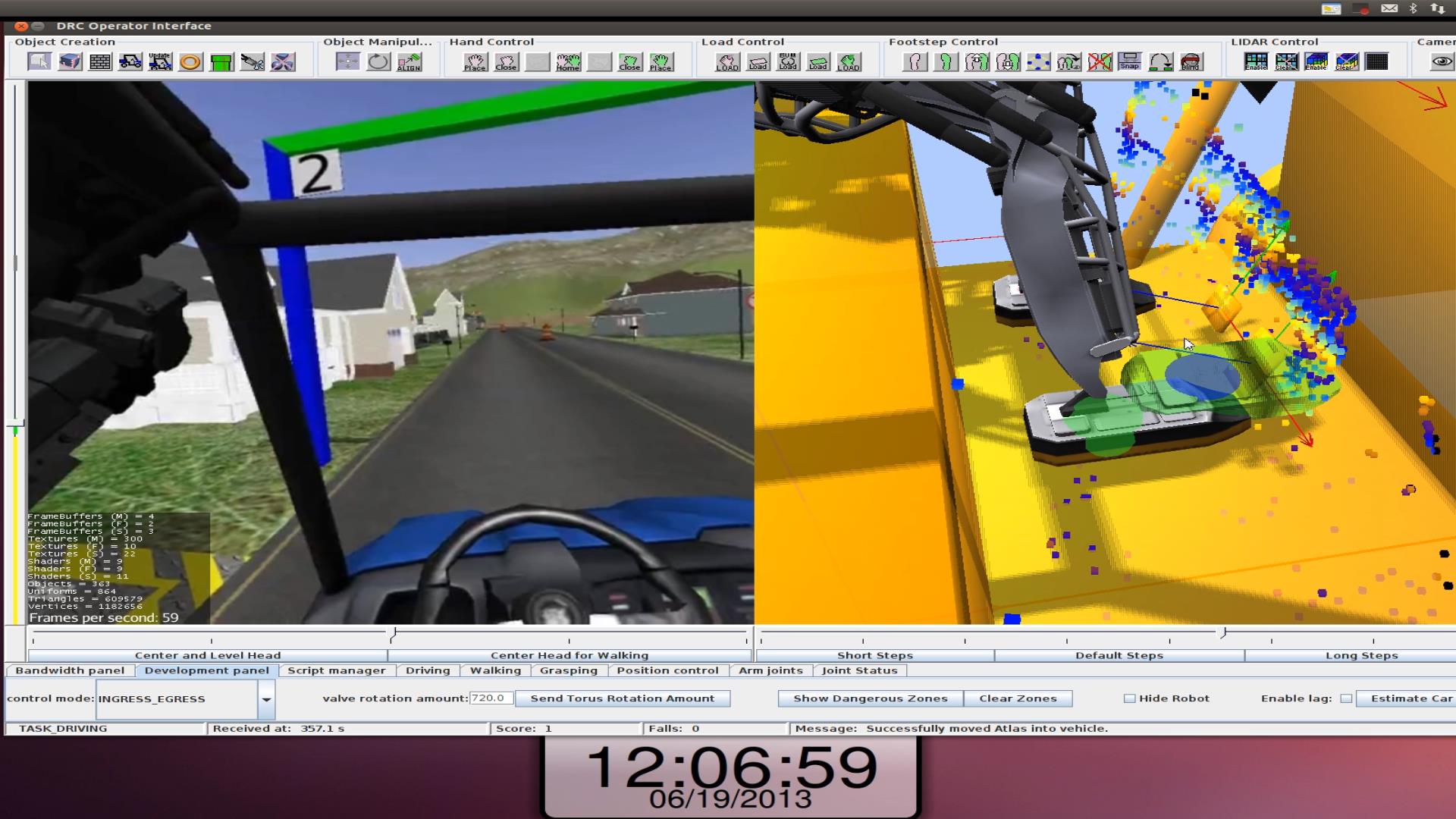

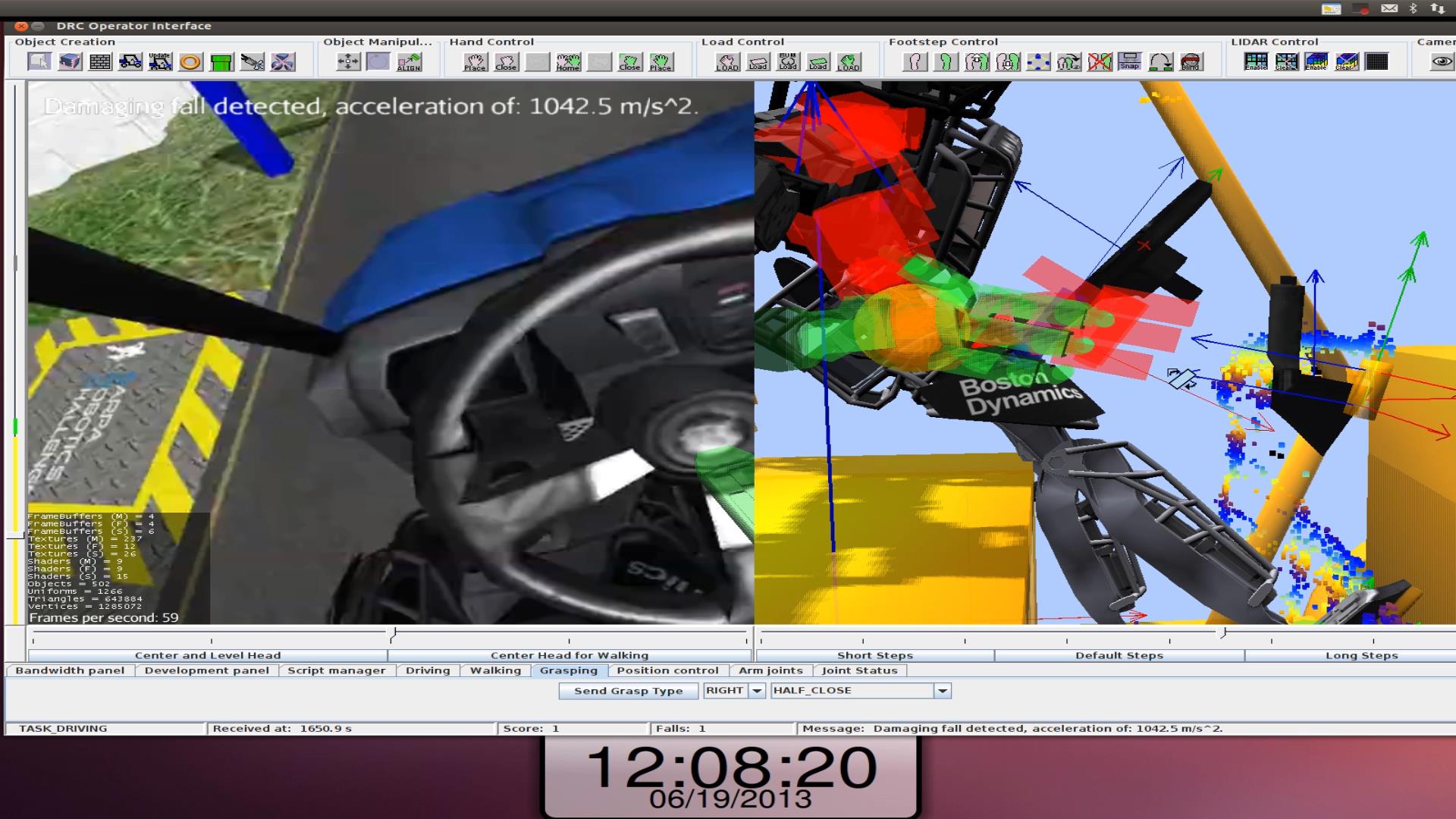

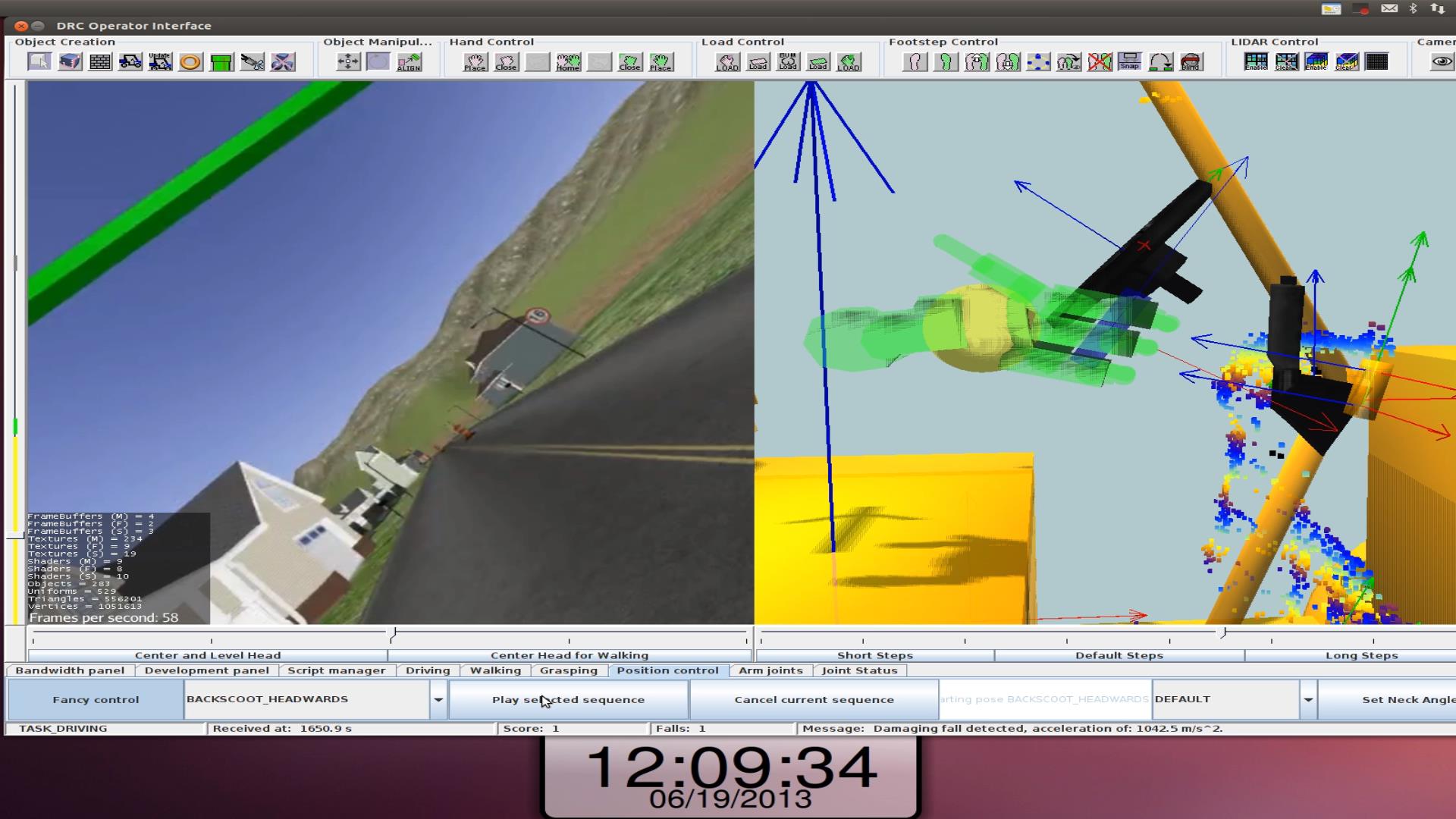

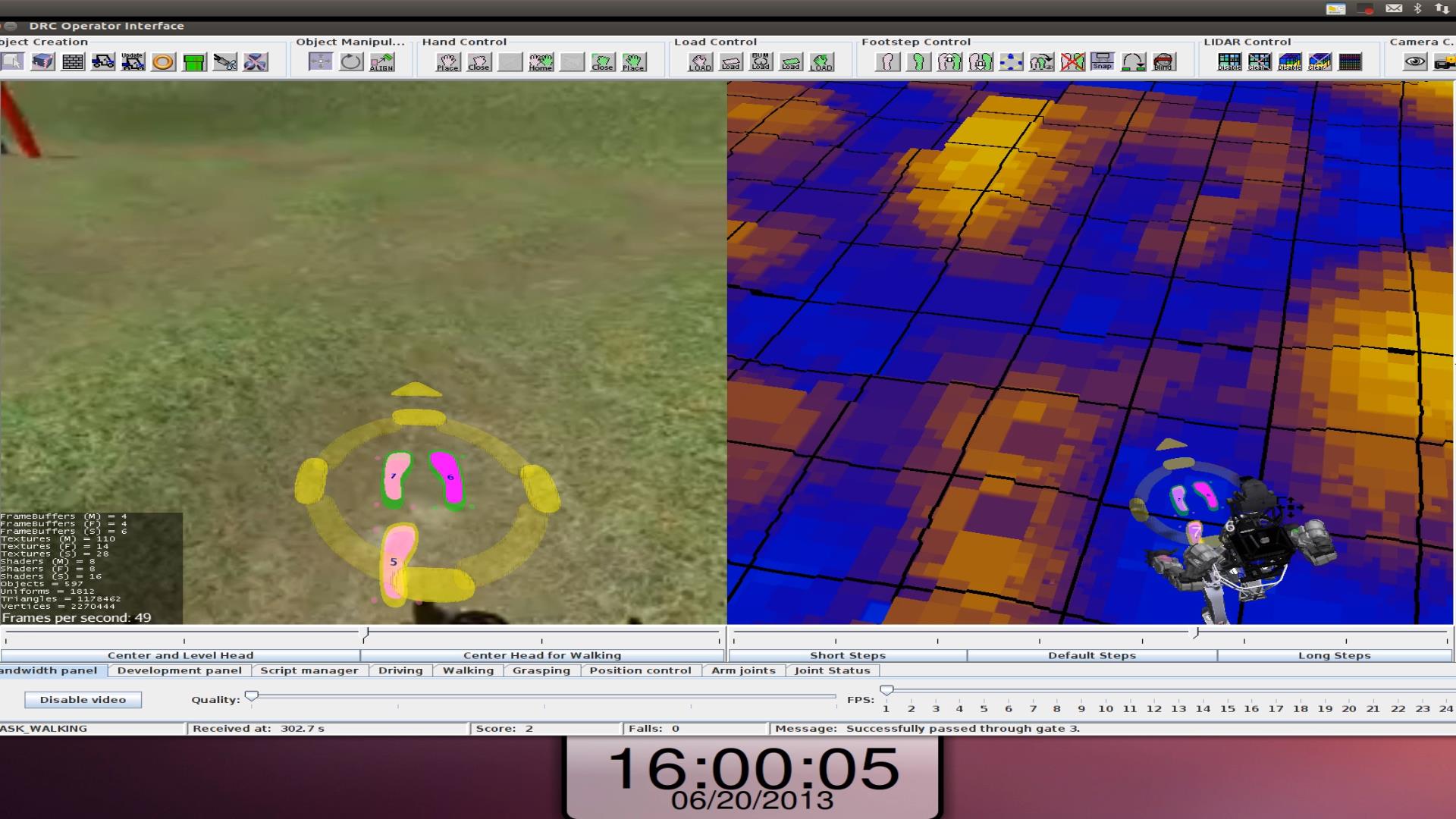

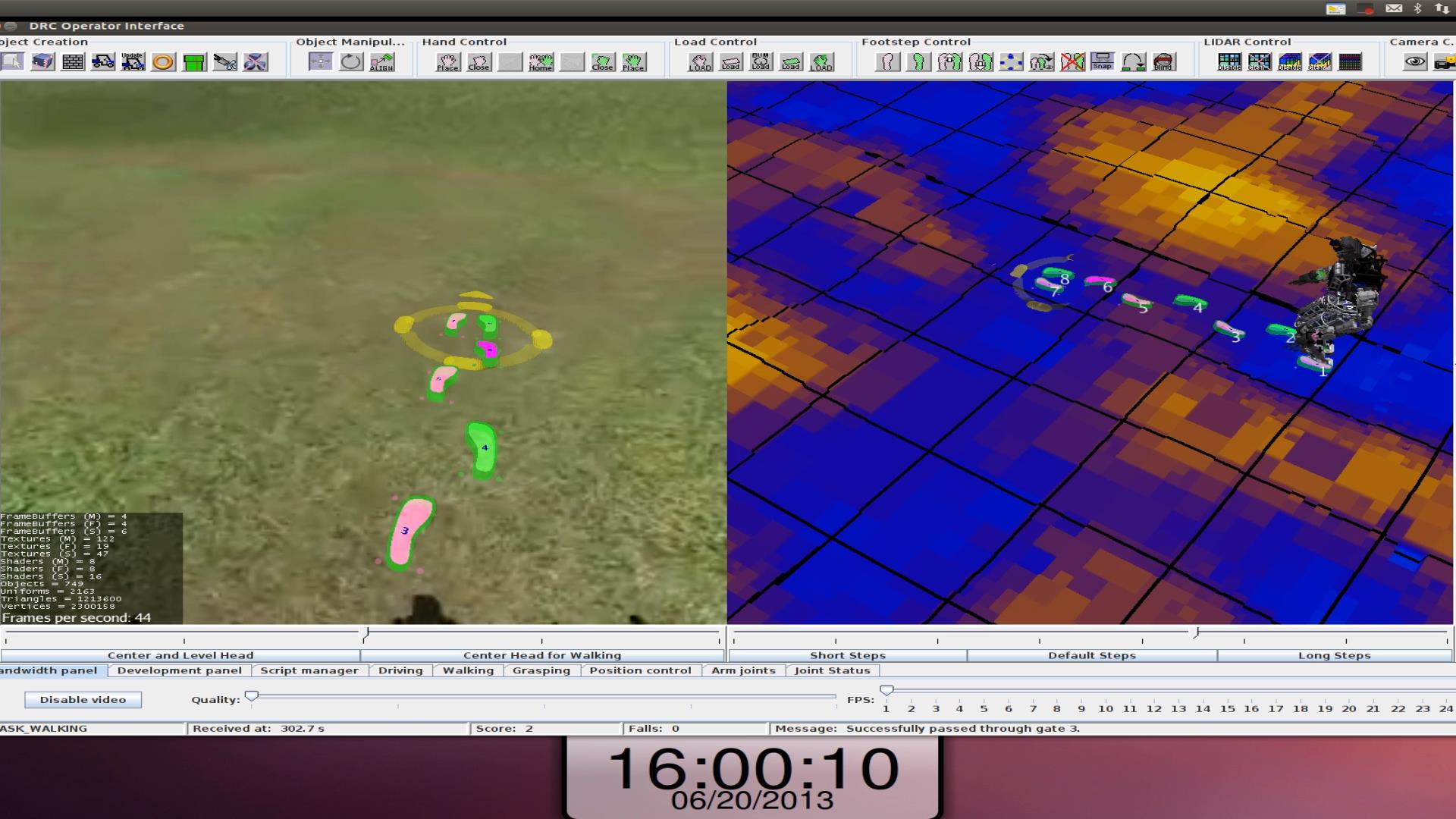

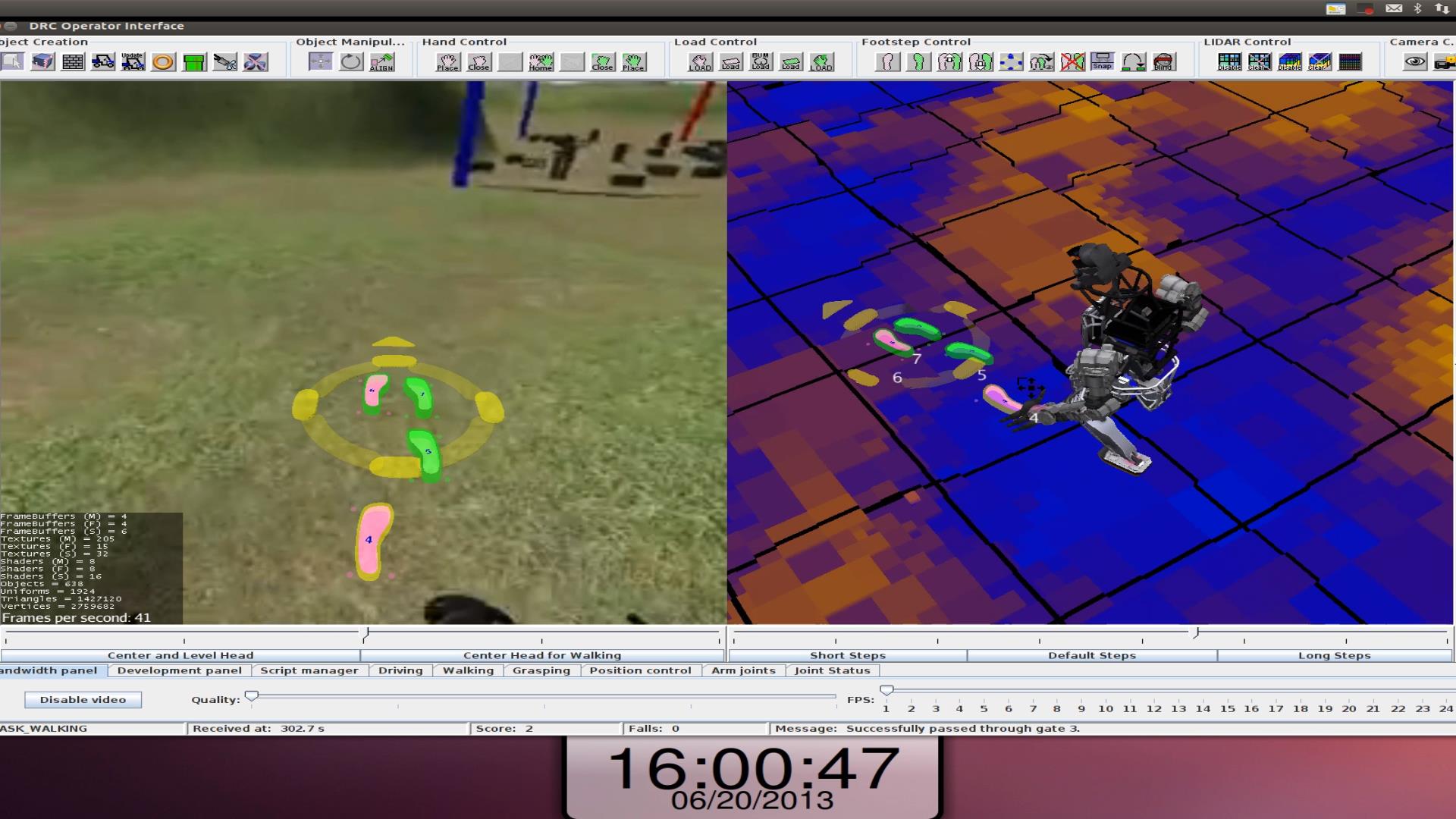

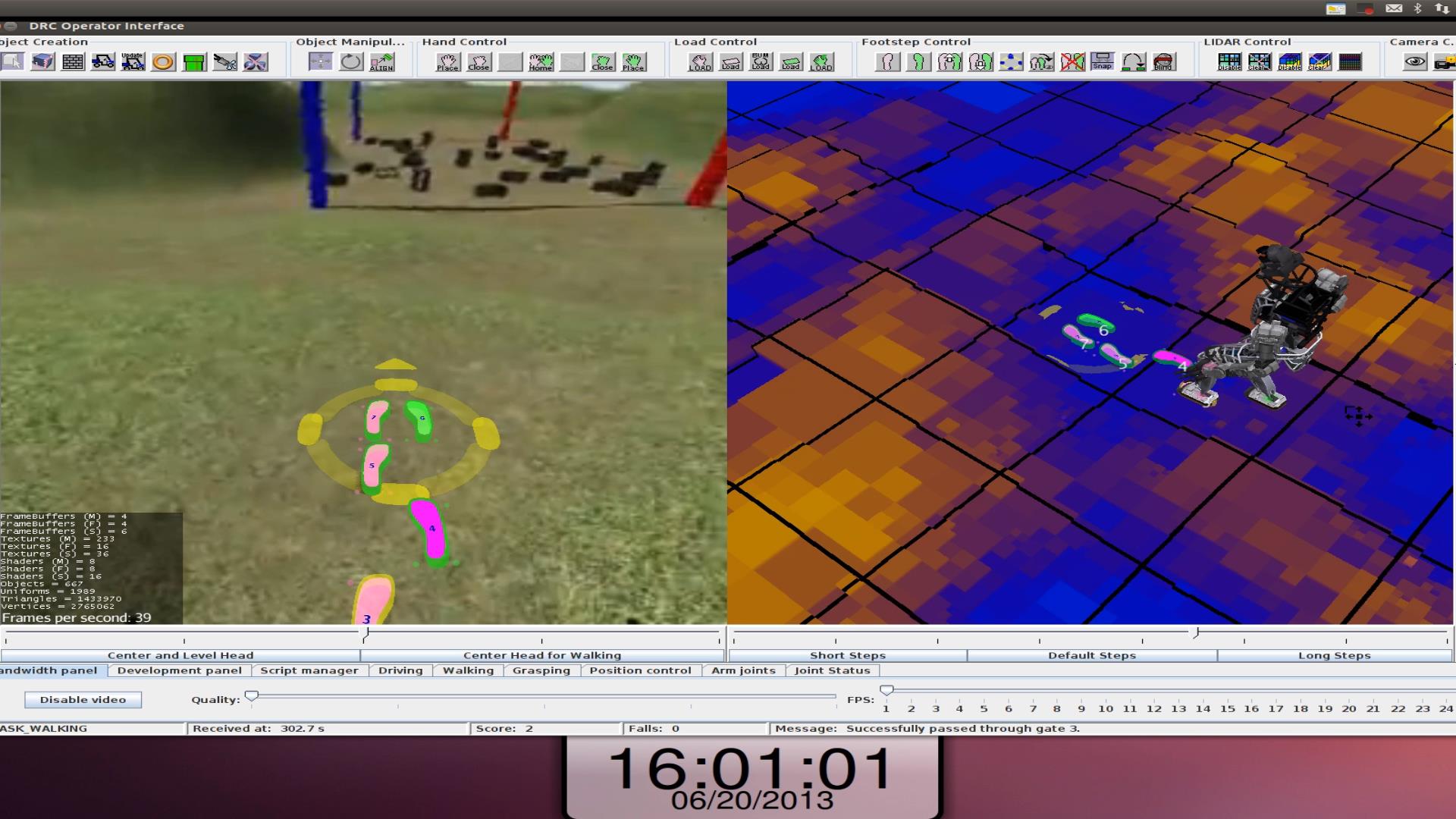

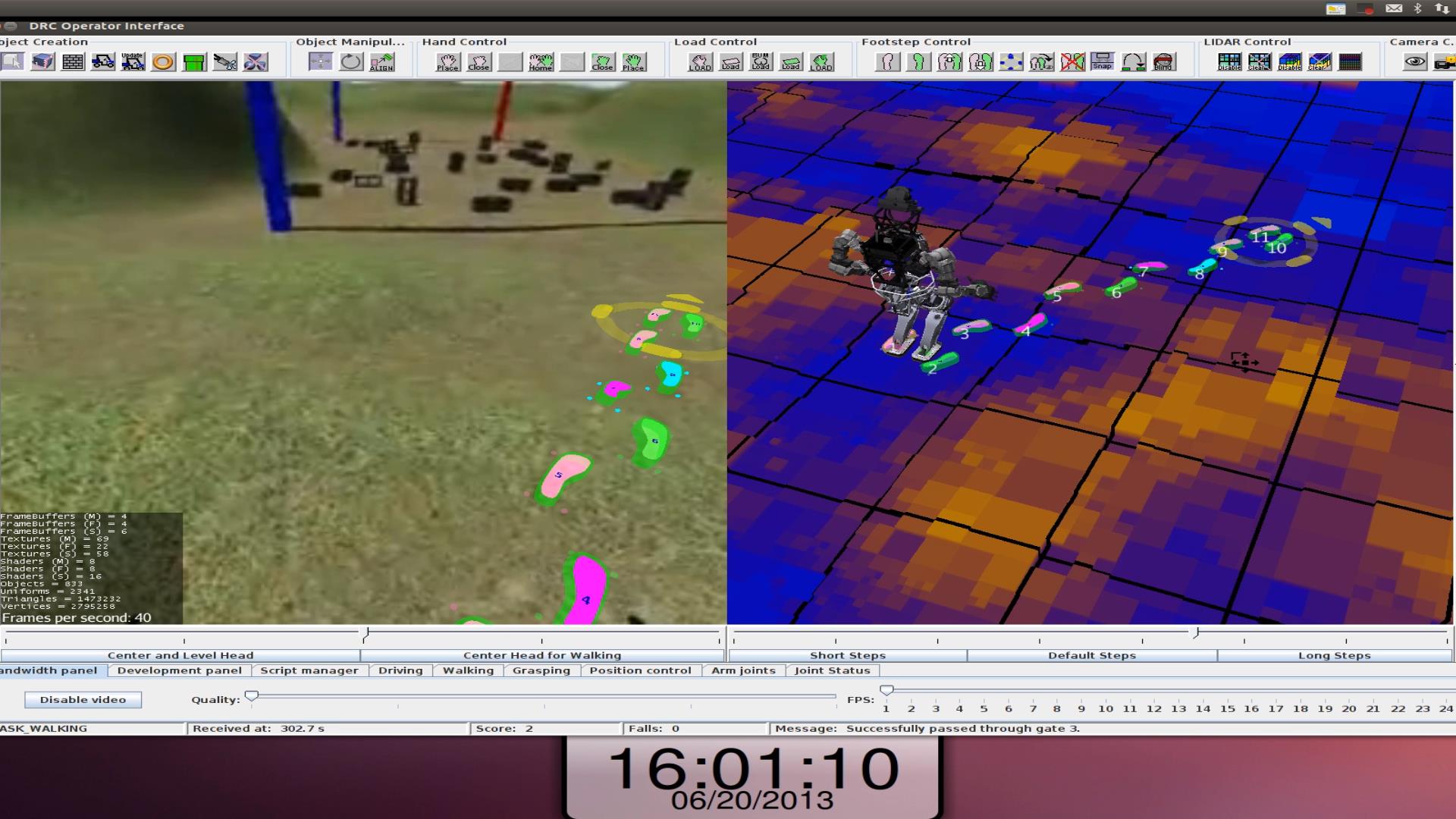

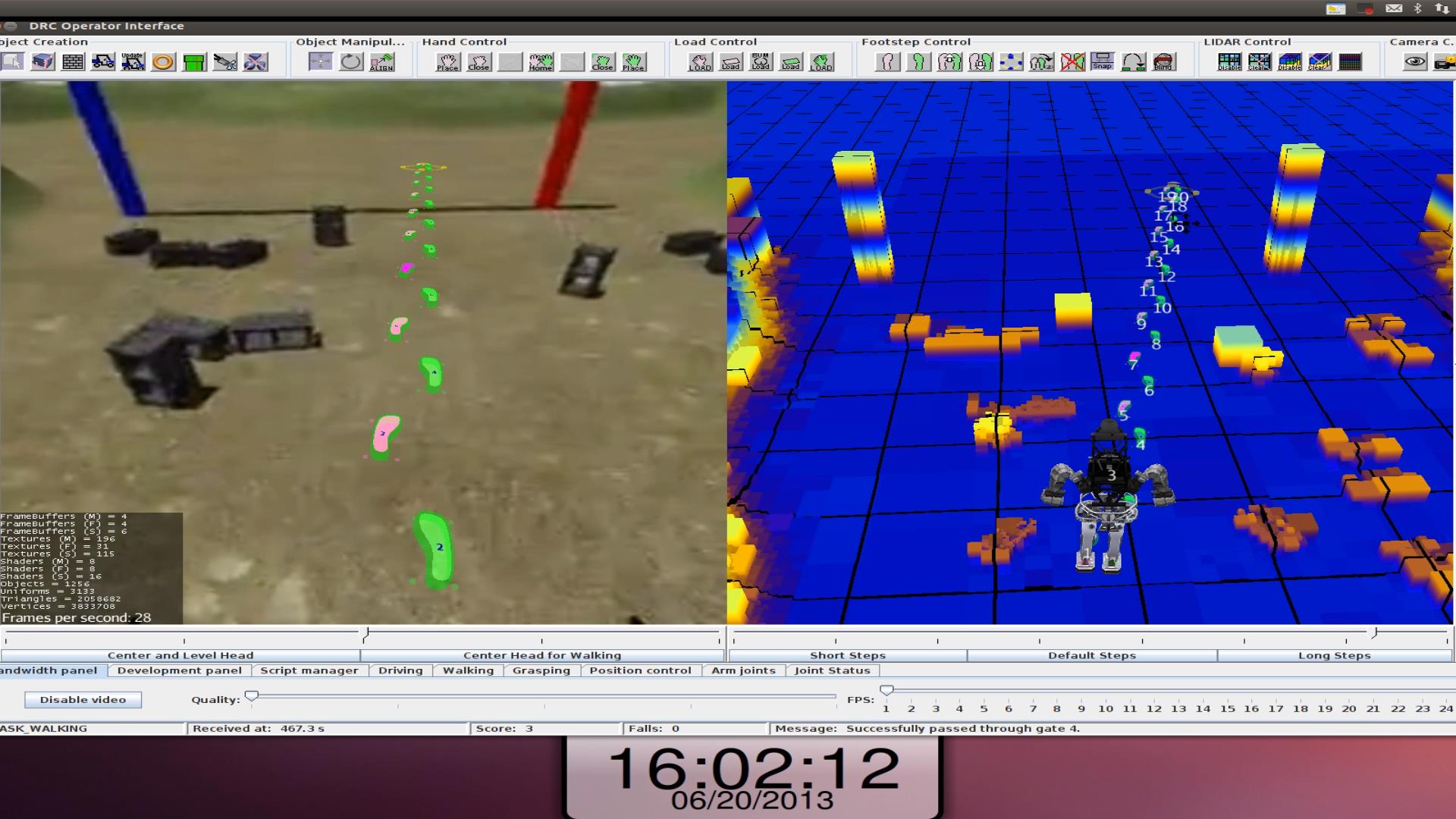

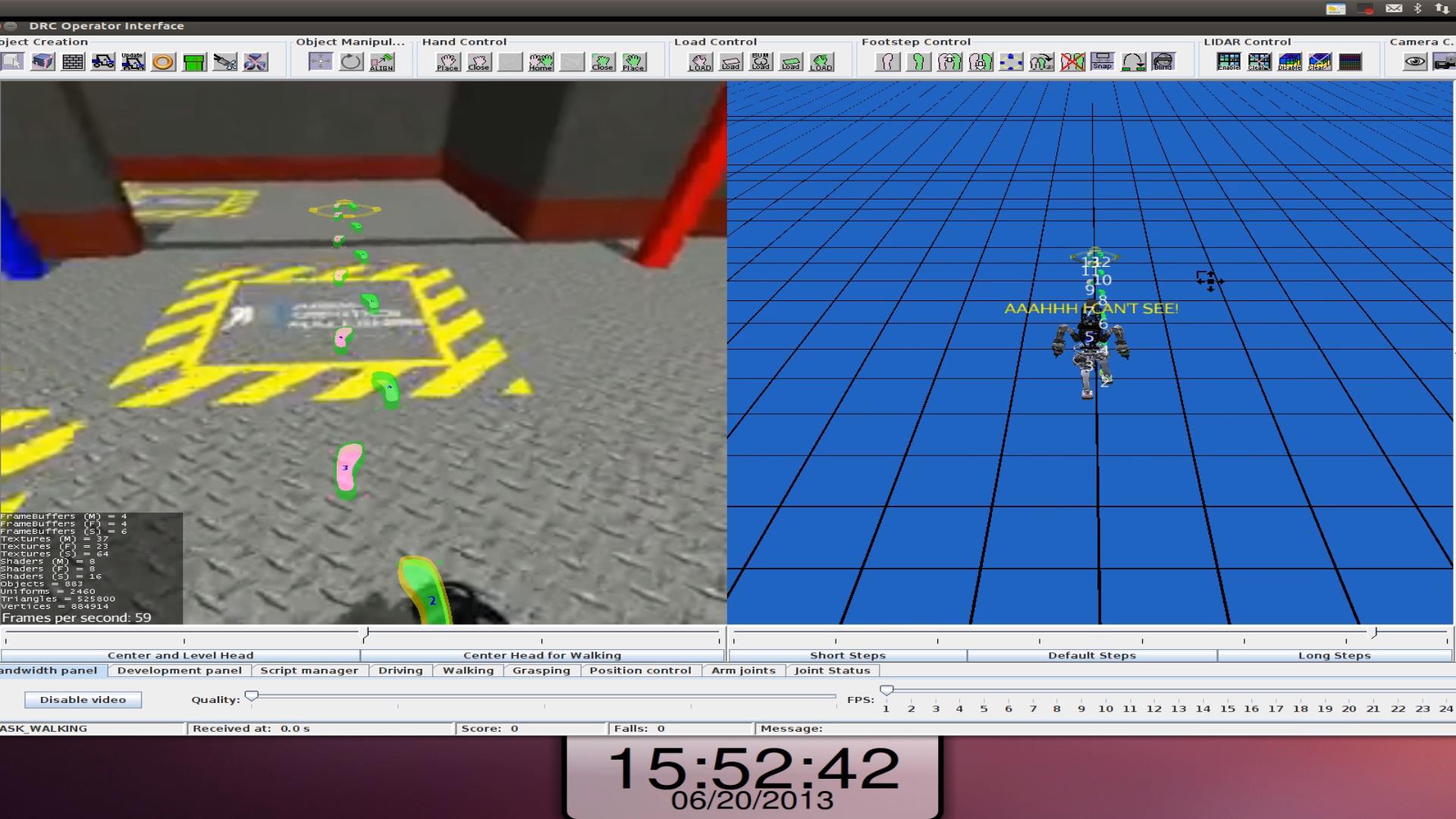

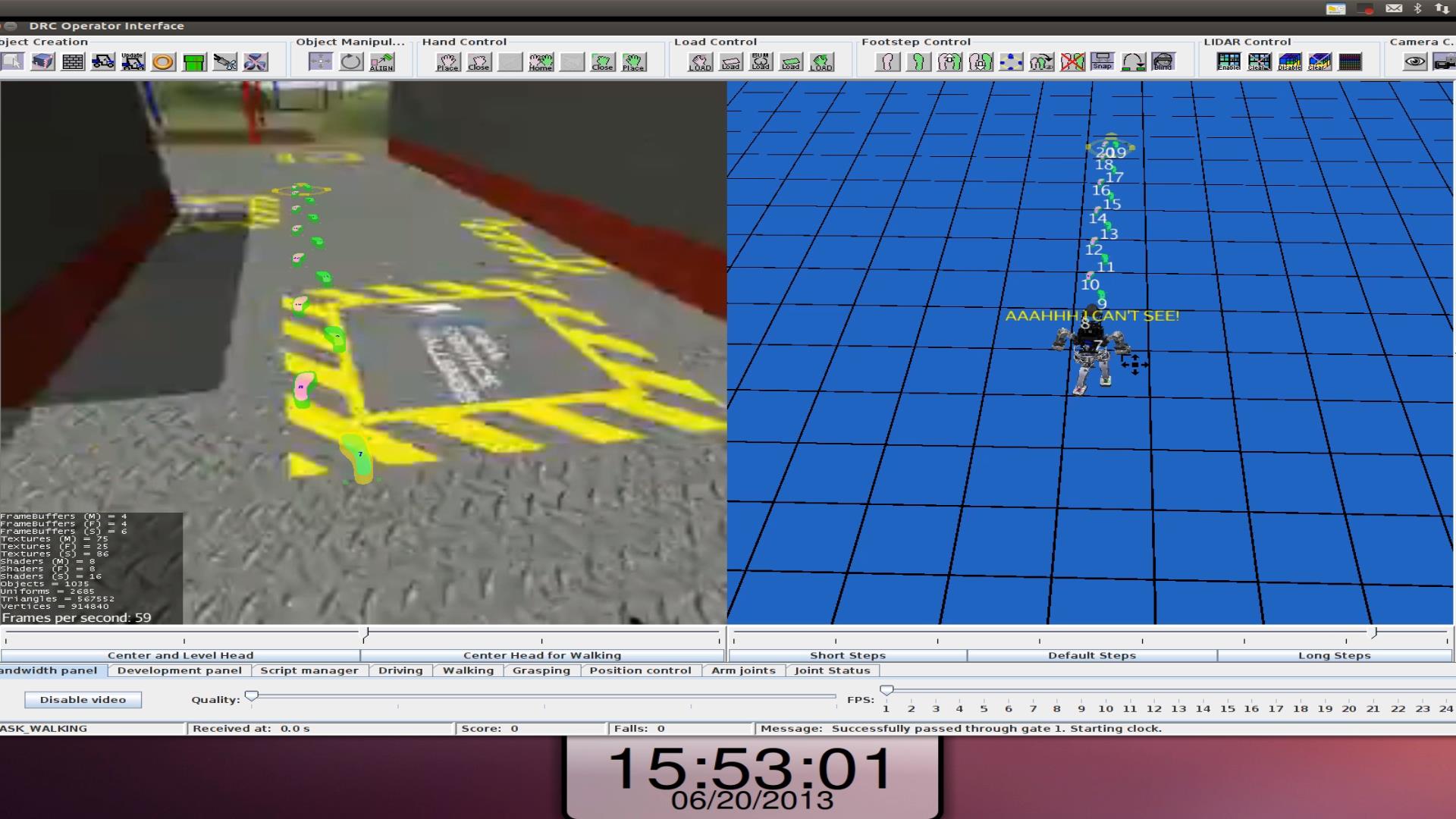

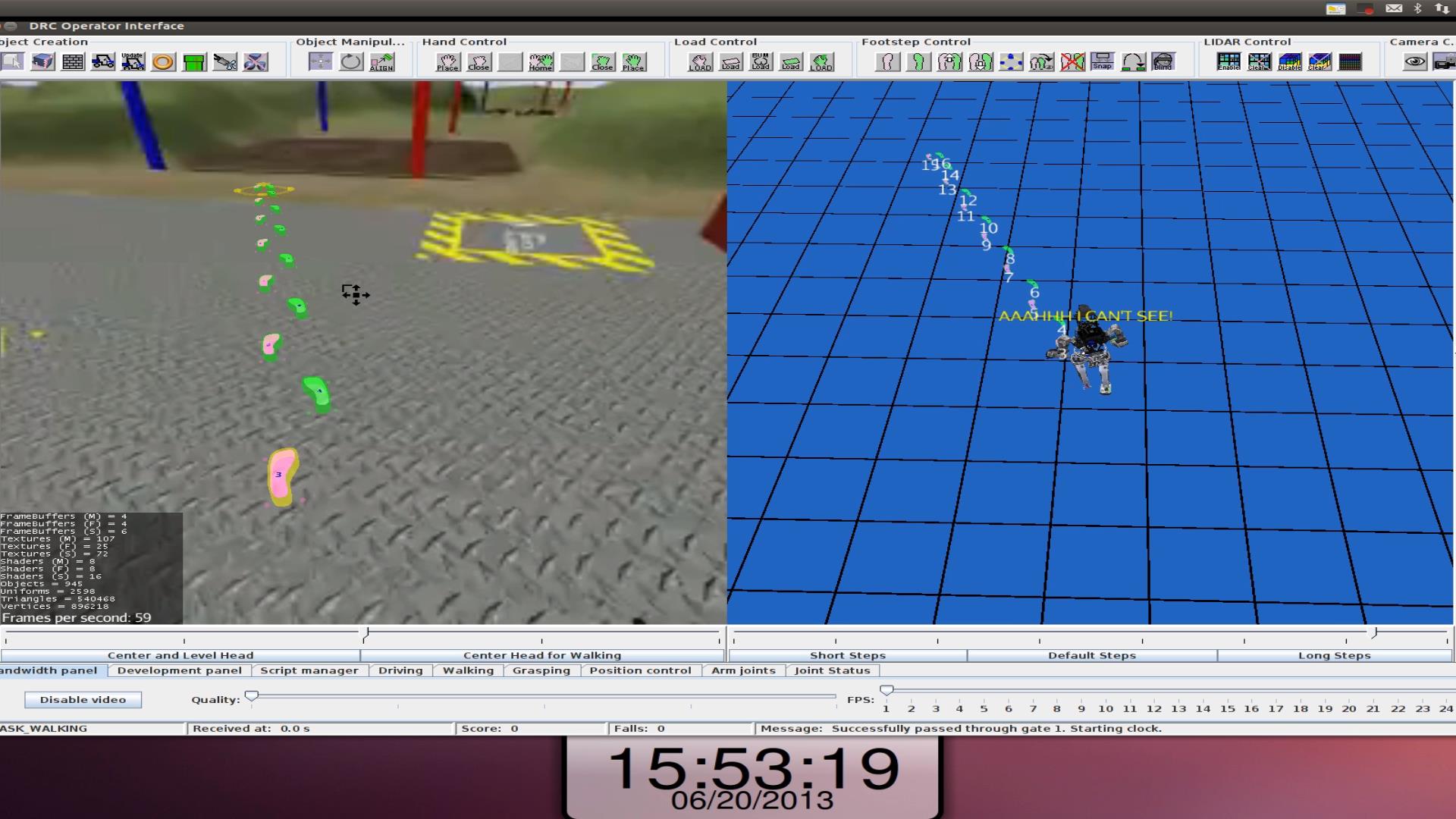

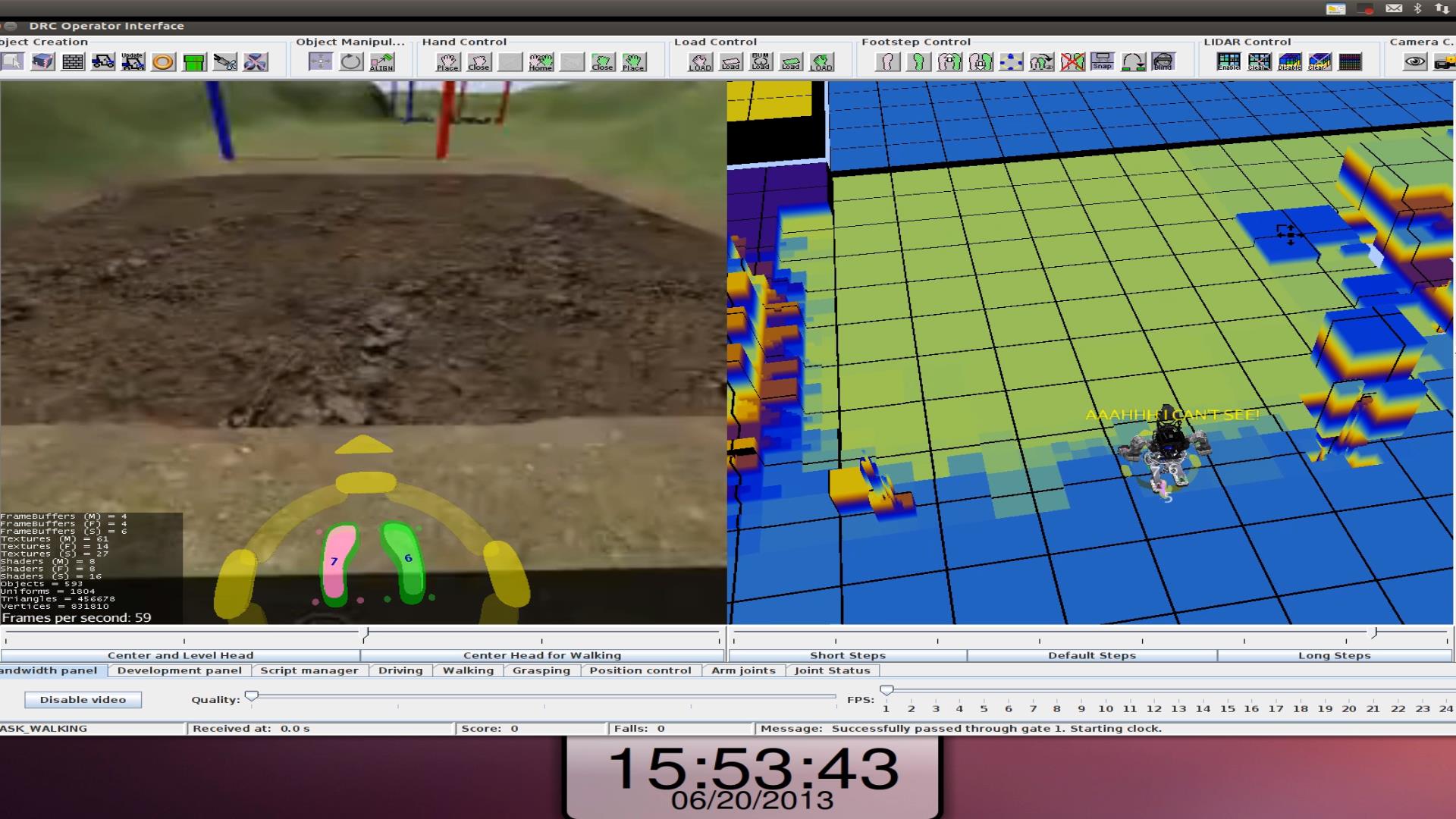

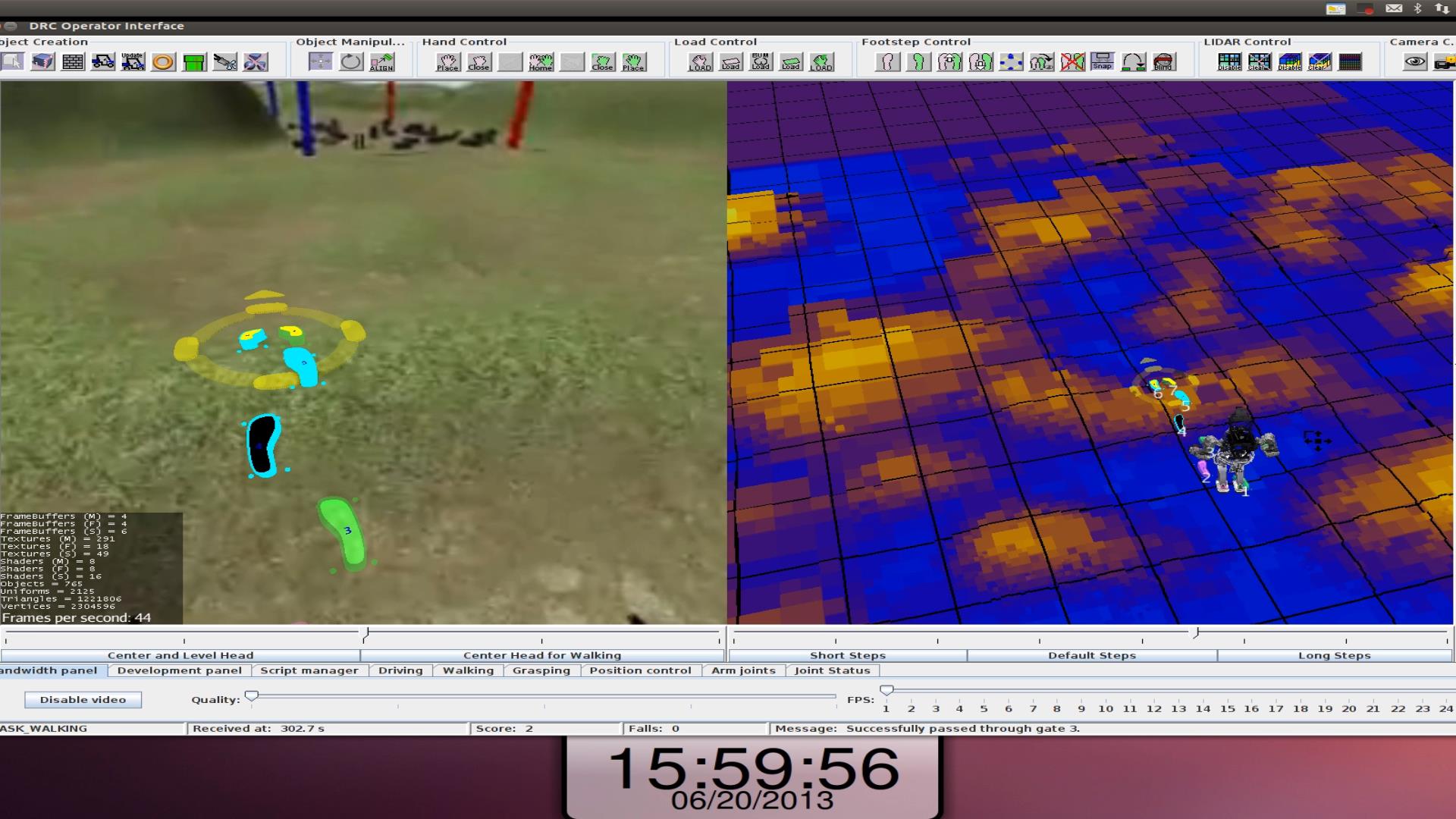

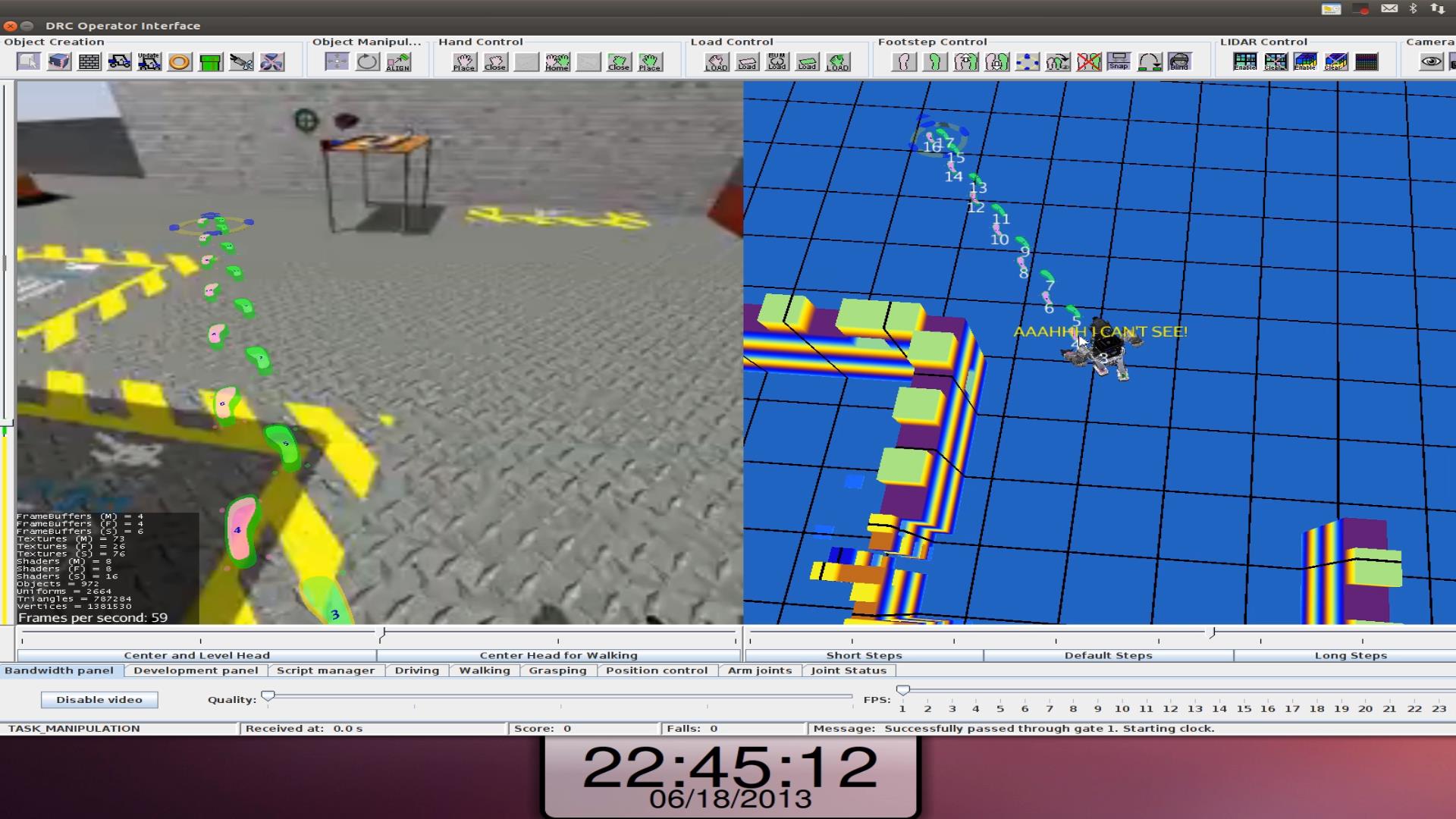

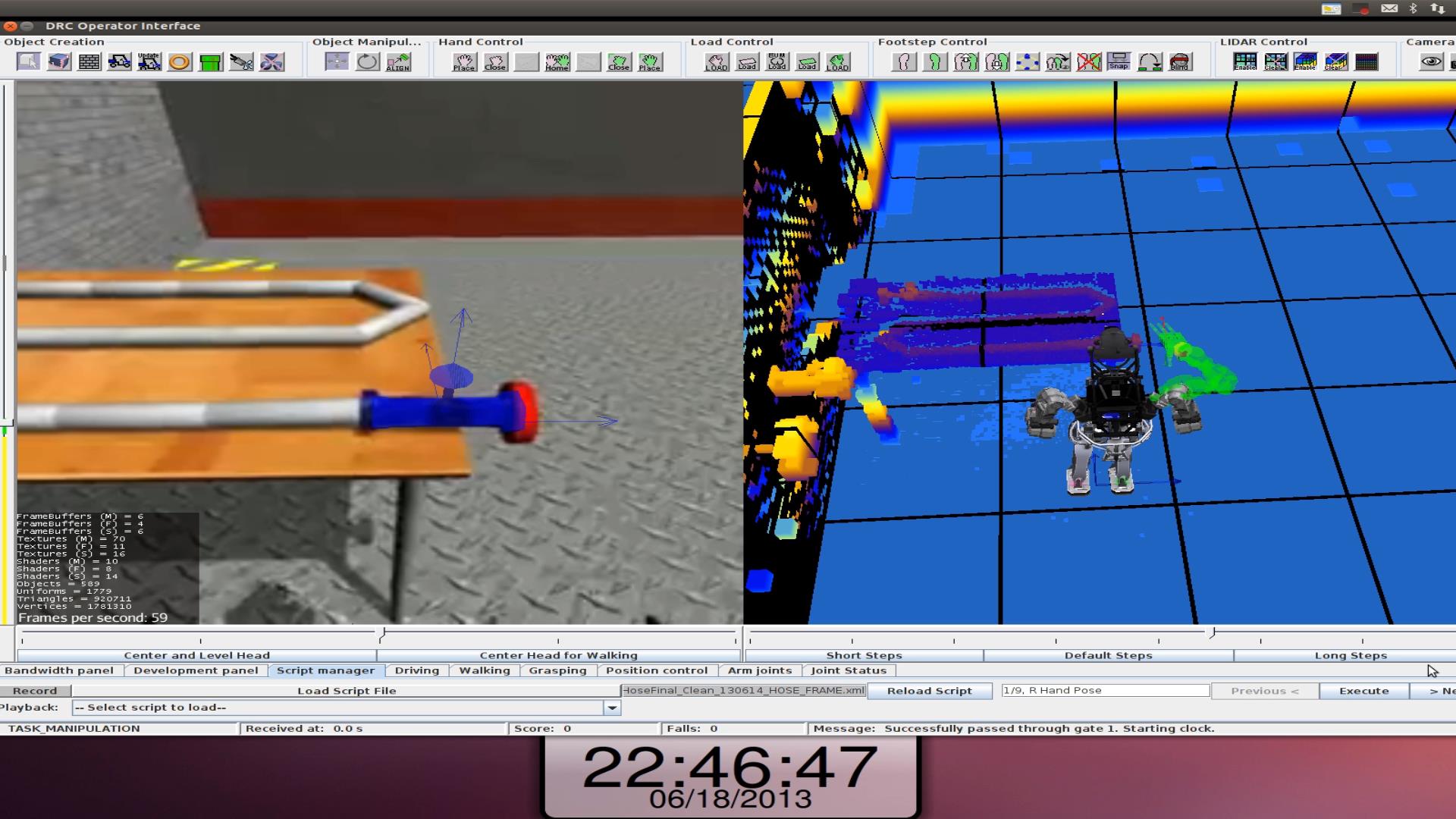

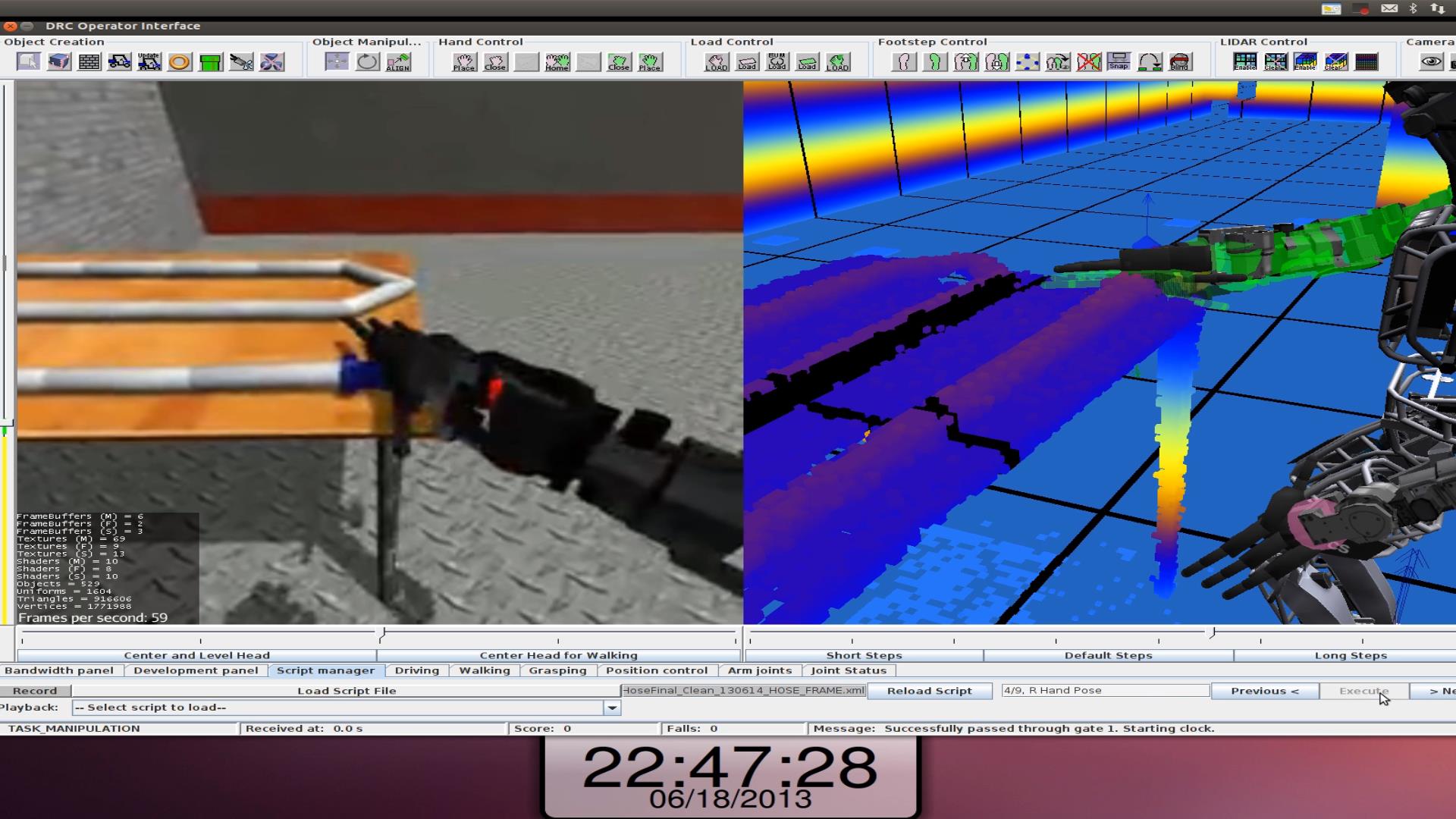

The operator interface was the only way the operator could give commands to the robot and see what the robot was doing. The left half of the interface shows the view from a camera on the robot. The right half is a 3D reconstruction of the world based on LIDAR and sensor feedback, which we could pan, zoom, and rotate to see what we needed. Objects could be overlaid on either view to represent actions the robot was planning to take, such as footsteps, or objects the robot needed to interact with, such as the car or hose.

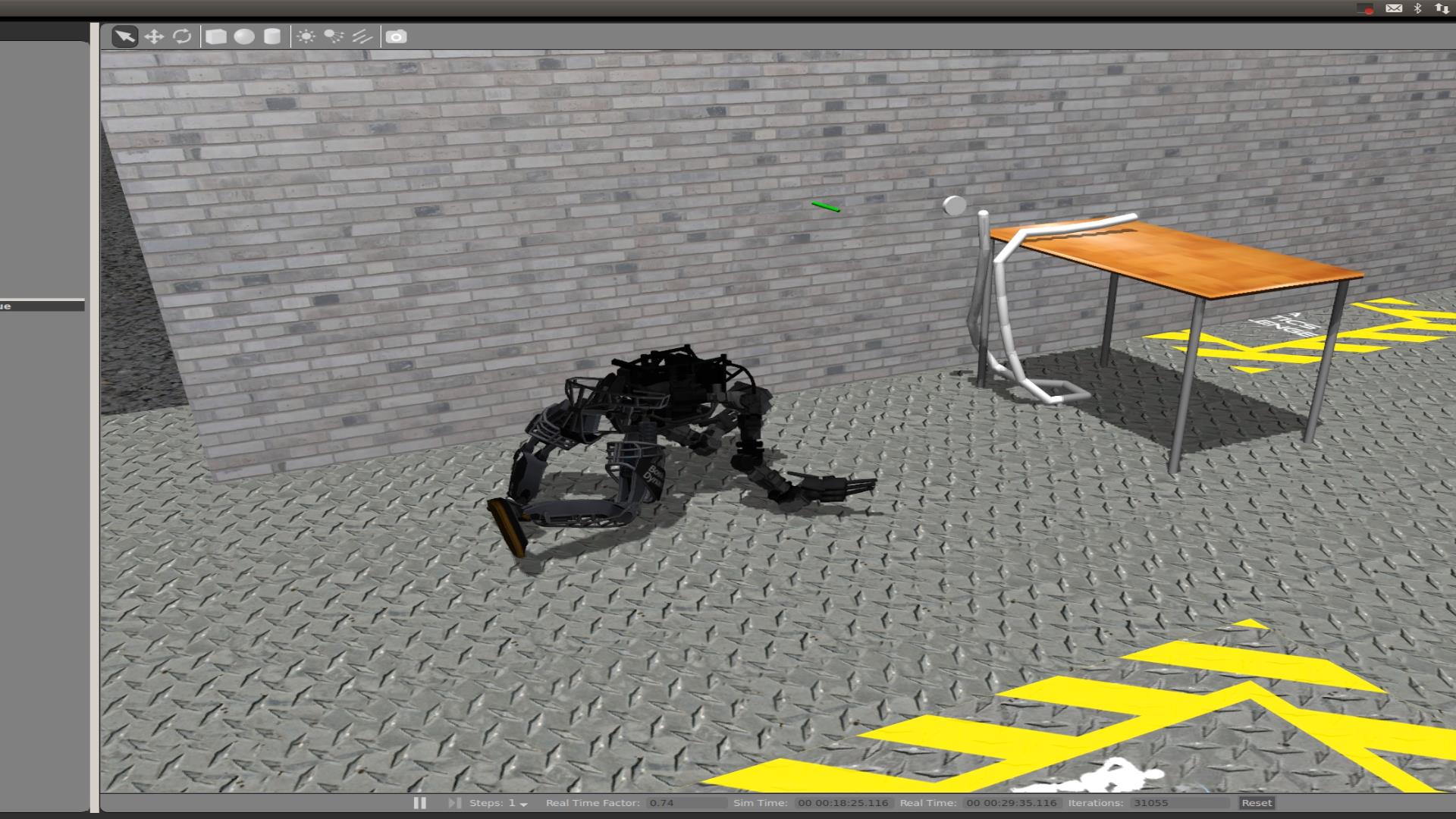

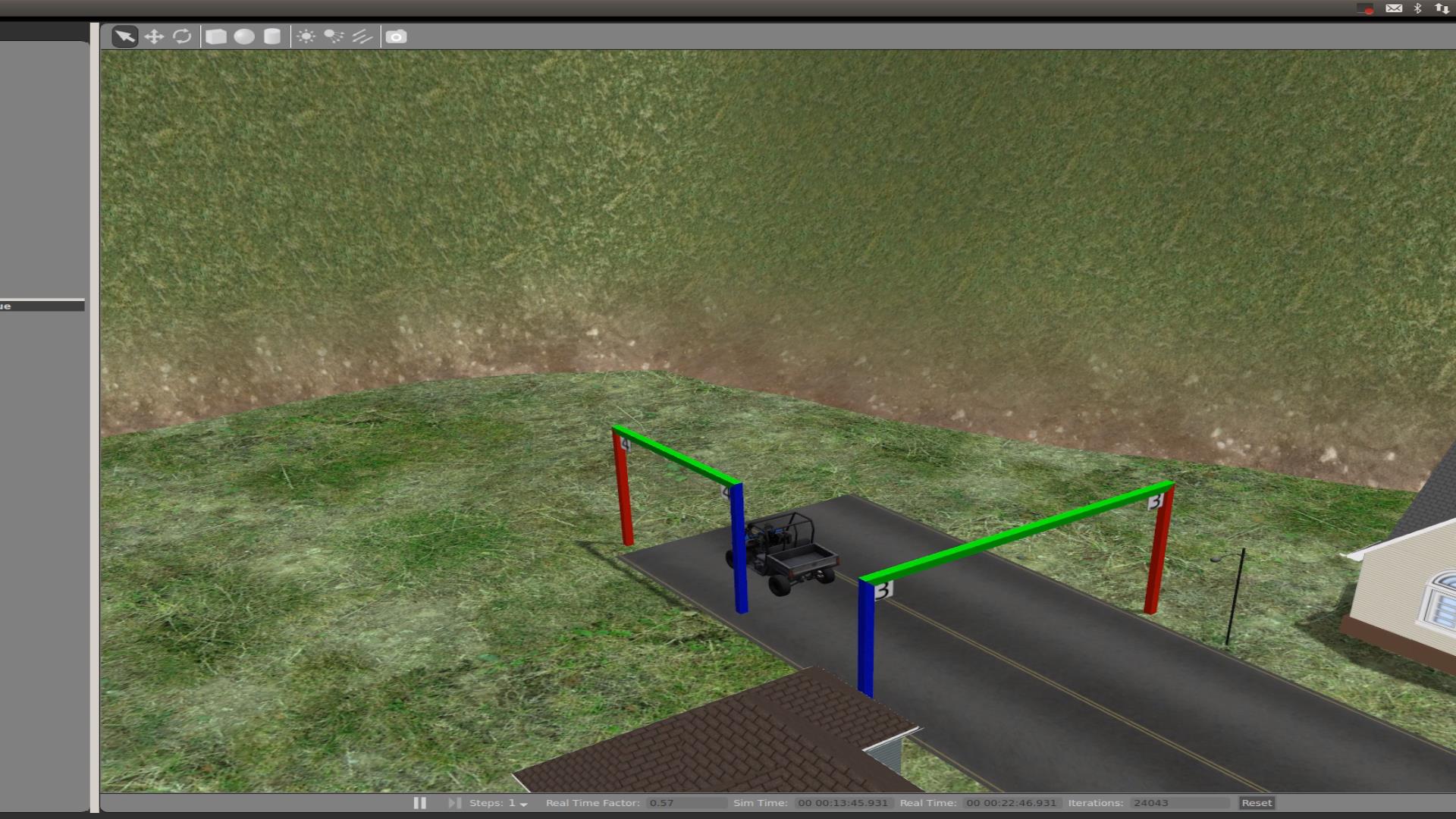

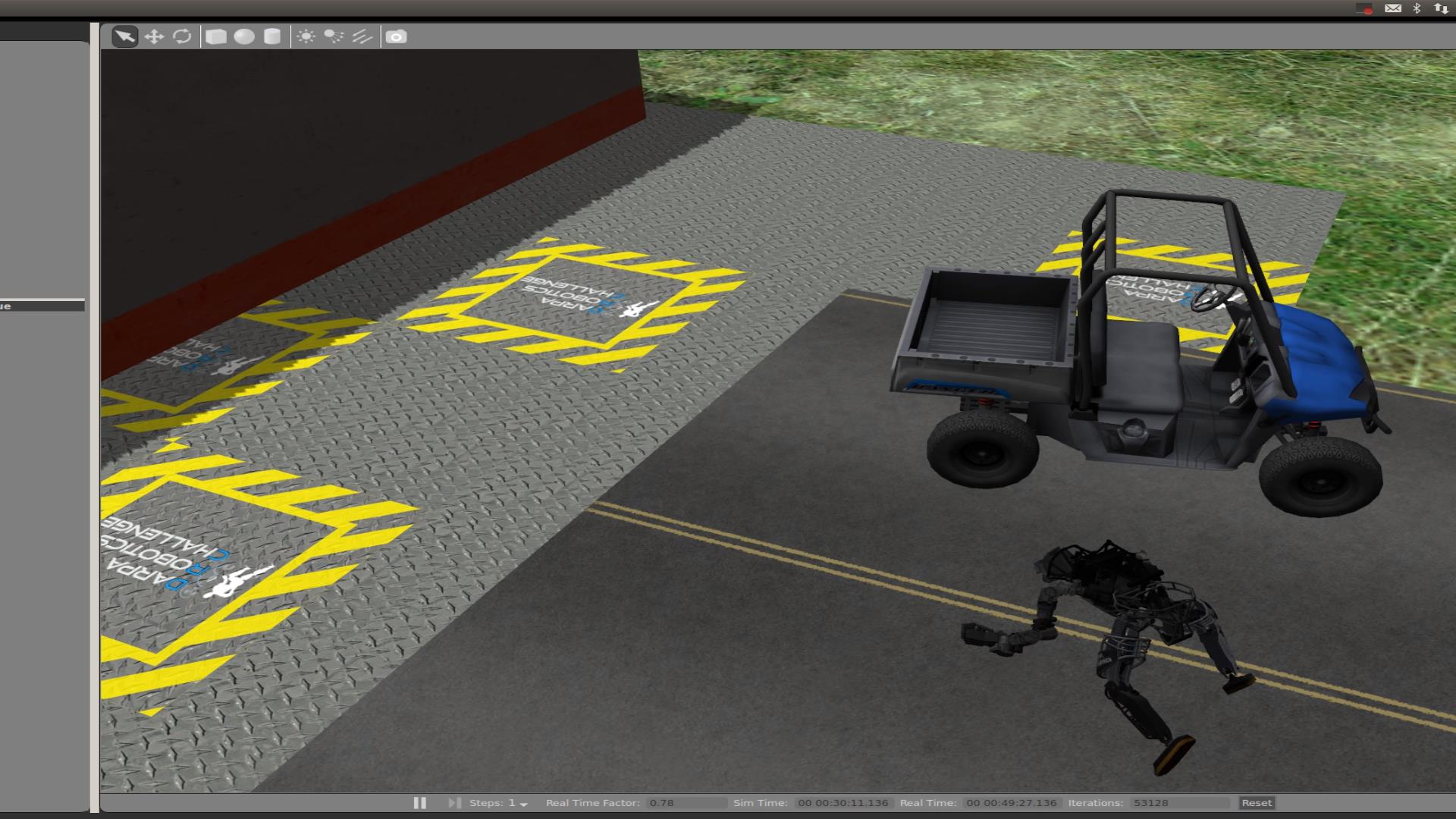

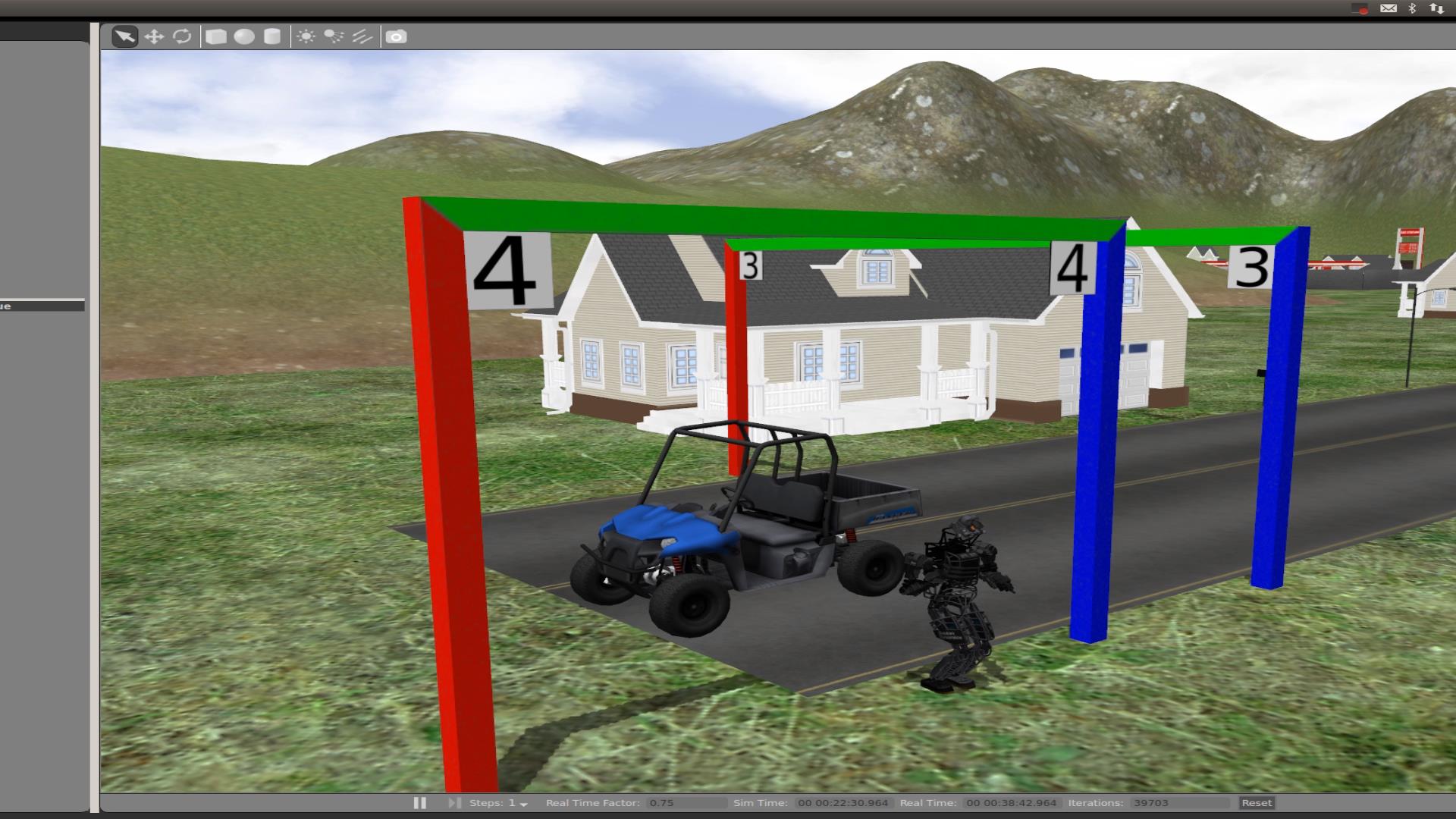

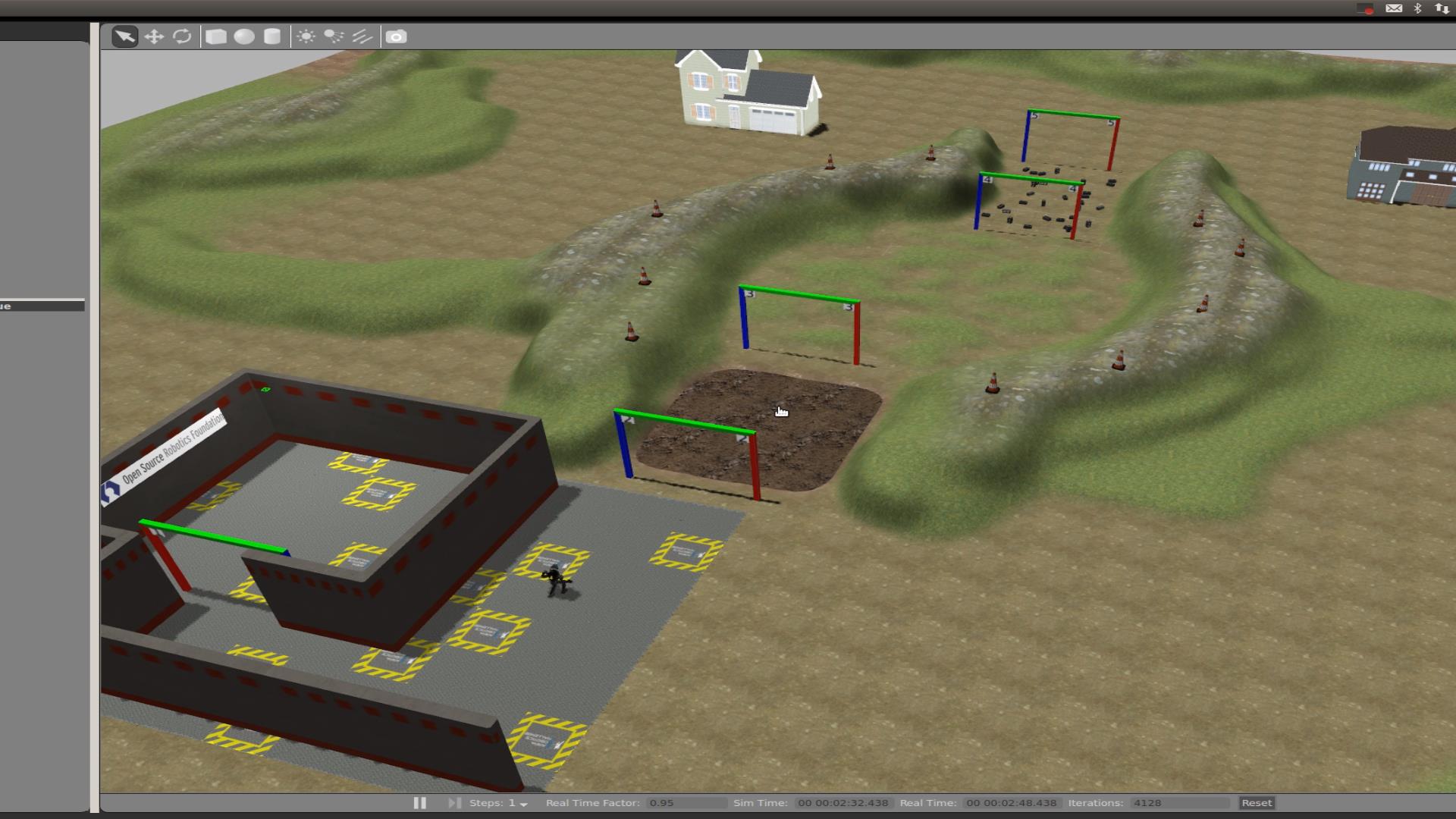

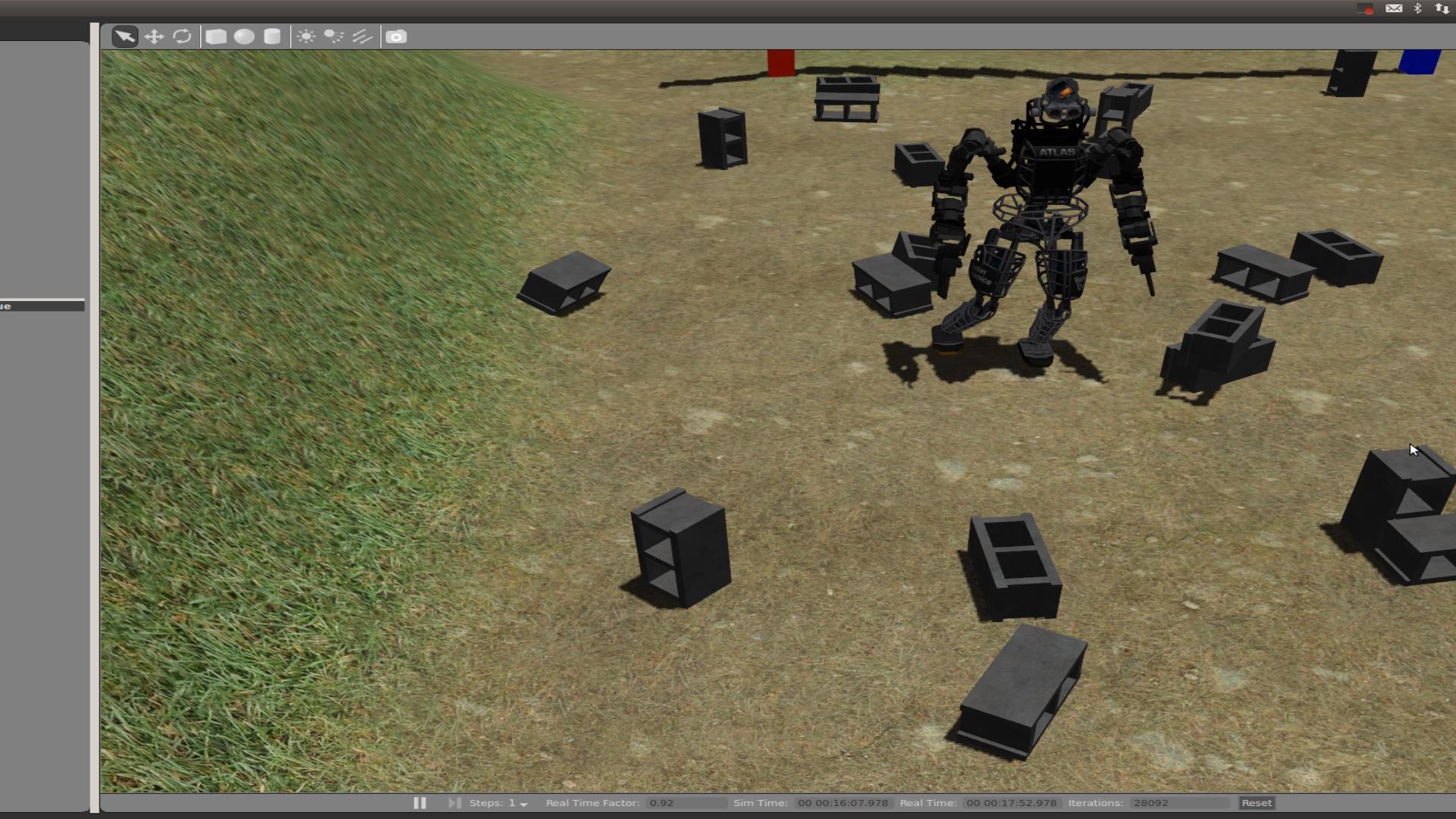

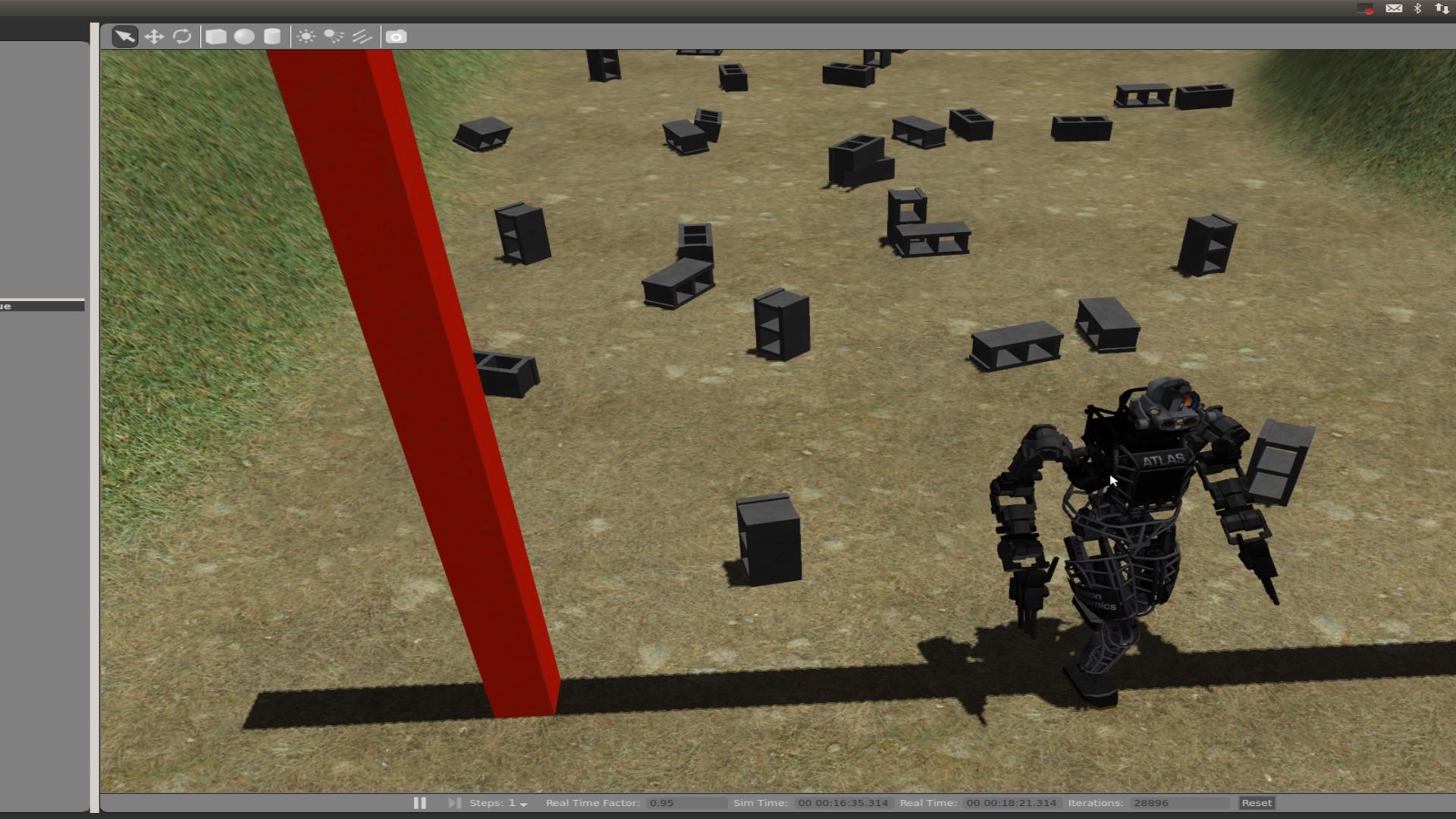

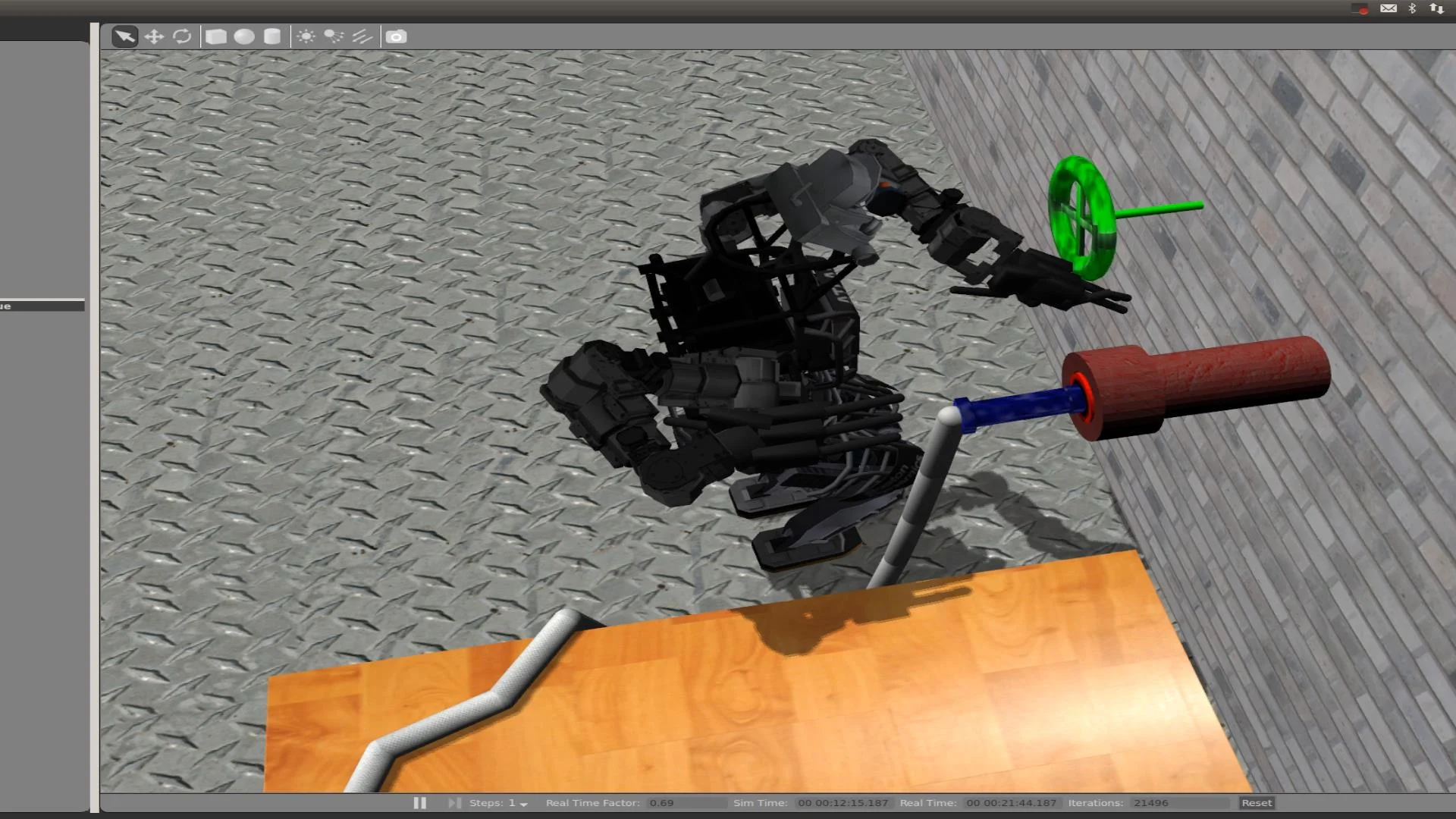

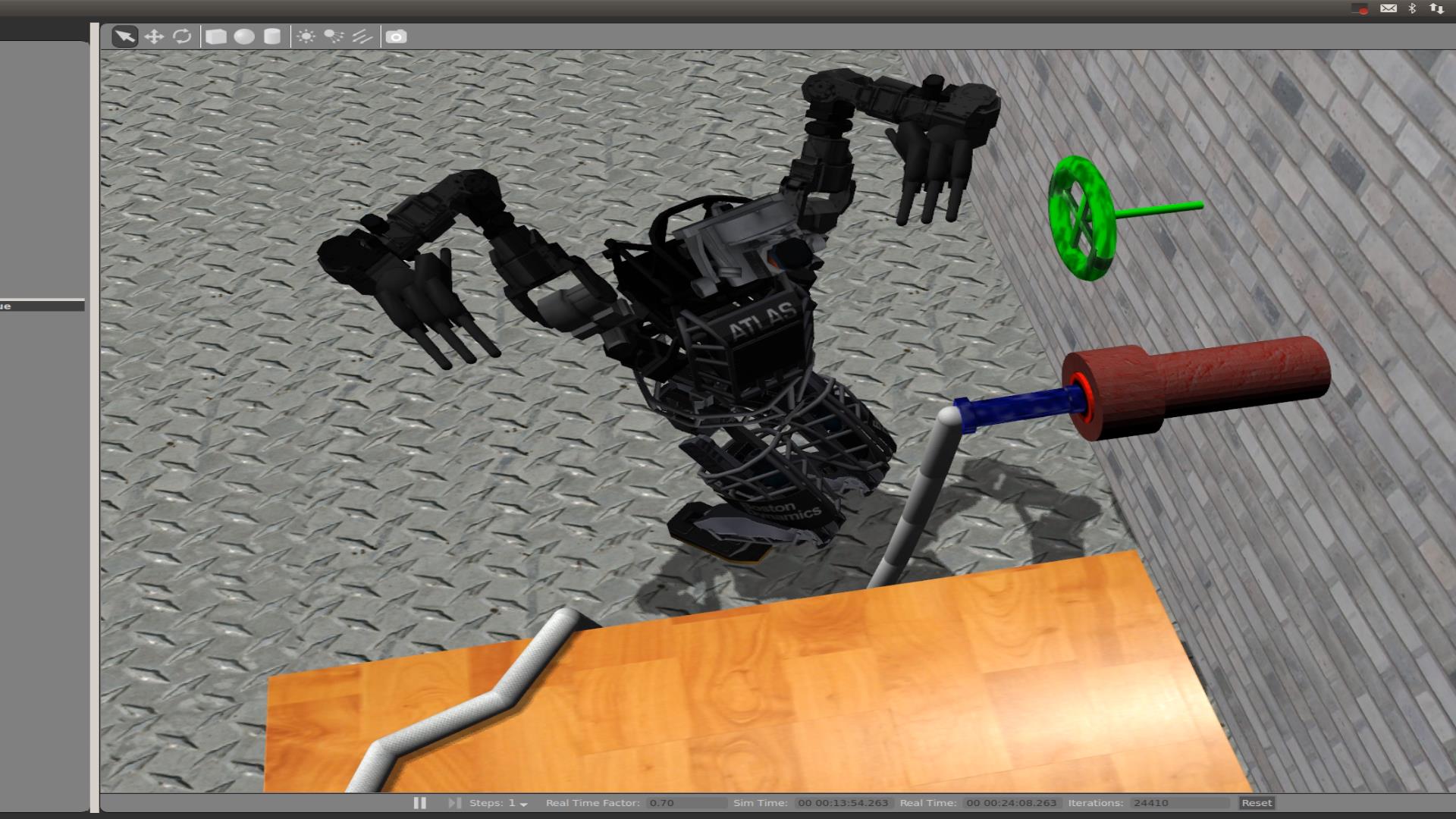

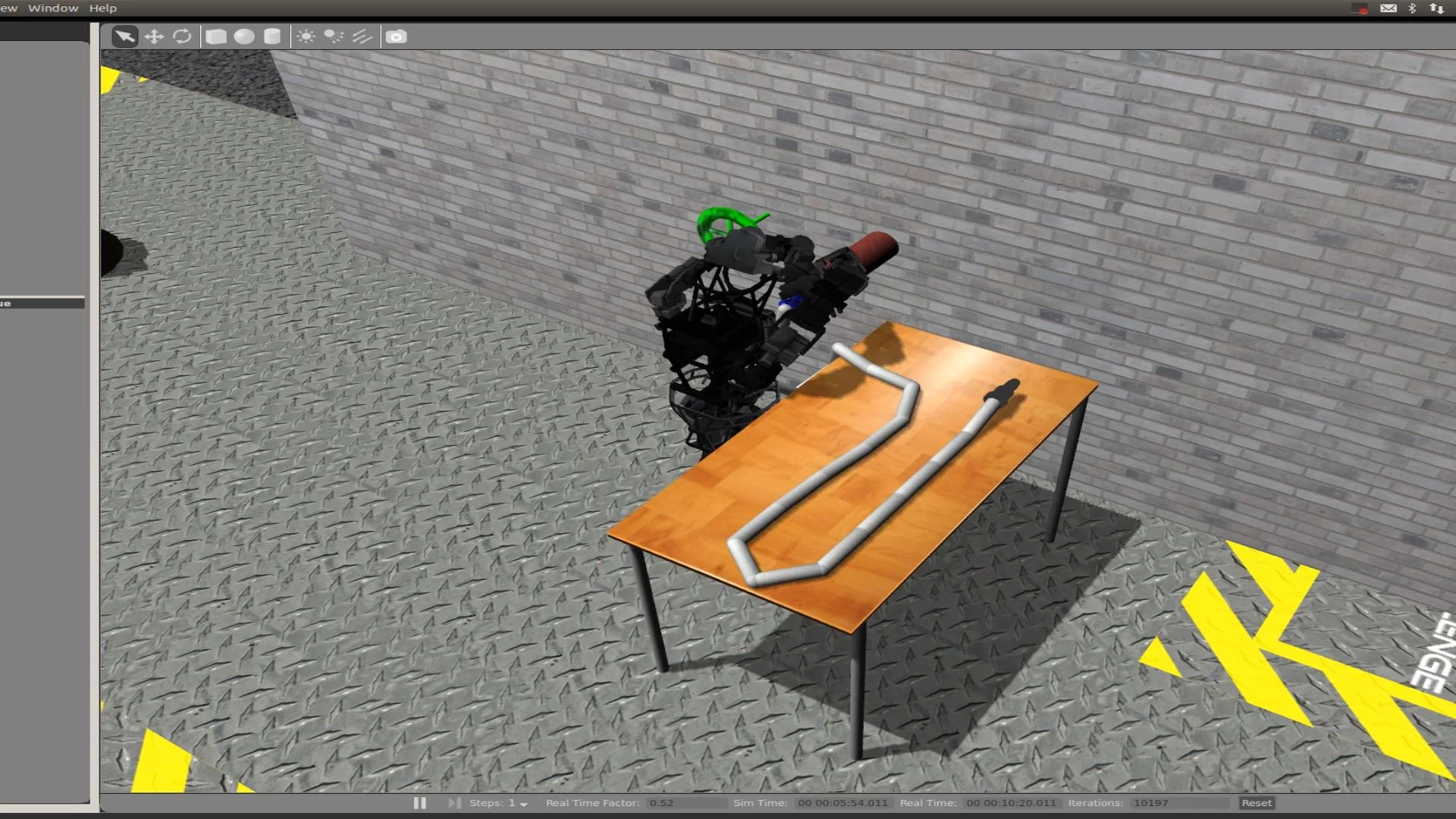

Gazebo Replays

Gazebo is the simulator used to model the physics and determine how the robot interacts with the world. During the competition, the operator had no way of seeing anything from Gazebo directly, but logs were created that allow us to review what happened afterwards. The logs can be found here.

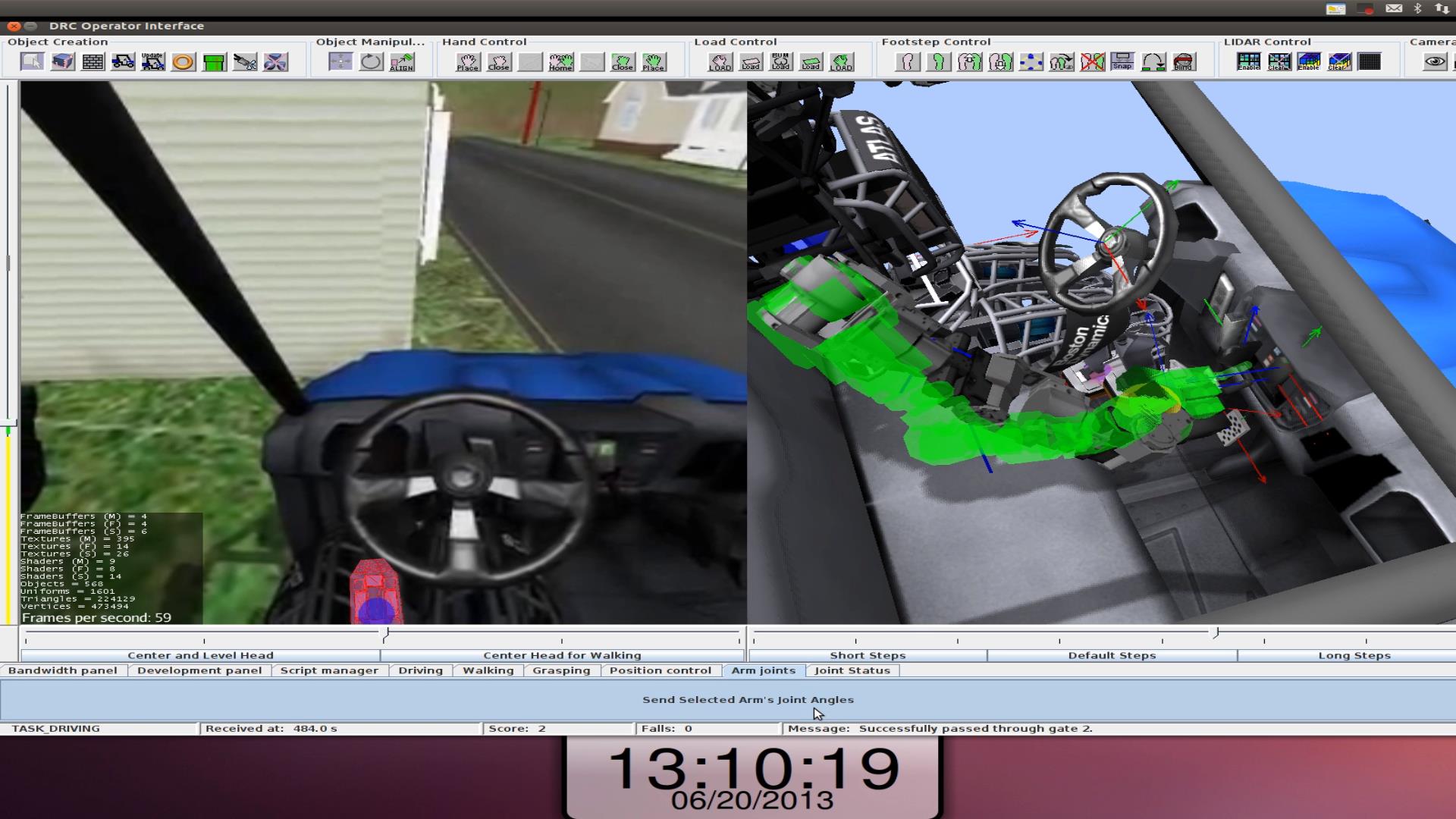

Driving

Driving was the most difficult task. Points were given for 1) getting into the car, 2) driving through the first gate, just in front of where the car started, 3) driving through the gate at the end of the road, and 4) exiting the vehicle and passing through the last gate. On our first two car runs we were only able to get into the car (and in one of those runs we got in twice). On the third run we were able to get through the gate. Finally, we completed the whole task on the last two car runs.

Getting into the car required a complex sequence of motions designed to work around the robot's mobility limits: in some cases joints could not move in the direction we would have liked, and in others a joint reached its physical limit, where our controller tended to lock it from moving at all. Knowing where the car was relative to the robot was critical for supporting the robot properly and getting a secure grip on the car. In the tight space it was also important to keep every part of the robot, not just the hands and feet, from colliding with the car.

The transition from getting into the car to actually driving was a critical point. For the most part we had to avoid sitting on, or even touching, the seat because of bugs in Gazebo that passed on aliased velocity signals and caused our controller to consistently fight against a non-existent rotational hip velocity. Another difficulty was disengaging the parking brake. During our second car run we slipped off the parking brake without realizing it, just enough to keep it from fully disengaging, and also put the car into reverse.

While driving we stayed off the seat and supported the weight of the robot by keeping a grip on the roll cage above us and pushing the free foot into the floor of the car. We tried to use only the gas pedal and coast, so that we didn't have to move the foot back and forth between the brake and gas pedals, which risked missing a pedal if our alignment with the car drifted. For the same reason we never let go of the steering wheel. This gave us wide but controllable turns. Right turns would rotate the robot a little harder into the gas pedal. On our third car run we couldn't quite complete a right turn that had a barrel placed on the inside corner and barriers on the outside. We ended up having to put the car into reverse to back up and try again.

Getting out of the car simply reversed the process of getting in. On the second-to-last run, as we were attempting to get out, we sank too low into the seat and the controller threw us out of the car, but through the gate. On the last run we got out cleanly, as intended.

Example driving sequence: Last car run

Problems in first car run

Problems in second car run

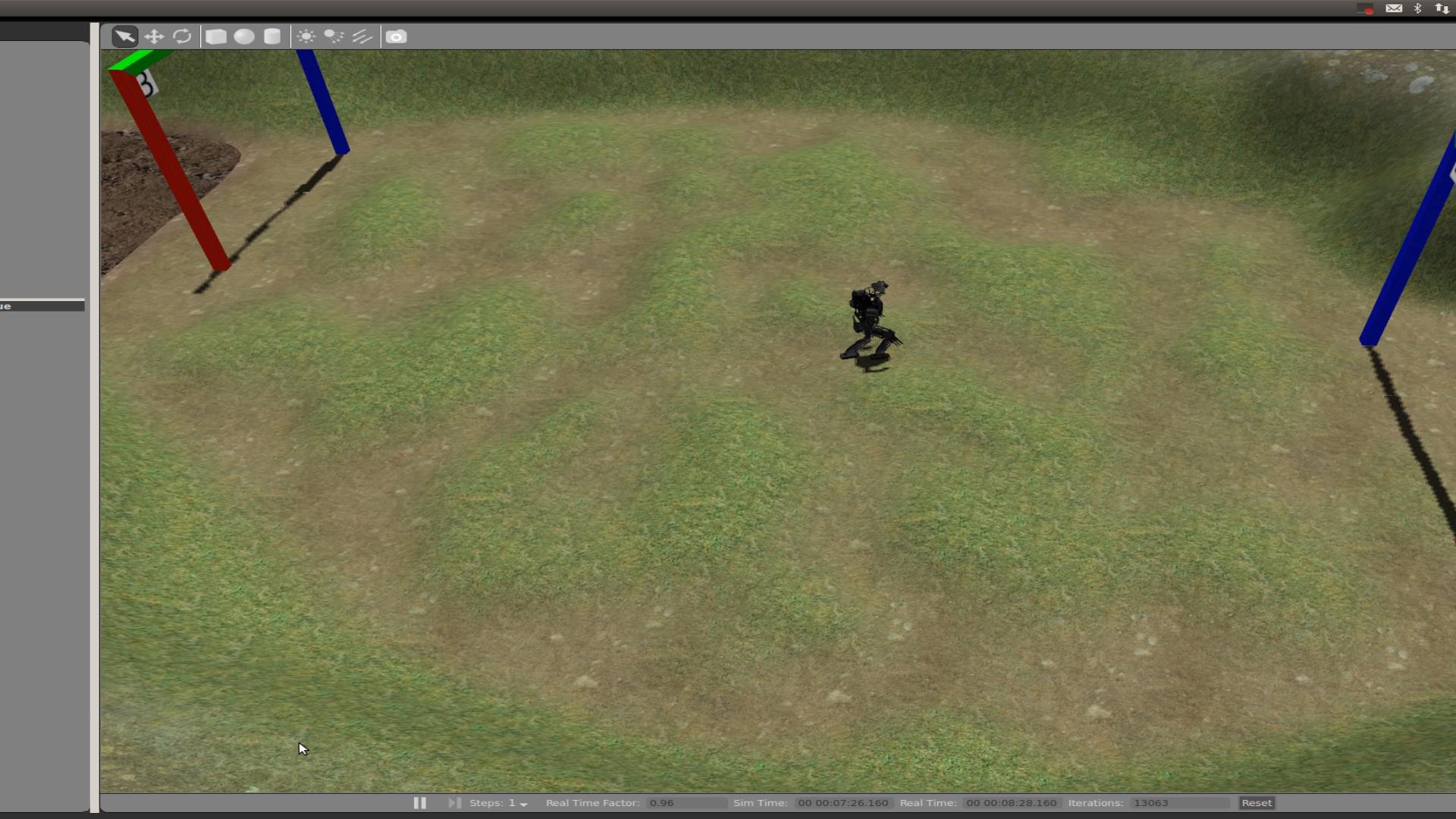

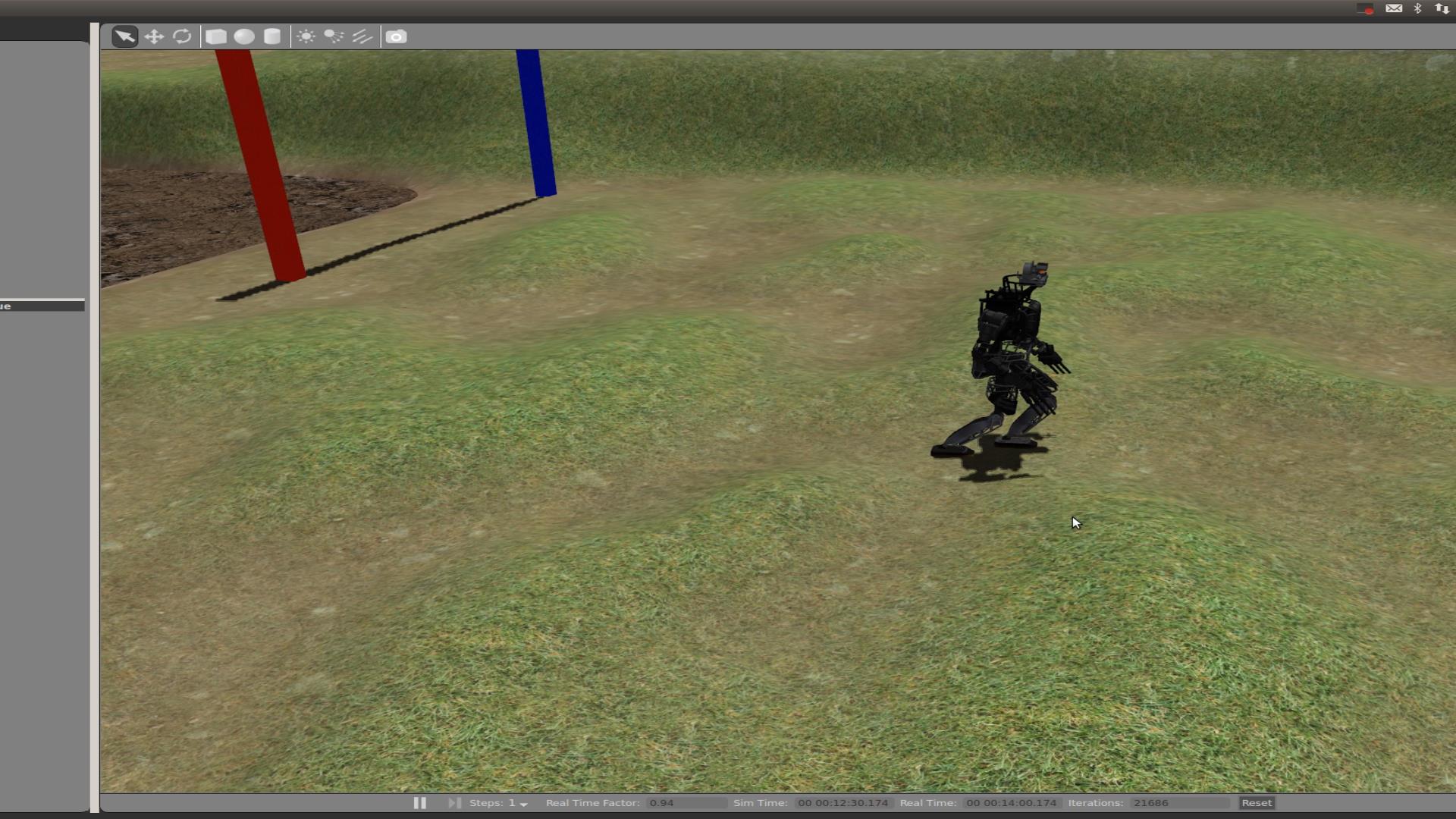

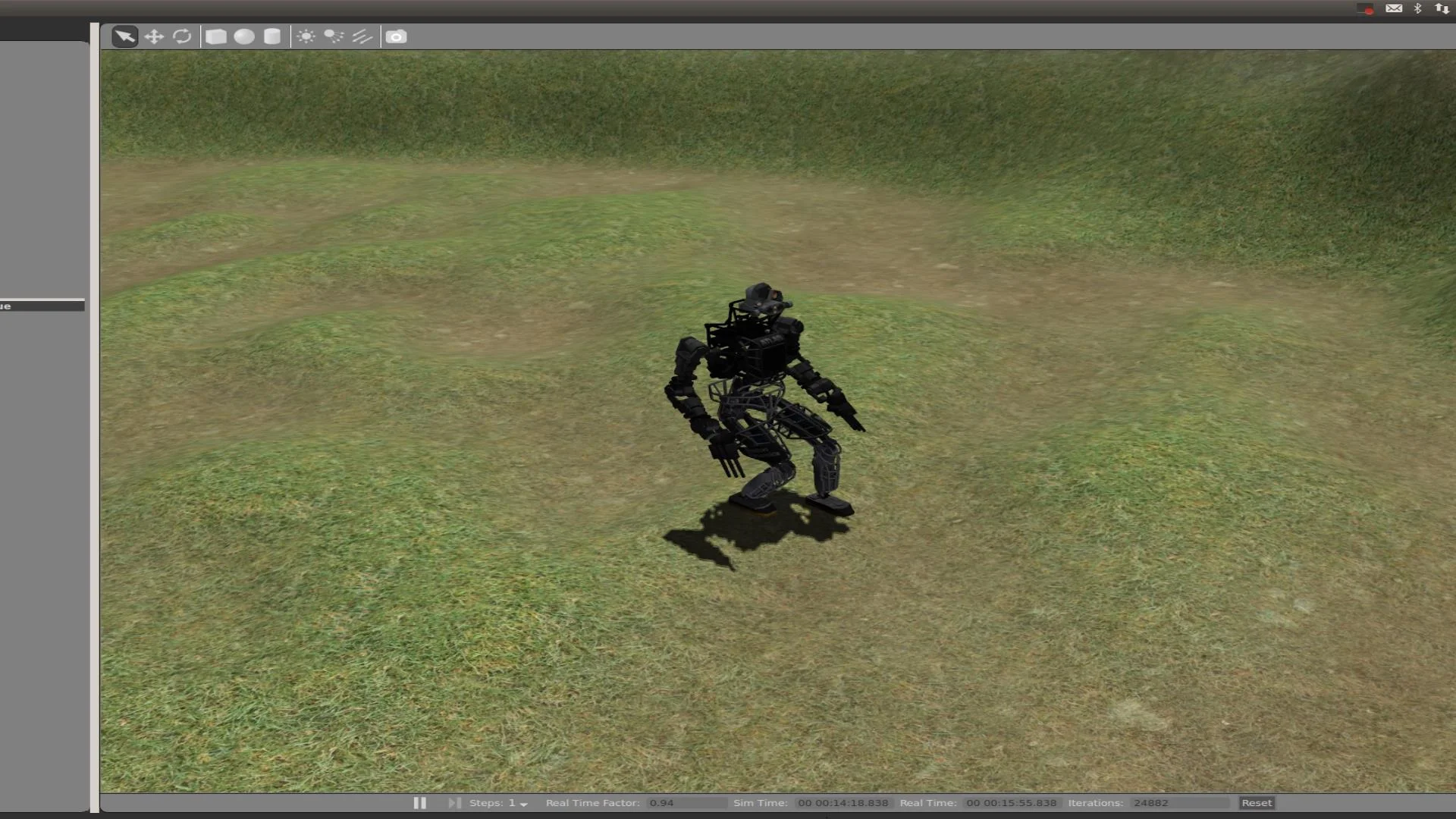

Walking

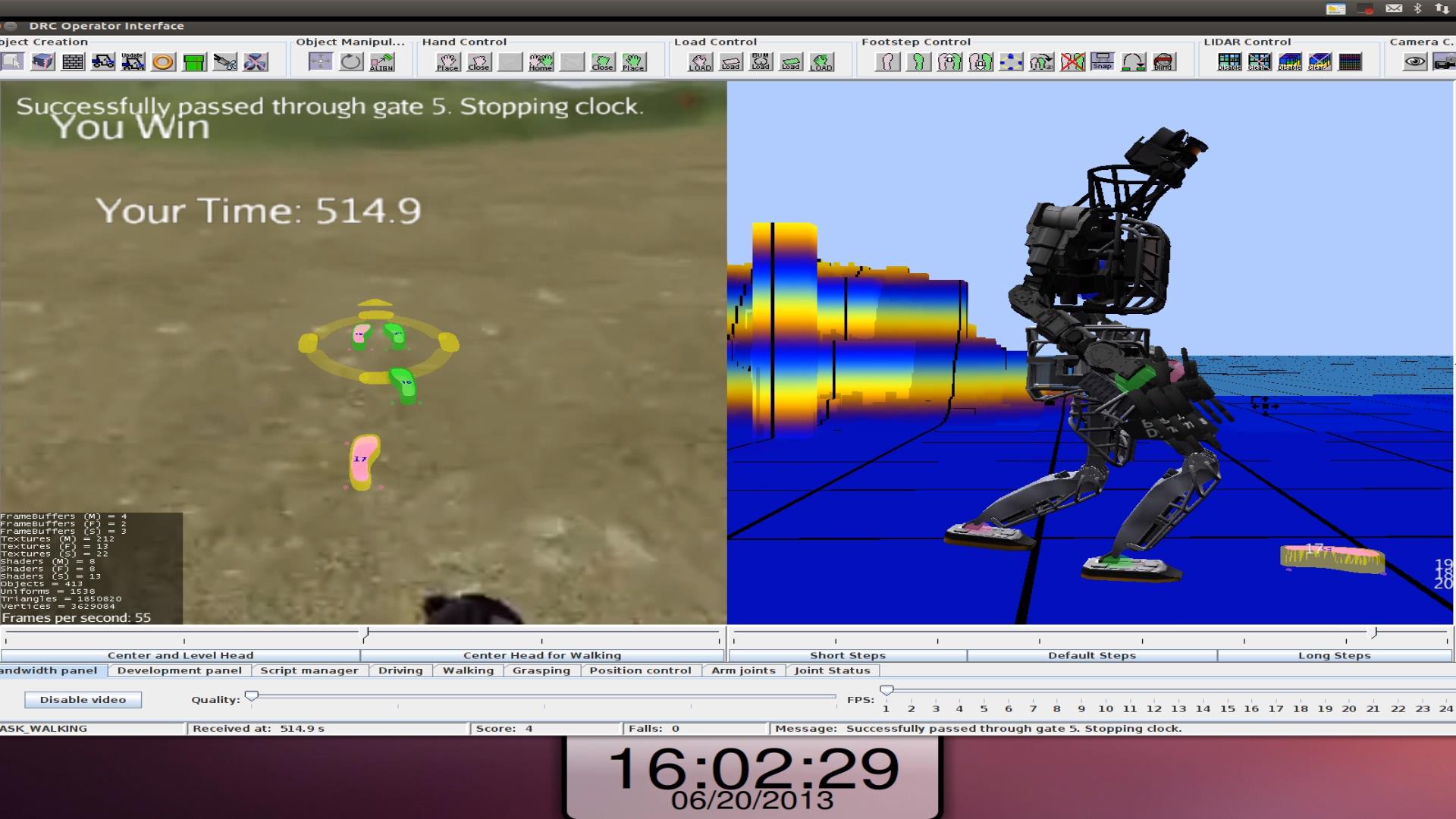

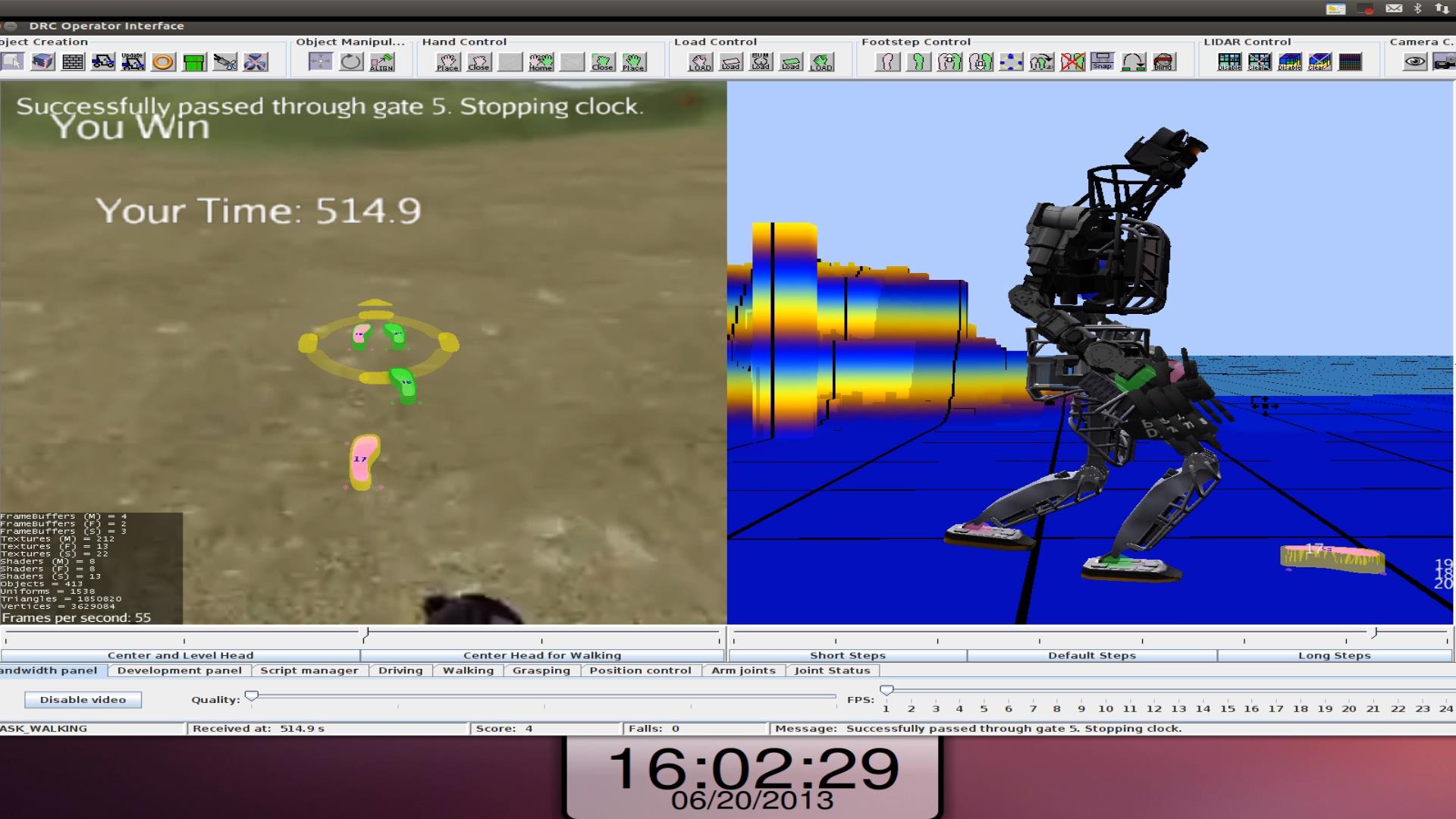

During walking, points were gained after walking through a gate after level terrain, then a mud pit, hills, and a field with many cinder blocks. Walking was the easiest task and we earned all walking points. With a few basic instructions, someone who had never used our interface completed entire runs (though not during the competition). Clicking where you wanted the robot to go, on either side of the operator interface, displayed a target and the planned steps (or guide steps during "blind" or "mud" walking). Clicking on the target ring changed how the robot was oriented as it walked there: forward, backward, or sideways. How high the robot walked (crouching vs. standing tall) was controlled with a slider on the side, and step length with a different slider. Steps were highlighted in different colors according to their quality, to show whether a step was risky. Individual steps could be placed, which we rarely did, and any step in the displayed path could be moved individually, which we didn't use at all. When satisfied with the plan shown, you sent the command to the robot and it carried it out. Walking could be stopped or started at any time.

There are three walking modes: blind walking, mud walking, and standard walking. In blind walking, all LIDAR data is ignored and the ground is assumed to be planar (flat or angled). This reduces the amount of communication between the operator and the robot. It also keeps spurious LIDAR readings from making the robot think it must step much higher than it needs to. Mud walking is the same as blind walking but uses a few different controller parameters to improve walking in the mud.
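The planar-ground assumption used in blind walking can be illustrated with a minimal sketch. This is not our planner: the `GroundPlane` class, the `plan_blind_footsteps` function, and the point-plus-normal representation are all hypothetical names and choices made for illustration. The idea is only that, once the ground is assumed to be a single plane, the height of every footstep follows from its horizontal position, with no LIDAR data involved.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class GroundPlane:
    """Hypothetical planar ground model: a point on the plane and its normal."""
    point: Vec3
    normal: Vec3

    def height_at(self, x: float, y: float) -> float:
        """Solve n . (p - p0) = 0 for z at the horizontal position (x, y)."""
        px, py, pz = self.point
        nx, ny, nz = self.normal
        if abs(nz) < 1e-9:
            raise ValueError("plane is vertical, so height is not defined")
        return pz - (nx * (x - px) + ny * (y - py)) / nz


def plan_blind_footsteps(plane: GroundPlane,
                         xy_targets: List[Tuple[float, float]]) -> List[Vec3]:
    """Assign each footstep a height from the plane alone (no sensor data)."""
    return [(x, y, plane.height_at(x, y)) for x, y in xy_targets]


# Flat ground: every step lands at the same height.
flat = GroundPlane(point=(0.0, 0.0, 0.0), normal=(0.0, 0.0, 1.0))
flat_steps = plan_blind_footsteps(flat, [(0.3, 0.1), (0.6, -0.1)])

# Angled ground rising along x (z = x): step height grows with distance.
ramp = GroundPlane(point=(0.0, 0.0, 0.0), normal=(-1.0, 0.0, 1.0))
ramp_steps = plan_blind_footsteps(ramp, [(0.5, 0.0), (1.0, 0.0)])
```

Because the plane is described by only a handful of numbers, a model like this needs far less data exchanged per plan than a terrain map would, which is consistent with the reduced operator-robot communication described above.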

Mud was more difficult to walk through because friction was reduced and the mud also exerted viscous damping forces further up the legs. The exact friction and viscosity parameters were changed each run. The second difficulty with the mud pit was the steep slope at its end. Because the ankles could not tilt upward enough to keep the robot from falling over, we would turn around and walk up the slope backwards.
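The reasoning behind walking up the slope backwards can be written as a small decision rule. This is a simplified sketch, not our controller logic: the function name, the rough assumption that the foot must pitch by about the slope angle to keep the leg upright, and the joint limits used in the example are all hypothetical and are not the robot's real values.

```python
def choose_slope_heading(slope_deg: float,
                         max_toe_up_deg: float,
                         max_toe_down_deg: float) -> str:
    """Pick a heading for climbing a slope of `slope_deg` degrees.

    Facing uphill, the foot must pitch toe-up by roughly the slope angle
    to keep the leg upright. Walking up backwards, the toes point downhill,
    so the foot must pitch toe-down by roughly the same amount instead.
    """
    if slope_deg <= max_toe_up_deg:
        return "forward"
    if slope_deg <= max_toe_down_deg:
        return "backward"
    return "avoid"


# Hypothetical limits: 20 degrees toe-up, 40 degrees toe-down.
gentle = choose_slope_heading(10.0, 20.0, 40.0)
steep = choose_slope_heading(30.0, 20.0, 40.0)
too_steep = choose_slope_heading(50.0, 20.0, 40.0)
```

With these made-up limits, a 30 degree slope exceeds the toe-up range but not the toe-down range, which is the situation that led us to turn around at the end of the mud pit.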

In the hills we played it safe during the competition by staying in the valleys and choosing paths with small slopes, even though our walking could go right over the hills with no problems the majority of the time. After our last walking run, having already scored all of its points, we walked back through the course and purposely walked over all the highest hills. We played it safe because in our practice sessions we occasionally stepped exactly on the top of a ridge. When that happens, Gazebo physics treats it as a perfectly rigid edge instead of the flat top of a hill, and the robot can begin rocking and sometimes falls.

The cinder block field was just about as easy as flat ground. The only difference for us was choosing a clear path to walk through, which meant a few more clicks. We had the ability to step onto or over blocks, but there were never enough blocks out to warrant the strategy.

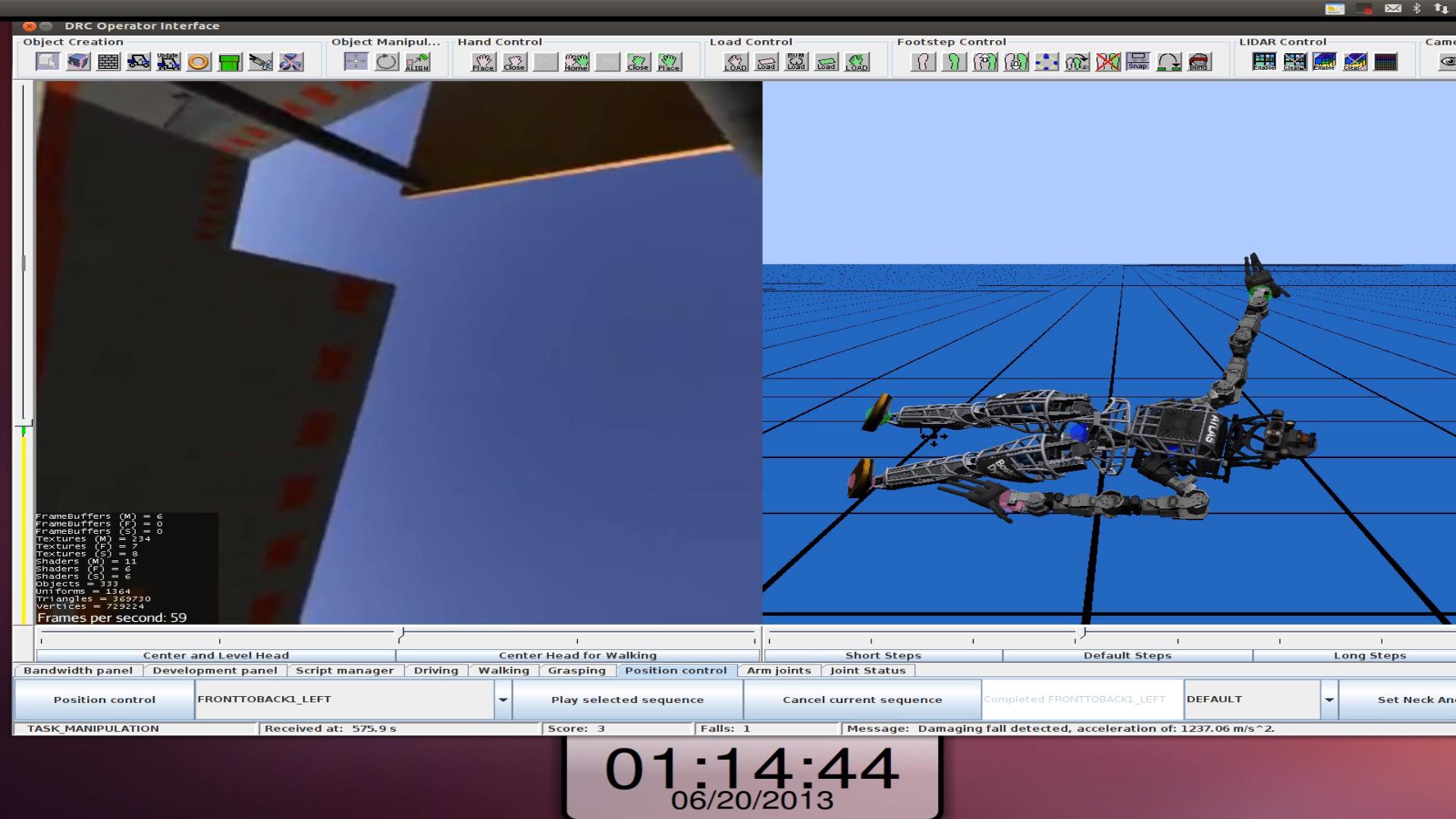

We faced only one unexpected difficulty during the walking tasks. Near the end of the hills in one run, our controller was somehow delayed and lost a few controller ticks. The pause caused the controller to jerk the robot when it resumed, and the robot fell. We had backup scripts for standing up, however, and we were able to stand again and complete the rest of the walking task.

Example walking task

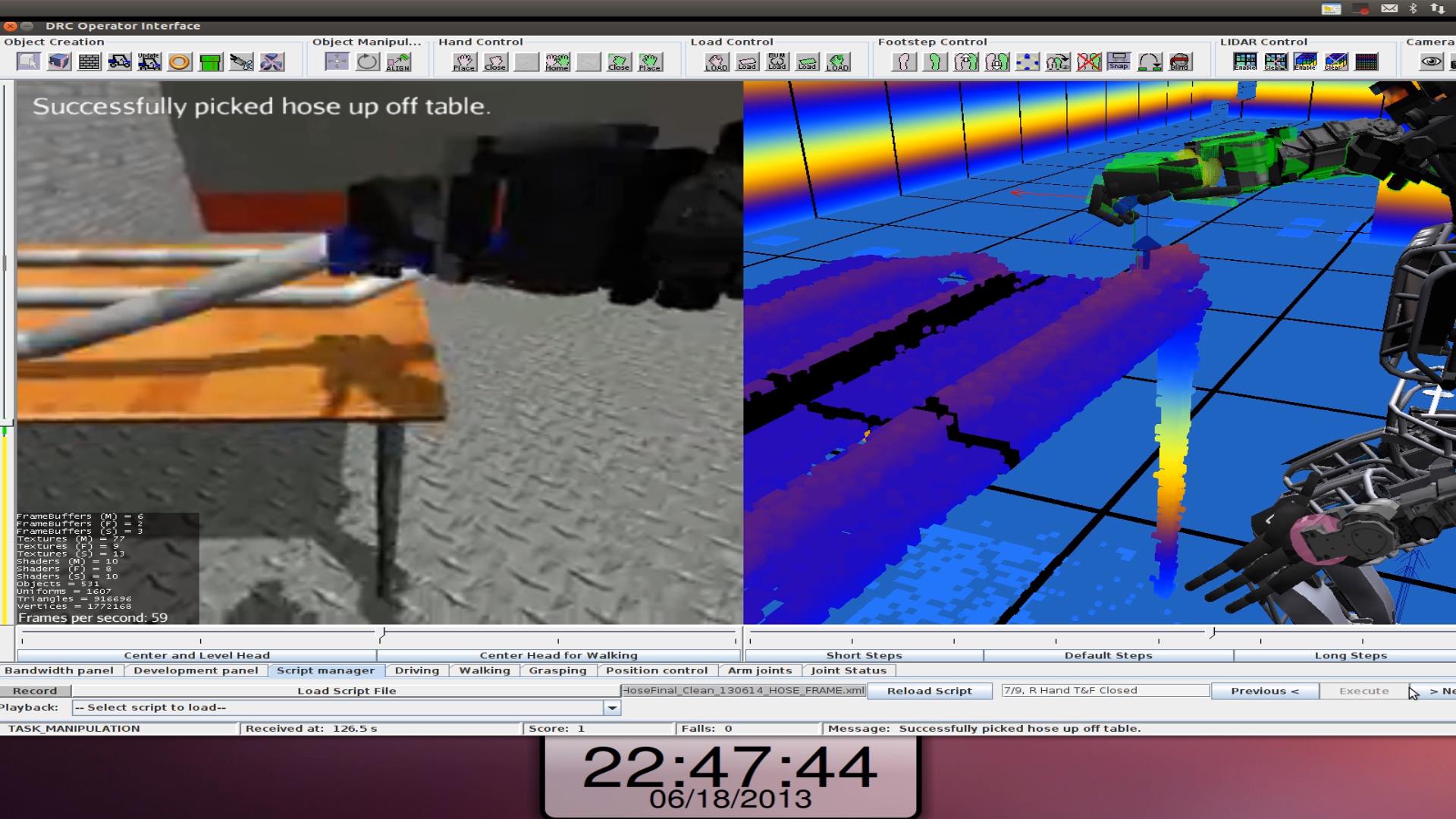

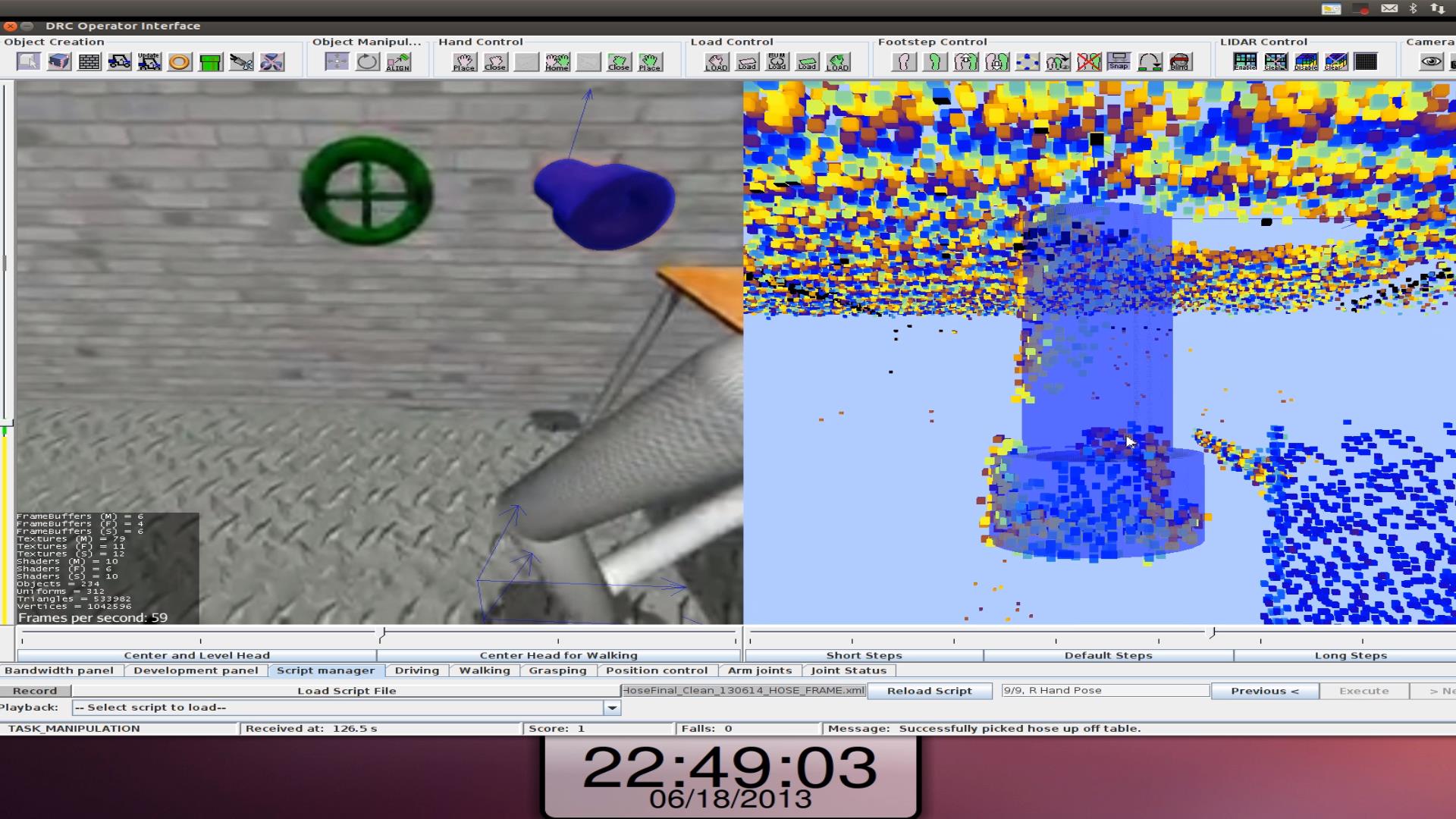

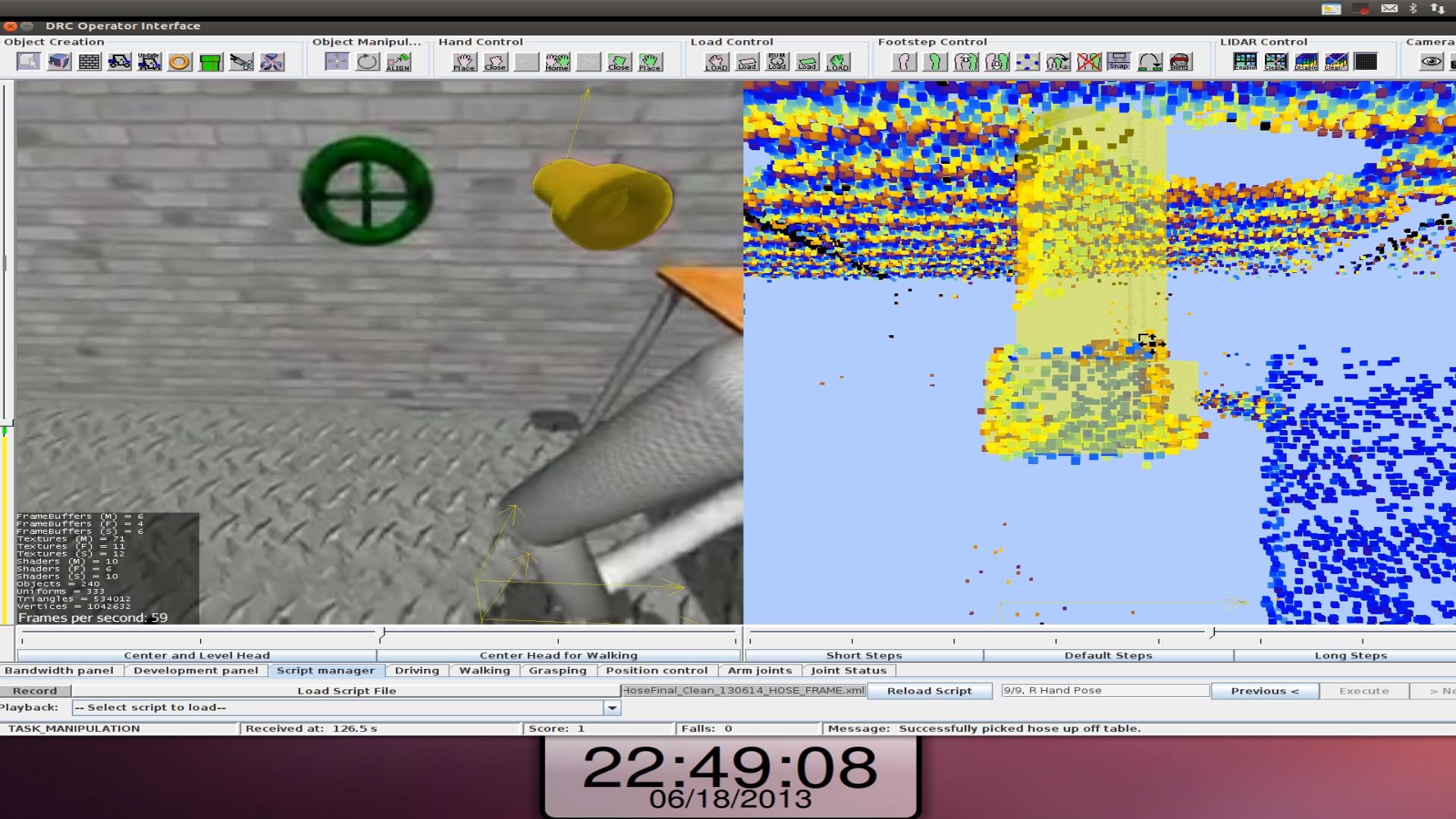

Hose Task

The hose task required us to 1) pick up a hose from a table, 2) align and insert the hose into the standpipe, 3) screw the hose into the standpipe, and 4) turn on the valve. Initially, determining how to accomplish this task was difficult. We had to overcome the fact that the hands could not turn or move in the way we would intuitively grab the hose or screw it in ourselves. We had to consider not only how to grab the hose but also how that same grip affected our ability to screw it in later. Because the wrist couldn't move how we wanted, the entire arm had to move to twist the hose in. Turning on the valve was relatively simple, as we just rubbed the hand along the outside of the valve to turn it.

Although development was difficult, the resulting scripts were very reliable. During the competition we scored all the hose task points, except that one was taken back because we registered too many falls before scoring it. There were three cases where we encountered something we didn't expect. In the very first hose run, the valve barely moved when we first tried to turn it. We manually told the hand to pass through the wheel, just offset from its center, in order to push on it hard enough. In another run, the hose twisted out of the connector a little on its own after we had scored the point for it. After turning the valve all the way open without scoring the point for it, we were confused for a while. We had to go back to the hose and screw it in again to earn the last point for turning on the valve. After that we always screwed it in much farther than necessary.

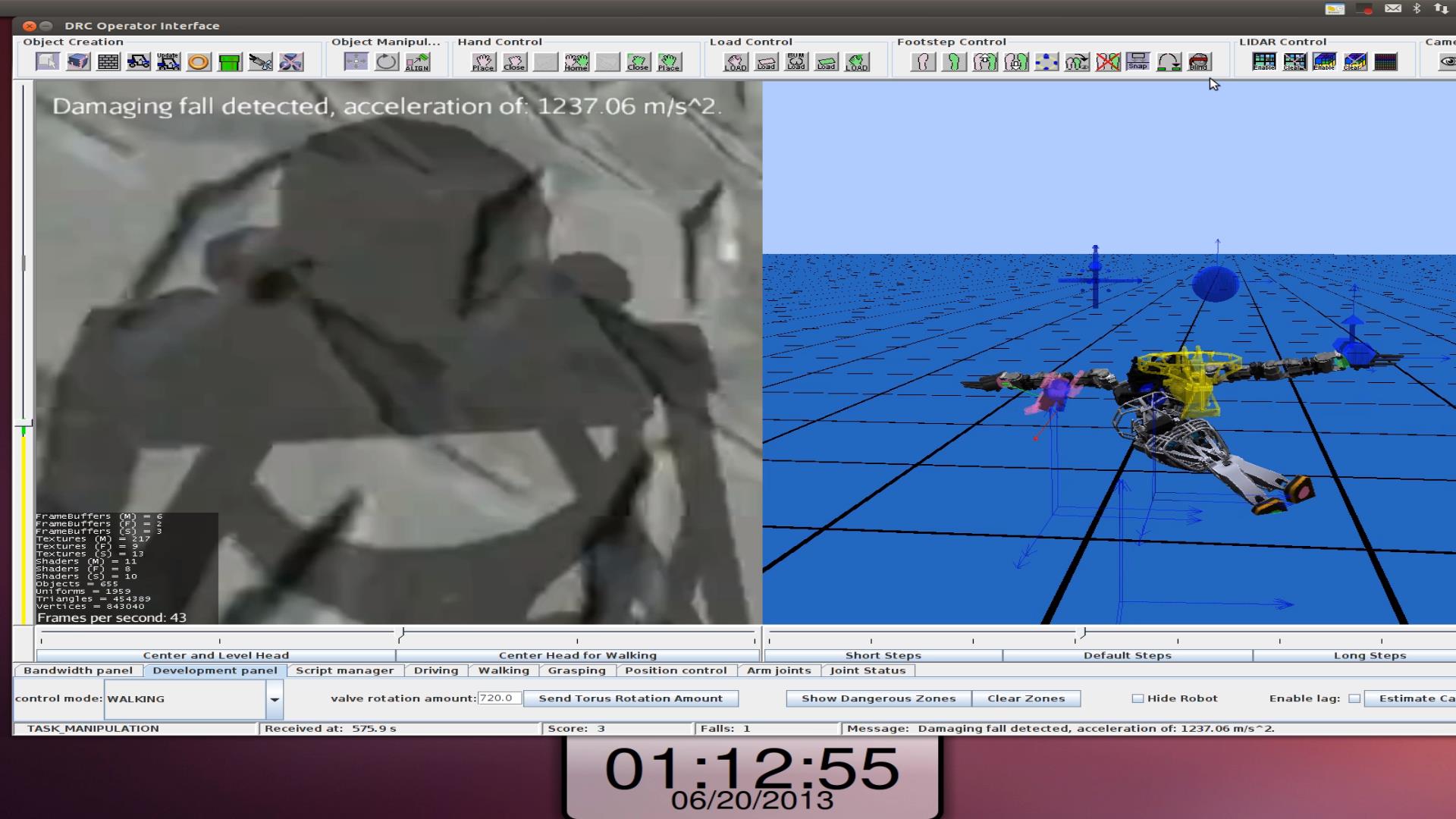

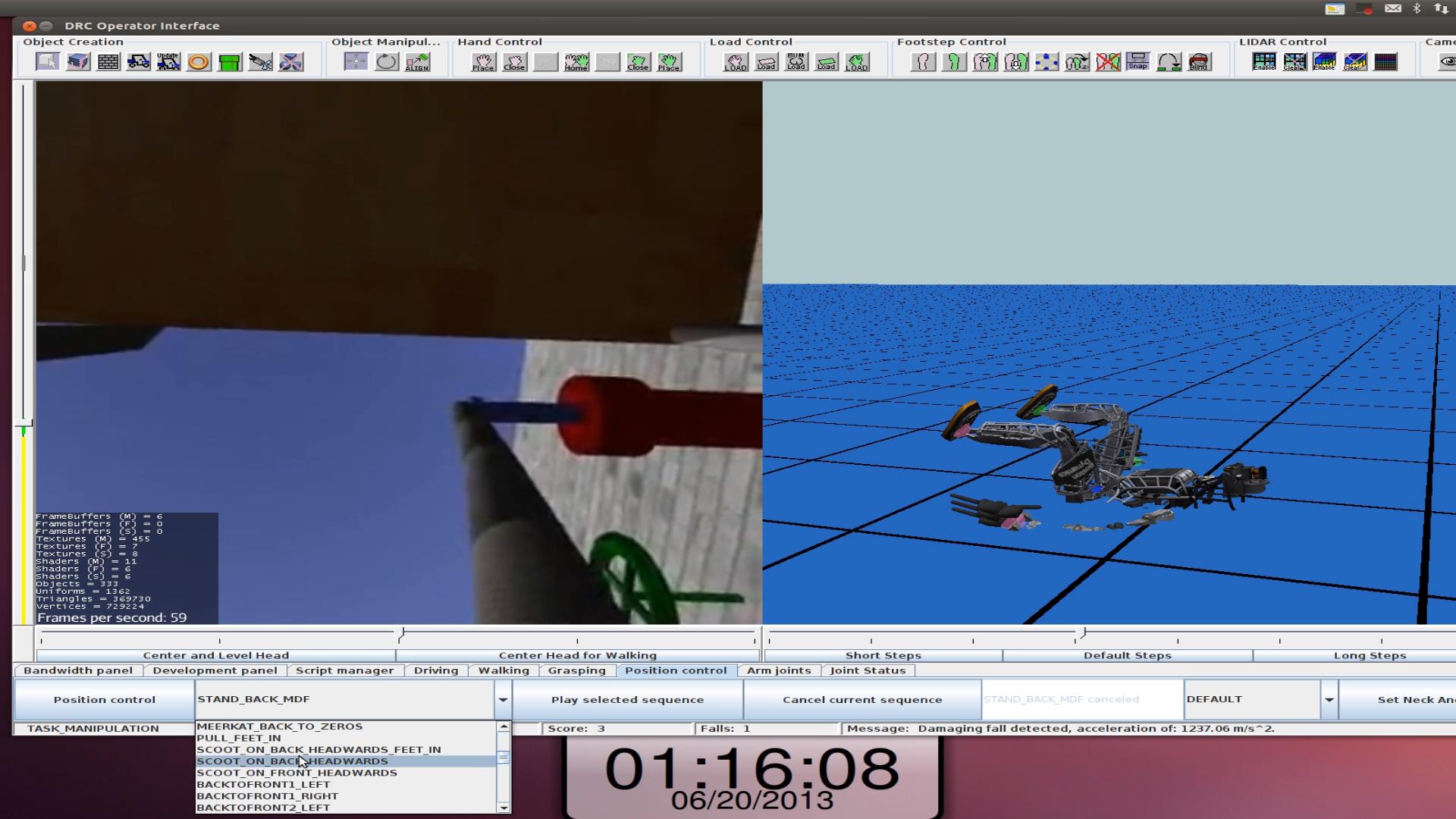

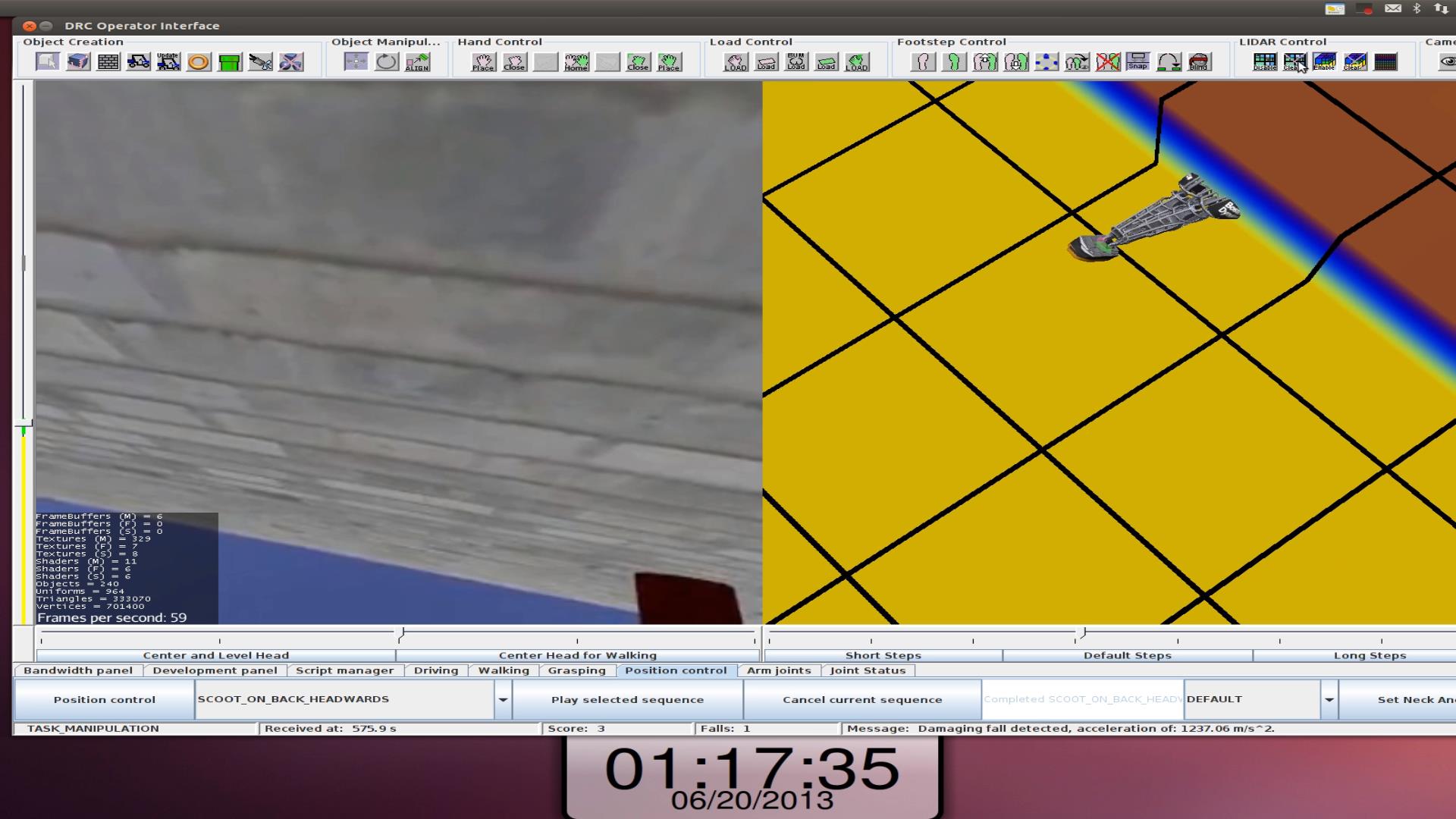

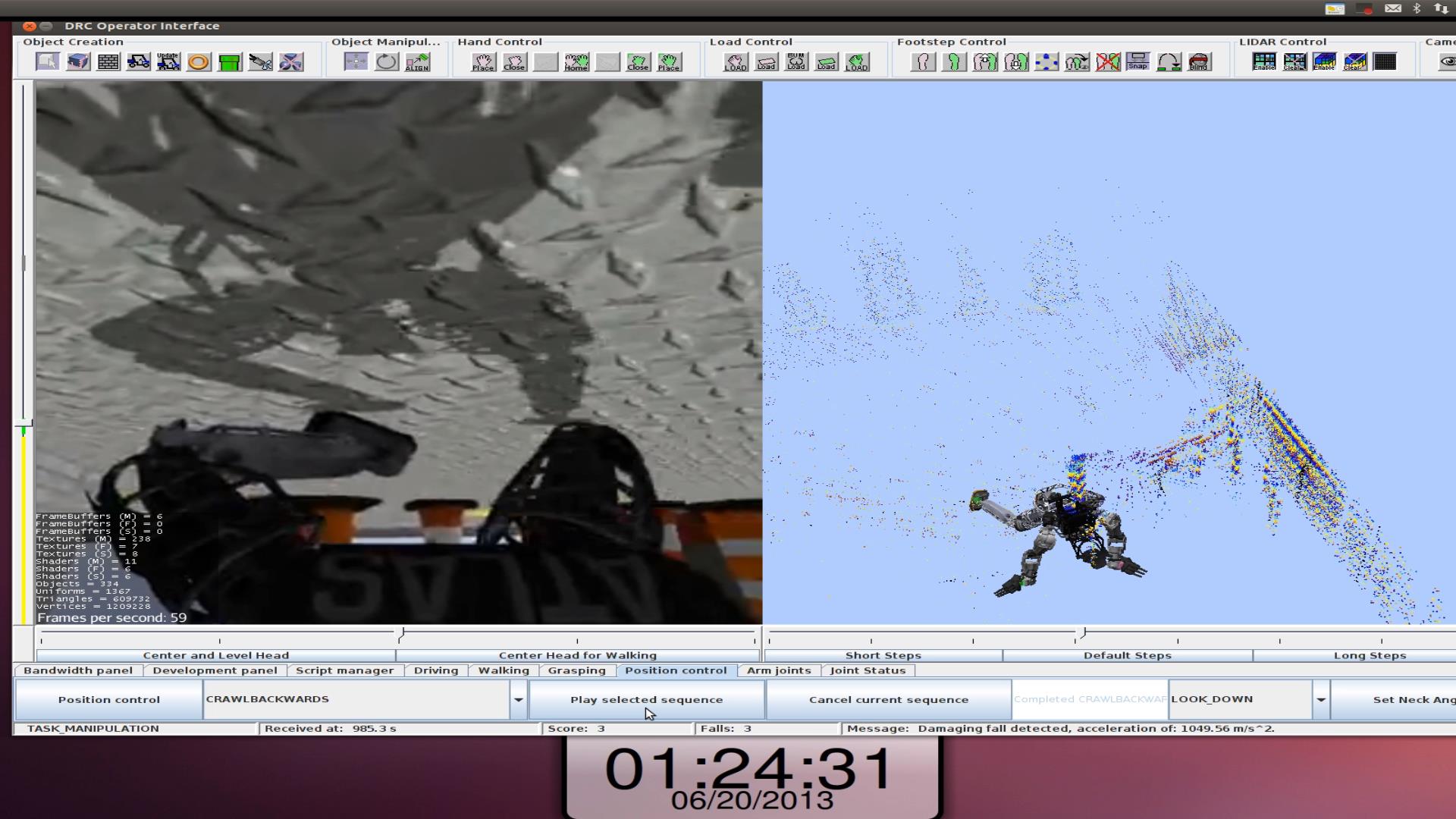

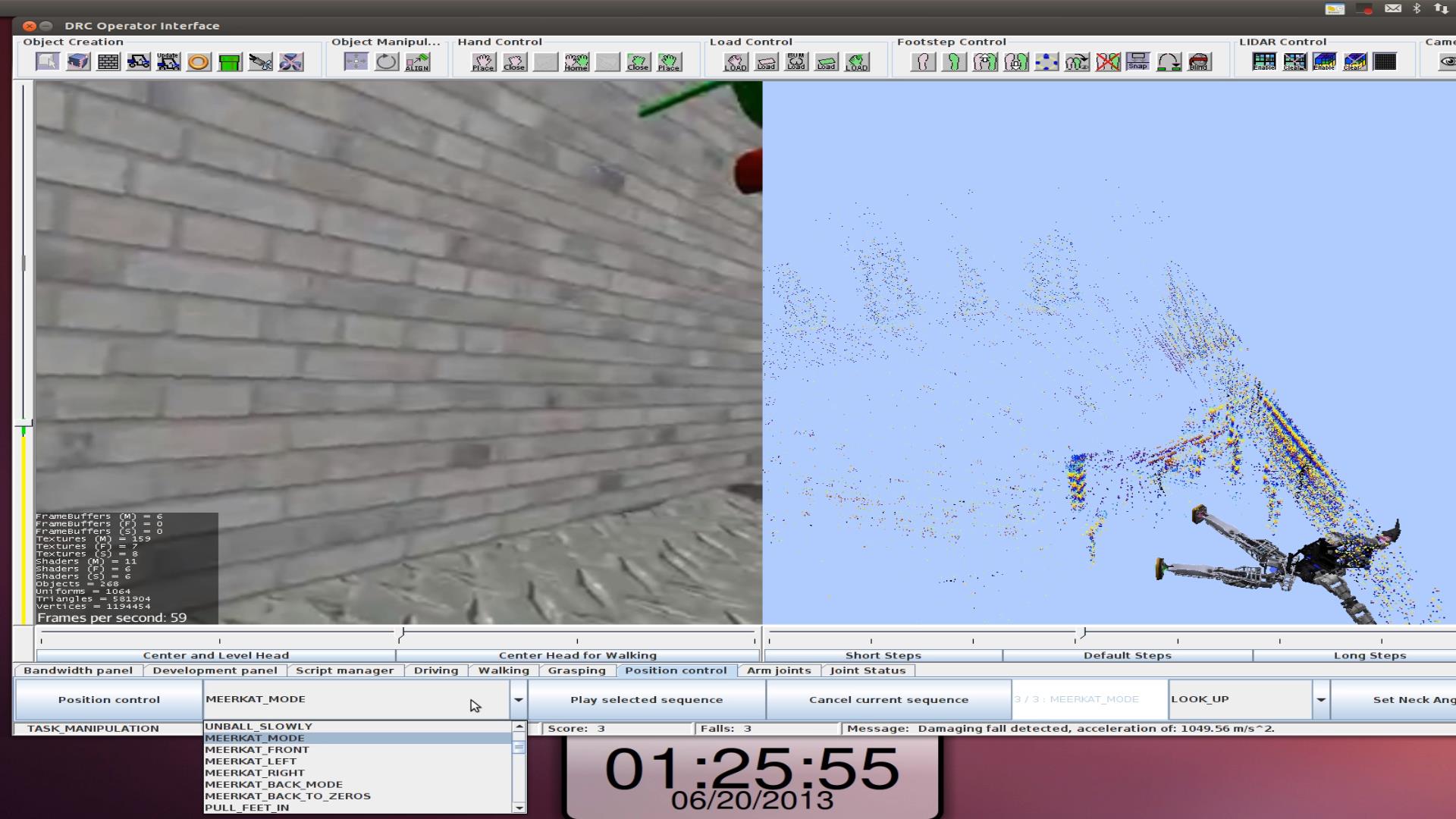

The third unexpected scenario happened due to lost controller counts that caused a fall as it had in one of the walking tasks. After we had screwed in the hose as we were trying to walk away from the

table, that's when our controller jumped and caused a fall. While on the ground, we had very little sense of where we were any more. The robot camera

could see very little and our LIDAR and state estimates were scrambled

in the process of the fall. This made it nearly impossible to know exactly where we

were in relation to the table, hose or wall. Using the backup scripts, our first attempt at

standing failed. After that, in the heat of the moment we made poor

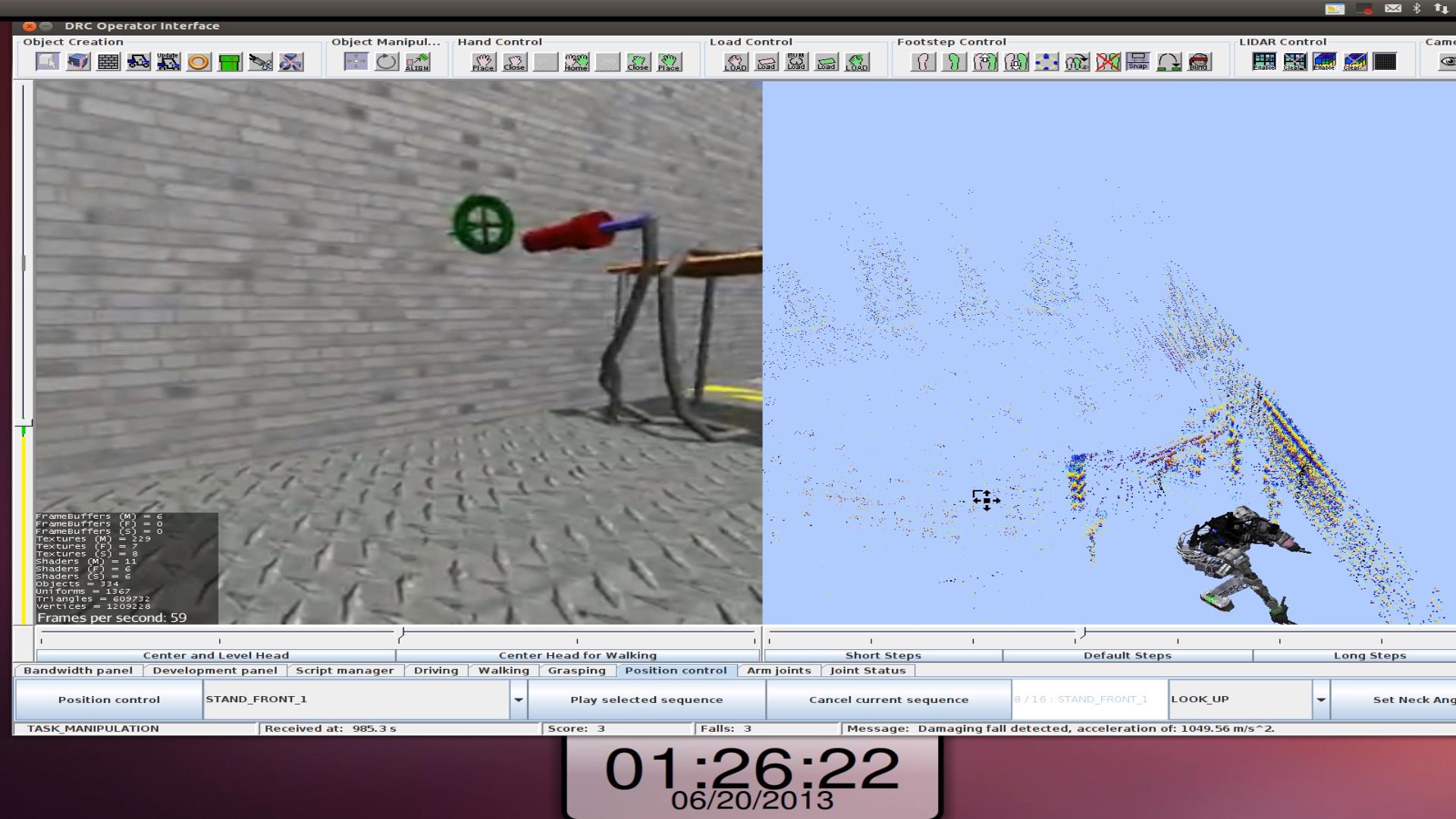

choices that worked us under the table. We finally were able to turn

over and crawl backwards out from under the table, stand up and score

the last point. Our last point was nullified, however, because in the

process we scored a total of three falls. The experience showed we were

capable of getting out of bad situations, but also showed how limited

information and stress of having no more fall-back plans can impact

decision making.

Example hose task

Hose task fall recovery